Agar Cell Diffusion

All biological cells require the transport of materials across the plasma membrane into and out of the cell. By infusing cubes of agar with a pH indicator, and then soaking the treated cubes in vinegar, you can model how diffusion occurs in cells. Then, by observing cubes of different sizes, you can discover why larger cells might need extra help to transport materials.

- Agar-agar powder

- Digital scale

- Graduated cylinder

- Whisk or fork

- Microwaveable bowl or container at least 500ml in volume

- Microwave (not shown)

- Hot pad or oven mitt

- Heat-safe surface

- pH indicator, such as bromothymol blue or phenolphthalein

- Small glass baking pan or cube-shaped silicone ice-cube molds

- Clear plastic metric ruler

- Sharp knife

- Clear container for immersing agar cubes

- Pencil and notepaper

- White paper or plate

- Measure out 1.6 g of agar-agar and 200 ml water. Mix them together with a whisk or fork in a large microwave-safe bowl.

- Heat the solution in the microwave on high for 30 seconds. Remove to a heat-safe surface using a hot pad or oven mitts, stir, and return to the microwave for 30 seconds. Repeat this process until the mixture boils. (Keep your eye on it as it can boil over very easily!) When done, remove the container, and set it on a trivet or other heat-safe surface.

- Carefully pour the agar solution into silicone ice-cube molds or a small glass baking pan. Make sure the agar block(s) will be at least 3 cm deep when they solidify. If you don’t have enough solution, make more using the ratio of 0.8 g agar-agar powder to 100 ml water.
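If you need to scale the recipe, the ratio above (0.8 g of agar-agar powder per 100 ml of water) can be turned into a small calculator. This is only a sketch: the function names are our own, the pan dimensions in the usage lines are made-up examples, and it ignores any water lost to evaporation while boiling.

```python
# Ratio taken from the recipe: 0.8 g of agar-agar powder per 100 ml of water.
AGAR_GRAMS_PER_100_ML = 0.8


def agar_needed(water_ml: float) -> float:
    """Return grams of agar-agar powder for a given volume of water."""
    return AGAR_GRAMS_PER_100_ML * water_ml / 100


def solution_for_pan(length_cm: float, width_cm: float, depth_cm: float = 3.0) -> float:
    """Approximate ml of solution needed to fill a rectangular pan.

    Assumes 1 cubic centimeter holds 1 ml and uses the 3 cm minimum
    depth from the instructions as the default.
    """
    return length_cm * width_cm * depth_cm


if __name__ == "__main__":
    # Hypothetical pan: 10 cm x 8 cm, filled to 3 cm.
    water = solution_for_pan(10, 8)
    print(f"Solution needed: {water:.0f} ml")
    print(f"Agar-agar needed: {agar_needed(water):.2f} g")
```

Measure your own pan or molds before relying on the numbers; the calculation only tells you roughly how much to prepare.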

Place a few milliliters of the pH indicator (either bromothymol blue or phenolphthalein) into a small container. Using a dropper, add a few drops of vinegar. What do you notice?

As an acid, vinegar has a large number of hydrogen ions. When the hydrogen ions come into contact with the pH indicator, the solution changes color.

Fill a clear container with vinegar to a 3-cm depth. Place one agar cube of each size in the vinegar, making sure the blocks are submerged. The untreated blocks (one of each size) will be used for comparison. What do you think will happen to each cube?

Determine the surface area and volume of each cube. To find the surface area, multiply the length of a side of the cube by the width of a side of the cube. This will give you the area of one face of the cube. Multiply this number by 6 (the number of faces on a cube) to determine the total surface area. To find the volume, multiply the length of the cube by its width by its height. Then determine the surface-area-to-volume ratios by dividing the surface area by the volume for each cube.
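The arithmetic described above can be sketched in a few lines of Python. The function name is our own, and the side lengths of 1, 2, and 3 cm are used as an example; substitute the measurements of your own cubes.

```python
def cube_metrics(side_cm):
    """Return (surface area, volume, surface-area-to-volume ratio) for a cube."""
    face_area = side_cm * side_cm       # area of one face
    surface_area = 6 * face_area        # a cube has 6 faces
    volume = side_cm ** 3               # length x width x height
    return surface_area, volume, surface_area / volume


if __name__ == "__main__":
    for side in (1, 2, 3):
        area, volume, ratio = cube_metrics(side)
        print(f"{side} cm cube: area {area} cm^2, volume {volume} cm^3, ratio {ratio:.1f} per cm")
```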

How will you know if hydrogen ions are moving into the cube? How long do you think it will take the hydrogen ions to diffuse fully into each of the cubes? Why? How would you be able to tell when the vinegar has fully penetrated the cube?

After 5 minutes, remove the cubes from the vinegar with a plastic spoon, and place them on white paper or on a white plate. Compare the treated cubes to the untreated cubes and observe any color changes.

How much vinegar has been absorbed by each treated cube? One way to measure this is to calculate the percentage of the volume of the cube that has been penetrated by the vinegar. (Hint: It may be easier to first consider the volume that has not been penetrated by the vinegar—the portion that has not yet changed color.) Do you want to adjust any of your predictions for the diffusion times? What are your new predictions?

Carefully return all of the treated cubes to the vinegar. Continue checking the vinegar-soaked cubes every 5 minutes by removing them to determine the percentage of the cube that has been penetrated by the vinegar. Continue this process until the vinegar has fully penetrated the cubes. Make a note of the time when this occurs.

What do you notice about the percentage of penetration for each of the cubes at the different time intervals? What relationships do you notice between surface area, volume, surface-area-to-volume ratio, and percentage penetration? What does this say about diffusion as an object gets larger?

Biological cells can only survive if materials can move in and out of them. In this Snack, you used cubes of agar to visualize how diffusion changes depending on the size of the object taking up the material.

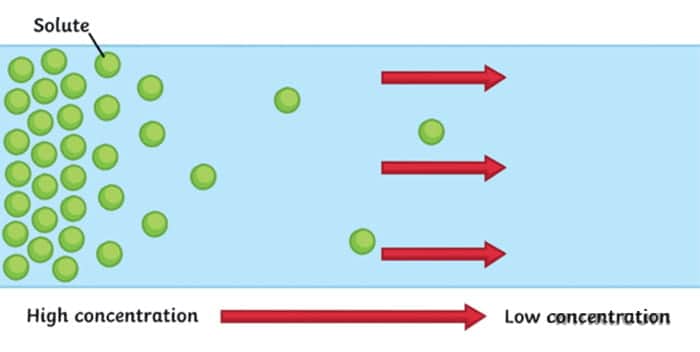

Diffusion occurs when molecules in an area of higher concentration move to an area of lower concentration. As hydrogen ions from the vinegar move into the agar cube, the color of the cube changes, allowing you to see how far they have diffused. Once the concentrations inside and outside the cube are equal, the system is at equilibrium: random molecular motion still carries individual molecules and ions back and forth between the cube and the vinegar solution, but the overall concentrations no longer change.

How did you find the percentage of the cube that was penetrated by the hydrogen ions at the various time intervals? One way to do this is to start with the volume of the cube that has not been penetrated—in other words, the part in the center that has not yet changed color. To determine the volume of this inner cube, measure the length of this inner cube and multiply it by the width and height. Subtract this from the original volume of the cube and you obtain the volume of the cube that has been penetrated. By dividing this number by the original volume and multiplying by 100%, you can determine the percentage penetration for each cube.
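Here is a minimal sketch of that calculation in Python. The function name and the example measurements are hypothetical; it assumes the uncolored core is a rectangular block that you can measure with your ruler.

```python
def percent_penetrated(cube_side_cm, core_length_cm, core_width_cm, core_height_cm):
    """Percentage of a cube's volume that has changed color.

    cube_side_cm: side length of the whole cube.
    core_*: dimensions of the inner region that has NOT yet changed color.
    """
    original_volume = cube_side_cm ** 3
    core_volume = core_length_cm * core_width_cm * core_height_cm
    if core_volume > original_volume:
        raise ValueError("The unpenetrated core cannot be larger than the cube.")
    penetrated_volume = original_volume - core_volume
    return penetrated_volume / original_volume * 100


if __name__ == "__main__":
    # Hypothetical reading: a 2 cm cube with a 1 cm x 1 cm x 1 cm uncolored core.
    print(f"{percent_penetrated(2, 1, 1, 1):.1f}% penetrated")
```

Repeating the calculation at each 5-minute check gives you a set of numbers you can graph for each cube size.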

You may have noticed that as the vinegar-soaked cube gets bigger, the time it takes for the vinegar to diffuse all the way in also increases, but not in a linear fashion. In other words, if the cube dimensions are doubled, the time it takes for the hydrogen ions to completely diffuse in more than doubles. When you triple the size, the time to diffuse in much more than triples. Why would this happen?

As the size of an object increases, the volume also increases, but by more than you might think. For example, when the side of the cube doubles from a length of 1 cm to a length of 2 cm, the surface area increases by a factor of four, going from 6 cm² (1 cm × 1 cm × 6 sides) to 24 cm² (2 cm × 2 cm × 6 sides). The volume, though, increases by a factor of eight, from 1 cm³ (1 cm × 1 cm × 1 cm) to 8 cm³ (2 cm × 2 cm × 2 cm).

Because the volume increases by a greater factor than the surface area, the surface-area-to-volume ratio decreases as the cube size increases (see the table below). The vinegar can only enter the cube through its surface, so as that ratio decreases, the time it takes for diffusion to occur throughout the whole volume increases significantly.

$$\begin{array}{|c|c|c|c|} \hline \begin{array}{c} \text { Cube Side } \\ \text { Length } \end{array} & \text { Surface Area } & \text { Volume } & \begin{array}{c} \text { Surface-area- } \\ \text { to-volume ratio } \end{array} \\ \hline 1 \mathrm{~cm} & 6 \mathrm{~cm}^2 & 1 \mathrm{~cm}^3 & 6 \mathrm{~cm}^{-1} \\ \hline 2 \mathrm{~cm} & 24 \mathrm{~cm}^2 & 8 \mathrm{~cm}^3 & 3 \mathrm{~cm}^{-1} \\ \hline 3 \mathrm{~cm} & 54 \mathrm{~cm}^2 & 27 \mathrm{~cm}^3 & 2 \mathrm{~cm}^{-1} \\ \hline \end{array}$$
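The pattern in the table can also be written in general form. As a short worked derivation, let $s$ stand for the side length of the cube (the symbols $s$, $A$, and $V$ are our own shorthand):

$$A = 6s^2, \qquad V = s^3, \qquad \frac{A}{V} = \frac{6s^2}{s^3} = \frac{6}{s}$$

Doubling $s$ multiplies the surface area by 4 and the volume by 8, so the ratio is cut in half, which matches the drop from 6 cm⁻¹ to 3 cm⁻¹ between the 1 cm and 2 cm cubes.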

Anything that comes into a cell (such as oxygen and food) or goes out of it (such as waste) must travel across the cell membrane. As cells grow larger, the ratio of surface area to volume decreases dramatically, just like in your agar cubes. Larger cells must still transport materials across their membranes, but have a larger volume to supply and a proportionately smaller surface area through which to do so.

Bacterial cells are fairly small and have a comparatively larger surface-area-to-volume ratio. Eukaryotic cells, such as those in plants and animals, are much larger, but have additional structures to help them conduct the required amount of transport across membranes. A series of membrane-bound structures continuous with the plasma membrane, such as the endoplasmic reticulum, provide additional surface area inside the cell, allowing sufficient transport to occur. Even with these strategies, though, there are upper limits to cell size.

While this Snack investigates how the size of an agar cube impacts diffusion, the shape of each cube remains consistent. Biological cells, however, come in different shapes. To see how different shapes of “cells” affect diffusion rates, try various shapes of agar solids. Ice-cube molds can be found in spherical and rod shapes in addition to cubes. How does the shape impact the surface-area-to-volume ratios?
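To get a feel for the answer before you pour any agar, you could compare shapes that hold the same volume. The sketch below is only an illustration: the function names are ours, the "rod" is modeled as a simple cylinder, and its length-to-diameter ratio of 4 is an arbitrary assumption rather than a property of any particular mold.

```python
import math


def cube_ratio(volume):
    """Surface-area-to-volume ratio of a cube with the given volume."""
    side = volume ** (1 / 3)
    return 6 * side ** 2 / volume


def sphere_ratio(volume):
    """Surface-area-to-volume ratio of a sphere with the given volume."""
    radius = (3 * volume / (4 * math.pi)) ** (1 / 3)
    return 4 * math.pi * radius ** 2 / volume


def rod_ratio(volume, length_to_diameter=4.0):
    """Surface-area-to-volume ratio of a cylinder (a simple model of a rod).

    length_to_diameter is an assumed proportion: length = ratio * diameter.
    """
    # V = pi * r^2 * length, with length = length_to_diameter * 2r
    radius = (volume / (2 * length_to_diameter * math.pi)) ** (1 / 3)
    length = 2 * length_to_diameter * radius
    area = 2 * math.pi * radius ** 2 + 2 * math.pi * radius * length
    return area / volume


if __name__ == "__main__":
    volume_cm3 = 8.0  # same volume as a 2 cm cube
    print(f"sphere: {sphere_ratio(volume_cm3):.2f} per cm")
    print(f"cube:   {cube_ratio(volume_cm3):.2f} per cm")
    print(f"rod:    {rod_ratio(volume_cm3):.2f} per cm")
```

For a fixed volume, the sphere has the smallest surface-area-to-volume ratio of the three and the elongated rod the largest, so you might predict the rod-shaped "cell" to be penetrated fastest. Your agar solids can test that prediction.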

This Snack fits well into a series of investigations on osmosis and diffusion. The Naked Egg Snack will allow students to explore how concentration gradients power movement of materials into and out of cells. The Cellular Soap Opera Snack will help students consider the types of materials that move through cell membranes.

To help students better understand the concepts of surface area, volume, and surface-area-to-volume ratio, have them build models with plastic centimeter cubes. Physical models can help make these ideas more concrete. Students can also graph class data to better understand the mathematical relationships involved.

If there’s not enough time within a class period for the largest cubes to be fully penetrated by the hydrogen ions present in the vinegar, students can make note of the percentage of the cube that has been penetrated by the vinegar and use that data to extrapolate a result. Alternatively, students in the following period may be able to note the time for the previous class.

Agar-agar comes as a powder and can be purchased online or at markets featuring Asian foods. Unflavored gelatin can be used as a substitute, but is more difficult to handle. To make cubes from gelatin, add boiling water (25% less than the amount recommended on the package) to the gelatin powder, stir, and refrigerate overnight. You may need to experiment with the ratio of water to gelatin to achieve the perfect consistency.

Cabbage juice can be used as an inexpensive alternative to commercial pH indicator solutions. To make cabbage juice indicator, pour boiling water over chopped red cabbage and let it sit for 10 minutes. Strain out the cabbage, and use the remaining purple water to mix with the agar powder.

STEM Little Explorers

Knowing through exploring.

How to Demonstrate Diffusion with Hot and Cold Water

We all need some space sometimes, right? That’s true down to the molecular level. Molecules don’t like to stay too close together and will try to move to less crowded areas. That process is called diffusion, and we will explore it in this simple but revealing experiment.

What is Diffusion?

Have you ever smelled your neighbor’s lunch on your way home? Or smelled someone’s perfume minutes after that person was gone? You experienced diffusion!

Diffusion is the movement of particles from an area of high concentration to an area of low concentration. It usually occurs in liquids and gases.

Let’s get some complex-sounding terminology out of the way. When talking about diffusion, we often hear about the concentration gradient (or the electrical gradient, when looking at charged particles). A gradient is just a change in the quantity of a variable over some distance. In the case of a concentration gradient, the variable that changes is the concentration of a substance. So we can define the concentration gradient as the change in the concentration of our substance across a region of space.

For example, think of what happens when we spray air freshener in a room. There is one spot where the concentration of our substance is very high (where we sprayed it) and in the rest of the room it is very low (initially none). Slowly, the freshener moves through the air and the concentration gradient evens out. When the gradient is gone, we have reached equilibrium: the state in which a substance is equally distributed throughout a space.

It’s important to note that particles never stop moving, even after equilibrium is reached. Imagine two parts of the room divided by a line. It may seem like nothing is happening, but particles from both sides are moving back and forth. A particle is just as likely to move from left to right as from right to left, so we don’t notice any net change.

Diffusion is a type of passive transport. That means it doesn’t require an input of energy. It happens naturally, without any shaking or stirring.

There is also facilitated diffusion, which happens in cell membranes when molecules are transported with the help of proteins.

You may remember hearing about osmosis and wonder how it differs from diffusion. It is actually a very similar concept. Osmosis is the diffusion of water through a partially permeable membrane. We talk about it more in our Gummy Bear Osmosis Experiment, so definitely check it out.

What causes Diffusion?

Do particles really want to move somewhere less crowded? Well, no, not in the way we would think of it. There is no planning involved, just probability.

All fluids obey the same physical laws, which are studied by fluid mechanics, a branch of physics. We usually think of fluids as liquids, but in fact air and other gases are also fluids! By definition, a fluid is a substance that has no fixed shape and yields easily to external pressure.

Another property of fluids is that they flow. Molecules in fluids move around randomly, collide with one another, and bounce off in different directions.

This random motion of particles in a fluid is called Brownian motion. It is named after the botanist Robert Brown, who observed and described the phenomenon in 1827. While examining pollen under the microscope, he noticed that tiny particles from the pollen wiggled in the water. At first he suspected that the particles were alive, but he then saw the same motion in particles of inorganic matter. Even though he could not explain what he saw, his observation later became important in proving the existence of atoms and molecules.
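You can imitate this random jiggling on a computer. The sketch below is a simplified one-dimensional model of our own, not a physical simulation: every particle starts at the same spot and, at each step, hops one unit left or right with equal probability. The function names, particle count, and step counts are all assumptions chosen for illustration.

```python
import random


def random_walk_positions(n_particles, n_steps, seed=0):
    """Final positions of particles that each take n_steps random hops of +1 or -1."""
    rng = random.Random(seed)  # seeded so the run is repeatable
    positions = [0] * n_particles
    for _ in range(n_steps):
        for i in range(n_particles):
            positions[i] += rng.choice((-1, 1))
    return positions


def mean_position(positions):
    """Average position: stays near 0 because left and right are equally likely."""
    return sum(positions) / len(positions)


def mean_square_displacement(positions):
    """Average squared distance from the start: a measure of how far the cloud has spread."""
    return sum(x * x for x in positions) / len(positions)


if __name__ == "__main__":
    for steps in (10, 100, 400):
        final = random_walk_positions(2000, steps)
        print(f"{steps:4d} steps: mean position {mean_position(final):6.2f}, "
              f"mean squared displacement {mean_square_displacement(final):7.1f}")
```

No particle "plans" to leave the crowded starting point, yet the cloud spreads out anyway: the average position stays close to zero while the mean squared displacement grows roughly in proportion to the number of steps.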

Factors that influence Diffusion

There are several factors that influence the speed of diffusion. The first is the size of the concentration gradient. The bigger the difference in concentration over a given distance, the faster diffusion occurs.

Another important factor is the distance over which our particles are moving. We can look at it as the size of a container. As you may imagine, the bigger the distance, the slower the diffusion, since particles need to move farther.

Then we have the characteristics of the solvent and the substance. The most notable are the mass of the substance and the density of the solvent. Heavier molecules move more slowly; therefore, they diffuse more slowly. It’s a similar case with the density of the solvent: as density increases, the rate of diffusion decreases. It’s harder to move through a denser solvent, so our molecules slow down.

The last factor we will discuss is temperature. Both heating and cooling change the kinetic energy of the particles in our substance. Heating increases the kinetic energy of the particles, which makes them move a lot quicker. So the higher the temperature, the higher the diffusion rate.
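To put rough numbers on the effects of temperature and mass, here is a small sketch using the kinetic-theory formula for the root-mean-square speed of a particle, v = sqrt(3RT/M). Treat it as an illustration only: the function name is ours, the temperatures and molar masses in the usage lines are example values we picked, and the real diffusion rate in a liquid also depends on other things, such as how viscous the solvent is.

```python
import math

GAS_CONSTANT = 8.314  # J/(mol*K)


def rms_speed(temperature_kelvin, molar_mass_kg_per_mol):
    """Root-mean-square thermal speed in m/s from kinetic theory: sqrt(3RT/M)."""
    return math.sqrt(3 * GAS_CONSTANT * temperature_kelvin / molar_mass_kg_per_mol)


if __name__ == "__main__":
    water = 0.018    # kg/mol, molar mass of water
    cold = 293.15    # 20 degrees C, assumed tap-water temperature
    hot = 363.15     # 90 degrees C, assumed near-boiling temperature

    print(f"Water molecules, cold: {rms_speed(cold, water):.0f} m/s")
    print(f"Water molecules, hot:  {rms_speed(hot, water):.0f} m/s")

    # A hypothetical molecule ten times heavier, at the same temperature.
    print(f"Heavier molecule, cold: {rms_speed(cold, 10 * water):.0f} m/s")
```

The speed rises with the square root of the temperature and falls with the square root of the mass, which is the trend described above: hotter particles move faster, heavier ones move slower.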

We will demonstrate the diffusion of food coloring in water and observe how it’s affected by the difference in temperature. Onwards to the experiment!

Materials needed for demonstrating Diffusion

- 2 transparent glasses – Common clear glasses will do the trick. You probably have more than needed around the house. We need one for warm water and one for cold water so we can observe the difference in diffusion.

- Hot and cold water – The bigger the difference in temperature between the two glasses, the bigger the difference in diffusion you will observe. You can heat the water to boiling or near boiling and use it as hot water. Use regular tap water as “cold water”. That is enough of a difference to observe the effect of temperature on diffusion.

- Food coloring – Regular food coloring or another colorant such as tempera (poster paint) will do the trick. Color is required to observe diffusion in our solvent (water). To make it more fun, you can use 2 different colors, such as red for hot and blue for cold.

Instructions for demonstrating diffusion

We have a video on how to demonstrate diffusion at the start of the article so you can check it out if you prefer a video guide more. Or continue reading instructions below if you prefer step by step text guide.

- Take 2 transparent glasses and fill them with water. Pour cold water into one glass and hot water into the other. As mentioned above, near-boiling water for hot and regular tap water for cold will work well to demonstrate diffusion.

- Add a few drops of food coloring to each glass. 3-4 drops are enough; don’t add too much. If you add too much, the concentration of food coloring will be too high and it will diffuse too fast in both glasses.

- Watch closely how the color spreads. You will notice that the color diffuses faster in hot water. It will take longer to diffuse if there is more water, less food coloring, or cooler water.

What you will develop and learn

- What is diffusion and how it relates to osmosis

- Factors that influence diffusion

- What is Brownian motion

- How to conduct a science experiment

- That science is fun! 😊

If you liked this activity and are interested in more simple, fun experiments, we recommend exploring heat conduction. For more cool visuals made by chemistry, check out the Lava Lamp and Milk Polarity experiments. And if you, like us, find water fascinating, definitely read our article about the many interesting properties of water.

Module 4: Diffusion and Osmosis

The cell membrane plays the dual roles of protecting the living cell by acting as a barrier to the outside world, yet at the same time it must allow the passage of food and waste products into and out of the cell for metabolism to proceed. How does the cell carry out these seemingly paradoxical roles? To understand this process you need to understand the makeup of the cell membrane and an important phenomenon known as diffusion.

Diffusion is the movement of a substance from an area of high concentration to an area of low concentration due to random molecular motion. All atoms and molecules possess kinetic energy, which is the energy of movement. It is this kinetic energy that makes each atom or molecule vibrate and move around. (In fact, you can quantify the kinetic energy of the atoms/molecules in a substance by measuring its temperature.) The moving atoms bounce off each other, like bumper cars in a carnival ride. The movement of particles due to this energy is called Brownian motion. As these atoms/molecules bounce off each other, the result is the movement of these particles from an area of high concentration to an area of low concentration. This is diffusion. The rate of diffusion is influenced by both temperature (how fast the particles move) and size (how big they are).

Part 1: Brownian Motion

In this part of the lab, you will use a microscope to observe Brownian motion in carmine red powder, which is a dye obtained from the pulverized guts of female cochineal beetles.

- Glass slide

- Carmine red powder

- Obtain a microscope slide and place a drop of tap water on it.

- Using a toothpick, carefully add a minuscule quantity of carmine red powder to the drop of water and add a coverslip.

- Observe under scanning, low, and then high power.

Lab Questions

- Describe the activity of the carmine red particles in water.

- If the slide were warmed up, would the rate of motion of the molecules speed up, slow down, or remain the same? Why?

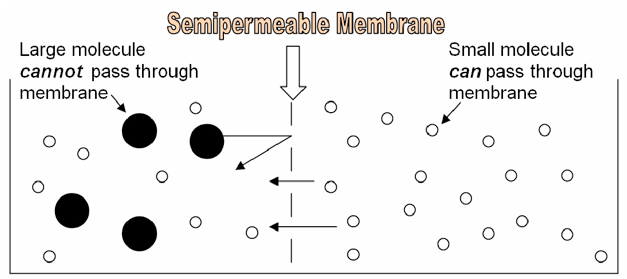

Part 2: Diffusion across a Semipermeable Membrane

Because of its structure, the cell membrane is a semipermeable membrane. This means that SOME substances can easily diffuse through it, like oxygen, or carbon dioxide. Other substances, like glucose or sodium ions, are unable to pass through the cell membrane unless they are specifically transported via proteins embedded in the membrane itself. Whether or not a substance is able to diffuse through a cell membrane depends on the characteristics of the substance and characteristics of the membrane. In this lab, we will make dialysis tubing “cells” and explore the effect of size on a molecule’s ability to diffuse through a “cell membrane.”

The following information might be useful in understanding and interpreting your results in this lab:

Phenolphthalein

- Molecular formula: C₂₀H₁₄O₄

- Molar mass: 318.32 g/mol

- Color in acidic solution: Clear (colorless)

- Color in basic solution: Pink

Iodine

- Formula: I or I₂

- Atomic mass: 126.9 g/mol for I (253.8 g/mol for I₂)

Starch

- Molecular formula: (C₆H₁₀O₅)n

- Molar mass: HUGE!

- Color in iodine: Bluish

Sodium hydroxide

- Formula: NaOH

- Molar mass: 40.0 g/mol

- Acid/Base: Base
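If you want to check the masses listed above, you can add up the atomic masses in each formula. The sketch below is one way to do that; the function name and the dictionary layout are our own, and the atomic masses are standard values rounded to three decimal places, so the results may differ from the listed figures in the last digit.

```python
# Standard atomic masses in g/mol, rounded to three decimal places.
ATOMIC_MASS = {"H": 1.008, "C": 12.011, "O": 15.999, "Na": 22.990, "I": 126.904}


def molar_mass(composition):
    """Sum the atomic masses for a formula given as {element symbol: atom count}."""
    return sum(ATOMIC_MASS[element] * count for element, count in composition.items())


if __name__ == "__main__":
    formulas = {
        "Phenolphthalein (C20H14O4)": {"C": 20, "H": 14, "O": 4},
        "Iodine (I2)": {"I": 2},
        "Starch, one glucose unit (C6H10O5)": {"C": 6, "H": 10, "O": 5},
        "Sodium hydroxide (NaOH)": {"Na": 1, "O": 1, "H": 1},
    }
    for name, composition in formulas.items():
        print(f"{name}: {molar_mass(composition):.2f} g/mol")
```

Starch is a chain of many glucose units, so its total mass is the single-unit value multiplied by however many units are in the chain. That is why it is so much larger than the other molecules in this lab.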

- 2 pieces of dialysis tubing

- Phenolphthalein

- Starch solution

- Using a wax pencil, label one beaker #1. Label the other beaker #2.

- Fill beaker #1 with 300 ml of tap water, then add 10 drops of 1 M NaOH. Do not spill the NaOH—it is very caustic!

- Fill beaker #2 with 300 ml of tap water, then add iodine drops drop by drop until the solution is bright yellow.

- Now prepare your 2 dialysis tubing “bags.” Seal one end of each dialysis tube by carefully folding the end “hotdog style” 2 times, then “hamburger style” 1 time. Tie the folded portion of the tube securely with string. It is critical that your tubing is tightly sealed, to prevent leaks.

- Add 10 ml of water and three drops of phenolphthalein to one of your dialysis tube bags. Seal the other end of the bag by carefully folding and tying as before.

- Thoroughly rinse the bag containing phenolphthalein, then place it into the beaker containing the NaOH.

- Add 10 ml of starch solution to the other dialysis tube. Again seal the bag tightly and rinse as above. Place this bag containing the starch solution into beaker #2.

- Let diffusion occur between the bags and the solutions in the beakers.

Record the colors (below) and label contents inside and outside the bags (above):

| | Beaker 1 (initial) | Beaker 1 (final) | Beaker 2 (initial) | Beaker 2 (final) |
|---|---|---|---|---|
| Color inside bag | | | | |
| Color outside bag (in beaker) | | | | |

- Which substance diffused across the membrane in beaker #1? How do you know?

- Which substance diffused across the membrane in beaker #2? How do you know?

- Why might some ions and molecules pass through the dialysis bag while others might not?

Part 3: Osmosis and the Cell Membrane

Osmosis is the movement of water across a semipermeable membrane (such as the cell membrane). The tonicity of a solution involves comparing the concentration of a cell’s cytoplasm to the concentration of its environment. Ultimately, the tonicity of a solution can be determined by examining the effect a solution has on a cell within the solution.

By definition, a hypertonic solution is one that causes a cell to shrink. Though it certainly is more complex than this, for our purposes in this class, we can assume that a hypertonic solution is more concentrated with solutes than the cytoplasm. This will cause water from the cytoplasm to leave the cell, causing the cell to shrink. If a cell shrinks when placed in a solution, then the solution is hypertonic to the cell.

If a solution is hypotonic to a cell, then the cell will swell when placed in the hypotonic solution. In this case, you can imagine that the solution is less concentrated than the cell’s cytoplasm, causing water from the solution to flow into the cell. The cell swells!

Finally, an isotonic solution is one that causes no change in the cell. You can imagine that the solution and the cell have equal concentrations, so there is no net movement of water molecules into or out of the cell.
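The three definitions above can be summarized as a simple decision rule. This sketch uses the same simplification the text makes (comparing only solute concentrations); the function names and the example concentrations are hypothetical, and the tolerance for "equal" is an arbitrary choice.

```python
def tonicity(solution_concentration, cytoplasm_concentration, tolerance=1e-9):
    """Classify a solution relative to a cell by comparing solute concentrations.

    Both concentrations must be in the same units (for example, percent solute).
    """
    difference = solution_concentration - cytoplasm_concentration
    if abs(difference) <= tolerance:
        return "isotonic"
    return "hypertonic" if difference > 0 else "hypotonic"


def expected_cell_response(solution_concentration, cytoplasm_concentration):
    """Describe the net water movement predicted by the simplified model."""
    responses = {
        "hypertonic": "water leaves the cell; the cell shrinks",
        "hypotonic": "water enters the cell; the cell swells",
        "isotonic": "no net water movement; no change",
    }
    return responses[tonicity(solution_concentration, cytoplasm_concentration)]


if __name__ == "__main__":
    # Hypothetical cell with 1% solutes placed in three different solutions.
    for solution in (5.0, 1.0, 0.0):
        print(f"{solution}% solution: {tonicity(solution, 1.0)} -> "
              f"{expected_cell_response(solution, 1.0)}")
```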

In this exercise, you will observe osmosis by exposing a plant cell to salt water.

What do you think will happen to the cell in this environment? Draw a picture of your hypothesis.

- Elodea leaf

- Microscope slide

- 5% NaCl solution

- Remove a leaf from an Elodea plant using the forceps.

- Make a wet mount of the leaf. Use the pond water to make your wet mount.

- Observe the Elodea cells under the compound microscope at high power (400 X) and draw a typical cell below.

- Next, add several drops of 5% salt solution to the edge of the coverslip to allow the salt to diffuse under the coverslip. Observe what happens to the cells (this may require you to search around along the edges of the leaf). Look for cells that have been visibly altered.

Draw a typical cell in both pond and salt water and label the cell membrane and the cell wall.

- What do you see happening to the cell membrane when the cell is exposed to salt water? Why does this happen?

- Describe the terms hypertonic, hypotonic and isotonic.

- How would your observations change if NaCl could easily pass through the cell membrane and into the cell?

Part 4: Experimental Design

You and your group will design an experiment to determine the relative molecular weights of methylene blue and potassium permanganate. You may use a petri dish of agar, which is a jello-like medium made from a polysaccharide found in the cell walls of red algae. You will also have access to a cork borer and a small plastic ruler.

- 1 petri dish of agar

- Methylene blue

- Potassium permanganate

Your experiment design should include all of the following portions:

- Experimental design

- Conclusions

- Further questions/other comments

- Biology Labs. Authored by : Wendy Riggs . Provided by : College of the Redwoods. Located at : http://www.redwoods.edu . License : CC BY: Attribution

- Osmotic pressure on blood cells diagram. Authored by : LadyofHats. Located at : https://commons.wikimedia.org/wiki/File:Osmotic_pressure_on_blood_cells_diagram.svg . License : Public Domain: No Known Copyright

The Biology Corner

Biology Teaching Resources

Virtual Diffusion Lab

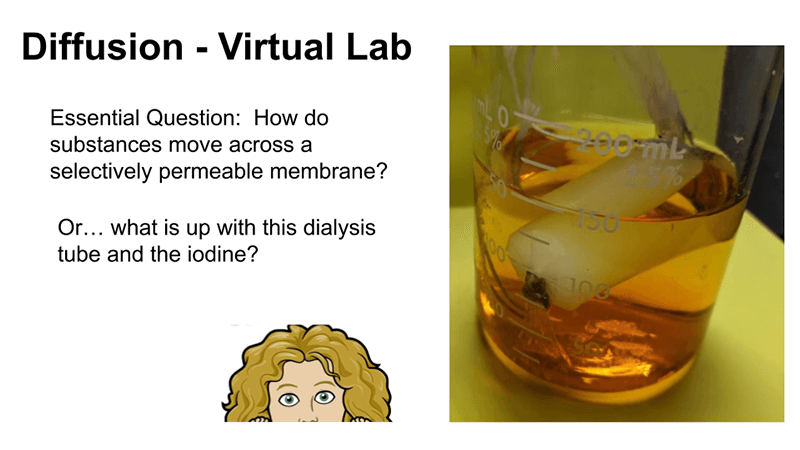

The diffusion lab has been a yearly activity in my biology class as part of a unit on cells and cell transport. Students fill a bag with starch and water and then submerge it in a solution of iodine and observe what happens. The iodine diffuses across the plastic bag and turns the starch purple.

If students are absent for the lab, they can complete a Google Slides activity that shows the step-by-step process of the investigation. They watch videos showing the set-up and observe a time-lapse video that shows the starch in the bag turn purple. Why does it turn purple?

Iodine in the beaker is a small molecule that can move through the plastic of the bag. When it encounters the starch solution, the color will change to purple. This is an excellent model for how diffusion occurs across semi-permeable membranes.

In the slide activity, a dialysis tube was used instead of a plastic bag because the process occurs faster with the dialysis tube. In class, I use a cheaper option: plastic sandwich bags. Save time and frustration by making the bags ahead of time. Simply put a spoonful of starch in the bag and fill it with tap water. Tie the bag like a balloon. Students then place the bag into a beaker of water with a few drops of iodine.

Shannan Muskopf

This list provides a range of activities and demonstrations, together with background information and suggested teaching strategies, which explore diffusion. The use of models and analogies here can aid understanding and students should be challenged to use a simple particle model to explain what they observe.

The resources link to the following topics:

- diffusion in terms of the particle model

- diffusion in liquids and gases driven by differences in concentration

- Brownian motion in gases

Visit the secondary science webpage to access all lists: www.nationalstemcentre.org.uk/secondaryscience

Whilst this list provides a source of information and ideas for experimental work, it is important to note that recommendations can date very quickly. Do NOT follow suggestions which conflict with current advice from CLEAPSS, SSERC or recent safety guides. eLibrary users are responsible for ensuring that any activity, including practical work, which they carry out is consistent with current regulations related to health and safety and that they carry out an appropriate risk assessment. Further information is provided in our Health and Safety guidance.

Quality Assured Category: Physics Publisher: Longman

Although slightly dated, this pupil book and teacher guide has some really well explained theory and good practicals that fit in with this topic. Each chapter also has a series of good written activities that could be taken and re-purposed in a more up to date way.

Perfumes and Smelling

Quality Assured Category: Science Publisher: Association for Science Education (ASE)

This is a really good set of activities based around perfumes. There are instructions for a perfume circus activity which would make a good starter activity and also for two different ways of making perfume as class practicals. There are full teacher and technician notes and a set of student worksheets.

Diffusion with jelly cubes

In this experiment, students can investigate diffusion by placing agar cubes of varying sizes in acid and observing the colour change. The webpage contains full teacher and technician notes.

Diffusion in liquids

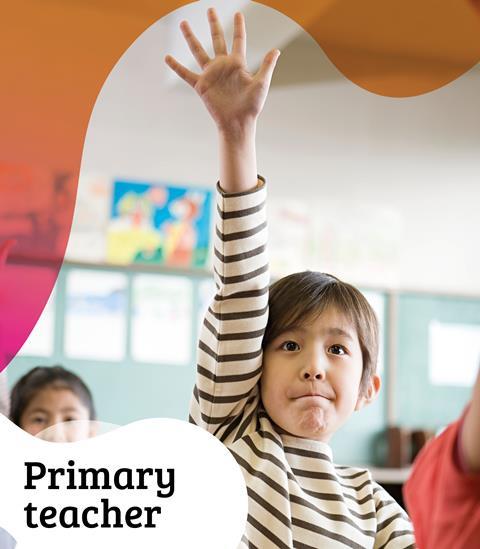

In this experiment, students place colourless crystals of lead nitrate and potassium iodide at opposite sides of a Petri dish of de-ionised water. As these substances dissolve and diffuse towards each other, students can observe clouds of yellow lead iodide forming, demonstrating that diffusion has taken place.

Brownian Motion

Quality Assured Category: Physics Publisher: National STEM Learning Centre and Network

This video demonstrates how to show the movement of particles by Brownian motion. Instead of using the traditional smoke cell, the video shows how Brownian motion can be observed in a suspension containing micrometre-diameter polystyrene spheres. Using a microscope and video camera, students can observe the motion of the polystyrene spheres. The video also shows how Brownian motion can be simulated using a vibrating loudspeaker, table tennis balls and a small balloon.


Diffusion in liquids

In association with Nuffield Foundation

Demonstrate that diffusion takes place in liquids by allowing lead nitrate and potassium iodide to form lead iodide as they diffuse towards each other in this practical

In this experiment, students place colourless crystals of lead nitrate and potassium iodide at opposite sides of a Petri dish of deionised water. As these substances dissolve and diffuse towards each other, students can observe clouds of yellow lead iodide forming, demonstrating that diffusion has taken place.

This practical activity takes around 30 minutes.

- Eye protection

- White tile or piece of white paper

- Lead nitrate (TOXIC, DANGEROUS FOR THE ENVIRONMENT), 1 crystal

- Potassium iodide, 1 crystal

- Deionised water

Greener alternatives

To reduce the use of toxic chemicals in this experiment, you can conduct it in microscale, using drops of water on a laminated sheet (find full instructions and video here), and/or replace lead nitrate with less toxic salts, e.g. sodium carbonate and barium chloride. More information is available from CLEAPSS.

Health, safety and technical notes

- Read our standard health and safety guidance.

- Wear eye protection throughout.

- Lead nitrate, Pb(NO₃)₂(s), (TOXIC, DANGEROUS FOR THE ENVIRONMENT) – see CLEAPSS Hazcard HC057a.

- Potassium iodide, KI(s) – see CLEAPSS Hazcard HC047b .

- Place a Petri dish on a white tile or piece of white paper. Fill it nearly to the top with deionised water.

- Using forceps, place a crystal of lead nitrate at one side of the petri dish and a crystal of potassium iodide at the other.

- Observe as the crystals begin to dissolve and a new compound is formed between them.

Source: Royal Society of Chemistry

As the crystals of potassium iodide and lead nitrate dissolve and diffuse, they will begin to form yellow lead iodide

Teaching notes

The lead nitrate and potassium iodide each dissolve and begin to diffuse through the water. When the lead ions and iodide ions meet they react to form solid yellow lead iodide which precipitates out of solution.

lead nitrate + potassium iodide → lead iodide + potassium nitrate

Pb²⁺(aq) + 2I⁻(aq) → PbI₂(s)

The precipitate does not form exactly between the two crystals. This is because the lead ion is heavier and diffuses more slowly through the liquid than the iodide ion.

Another experiment – a teacher demonstration providing an example of a solid–solid reaction – involves the same reaction but in the solid state.

Additional information

This is a resource from the Practical Chemistry project , developed by the Nuffield Foundation and the Royal Society of Chemistry. This collection of over 200 practical activities demonstrates a wide range of chemical concepts and processes. Each activity contains comprehensive information for teachers and technicians, including full technical notes and step-by-step procedures. Practical Chemistry activities accompany Practical Physics and Practical Biology .

The experiment is also part of the Royal Society of Chemistry’s Continuing Professional Development course: Chemistry for non-specialists .

© Nuffield Foundation and the Royal Society of Chemistry

- 11-14 years

- 14-16 years

- Practical experiments

- Physical chemistry

- Reactions and synthesis

Specification

- Precipitation is the reaction of two solutions to form an insoluble salt called a precipitate.

- Motion of particles in solids, liquids and gases.

- Diffusion (Graham's law not required).



Diffusion Lab Experiments

Chemistry Projects for Diffusion in Liquids

Diffusion is a physical phenomenon that occurs everywhere, and we barely notice it or understand how it works. However, a few simple experiments can reveal the mysterious nature of this simple phenomenon.

Preparing for the Experiments

Taking some time to set these experiments up can make your life much easier and allow you to better focus on the results of the experiment. First, grab three glass beakers. Make sure the beakers are transparent. Fill a large pitcher of water or do your experiments near a tap. Also, get three different colors of food dye. To be very precise, you will want a thermometer, but you don't need one unless you are picky. Also have a timer or stopwatch. Finally, make sure you have some way of heating or cooling the water before you start.

Observing Simple Diffusion

This is by far the most simple experiment. However, you'll have to know beforehand that diffusion is the propagation of a substance from an area of high concentration to an area of low concentration, the purpose of which is to reach a state of equilibrium, or a state in which there is an even concentration of a substance across a medium. Now that you know what diffusion is, you need to see it yourself. Take a beaker and fill it with water to around three-quarters. Now, simply pour a small amount of food dye into the water. Observe whether the dye diffuses from a high concentration to a low concentration and try to observe where those two states occur. This will give you a good idea of what diffusion looks like.

Testing How Temperature Affects Diffusion

Now, all your preparation will come to fruition. Fill all three beakers about three-quarters full with tap water. The tap water should be around 50 to 60 degrees Fahrenheit, or as close as you can get. Now, cool one beaker by placing it in a refrigerator or similar device. Heat a second beaker with a stove, microwave or, if you have one, a Bunsen burner. You can make the temperatures of all three beakers whatever you want, really. The important thing is that each beaker is around 20 degrees hotter than the next coldest one. Finally, put one color of dye in each beaker and observe the diffusion. Your objective in this experiment should be to measure how fast each dye diffuses through each temperature of water. Make sure to write down how fast the dye diffuses in each temperature of water.
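If you prefer to work in Celsius, the Fahrenheit figures above can be converted with a few lines of Python. This is only a convenience sketch; the function names are illustrative and not part of the original activity. Note that a temperature difference converts without the 32-degree offset.

```python
def fahrenheit_to_celsius(temp_f: float) -> float:
    """Convert an absolute temperature from Fahrenheit to Celsius."""
    return (temp_f - 32.0) * 5.0 / 9.0


def fahrenheit_delta_to_celsius(delta_f: float) -> float:
    """Convert a temperature difference; no 32-degree offset applies."""
    return delta_f * 5.0 / 9.0


if __name__ == "__main__":
    for temp_f in (50.0, 60.0):
        print(f"{temp_f:.0f} F = {fahrenheit_to_celsius(temp_f):.1f} C")
    print(f"20 F step = {fahrenheit_delta_to_celsius(20.0):.1f} C step")
```

So the suggested tap water is roughly 10 to 16 degrees Celsius, and the 20-degree steps between beakers are about 11 Celsius degrees each.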


About the Author

David Scott has been a firefighter for the Seattle Fire Department's Technical Rescue Team for almost 20 years. He has been writing primarily since 2005, but did author the book, "The White River Ranger District Trail Guide" in 1988. In addition to his work for Demand Studios, Scott spends much of his time writing poetry and a novel.


Simple Diffusion Experiment

Introduction: Simple Diffusion Experiment

The simple diffusion experiment by Faisal Alwabel in Dhahran Ahliyya Schools MYP 9/C

Step 1: Research Question

How does temperature affect the rate of diffusion?

Step 2: My Hypothesis

•My hypothesis is that:

The lower the temperature of the water, the slower the diffusion rate and the longer diffusion will take; the higher the temperature, the faster the diffusion rate and the less time diffusion in the beaker will take, because the increase in the kinetic energy of the particles makes them mix more quickly.

Step 3: Variables (Dependent / Independent / Controlled)

Dependent variable: Rate of diffusion

•Independent variable: Temperature

•Controlled variables: Food coloring drop, time, amount of water for each glass, amount of food coloring

Step 4: Background Research

•What is diffusion? Diffusion is the movement of molecules from a place where they are at a higher concentration to an area with a low concentration, and it works by itself without doing anything to it like shaking and stirring.

•How Does diffusion work? In gases and liquids, the particles move randomly from place to place, and the particles collide with each other or with their container and then the particles are spread to the whole container.

Step 5: Materials

•The materials I will use are:

• 3 beakers

• Water at three temperatures: one cold, one boiled / hot, one at room temperature (30 °C)

• Food coloring

Step 6: Procedure

•1. First of all, I made sure that I had 3 regular spoons for 3 food coloring drops.

•2. Next, I made sure that each beaker of water was at the right temperature (one boiled, one at room temperature, 30 °C, and one cold, approximately 15 °C) and that each held 300 ml.

•3. Then I set the timer for 5 minutes.

•4. After that, I put one drop of food coloring into each beaker and started the timer.

•5. I watched the beakers throughout and, after 5 minutes, took photos of all 3.

Step 7: Data

•I saw that the food coloring drop took only 30 seconds to spread through the hot / boiled water. The room temperature water (30 °C) needed approximately 2.5 minutes for the food coloring to spread through it. Finally, the 5 minutes ended, BUT the food coloring had not mixed well with the cold water and there were still many low-concentration areas.

Step 8: Data Analysis

•I found out that there is a huge difference between the three beakers (less so between the hot and room temperature ones). The food coloring mixed with the hot water really quickly, and from that I can say that the rate of diffusion increases as the kinetic energy increases. Similarly, the beaker with the 30 °C water behaved much like the boiled water and the food coloring spread throughout, but it took 2 minutes longer. Lastly, the cold water needed more than 5 minutes: by the end the food coloring had still not spread throughout, and there were many areas with low concentration.
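The comparison above can be made a little more quantitative by turning each mixing time into an approximate rate (1 ÷ time). The sketch below uses only the times reported in Step 7; the variable and function names are illustrative, and the cold beaker's 300 s is a lower bound because mixing had not finished when the timer stopped.

```python
# Mixing times reported in Step 7, in seconds. The cold beaker had not
# finished mixing after 5 minutes, so 300 s is only a lower bound.
mixing_time_s = {"hot": 30.0, "room (30 C)": 150.0, "cold (15 C)": 300.0}
lower_bound_only = {"cold (15 C)"}


def rate_per_minute(time_s):
    """Approximate rate as 1 / time, expressed per minute."""
    return 60.0 / time_s


rates = {name: rate_per_minute(t) for name, t in mixing_time_s.items()}

if __name__ == "__main__":
    for name, rate in rates.items():
        # A lower bound on the time gives an upper bound on the rate.
        relation = "<" if name in lower_bound_only else "="
        print(f"{name:12s} rate {relation} {rate:.2f} per minute")
```

On these figures the hot water mixes at about 2 per minute and the room temperature water at about 0.4 per minute, a factor of five, while the cold water is below 0.2 per minute.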

Step 9: Results

•After finishing the experiment, I can start with the results that came out and they are:

•Diffusion rate increases as kinetic energy increases and by that I can say that the boiled / hot water has the highest rate of diffusion because of the kinetic energy inside it.

•The diffusion rate in cold water would be really low because of the small amount of kinetic energy in it, so the water molecules and the food coloring mix only slowly.

Step 10: Conclusion

•In conclusion, as you remember my research question was that “How does temperature affect the rate of diffusion?” and I answered it in my data analysis where the rate of diffusion in hot water is high and the rate of diffusion increases as the kinetic energy increases.

•And even my hypothesis was right where I stated that the higher the temperature the faster diffusion rate inside the water and less time of diffusion in the beaker will take because of the increase of the kinetic energy in the particles so they will mix more quickly.

Step 11: Application

•This is a really important subject to study in science because diffusion happens all the time: oxygen and carbon dioxide diffuse in our lungs, and water, salts, and waste diffuse in the kidneys.

Step 12: Evaluation

•The research ATL skill was really helpful in this experiment: for the background research I had to search for information that helps the reader understand the process that takes place in the experiment.


Sven Elflein About Blog

A practical guide to diffusion models.

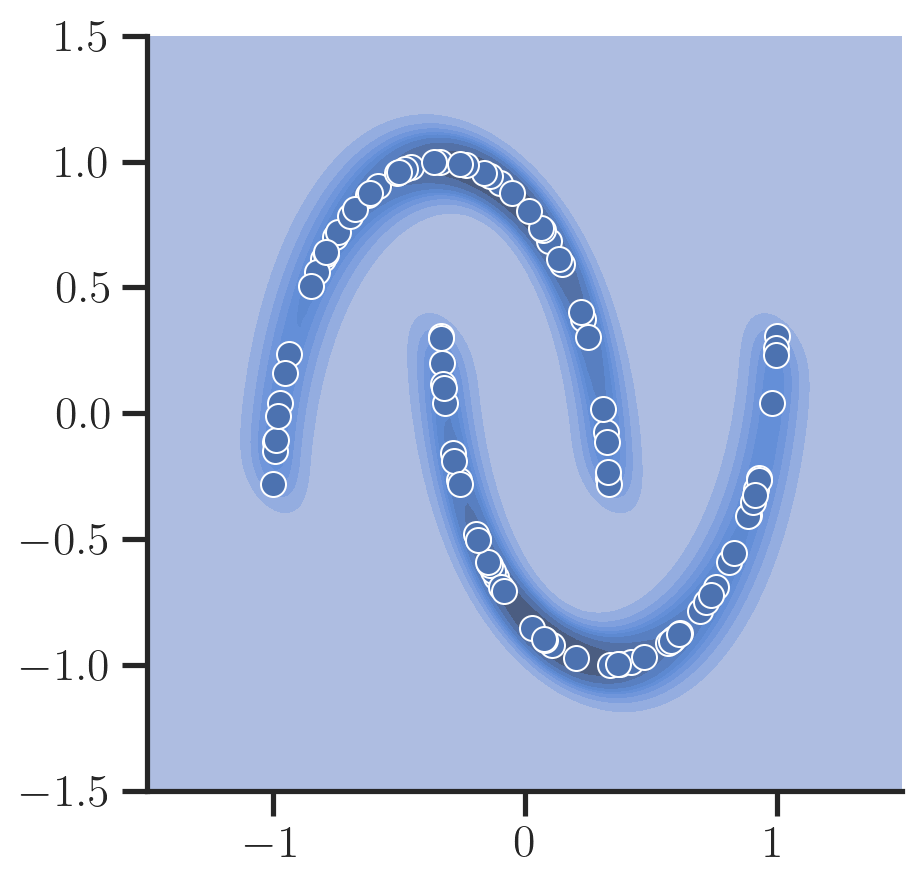

The motivation of this blog post is to provide an intuition and a practical guide to train a (simple) diffusion model [Sohl-Dickstein et al. 2015] together with the respective code leveraging PyTorch. If you are interested in a more mathematical description with proofs I can highly recommend [Luo 2022].

In general, the goal of a diffusion model is to be able to generate novel data after being trained on data points of that distribution.

Here, let’s consider a simple 2D toy dataset provided by scikit-learn to make this example as simple as possible:

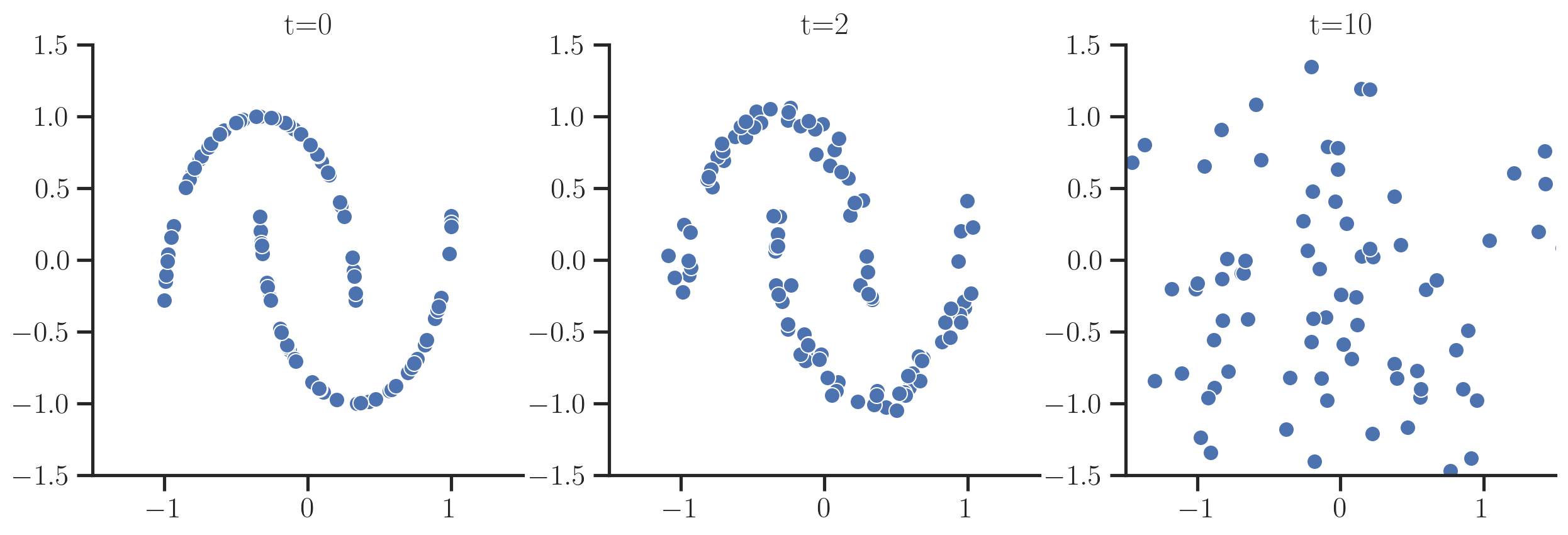

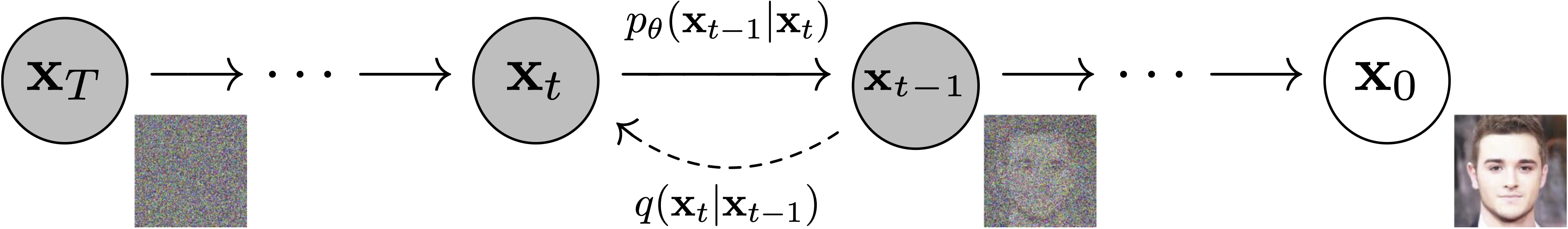

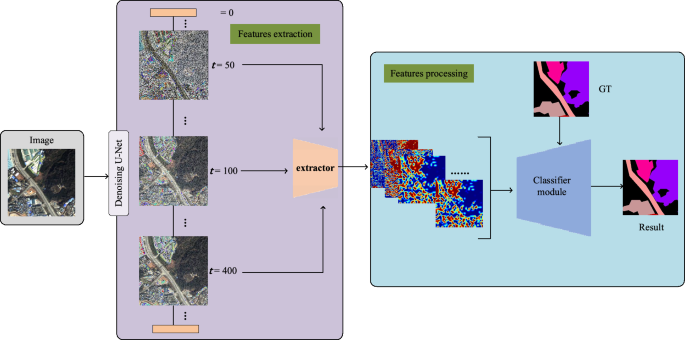

Diffusion models define a forward and backward process:

- the forward process gradually adds noise to the data until the original data is indistinguishable (one arrives at a standard normal distribution $N(0, \mathbf{I})$)

- the backward process aims to reverse the forward process, i.e., it starts from noise and gradually tries to restore the data

To generate new samples by starting from random noise, one aims to learn the backward process.

To be able to start training a model that learns this backward process, we first need to know how to do the forward process.

The forward process adds noise at every step $t$ controlled by parameters \(\{\beta_t\}_{t=1, \dots, T}, \beta_{t-1} < \beta_t, \beta_T = 1\):
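Written in the standard DDPM notation of [Ho et al. 2020] (assumed here; it is consistent with the $\beta_t$ defined above), one noising step is:

$$
q(\mathbf{x}_t \mid \mathbf{x}_{t-1}) = \mathcal{N}\left(\mathbf{x}_t;\ \sqrt{1 - \beta_t}\,\mathbf{x}_{t-1},\ \beta_t \mathbf{I}\right)
$$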

As \(t \rightarrow T\) this distribution becomes a multi-variate Gaussian distribution \(\mathcal{N}(0, \mathbf{I})\).

So one starts with the original data samples $x_0$ and then gradually adds noise to the samples:

The cool thing about this being Gaussian noise is that instead of simulating this forward process by iteratively sampling noise, one can derive a closed form for the distribution at a certain $t$ given the original data point $x_0$ so one has to only sample noise once:
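In the same standard notation (again an assumption, chosen to match the definitions that follow), the closed form and the corresponding one-shot sampling rule are:

$$
q(\mathbf{x}_t \mid \mathbf{x}_0) = \mathcal{N}\left(\mathbf{x}_t;\ \sqrt{\bar{\alpha}_t}\,\mathbf{x}_0,\ (1 - \bar{\alpha}_t)\,\mathbf{I}\right),
\qquad
\mathbf{x}_t = \sqrt{\bar{\alpha}_t}\,\mathbf{x}_0 + \sqrt{1 - \bar{\alpha}_t}\,\epsilon_0
$$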

with $\alpha_t = 1 - \beta_t$ and $\bar{\alpha}_t = \prod_{s = 1}^t \alpha_s$.

Let’s implement this:
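As a minimal sketch of the closed-form forward process, the snippet below uses plain Python rather than PyTorch so that it runs without extra dependencies. The cubic schedule, the value of `T`, the sample point, and the names `q_sample` and `alpha_bars` are assumptions made for illustration, not the code from the accompanying notebook.

```python
import math
import random

T = 10  # number of diffusion steps (illustrative choice)

# Assumed schedule: beta_t rises polynomially and reaches beta_T = 1.
betas = [(t / T) ** 3 for t in range(1, T + 1)]
alphas = [1.0 - b for b in betas]

# alpha_bars[t - 1] holds the running product alpha_1 * ... * alpha_t.
alpha_bars = []
running = 1.0
for a in alphas:
    running *= a
    alpha_bars.append(running)


def q_sample(x0, t, rng):
    """Draw x_t ~ q(x_t | x_0) in one shot; returns (x_t, noise)."""
    a_bar = alpha_bars[t - 1]
    noise = [rng.gauss(0.0, 1.0) for _ in x0]
    xt = [math.sqrt(a_bar) * x + math.sqrt(1.0 - a_bar) * e
          for x, e in zip(x0, noise)]
    return xt, noise


if __name__ == "__main__":
    rng = random.Random(0)
    x0 = [0.5, -0.25]  # one hypothetical 2D data point
    for t in (1, T // 2, T):
        xt, _ = q_sample(x0, t, rng)
        print(t, [round(v, 3) for v in xt])
```

Because $\beta_T = 1$ makes $\bar{\alpha}_T = 0$, the sample at $t = T$ is pure noise and carries no trace of $x_0$.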

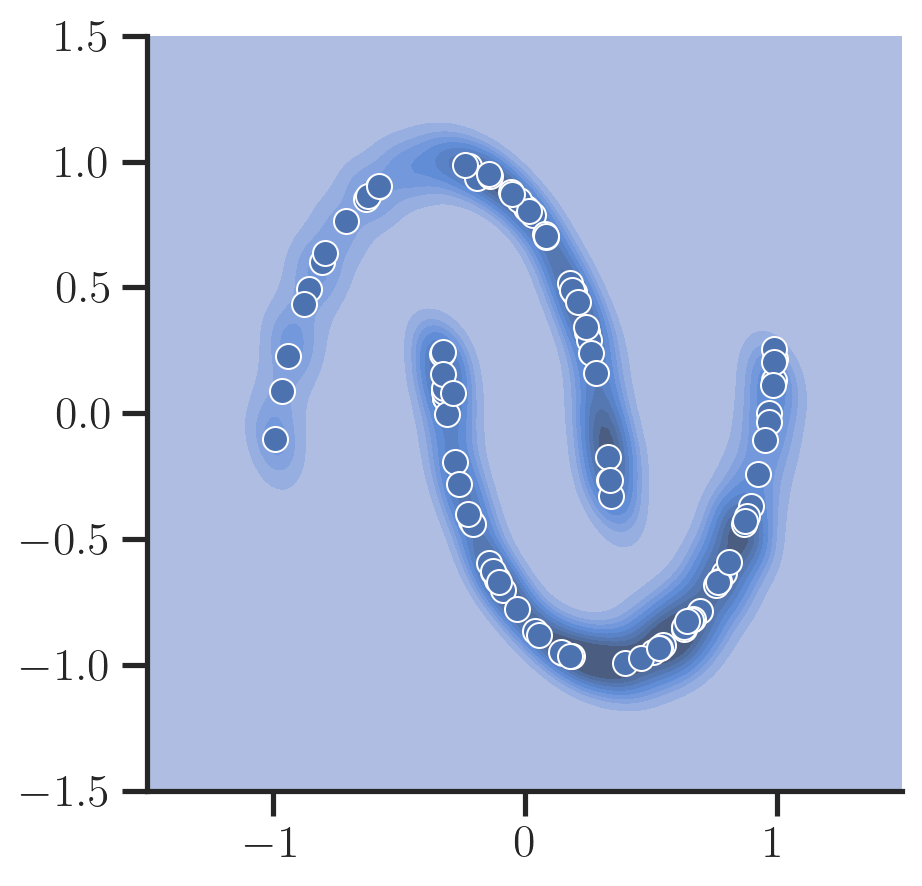

Next, we want to train a model that reverses that process.

For this, one can show that there is also a closed form for the less noisy version $x_{t-1}$ given the next sample $x_t$ and the original sample $x_0$.
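Following the derivation in [Luo 2022] and the noise parameterization of [Ho et al. 2020] (the notation here is assumed from those references), this posterior is Gaussian:

$$
q(\mathbf{x}_{t-1} \mid \mathbf{x}_t, \mathbf{x}_0) = \mathcal{N}\left(\mathbf{x}_{t-1};\ \frac{1}{\sqrt{\alpha_t}}\left(\mathbf{x}_t - \frac{1 - \alpha_t}{\sqrt{1 - \bar{\alpha}_t}}\,\epsilon_0\right),\ \sigma_t^2 \mathbf{I}\right)
$$

where $\sigma_t^2 = \frac{(1 - \alpha_t)(1 - \bar{\alpha}_{t-1})}{1 - \bar{\alpha}_t}$ is the fixed posterior variance (taking $\bar{\alpha}_0 = 1$)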

and $\epsilon_0 \sim \mathcal{N}(0, \mathbf{I})$ is the noise drawn to perturb the original data $x_0$ 1 .

Obviously, we cannot use this directly to generate new data since this relies on knowing the original datapoint $x_0$ in the first place but we can use it to generate the ground truth data for training a model that does not rely on $\mathbf{x}_0$ and predicts $\epsilon_0$ from the noisy data $\mathbf{x}_t$ and $t$ alone 2 .

Let’s define a small neural network $\epsilon_{\mathbf{\theta}}(\mathbf{x}_t, t)$ where $\mathbf{\theta}$ are the parameters of the network that does just that:

Here, we encode the timestep of the diffusion process $t$ as a one-hot vector, pass it through a single layer, and then concatenate this information with the noisy data.
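To make the input encoding concrete, here is a small sketch of just that part in plain Python. It assumes a bias-free linear layer and an embedding width of 4; `EMBED_DIM`, `time_weights`, and the function names are hypothetical, and the layers that follow the concatenation are omitted.

```python
import random

T = 10          # number of diffusion steps
EMBED_DIM = 4   # hypothetical width of the time embedding

rng = random.Random(0)
# Weights of the single (bias-free) linear layer: one row per time step.
time_weights = [[rng.gauss(0.0, 1.0) for _ in range(EMBED_DIM)]
                for _ in range(T)]


def one_hot(t, num_steps):
    """One-hot vector for step t, with t counted from 1."""
    return [1.0 if i == t - 1 else 0.0 for i in range(num_steps)]


def embed_time(t):
    """Apply the linear layer to the one-hot vector for step t."""
    hot = one_hot(t, T)
    return [sum(h * row[j] for h, row in zip(hot, time_weights))
            for j in range(EMBED_DIM)]


def network_input(xt, t):
    """Concatenate the noisy point with its time embedding."""
    return list(xt) + embed_time(t)


if __name__ == "__main__":
    print(network_input([0.1, -0.3], 3))
```

Multiplying a one-hot vector by a weight matrix simply selects one row, so this layer acts as a learned lookup table with one embedding per timestep.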

Next up: training the model to predict the noise. For this, one can just sample $t$’s, use the forward process to generate the noisy sample $x_t$ together with the noise $\epsilon_0$, and train the model to reduce the mean squared error between the predicted noise and $\epsilon_0$.
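The sketch below shows how one training example and its loss could be assembled, in plain Python and without the gradient step. The schedule, the data point, and `zero_model` (a stand-in for the network that always predicts zero noise) are hypothetical placeholders, not the post's actual training code.

```python
import math
import random


def make_alpha_bars(betas):
    """Running products of (1 - beta_t) for t = 1..T."""
    out, running = [], 1.0
    for b in betas:
        running *= 1.0 - b
        out.append(running)
    return out


def training_example(x0, alpha_bars, rng):
    """Sample t uniformly, noise x0 to x_t, and return (x_t, t, noise)."""
    t = rng.randint(1, len(alpha_bars))
    a_bar = alpha_bars[t - 1]
    noise = [rng.gauss(0.0, 1.0) for _ in x0]
    xt = [math.sqrt(a_bar) * x + math.sqrt(1.0 - a_bar) * e
          for x, e in zip(x0, noise)]
    return xt, t, noise


def mse(predicted, target):
    """Mean squared error between predicted and true noise."""
    return sum((p - q) ** 2 for p, q in zip(predicted, target)) / len(target)


def zero_model(xt, t):
    """Hypothetical placeholder for the network: predicts zero noise."""
    return [0.0 for _ in xt]


if __name__ == "__main__":
    rng = random.Random(0)
    T = 10
    alpha_bars = make_alpha_bars([(t / T) ** 3 for t in range(1, T + 1)])
    xt, t, noise = training_example([0.5, -0.25], alpha_bars, rng)
    print("t =", t, "loss =", mse(zero_model(xt, t), noise))
```

In a real training loop the placeholder would be the network $\epsilon_{\mathbf{\theta}}$, and this loss would be averaged over a batch and minimized with an optimizer.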

After training the model to predict the noise $\epsilon$, we can simply iteratively run the reverse process to predict $\mathbf{x}_{t-1}$ from $\mathbf{x}_t$, starting from random noise $\mathbf{x}_T \sim \mathcal{N}(0, \mathbf{I})$, using the closed form of the reverse process given earlier, where we set the mean:
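Assuming the noise-prediction parameterization of [Ho et al. 2020], which matches the network $\epsilon_{\mathbf{\theta}}$ defined above, the mean replaces the true noise with the network's prediction:

$$
\mu_{\mathbf{\theta}}(\mathbf{x}_t, t) = \frac{1}{\sqrt{\alpha_t}}\left(\mathbf{x}_t - \frac{1 - \alpha_t}{\sqrt{1 - \bar{\alpha}_t}}\,\epsilon_{\mathbf{\theta}}(\mathbf{x}_t, t)\right)
$$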

Now, let’s sample new data points and plot them:
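A hedged sketch of the sampling loop in plain Python follows. It assumes the standard DDPM mean and fixed posterior variance, and it caps the schedule slightly below 1 (an assumption of this sketch) so that $1/\sqrt{\alpha_t}$ stays finite at the first reverse step. `point_mass_oracle` is a hypothetical stand-in for a trained network: it returns the exact noise for a dataset consisting of a single point, which makes the loop easy to check.

```python
import math
import random


def make_schedule(num_steps, beta_max=0.999):
    """Assumed cubic schedule, capped below 1 to keep 1/sqrt(alpha_t) finite."""
    betas = [min((t / num_steps) ** 3, beta_max)
             for t in range(1, num_steps + 1)]
    alphas = [1.0 - b for b in betas]
    alpha_bars, running = [], 1.0
    for a in alphas:
        running *= a
        alpha_bars.append(running)
    return alphas, alpha_bars


def sample(eps_model, dim, alphas, alpha_bars, rng):
    """Run the reverse process from x_T ~ N(0, I) down to x_0."""
    x = [rng.gauss(0.0, 1.0) for _ in range(dim)]
    for t in range(len(alphas), 0, -1):
        a, a_bar = alphas[t - 1], alpha_bars[t - 1]
        a_bar_prev = alpha_bars[t - 2] if t > 1 else 1.0
        eps = eps_model(x, t)
        coef = (1.0 - a) / math.sqrt(1.0 - a_bar)
        mean = [(xi - coef * ei) / math.sqrt(a) for xi, ei in zip(x, eps)]
        # Fixed posterior variance; it is exactly zero at t = 1.
        var = (1.0 - a) * (1.0 - a_bar_prev) / (1.0 - a_bar)
        x = [m + math.sqrt(var) * rng.gauss(0.0, 1.0) for m in mean]
    return x


def point_mass_oracle(center, alpha_bars):
    """Exact noise predictor when every data point equals `center`."""
    def eps_model(x, t):
        a_bar = alpha_bars[t - 1]
        return [(xi - math.sqrt(a_bar) * ci) / math.sqrt(1.0 - a_bar)
                for xi, ci in zip(x, center)]
    return eps_model


if __name__ == "__main__":
    alphas, alpha_bars = make_schedule(10)
    oracle = point_mass_oracle([0.5, -0.25], alpha_bars)
    print(sample(oracle, 2, alphas, alpha_bars, random.Random(0)))
```

With the oracle, every run ends at the single data point up to rounding error, which is a useful sanity check before swapping in a learned model.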

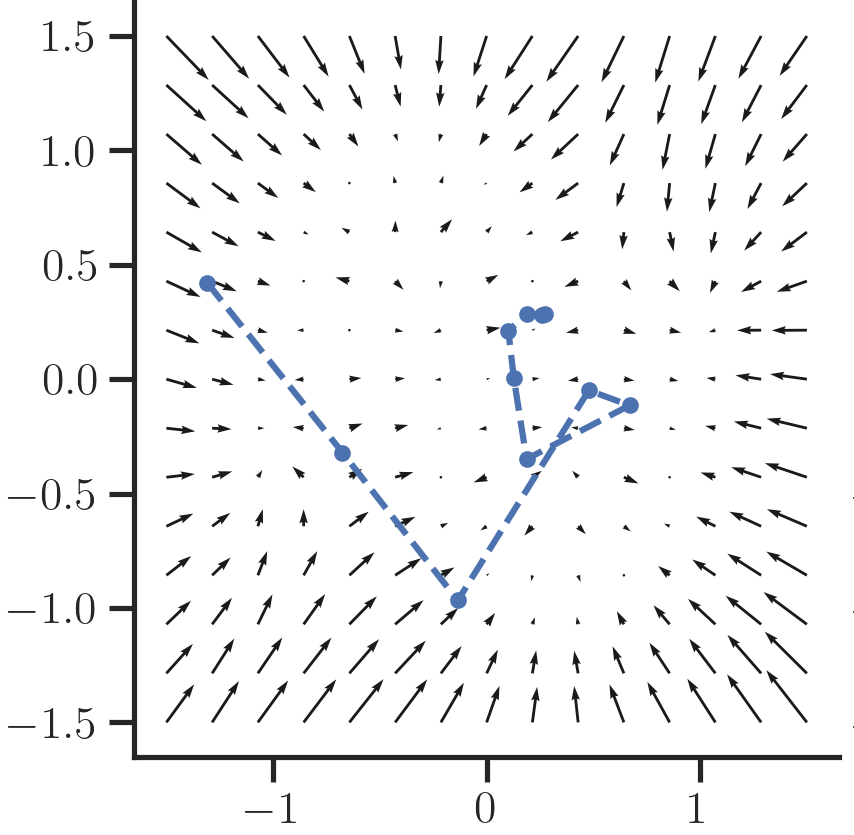

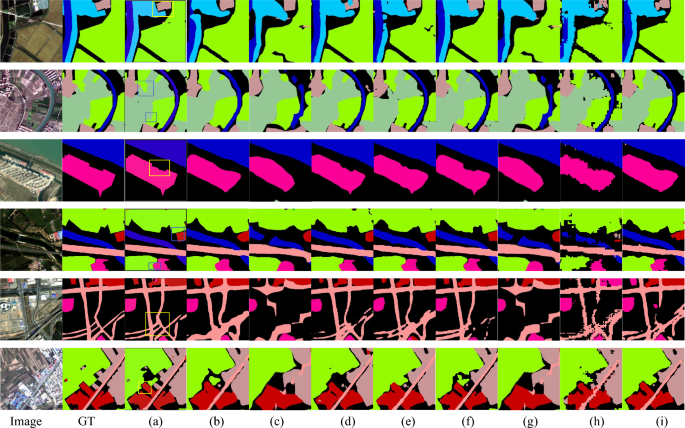

We can also inspect the (negative) direction of the predicted noise vector at a particular timestep $t$ for each position in a grid to visualize the dynamics a sample follows during the reverse process as a vector field:

One can see that as $t \rightarrow 0$ more fine-grained structure emerges that guides the sample to the original data manifold. At $t=T$ samples are guided coarsely towards the center as the signal is still very noisy and hard for the network to predict.

Working on this small dataset already revealed some important things that one has to consider when training diffusion models. In particular, in the beginning when I started to implement this from the paper description, a large number of diffusion steps ($T=1000$) was required to yield good results.

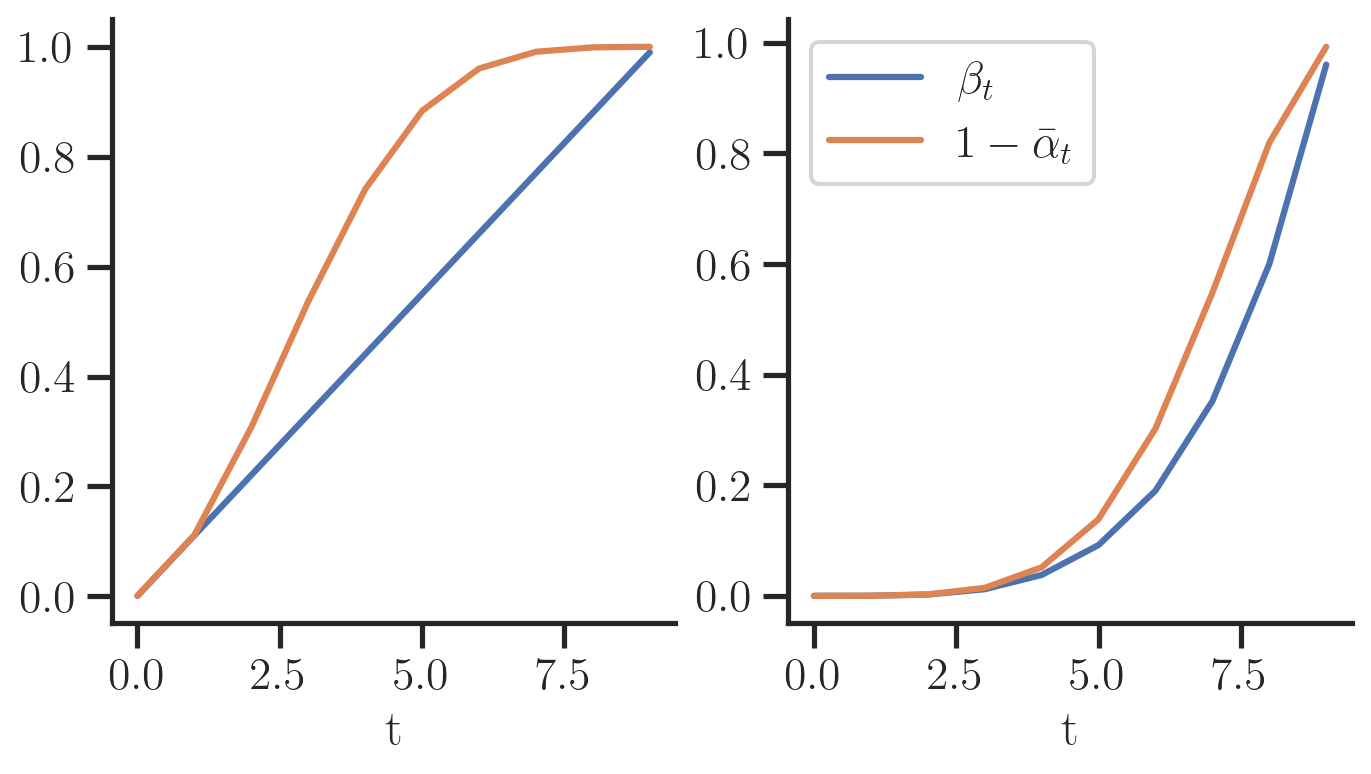

Further looking into the literature and appendix of the papers revealed some things that brought down the diffusion steps required to $T=10$:

- It is important to perform linear scaling of the input data into the range $[-1, 1]$. Standardizing the input data (i.e., subtracting the mean and dividing by the standard dev.) as it is usually done for neural networks yielded worse results

- The variance schedule ($\{\beta_t\}_t$) ideally has small changes towards $t=0$ such that the noise is not too much for the network to reconstruct, i.e., it can learn fine-grained details of the data. This was already discovered in [Nichol and Dhariwal 2021]; however, it is interesting to see that this insight can be shown on a toy dataset already instead of training expensive image models. Fig. 5 shows how the variance of the forward process $1 - \bar{\alpha}_t$ evolves when $\beta_t$ is set linear (left) or polynomial (right). The right setting works much better in practice since the perturbation of the input does not happen too fast.
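The effect of the schedule on the forward variance $1 - \bar{\alpha}_t$ can be checked numerically. The sketch below compares a linear ramp with a cubic one for $T=10$; both schedule formulas are assumptions made for illustration, since the exact schedules behind the figure are not given here.

```python
def forward_variance(betas):
    """Return 1 - alpha_bar_t for t = 1..T."""
    out, running = [], 1.0
    for b in betas:
        running *= 1.0 - b
        out.append(1.0 - running)
    return out


T = 10
linear_betas = [t / T for t in range(1, T + 1)]       # assumed linear ramp to 1
poly_betas = [(t / T) ** 3 for t in range(1, T + 1)]  # assumed cubic ramp to 1

linear_var = forward_variance(linear_betas)
poly_var = forward_variance(poly_betas)

if __name__ == "__main__":
    for t, (lv, pv) in enumerate(zip(linear_var, poly_var), start=1):
        print(f"t={t:2d}  linear={lv:.3f}  polynomial={pv:.3f}")
```

Under these assumed schedules the linear ramp has already destroyed about half of the signal by $t=3$, whereas the cubic ramp has added only a few percent of noise variance by then, leaving the early steps for fine-grained detail.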

Check out the full notebook which this blog post is based on here .

- Sohl-Dickstein, J., Weiss, E., Maheswaranathan, N., and Ganguli, S. 2015. Deep unsupervised learning using nonequilibrium thermodynamics. International Conference on Machine Learning , PMLR, 2256–2265.

- Luo, C. 2022. Understanding Diffusion Models: A Unified Perspective.

- Nichol, A.Q. and Dhariwal, P. 2021. Improved Denoising Diffusion Probabilistic Models. International Conference on Machine Learning , PMLR, 8162–8171.

- Ho, J., Jain, A., and Abbeel, P. 2020. Denoising Diffusion Probabilistic Models. Advances in Neural Information Processing Systems 33 , 6840–6851.

This is one possible parameterization of the mean that is most effective based on the experiments in [Ho et al. 2020]. [Luo 2022] summarizes two other parameterizations in the literature, e.g., regressing the mean directly. ↩

Here we treat the variances as fixed. [Nichol and Dhariwal 2021] propose to learn these with an additional objective. ↩


Practical: Investigating the Rate of Diffusion (OCR A Level Biology)


- It is possible to investigate the effect of certain factors on the rate of diffusion

- Different apparatus can be used to do this, such as Visking tubing and cubes of agar

Practical 1: Investigating the rate of diffusion using visking tubing

- Visking tubing (sometimes referred to as dialysis tubing) is a non-living partially permeable membrane made from cellulose

- Pores in this membrane are small enough to prevent the passage of large molecules (such as starch and sucrose ) but allow smaller molecules (such as glucose ) to pass through by diffusion

- Filling a section of Visking tubing with a mixture of starch and glucose solutions

- Suspending the tubing in a boiling tube of water for a set period of time

- Testing the water outside of the visking tubing at regular intervals for the presence of starch and glucose to monitor whether the diffusion of either substance out of the tubing has occurred

- The results should indicate that glucose, but not starch, diffuses out of the tubing

An example of how to set up an experiment to investigate diffusion

- Comparisons of the glucose concentration between the time intervals can be made using a set of colour standards (produced by known glucose concentrations) or a colorimeter to give a more quantitative set of results

- A graph could be drawn showing how the rate of diffusion changes with the concentration gradient between the inside and outside of the tubing

Practical 2: Investigating the rate of diffusion using agar

- The effect of surface area to volume ratio on the rate of diffusion can be investigated by timing the diffusion of ions through different sized cubes of agar

- Purple agar can be created if it is made up with very dilute sodium hydroxide solution and Universal Indicator

- Alternatively, the agar can be made up with Universal Indicator only

- The acid should have a higher molarity than the sodium hydroxide so that its diffusion can be monitored by a change in colour of the indicator in the agar blocks

- The time taken for the acid to completely change the colour of the indicator in the agar blocks

- The distance travelled into the block by the acid (shown by the change in colour of the indicator) in a given time period (e.g. 5 minutes)

- These times can be converted to rates (1 ÷ time taken)

- A graph could be drawn showing how the rate of diffusion (rate of colour change) changes with the surface area to volume ratio of the agar cubes

An example of how to set up an experiment to investigate the effect of changing surface area to volume ratio on the rate of diffusion

When an agar cube (or, for example, a biological cell or organism) increases in size, the volume increases faster than the surface area, because the volume scales with the cube of the side length whereas the surface area scales with its square. When an agar cube (or biological cell / organism) has more volume but proportionately less surface area, diffusion takes longer and is less effective. In more precise scientific terms, the greater the surface area to volume ratio, the faster the rate of diffusion!
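The scaling argument is easy to check for cubes. The short sketch below computes the surface area to volume ratio for three hypothetical cube sizes; the side lengths and function names are illustrative choices, not values prescribed by the practical.

```python
def cube_surface_area(side_cm):
    """Total area of the six faces, in cm^2."""
    return 6.0 * side_cm ** 2


def cube_volume(side_cm):
    """Volume of the cube, in cm^3."""
    return side_cm ** 3


def sa_to_volume_ratio(side_cm):
    """Surface area to volume ratio, in cm^-1 (equal to 6 / side)."""
    return cube_surface_area(side_cm) / cube_volume(side_cm)


if __name__ == "__main__":
    for side in (1.0, 2.0, 3.0):  # hypothetical cube sizes in cm
        print(f"side {side:.0f} cm: SA = {cube_surface_area(side):.0f} cm^2, "
              f"V = {cube_volume(side):.0f} cm^3, "
              f"SA:V = {sa_to_volume_ratio(side):.0f} per cm")
```

The ratio falls from 6 to 3 to 2 per cm as the side grows from 1 cm to 3 cm, so each unit of volume in the largest cube is served by only a third of the surface that serves it in the smallest.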


Author: Alistair

Alistair graduated from Oxford University with a degree in Biological Sciences. He has taught GCSE/IGCSE Biology, as well as Biology and Environmental Systems & Societies for the International Baccalaureate Diploma Programme. While teaching in Oxford, Alistair completed his MA Education as Head of Department for Environmental Systems & Societies. Alistair has continued to pursue his interests in ecology and environmental science, recently gaining an MSc in Wildlife Biology & Conservation with Edinburgh Napier University.

Diffusion Course documentation

Unit 1: An Introduction to Diffusion Models


Welcome to Unit 1 of the Hugging Face Diffusion Models Course! In this unit, you will learn the basics of how diffusion models work and how to create your own using the 🤗 Diffusers library.

Start this Unit :rocket:

Here are the steps for this unit:

- Make sure you’ve signed up for this course so that you can be notified when new material is released.

- Read through the introductory material below as well as any of the additional resources that sound interesting.

- Check out the Introduction to Diffusers notebook below to put theory into practice with the 🤗 Diffusers library.

- Train and share your own diffusion model using the notebook or the linked training script.

- (Optional) Dive deeper with the Diffusion Models from Scratch notebook if you’re interested in seeing a minimal from-scratch implementation and exploring the different design decisions involved.

- (Optional) Check out this video for an informal run-through of the material for this unit.

:loudspeaker: Don’t forget to join the Discord , where you can discuss the material and share what you’ve made in the #diffusion-models-class channel.

What Are Diffusion Models?

Diffusion models are a relatively recent addition to a group of algorithms known as ‘generative models’. The goal of generative modeling is to learn to generate data, such as images or audio, given a number of training examples. A good generative model will create a diverse set of outputs that resemble the training data without being exact copies. How do diffusion models achieve this? Let’s focus on the image generation case for illustrative purposes.

The secret to diffusion models’ success is the iterative nature of the diffusion process. Generation begins with random noise, but this is gradually refined over a number of steps until an output image emerges. At each step, the model estimates how we could go from the current input to a completely denoised version. However, since we only make a small change at every step, any errors in this estimate at the early stages (where predicting the final output is extremely difficult) can be corrected in later updates.

Training the model is relatively straightforward compared to some other types of generative model. We repeatedly:

1. Load in some images from the training data.

2. Add noise, in different amounts. Remember, we want the model to do a good job estimating how to ‘fix’ (denoise) both extremely noisy images and images that are close to perfect.

3. Feed the noisy versions of the inputs into the model.

4. Evaluate how well the model does at denoising these inputs.

5. Use this information to update the model weights.
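As a rough illustration of the "add noise, in different amounts" step, here is a minimal sketch in plain Python. It assumes a DDPM-style linear noise schedule and the usual closed-form mixing of signal and Gaussian noise. Neither is spelled out in the text above, and the names `make_alpha_bars` and `add_noise` are made up for this example rather than taken from the diffusers library.

```python
import math
import random

def make_alpha_bars(num_steps=1000, beta_start=1e-4, beta_end=0.02):
    """Cumulative products of (1 - beta_t) for an assumed linear beta schedule."""
    alpha_bars = []
    running = 1.0
    for t in range(num_steps):
        beta = beta_start + (beta_end - beta_start) * t / (num_steps - 1)
        running *= 1.0 - beta
        alpha_bars.append(running)
    return alpha_bars

def add_noise(pixels, t, alpha_bars, rng):
    """Mix the clean pixels with Gaussian noise; larger t means more noise."""
    signal_scale = math.sqrt(alpha_bars[t])
    noise_scale = math.sqrt(1.0 - alpha_bars[t])
    noise = [rng.gauss(0.0, 1.0) for _ in pixels]
    noisy = [signal_scale * p + noise_scale * n for p, n in zip(pixels, noise)]
    return noisy, noise

alpha_bars = make_alpha_bars()
rng = random.Random(0)
image = [0.5, -0.25, 1.0, 0.0]  # a made-up 4-"pixel" image, for illustration only
slightly_noisy, _ = add_noise(image, 10, alpha_bars, rng)   # close to the original
very_noisy, _ = add_noise(image, 999, alpha_bars, rng)      # almost pure noise
```

In a real training loop, the noisy version and the timestep would be fed to the model, and the loss would compare the model's prediction with the noise that was actually added.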

To generate new images with a trained model, we begin with a completely random input and repeatedly feed it through the model, updating it each time by a small amount based on the model prediction. As we’ll see, there are a number of sampling methods that try to streamline this process so that we can generate good images with as few steps as possible.
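The shape of this loop can be sketched with a toy example. Everything here is an assumption for illustration: `toy_model` is a hypothetical stand-in that simply returns a known target instead of a trained network's prediction, and the fixed `step_size` update is a simplification, not one of the real sampling methods.

```python
import random

def toy_model(current, target):
    """Hypothetical stand-in for a trained network: guesses the clean output."""
    return list(target)

def sample(target, num_steps=50, step_size=0.1, seed=0):
    rng = random.Random(seed)
    # Begin with a completely random input.
    x = [rng.gauss(0.0, 1.0) for _ in target]
    for _ in range(num_steps):
        estimate = toy_model(x, target)  # estimate of the fully denoised version
        # Move only a small amount towards the estimate at each step.
        x = [xi + step_size * (ei - xi) for xi, ei in zip(x, estimate)]
    return x

result = sample([0.5, -0.25, 1.0, 0.0])
```

Because each update is small, a poor estimate early on would only nudge the sample slightly, which leaves room for later steps to correct it.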

We will show each of these steps in detail in the hands-on notebooks here in unit 1. In unit 2, we will look at how this process can be modified to add additional control over the model outputs through extra conditioning (such as a class label) or with techniques such as guidance. And units 3 and 4 will explore an extremely powerful diffusion model called Stable Diffusion, which can generate images given text descriptions.

Hands-On Notebooks

At this point, you know enough to get started with the accompanying notebooks! The two notebooks here come at the same idea in different ways.

| Chapter | Colab | Kaggle | Gradient | Studio Lab |
|---|---|---|---|---|
| Introduction to Diffusers | | | | |
| Diffusion Models from Scratch | | | | |

In Introduction to Diffusers, we show the different steps described above using building blocks from the diffusers library. You’ll quickly see how to create, train and sample your own diffusion models on whatever data you choose. By the end of the notebook, you’ll be able to read and modify the example training script to train diffusion models and share them with the world! This notebook also introduces the main exercise associated with this unit, where we will collectively attempt to figure out good ‘training recipes’ for diffusion models at different scales - see the next section for more info.

In Diffusion Models from Scratch, we show those same steps (adding noise to data, creating a model, training and sampling) but implemented from scratch in PyTorch as simply as possible. Then we compare this ‘toy example’ with the diffusers version, noting how the two differ and where improvements have been made. The goal here is to gain familiarity with the different components and the design decisions that go into them so that when you look at a new implementation you can quickly identify the key ideas.

Project Time

Now that you’ve got the basics down, have a go at training one or more diffusion models! Some suggestions are included at the end of the Introduction to Diffusers notebook. Make sure to share your results, training recipes and findings with the community so that we can collectively figure out the best ways to train these models.

Some Additional Resources

The Annotated Diffusion Model is a very in-depth walk-through of the code and theory behind DDPMs with maths and code showing all the different components. It also links to a number of papers for further reading.

Hugging Face documentation on Unconditional Image-Generation, with some examples of how to train diffusion models using the official training example script, including code showing how to create your own dataset.

AI Coffee Break video on Diffusion Models: https://www.youtube.com/watch?v=344w5h24-h8

Yannic Kilcher Video on DDPMs: https://www.youtube.com/watch?v=W-O7AZNzbzQ

Found more great resources? Let us know and we’ll add them to this list.

- BiologyDiscussion.com


Top 5 Experiments on Diffusion (With Diagram)


The following points highlight the top five experiments on diffusion. The experiments are: 1. Diffusion of Solid in Liquid 2. Diffusion of Liquid in Liquid 3. Diffusion of Gas in Gas 4. Comparative Rates of Diffusion of Different Solutes 5. Comparative Rates of Diffusion through Different Media.

Experiment # 1

Diffusion of solid in liquid:

Experiment:

A beaker is almost filled with water. Some crystals of CuSO₄ or KMnO₄ are dropped in carefully, without disturbing the water, and the beaker is left undisturbed for some time.

Observation:

The water becomes uniformly coloured: blue in the case of CuSO₄ and pink in the case of KMnO₄.

The molecules of the chemicals diffuse gradually from higher concentration to lower concentration and are uniformly distributed after some time. Here, CuSO₄ or KMnO₄ diffuses independently of water, and at the same time water diffuses independently of the chemicals.

Experiment # 2

Diffusion of liquid in liquid:

Two test tubes are taken. To one, a 30 mm depth of chloroform is added, and to the other, a 4 mm depth of water. Now a 4 mm depth of water is added to the first test tube and a 30 mm depth of ether to the other (in each tube the second liquid forms the upper layer: water floats on chloroform, and ether floats on water).

Ether must be added carefully to avoid disturbance of water. The tubes are stoppered tightly with corks. The position of liquid layers in each test tube is marked and their thickness measured.

The tubes are set aside for some time and the thickness of the liquids in each test tube is recorded at different intervals.

The rate of diffusion of ether is faster than that of chloroform into water as indicated by their respective volumes.

The rate of diffusion is inversely proportional (approximately) to the square root of density of the substance. Substances having higher molecular weights show slower diffusion rates than those having lower molecular weights.

In the present experiment ether (C₂H₅-O-C₂H₅, mol. wt. 74) diffuses faster into water than chloroform (CHCl₃, mol. wt. 119.5). This ratio (74 : 119.5) is known as diffusivity or coefficient of diffusion.
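To put a number on the inverse square-root relation stated above, here is a small sketch. It assumes the rate is inversely proportional to the square root of molecular weight (the form usually stated for gases as Graham's law) and uses the two molecular weights quoted in the text. The function name `relative_rate` is made up for this example.

```python
import math

def relative_rate(mol_wt_a, mol_wt_b):
    """Rate of A divided by rate of B, assuming rate is proportional to 1/sqrt(mol. wt.)."""
    return math.sqrt(mol_wt_b / mol_wt_a)

# Ether (mol. wt. 74) compared with chloroform (mol. wt. 119.5).
ratio = relative_rate(74.0, 119.5)
print(f"{ratio:.2f}")  # prints 1.27
```

Under this assumption, ether would be expected to diffuse roughly 1.27 times as fast as chloroform. The relation is only approximate for liquids, as the text notes.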

Experiment # 3

Diffusion of gas in gas:

One gas jar is filled with CO₂ (either by laboratory method: CaCO₃ + HCl, or by allowing living plant tissue to respire in a closed jar). Another jar is similarly filled with O₂ (either by laboratory method: MnO₂ + KClO₃, or by allowing green plant tissue to photosynthesize in a closed jar). The gases may be tested with a glowing match stick.

The oxygen jar is then inverted over the mouth of the carbon dioxide jar and made air-tight with grease. It is then allowed to remain for some time. The jars are carefully removed and tested with a glowing match stick.

The glowing match sticks flared up in both the jars.

The diffusion of CO₂ and O₂ takes place in both the jars until finally the concentrations are the same in both of them, making a mixture of CO₂ and O₂. Hence the glowing match sticks flared up in both the jars.

Experiment # 4

Comparative rates of diffusion of different solutes:

3.2 g of agar-agar is completely dissolved in 200 ml of boiling water and, when partially cooled, 30 drops of methyl red solution and a little 0.1 N NaOH are added to give an alkaline yellow colour. Three test tubes are filled three-fourths full with the agar mixture and allowed to set.

The agar in each tube is covered with a 4 ml portion of one of the following solutions, stoppered tightly and kept in a cool place:

(a) 4 ml of 0.4% methylene blue,

(b) 4 ml of 0.05 N HCl, and

(c) 2 ml of 0.1 N HCl plus 2 ml of 0.4% methylene blue.

The diffusion of various solutes is recorded in millimeters after 4 hours. The top of the gel should be marked before the above solutions are added.

The rate of diffusion of HCl alone (tube b) is faster than that of the combination of methylene blue and HCl (tube c), and the rate is lowest for methylene blue alone (tube a).

Different substances like gases, liquids and solutes can diffuse simultaneously and independently at different rates in the same place without interfering with each other.

HCl, being gaseous in nature and of lower molecular weight, diffuses much faster than methylene blue, which is a dye of higher molecular weight with an adsorptive property. Hence, in combination, the two substances diffuse more readily than methylene blue alone.

Experiment # 5

Comparative rates of diffusion through different media:


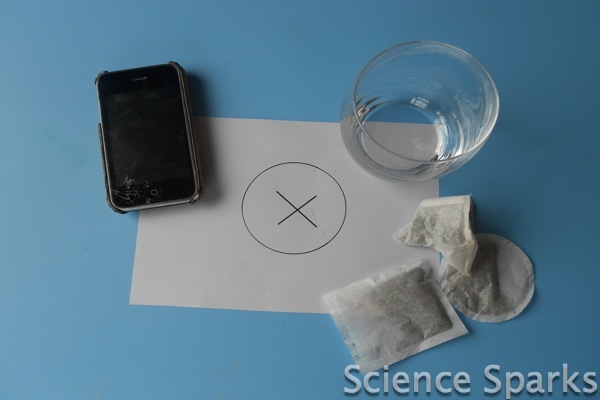

Tea bag diffusion!

January 2, 2012 By Emma Vanstone

I love a good cup of tea. In fact, I cannot actually function without one first thing in the morning. If you’re like me, then this investigation is definitely needed in your house so that you can ensure your kids are equipped with the best tea-making skills and have the best scientific knowledge to back up what makes a good cup of tea! This investigation looks at diffusion through the partially permeable membrane of a tea bag.

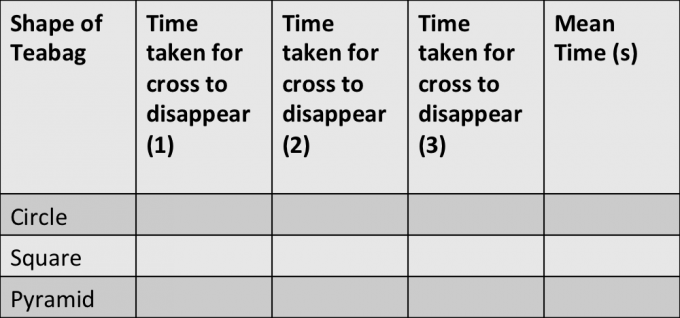

So firstly, we want to know what type of teabag makes the best drink?

Is it a square, a pyramid or a circle bag?

The activity involves using hot water, so adult supervision is essential.

Teabag diffusion

You’ll need

A stopwatch/timer

A piece of white paper

3 clear glass mugs (you are going to add hot water, so not thin ones that could crack)

Circle, square and pyramid tea bags

Thermometer or kettle

1. On the piece of white paper, draw a cross with a marker pen

2. Place one mug over the cross

3. Add the circle teabag

4. Boil water in the kettle and measure out 150 ml (if you have a thermometer, you can improve reliability by keeping the temperature constant)

5. Pour over the teabag and start the stopwatch

6. Time how long it takes for the cross to disappear

7. Repeat with the pyramid and square teabags.

8. To make the investigation results more accurate, repeat with each teabag three times.

Record your results in a table
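If you'd like to work out the averages with a little code, here is one possible sketch. The timings below are made-up placeholder values, not real measurements, so swap in your own results. The names `trials` and `summarise` are just for this example.

```python
from statistics import mean

# Placeholder timings in seconds (NOT measured data): three repeats per teabag shape.
trials = {
    "circle": [42.0, 45.0, 44.0],
    "square": [40.0, 41.0, 39.0],
    "pyramid": [30.0, 33.0, 32.0],
}

def summarise(trials):
    """Average the repeated timings for each teabag shape."""
    return {shape: mean(times) for shape, times in trials.items()}

averages = summarise(trials)
fastest = min(averages, key=averages.get)  # shape with the shortest average time

for shape, seconds in averages.items():
    print(f"{shape}: {seconds:.1f} s")
print("Quickest:", fastest)
```

Averaging the three repeats smooths out one-off slips with the stopwatch, which is why step 8 asks you to repeat each teabag.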

How does the tea diffuse into the water?

So which teabag was quicker?

You should find that the pyramid teabag was the quickest.

Why do you think this is?

As the water is added to the teabag, it causes the tea leaves to move and starts the diffusion of particles out of the leaves. Diffusion is defined as the movement of a substance from an area of higher concentration to an area of lower concentration. There are lots of tea molecules in the bag and none outside. The leaves themselves can’t pass through the bag, but their smaller particles containing colour and flavour can (the teabag itself acts as the partially permeable membrane). The heat from the hot water makes the molecules move much faster than they would at room temperature, so the colour and flavour spread in a shorter time than they would from a tea bag in room-temperature or cold water. The teabag shape affects the surface area: the pyramid, due to its 3D shape, provides more surface area for diffusion to take place and more space in the middle for the tea molecules to move around in, spreading the colour and flavour.

Ok, so now they know which is the best teabag to use and how to let it brew…so I suggest you ask for a nice cuppa now!

Last Updated on February 23, 2023 by Emma Vanstone

Safety Notice

Science Sparks (Wild Sparks Enterprises Ltd) are not liable for the actions or activity of any person who uses the information in this resource or in any of the suggested further resources. Science Sparks assume no liability with regard to injuries or damage to property that may occur as a result of using the information and carrying out the practical activities contained in this resource or in any of the suggested further resources.

These activities are designed to be carried out by children working with a parent, guardian or other appropriate adult. The adult involved is fully responsible for ensuring that the activities are carried out safely.
