Journal of Online Learning Research (JOLR)

ISSN: 2374-1473

The Journal of Online Learning Research (JOLR) is a peer-reviewed journal devoted to the theoretical, empirical, and pragmatic understanding of technologies and their impact on pedagogy and policy in primary and secondary (K-12) online and blended environments.

Three issues are published annually. Each submitted manuscript goes through a rigorous blind peer review process. If accepted, the article is then published in either the general research section or the international section. Additional information for each section is found below.

JOLR is Open Access, free of charge, and distributed by LearnTechLib-The Learning and Technology Library. It is the official journal of the Association for the Advancement of Computing in Education (AACE). All readers have free online access to all back issues via LearnTechLib-The Learning and Technology Library.

- Contents: Current Issue Contents & Abstracts or browse previous issues

- Subscribe: Individual or Library/Institution

- Submit: Author Guidelines

- Review: Review Policies, Reviewer Application, Review Board

- Editors: Mary Rice, Editor-in-Chief; Michael Barbour, Associate Editor

- Alert: Sign-up for New Issue Alerts

Types of Articles

Research Section

Articles focus on research related to K-12 online and blended learning. Research articles can:

- Address online learning, catering particularly to the educators who research, practice, design, and/or administer in primary and secondary schooling in online settings. However, the journal also serves those educators who have chosen to blend online learning tools and strategies in their face-to-face classroom.

- Include qualitative, quantitative, and mixed methods research from multiple fields and disciplines that have a shared goal of improving primary and secondary education worldwide.

Research should be both theoretical and practical, with implications for research, policy, and practice. Each research article is critically reviewed by the editors and then undergoes a double-blind peer review process to ensure publication of rigorous and thoughtful research.

International Section

Articles focus on research related to online and blended learning with primary and secondary students in international contexts. Articles can include:

- State-of-a-nation reports that share trends related to policy, growth, pedagogy, promises, and challenges in areas related to primary and secondary (K-12) distance, online, and blended environments.

- Original, empirical research using qualitative, quantitative, or mixed methods research that features participants from outside the United States.

Research should focus discussion on cross-cultural connections that may have implications for global educational settings. Each article is critically reviewed by the editors. It then undergoes a double-blind peer review process with reviewers who have international experience or background to ensure publication of rigorous and thoughtful research.

Practitioner Corner

Practitioners’ personal experiences with teaching and learning can provide valuable information about the contexts to which some researchers expect their findings to apply. Articles in the Practitioner Corner section should present detailed explanations and reflections on educational innovations. Ideally, these articles document problems posed in specific contexts, strategies tried, outcomes, and reflections on learning. Taken together, these articles should reveal trends in educational needs and everyday factors that influence K-12 distance, online, and blended learning. Articles in the Practitioner Corner section should go beyond “Did it work?” to explore how interventions function and the boundaries of their scalability (i.e., how could it be implemented elsewhere, or what is stopping it from being implemented elsewhere?).

Articles in the Practitioner Corner section should contain a structured abstract using the format presented below. The body of the manuscript need not conform to the structure of the abstract.

- Context. Briefly summarize the context in which the intervention was implemented.

- Problem. Briefly state the practical learning or performance gap addressed by the intervention or other strategy and how the present intervention addresses the problem in a novel way.

- Intervention. Briefly describe the strategy or intervention, specifying why it addresses the practical problem and was thought to improve upon previous approaches.

- Outcomes. Briefly describe what happened to BOTH educational process AND outcomes when the intervention was implemented.

- Lessons Learned. Briefly summarize lessons learned that other educators could use when attempting to address a similar practical problem. Note that this is not a summary of impact but a reflection on what was learned about implementing the strategy.

Articles in the Practitioner Corner section must be at least 1000 words but should be no more than 3500 words. Articles submitted to the Practitioner Corner section will not be sent through a traditional blind review process but will undergo an editorial review or a review by a topical expert.

Inquiries should be sent to Mary Rice.

Book Reviews

JOLR reserves a section for the scholarly review of current books that contribute to the literature related to K-12 online and blended learning. The aim of our Book Reviews is to engage distance educators in sharing their perspectives about new publications that contribute to the field. Book reviews should be composed in the following manner:

- Heading and Signature – Book title, author name, location, publisher, date of publication, book edition, number of pages, and ISBN. Ensure that the reviewer’s name and institutional affiliation are included.

- Introduction – The review should begin with an introduction to the topic and an overview of the content of the book. Describe the background and qualifications of the author. Who is the author’s intended audience? What is the author’s purpose and/or main thesis?

- Organization/Structure – What is the organization/structure of the book? How accurate and current is the information presented? Does the evidence support the conclusions?

- Significance to the Field and Overall Impression – How current is the information presented? How effective is the author’s method of developing the information? What is your assessment of the book’s major strengths and weaknesses? How does it compare with other works on the same subject? Does the book make a meaningful contribution to the literature? What are your overall comments and conclusions about the book?

Authors should aim for 1000-1500 words.

Indexed in leading indices including: ERIC, LearnTechLib-The Learning and Technology Library, Index Copernicus, GetCited, Google Scholar, and several others.

- Open access

- Published: 09 January 2024

Online vs in-person learning in higher education: effects on student achievement and recommendations for leadership

- Bandar N. Alarifi 1 &

- Steve Song 2

Humanities and Social Sciences Communications, volume 11, Article number: 86 (2024)

- Science, technology and society

This study is a comparative analysis of online distance learning and traditional in-person education at King Saud University in Saudi Arabia, with a focus on understanding how different educational modalities affect student achievement. The justification for this study lies in the rapid shift towards online learning, especially highlighted by the educational changes during the COVID-19 pandemic. By analyzing the final test scores of freshman students in five core courses over the 2020 (in-person) and 2021 (online) academic years, the research provides empirical insights into the efficacy of online versus traditional education. Initial observations suggested that students in online settings scored lower in most courses. However, after adjusting for variables like gender, class size, and admission scores using multiple linear regression, a more nuanced picture emerged. Three courses showed better performance in the 2021 online cohort, one favored the 2020 in-person group, and one was unaffected by the teaching format. The study emphasizes the crucial need for a nuanced, data-driven strategy in integrating online learning within higher education systems. It brings to light the fact that the success of educational methodologies is highly contingent on specific contextual factors. This finding advocates for educational administrators and policymakers to exercise careful and informed judgment when adopting online learning modalities. It encourages them to thoroughly evaluate how different subjects and instructional approaches might interact with online formats, considering the variable effects these might have on learning outcomes. This approach ensures that decisions about implementing online education are made with a comprehensive understanding of its diverse and context-specific impacts, aiming to optimize educational effectiveness and student success.

Introduction

The year 2020 marked an extraordinary period, characterized by the global disruption caused by the COVID-19 pandemic. Governments and institutions worldwide had to adapt to unforeseen challenges across various domains, including health, economy, and education. In response, many educational institutions quickly transitioned to distance teaching (also known as e-learning, online learning, or virtual classrooms) to ensure continued access to education for their students. However, despite this rapid and widespread shift to online learning, a comprehensive examination of its effects on student achievement in comparison to traditional in-person instruction remains largely unexplored.

In research examining student outcomes in the context of online learning, the prevailing trend is the consistent observation that online learners often achieve less favorable results when compared to their peers in traditional classroom settings (e.g., Fischer et al., 2020; Bettinger et al., 2017; Edvardsson and Oskarsson, 2008). However, it is important to note that a significant portion of research on online learning has primarily focused on its potential impact (Kuhfeld et al., 2020; Azevedo et al., 2020; Di Pietro et al., 2020) or explored various perspectives (Aucejo et al., 2020; Radha et al., 2020) concerning distance education. These studies have often omitted a comprehensive and nuanced examination of its concrete academic consequences, particularly in terms of test scores and grades.

Given the dearth of research on the academic impact of online learning, especially in light of COVID-19 in the educational arena, the present study aims to address that gap by assessing the effectiveness of distance learning compared to in-person teaching in five required freshman-level courses at King Saud University, Saudi Arabia. To accomplish this objective, the current study compared the final exam results of 8297 freshman students who were enrolled in the five courses in person in 2020 to their 8425 first-year counterparts who had taken the same courses at the same institution in 2021 but in an online format.

The final test results of the five courses (i.e., University Skills 101, Entrepreneurship 101, Computer Skills 101, Computer Skills 102, and Fitness and Health Culture 101) were examined, accounting for potential confounding factors such as gender, class size, and admission scores, which have been cited in past research as correlated with student achievement (e.g., Meinck and Brese, 2019; Jepsen, 2015). Additionally, as the preparatory year at King Saud University is divided into five tracks (health, nursing, science, business, and humanity), the study classified students based on their respective disciplines.

Motivation for the study

The rapid expansion of distance learning in higher education, particularly highlighted during the recent COVID-19 pandemic (Volk et al., 2020 ; Bettinger et al., 2017 ), underscores the need for alternative educational approaches during crises. Such disruptions can catalyze innovation and the adoption of distance learning as a contingency plan (Christensen et al., 2015 ). King Saud University, like many institutions worldwide, faced the challenge of transitioning abruptly to online learning in response to the pandemic.

E-learning has gained prominence in higher education due to technological advancements, offering institutions a competitive edge (Valverde-Berrocoso et al., 2020 ). Especially during conditions like the COVID-19 pandemic, electronic communication was utilized across the globe as a feasible means to overcome barriers and enhance interactions (Bozkurt, 2019 ).

Distance learning, characterized by flexibility, becomes crucial when traditional in-person classes are hindered by unforeseen circumstances such as those posed by COVID-19 (Arkorful and Abaidoo, 2015). Scholars argue that it allows students to learn at their own pace, often referred to as self-directed learning (Hiemstra, 1994) or self-education (Gadamer, 2001). Additional advantages include accessibility, cost-effectiveness, and flexibility (Sadeghi, 2019).

However, distance learning is not immune to its own set of challenges. Technical impediments, encompassing network issues, device limitations, and communication hiccups, represent formidable hurdles (Sadeghi, 2019 ). Furthermore, concerns about potential distractions in the online learning environment, fueled by the ubiquity of the internet and social media, have surfaced (Hall et al., 2020 ; Ravizza et al., 2017 ). The absence of traditional face-to-face interactions among students and between students and instructors is also viewed as a potential drawback (Sadeghi, 2019 ).

Given the evolving understanding of the pros and cons of distance learning, this study aims to contribute to the existing literature by assessing the effectiveness of distance learning, specifically in terms of student achievement, as compared to in-person classroom learning at King Saud University, one of Saudi Arabia’s largest higher education institutions.

Academic achievement: in-person vs online learning

The primary driving force behind the rapid integration of technology in education has been its emphasis on student performance (Lai and Bower, 2019 ). Over the past decade, numerous studies have undertaken comparisons of student academic achievement in online and in-person settings (e.g., Bettinger et al., 2017 ; Fischer et al., 2020 ; Iglesias-Pradas et al., 2021 ). This section offers a concise review of the disparities in academic achievement between college students engaged in in-person and online learning, as identified in existing research.

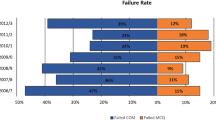

A number of studies point to the superiority of traditional in-person education over online learning in terms of academic outcomes. For example, Fischer et al. (2020) conducted a comprehensive study involving 72,000 university students across 433 subjects, revealing that online students tend to achieve slightly lower academic results than their in-class counterparts. Similarly, Bettinger et al. (2017) found that students at for-profit online universities generally underperformed when compared to their in-person peers. Supporting this trend, Figlio et al. (2013) indicated that in-person instruction consistently produced better results, particularly among specific subgroups like males, lower-performing students, and Hispanic learners. Additionally, Kaupp’s (2012) research in California community colleges demonstrated that online students faced lower completion and success rates compared to their traditional in-person counterparts (Fig. 1).

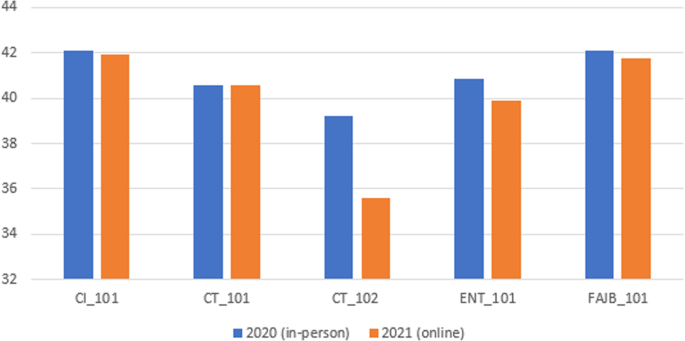

The figure compares student achievement in the final tests in the five courses by year, using independent-samples t-tests; the results show a statistically significant drop in test scores from 2020 (in person) to 2021 (online) for all courses except CT_101.

In contrast, other studies present evidence of online students outperforming their in-person peers. For example, Iglesias-Pradas et al. (2021) conducted a comparative analysis of 43 bachelor courses at Telecommunication Engineering College in Malaysia, revealing that online students achieved higher academic outcomes than their in-person counterparts. Similarly, during the COVID-19 pandemic, Gonzalez et al. (2020) found that students engaged in online learning performed better than those who had previously taken the same subjects in traditional in-class settings.

Expanding on this topic, several studies have reported mixed results when comparing the academic performance of online and in-person students, with various student and instructor factors emerging as influential variables. Chesser et al. (2020) noted that student traits such as conscientiousness, agreeableness, and extraversion play a substantial role in academic achievement, regardless of the learning environment, be it traditional in-person classrooms or online settings. Furthermore, Cacault et al. (2021) discovered that online students with higher academic proficiency tend to outperform those with lower academic capabilities, suggesting that differences in students’ academic abilities may impact their performance. In contrast, Bergstrand and Savage (2013) found that online classes received lower overall ratings and exhibited a less respectful learning environment when compared to in-person instruction. Nevertheless, they also observed that the teaching efficiency of both in-class and online courses varied significantly depending on the instructors’ backgrounds and approaches. These findings underscore the multifaceted nature of the online vs. in-person learning debate, highlighting the need for a nuanced understanding of the factors at play.

Theoretical framework

Constructivism is a well-established learning theory that places learners at the forefront of their educational experience, emphasizing their active role in constructing knowledge through interactions with their environment (Duffy and Jonassen, 2009 ). According to constructivist principles, learners build their understanding by assimilating new information into their existing cognitive frameworks (Vygotsky, 1978 ). This theory highlights the importance of context, active engagement, and the social nature of learning (Dewey, 1938 ). Constructivist approaches often involve hands-on activities, problem-solving tasks, and opportunities for collaborative exploration (Brooks and Brooks, 1999 ).

In the realm of education, subject-specific pedagogy emerges as a vital perspective that acknowledges the distinctive nature of different academic disciplines (Shulman, 1986 ). It suggests that teaching methods should be tailored to the specific characteristics of each subject, recognizing that subjects like mathematics, literature, or science require different approaches to facilitate effective learning (Shulman, 1987 ). Subject-specific pedagogy emphasizes that the methods of instruction should mirror the ways experts in a particular field think, reason, and engage with their subject matter (Cochran-Smith and Zeichner, 2005 ).

When applying these principles to the design of instruction for online and in-person learning environments, the significance of adapting methods becomes even more pronounced. Online learning often requires unique approaches due to its reliance on technology, asynchronous interactions, and potential for reduced social presence (Anderson, 2003 ). In-person learning, on the other hand, benefits from face-to-face interactions and immediate feedback (Allen and Seaman, 2016 ). Here, the interplay of constructivism and subject-specific pedagogy becomes evident.

Online learning. In an online environment, constructivist principles can be upheld by creating interactive online activities that promote exploration, reflection, and collaborative learning (Salmon, 2000 ). Discussion forums, virtual labs, and multimedia presentations can provide opportunities for students to actively engage with the subject matter (Harasim, 2017 ). By integrating subject-specific pedagogy, educators can design online content that mirrors the discipline’s methodologies while leveraging technology for authentic experiences (Koehler and Mishra, 2009 ). For instance, an online history course might incorporate virtual museum tours, primary source analysis, and collaborative timeline projects.

In-person learning. In a traditional brick-and-mortar classroom setting, constructivist methods can be implemented through group activities, problem-solving tasks, and in-depth discussions that encourage active participation (Jonassen et al., 2003 ). Subject-specific pedagogy complements this by shaping instructional methods to align with the inherent characteristics of the subject (Hattie, 2009). For instance, in a physics class, hands-on experiments and real-world applications can bring theoretical concepts to life (Hake, 1998 ).

In sum, the fusion of constructivism and subject-specific pedagogy offers a versatile approach to instructional design that adapts to different learning environments (Garrison, 2011 ). By incorporating the principles of both theories, educators can tailor their methods to suit the unique demands of online and in-person learning, ultimately providing students with engaging and effective learning experiences that align with the nature of the subject matter and the mode of instruction.

Course description

The Self-Development Skills Department at King Saud University (KSU) offers five mandatory freshman-level courses. These courses aim to foster advanced thinking skills and cultivate scientific research abilities in students. They do so by imparting essential skills, identifying higher-level thinking patterns, and facilitating hands-on experience in scientific research. The design of these classes is centered around aiding students’ smooth transition into university life. Brief descriptions of these courses are as follows:

University Skills 101 (CI 101) is a three-hour credit course designed to nurture essential academic, communication, and personal skills among all preparatory year students at King Saud University. The primary goal of this course is to equip students with the practical abilities they need to excel in their academic pursuits and navigate their university lives effectively. CI 101 comprises 12 sessions and is an integral part of the curriculum for all incoming freshmen, ensuring a standardized foundation for skill development.

Fitness and Health 101 (FAJB 101) is a one-hour credit course. FAJB 101 focuses on self-development skills related to physical health, covering personal health, nutrition, sports, preventive health, psychological health, reproductive health, and first aid. The course aims to motivate students’ learning through entertainment, sports activities, and physical exercises that help them maintain their health. This course is required for all incoming freshman students at King Saud University.

Entrepreneurship 101 (ENT 101) is a one-hour credit course. ENT 101 aims to develop students’ skills related to entrepreneurship. The course provides students with knowledge and skills to generate and transform ideas and innovations into practical commercial projects in business settings. The entrepreneurship course consists of 14 sessions and is taught only to students in the business track.

Computer Skills 101 (CT 101) is a three-hour credit course. It provides students with basic computer skills, e.g., components, operating systems, applications, and communication backup. The course explores data visualization, introductory-level modern programming with algorithms, and information security. CT 101 is taught to students in all tracks except the human track.

Computer Skills 102 (CT 102) is a three-hour credit course. It provides students with IT skills to use computers efficiently, develops their research and scientific skills, and increases their capability to design basic educational software. CT 102 focuses on operating systems such as Microsoft Office. This course is taught only to students in the human track.

Structure and activities

These courses range from one to three credit hours. A one-hour credit means that students attend one hour of class each week during the academic semester. The same arrangement applies to two- and three-credit-hour courses. The types of activities in each course are shown in Table 1.

At King Saud University, each semester spans 15 weeks in duration. The total number of semester hours allocated to each course serves as an indicator of its significance within the broader context of the academic program, including the diverse tracks available to students. Throughout the two years under study (i.e., 2020 and 2021), course placements (fall or spring), course content, and the organizational structure remained consistent and uniform.

Participants

The study’s data come from test scores of a cohort of 16,722 first-year college students enrolled at King Saud University in Saudi Arabia over the span of two academic years: 2020 and 2021. Among these students, 8297 were engaged in traditional, in-person learning in 2020, while 8425 had transitioned to online instruction for the same courses in 2021 due to the COVID-19 pandemic. In 2020, the student population consisted of 51.5% females and 48.5% males. However, in 2021, there was a reversal in these proportions, with female students accounting for 48.5% and male students comprising 51.5% of the total participants.

Regarding student enrollment in the five courses, Table 2 provides a detailed breakdown by average class size, admission scores, and the number of students enrolled in the courses during the two years covered by this study. While the total number of students in each course remained relatively consistent across the two years, there were noticeable fluctuations in average class sizes. Specifically, four out of the five courses experienced substantial increases in class size, with some nearly doubling in size (e.g., ENT_101 and CT_102), while one course (CT_101) showed a reduction in its average class size.

In this study, it must be noted that while some students enrolled in up to three different courses within the same academic year, none repeated the same exam in both years. Specifically, students who failed to pass their courses in 2020 were required to complete them in summer sessions and were consequently not included in this study’s dataset. To ensure clarity and precision in our analysis, the research focused exclusively on student test scores to evaluate and compare the academic effectiveness of online and traditional in-person learning methods. This approach was chosen to provide a clear, direct comparison of the educational impacts associated with each teaching format.

Descriptive analyses of the final exam scores for the two years (2020 and 2021) were conducted. Additionally, student outcomes in in-person classes in 2020 were compared with those of their online peers in 2021 using independent-samples t-tests. Subsequently, in order to address potential disparities between the two groups arising from variables such as gender, class size, and admission scores (which serve as an indicator of students’ academic aptitude and pre-enrollment knowledge), multiple regression analyses were conducted. In these multivariate analyses, outcomes of both in-person and online cohorts were assessed within their respective tracks. By controlling for these variables linked to student performance, the study aimed to ensure a comprehensive and equitable evaluation.
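The unadjusted comparison described above can be sketched in a few lines. This is only an illustration, not the authors' analysis code: the source does not say whether equal variances were assumed, so the sketch uses Welch's unequal-variance form of the independent-samples t-test, the helper name `welch_t` is hypothetical, and the scores are invented.

```python
import math
from statistics import mean, variance


def welch_t(sample_a, sample_b):
    """Return (t, df) for Welch's unequal-variance independent-samples t-test."""
    n_a, n_b = len(sample_a), len(sample_b)
    # Squared standard error of each group mean (sample variance uses n - 1).
    se2_a = variance(sample_a) / n_a
    se2_b = variance(sample_b) / n_b
    t = (mean(sample_a) - mean(sample_b)) / math.sqrt(se2_a + se2_b)
    # Welch-Satterthwaite approximation of the degrees of freedom.
    df = (se2_a + se2_b) ** 2 / (se2_a ** 2 / (n_a - 1) + se2_b ** 2 / (n_b - 1))
    return t, df


# Invented final-exam scores, NOT data from the study.
in_person_2020 = [78, 82, 75, 90, 85, 80]
online_2021 = [74, 79, 72, 88, 80, 77]

t_stat, dof = welch_t(in_person_2020, online_2021)
print(f"t = {t_stat:.3f}, df = {dof:.1f}")
```

A positive t here means the first group's mean is higher. The p-value would then be read from a t distribution with `df` degrees of freedom, which a statistics package normally handles.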

Study instrument

The study obtained students’ final exam scores for the years 2020 (in-person) and 2021 (online) from the school’s records office through their examination management system. In the preparatory year at King Saud University, final exams for all courses are developed by committees composed of faculty members from each department. To ensure valid comparisons, the final exam questions, crafted by departmental committees of professors, remained consistent and uniform for the two years under examination.

Table 3 provides a comprehensive assessment of the reliability of all five tests included in our analysis. These tests exhibit a strong degree of internal consistency, with Cronbach’s alpha coefficients ranging from 0.77 to 0.86. This consistently high internal consistency supports the reliability of the tests and their suitability for the study’s objectives.
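For readers unfamiliar with the statistic, Cronbach's alpha for a test with k items is k/(k - 1) multiplied by (1 minus the sum of the item variances divided by the variance of the total scores). The sketch below computes it for a small invented item-response matrix. The function name `cronbach_alpha` and the data are assumptions for illustration and have no connection to the study's exams.

```python
from statistics import variance


def cronbach_alpha(item_scores):
    """Cronbach's alpha for a list of rows (one per student, k item scores each)."""
    k = len(item_scores[0])
    # Transpose rows into per-item columns and take each item's sample variance.
    item_vars = [variance(column) for column in zip(*item_scores)]
    # Variance of each student's total score across all items.
    total_var = variance([sum(row) for row in item_scores])
    return k / (k - 1) * (1 - sum(item_vars) / total_var)


# Invented right/wrong (1/0) responses for 5 students on 4 items.
responses = [
    [1, 0, 1, 1],
    [1, 1, 1, 1],
    [0, 0, 1, 0],
    [0, 0, 0, 0],
    [1, 1, 1, 0],
]
alpha = cronbach_alpha(responses)
print(f"alpha = {alpha:.2f}")
```

Values closer to 1 indicate that the items vary together, which is what the reported range of 0.77 to 0.86 reflects.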

In terms of assessing test validity, content validity was ensured through a thorough review by university subject matter experts, resulting in test items that align well with the content domain and learning objectives. Additionally, criterion-related validity was established by correlating students’ admissions test scores with their final required freshman test scores in the five subject areas, showing a moderate and acceptable relationship (0.37 to 0.56) between the test scores and the external admissions test. Finally, construct validity was confirmed through reviews by experienced subject instructors, leading to improvements in test content. With guidance from university subject experts, construct validity was established, affirming the effectiveness of the final tests in assessing students’ subject knowledge at the end of their coursework.
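The criterion-related validity figures above are correlation coefficients. Assuming they are Pearson correlations (the source does not name the coefficient), the calculation looks like the sketch below. The helper `pearson_r` and both score lists are hypothetical.

```python
import math
from statistics import mean


def pearson_r(x, y):
    """Pearson product-moment correlation between two equal-length sequences."""
    mean_x, mean_y = mean(x), mean(y)
    # Sum of cross-products of deviations from the means.
    cross = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    # Square roots of the sums of squared deviations.
    spread_x = math.sqrt(sum((a - mean_x) ** 2 for a in x))
    spread_y = math.sqrt(sum((b - mean_y) ** 2 for b in y))
    return cross / (spread_x * spread_y)


# Invented admission-test and final-exam scores for six students.
admission = [70, 75, 80, 85, 90, 95]
final_exam = [72, 70, 81, 79, 88, 85]

r = pearson_r(admission, final_exam)
print(f"r = {r:.2f}")
```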

Collectively, these validity and reliability measures affirm the soundness and integrity of the final subject tests, establishing their suitability as effective assessment tools for evaluating students’ knowledge in their five mandatory freshman courses at King Saud University.

After obtaining research approval from the Research Committee at King Saud University, the coordinators of the five courses (CI_101, ENT_101, CT_101, CT_102, and FAJB_101) supplied the researchers with the final exam scores of all first-year preparatory year students at King Saud University for the initial semester of the academic years 2020 and 2021. The sample encompassed all students who had completed these five courses during both years, resulting in a total of 16,722 students forming the final group of participants.

Limitations

Several limitations warrant acknowledgment in this study. First, the research was conducted within a well-resourced major public university. As such, the experiences with online classes at other types of institutions (e.g., community colleges, private institutions) may vary significantly. Additionally, the limited data pertaining to in-class teaching practices and the diversity of learning activities across different courses represents a gap that could have provided valuable insights for a more thorough interpretation and explanation of the study’s findings.

To compare student achievement in the final tests in the five courses by year, independent-samples t-tests were conducted. Table 4 shows a statistically significant drop in test scores from 2020 (in person) to 2021 (online) for all courses except CT_101. The largest decline was in CT_102 (3.58 points), and the smallest was in CI_101 (0.18 points).

However, such a simple comparison of means between the two years (via t-tests) by subject does not account for the differences in gender composition, class size, and admission scores between the two academic years, all of which have been associated with student outcomes (e.g., Ho and Kelman, 2014; De Paola et al., 2013). To account for these potential confounding variables, multiple regressions were conducted to compare the two years’ results while controlling for these three factors associated with student achievement.
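To make the role of the control variables concrete, the sketch below shows how a raw difference in means and a regression-adjusted difference can point in opposite directions when the two cohorts differ on a covariate. It is an illustration under stated assumptions: only one control (admission score) is used, whereas the study also controls for gender and class size, which would enter as additional columns. The helper `ols` and all data are hypothetical, and the scores are constructed so that the built-in online advantage is +2 points.

```python
from statistics import mean


def ols(X, y):
    """Least-squares coefficients for y ~ X, solved via the normal equations."""
    k = len(X[0])
    # Augmented matrix [X'X | X'y]
    A = [[sum(r[i] * r[j] for r in X) for j in range(k)]
         + [sum(r[i] * yi for r, yi in zip(X, y))] for i in range(k)]
    # Gauss-Jordan elimination with partial pivoting
    for c in range(k):
        p = max(range(c, k), key=lambda r: abs(A[r][c]))
        A[c], A[p] = A[p], A[c]
        for r in range(k):
            if r != c:
                f = A[r][c] / A[c][c]
                A[r] = [a - f * b for a, b in zip(A[r], A[c])]
    return [A[i][k] / A[i][i] for i in range(k)]


# Hypothetical students, NOT the study's data: (online flag, admission score)
students = [(0, 80), (0, 85), (0, 90), (0, 95),
            (1, 60), (1, 65), (1, 70), (1, 75)]
# Constructed scores: true online effect of +2, plus 0.5 per admission point
scores = [40 + 2 * online + 0.5 * adm for online, adm in students]

online_scores = [s for (o, _), s in zip(students, scores) if o == 1]
inperson_scores = [s for (o, _), s in zip(students, scores) if o == 0]
raw_gap = mean(online_scores) - mean(inperson_scores)

# Columns: intercept, online indicator, admission score
X = [[1.0, o, a] for o, a in students]
intercept, online_effect, admission_effect = ols(X, scores)

print(f"raw online minus in-person gap: {raw_gap:.2f}")
print(f"adjusted online effect: {online_effect:.2f}")
```

In this constructed example the online cohort has lower admission scores, so its raw mean is lower even though the adjusted coefficient on the online indicator is positive. This is the kind of reversal that controlling for cohort composition can reveal.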

Table 5 presents the regression results, illustrating the variation in final exam scores between 2020 and 2021, while controlling for gender, class size, and admission scores. Importantly, these results diverge significantly from the outcomes obtained through the independent-samples t-test analyses.

Taking into consideration the variables mentioned earlier, students in the 2021 online cohort demonstrated superior performance compared to their 2020 in-person counterparts in CI_101, FAJB_101, and CT_101, with score advantages of 0.89, 0.56, and 5.28 points, respectively. Conversely, in the case of ENT_101, online students in 2021 scored 0.69 points lower than their 2020 in-person counterparts. With CT_102, there were no statistically significant differences in final exam scores between the two cohorts of students.

The study sought to assess the effectiveness of distance learning compared to in-person learning in the higher education setting in Saudi Arabia. We analyzed the final exam scores of 16,722 first-year college students at King Saud University in five required subjects (i.e., CI_101, ENT_101, CT_101, CT_102, and FAJB_101). The study first performed a simple comparison of mean scores by subject and year (via t-tests) and then ran a series of multiple regression analyses that controlled for class size, gender composition, and admission scores.

Overall, the study’s more in-depth findings using multiple regression painted a wholly different picture than the results obtained using t-tests. After controlling for class size, gender composition, and admissions scores, online students in 2021 performed better than their in-person peers in 2020 in University Skills (CI_101), Fitness and Health (FAJB_101), and Basic Computer Skills (CT_101), whereas in-person students outperformed their online peers in Entrepreneurship (ENT_101). There was no meaningful difference in outcomes for students in the Advanced Computer Skills (CT_102) course for the two years.

These findings raise the question: why do we observe minimal differences (less than a one-point gain or loss) in student outcomes in courses like University Skills, Fitness and Health, Entrepreneurship, and Advanced Computer Skills based on the mode of instruction? Is it possible that when subjects are primarily at a basic or introductory level, as is the case with these courses, the mode of instruction may have a limited impact as long as the concepts are effectively communicated in a manner familiar and accessible to students?

In today’s digital age, one could argue that students in more developed countries, such as Saudi Arabia, generally possess the skills and capabilities to effectively engage with materials presented in both in-person and online formats. However, there is a notable exception in the Basic Computer Skills course, where the online cohort outperformed their in-person counterparts by more than 5 points. Insights from interviews with the instructors of this course suggest that this result may be attributed to the course’s basic and conceptual nature, coupled with the availability of instructional videos that students could revisit at their own pace.

Given that students enter this course with varying levels of computer skills, self-paced learning may have allowed them to cover course materials at their preferred speed, concentrating on less familiar topics while swiftly progressing through concepts they already understood. The advantages of such self-paced learning have been documented by scholars like Tullis and Benjamin ( 2011 ), who found that self-paced learners often outperform those who spend the same amount of time studying identical materials. This approach allows learners to allocate their time more effectively according to their individual learning pace, providing greater ownership and control over their learning experience. As such, in courses like introductory computer skills, it can be argued that becoming familiar with fundamental and conceptual topics may not require extensive in-class collaboration. Instead, it may be more about exposure to and digestion of materials in a format and at a pace tailored to students with diverse backgrounds, knowledge levels, and skill sets.

Further investigation is needed to more fully understand why some classes benefitted from online instruction while others did not. Perhaps some content areas are more conducive to the in-person (or online) format than others. Or it could be that the different results of the two modes of learning were driven by students of varying academic abilities and engagement, with low-achieving students being more vulnerable to the limitations of online learning (e.g., Kofoed et al., 2021). Whatever the reasons, the results of the current study could be further illuminated by a more in-depth analysis of the various factors associated with these different forms of learning. Moreover, although not clear-cut, the current study does provide additional evidence against any dire consequences for student learning (at least in the higher education setting) from the sudden increase in online learning, while showcasing possible benefits of its wider use.

Based on the findings of this study, we recommend that educational leaders adopt a measured approach to online learning—a stance that neither fully embraces nor outright denounces it. The impact on students’ experiences and engagement appears to vary depending on the subjects and methods of instruction, sometimes hindering, other times promoting effective learning, while some classes remain relatively unaffected.

Rather than taking a one-size-fits-all approach, educational leaders should be open to exploring the nuances behind these outcomes. This involves examining why certain courses thrived with online delivery, while others either experienced a decline in student achievement or remained largely unaffected. By exploring these differentiated outcomes associated with diverse instructional formats, leaders in higher education institutions and beyond can make informed decisions about resource allocation. For instance, resources could be channeled towards in-person learning for courses that benefit from it, while simultaneously expanding online access for courses that have demonstrated improved outcomes in the virtual format. This strategic approach not only optimizes resource allocation but could also open up additional revenue streams for the institution.

Considering the enduring presence of online learning, both before the pandemic and through its accelerated adoption due to Covid-19, there is an increasing need for institutions of learning and scholars in higher education, as well as other fields, to prioritize the study of its effects and optimal utilization. This study, which compares student outcomes between two cohorts exposed to in-person and online instruction (before and during Covid-19) at the largest university in Saudi Arabia, represents a meaningful step in this direction.

Data availability

The datasets generated during and/or analyzed during the current study are available from the corresponding author upon reasonable request.

Allen IE, Seaman J (2016) Online report card: Tracking online education in the United States. Babson Survey Group

Anderson T (2003) Getting the mix right again: an updated and theoretical rationale for interaction. Int Rev Res Open Distrib Learn 4(2). https://doi.org/10.19173/irrodl.v4i2.149

Arkorful V, Abaidoo N (2015) The role of e-learning, advantages and disadvantages of its adoption in higher education. Int J Instruct Technol Distance Learn 12(1):29–42

Aucejo EM, French J, Araya MP, Zafar B (2020) The impact of COVID-19 on student experiences and expectations: Evidence from a survey. Journal of Public Economics 191:104271. https://doi.org/10.1016/j.jpubeco.2020.104271

Azevedo JP, Hasan A, Goldemberg D, Iqbal SA, Geven K (2020) Simulating the potential impacts of COVID-19 school closures on schooling and learning outcomes: a set of global estimates. World Bank Policy Research Working Paper

Bergstrand K, Savage SV (2013) The chalkboard versus the avatar: Comparing the effectiveness of online and in-class courses. Teach Sociol 41(3):294–306. https://doi.org/10.1177/0092055X13479949

Bettinger EP, Fox L, Loeb S, Taylor ES (2017) Virtual classrooms: How online college courses affect student success. Am Econ Rev 107(9):2855–2875. https://doi.org/10.1257/aer.20151193

Bozkurt A (2019) From distance education to open and distance learning: a holistic evaluation of history, definitions, and theories. Handbook of research on learning in the age of transhumanism, 252–273. https://doi.org/10.4018/978-1-5225-8431-5.ch016

Brooks JG, Brooks MG (1999) In search of understanding: the case for constructivist classrooms. Association for Supervision and Curriculum Development

Cacault MP, Hildebrand C, Laurent-Lucchetti J, Pellizzari M (2021) Distance learning in higher education: evidence from a randomized experiment. J Eur Econ Assoc 19(4):2322–2372. https://doi.org/10.1093/jeea/jvaa060

Chesser S, Murrah W, Forbes SA (2020) Impact of personality on choice of instructional delivery and students’ performance. Am J Distance Educ 34(3):211–223. https://doi.org/10.1080/08923647.2019.1705116

Christensen CM, Raynor M, McDonald R (2015) What is disruptive innovation? Harv Bus Rev 93(12):44–53

Cochran-Smith M, Zeichner KM (2005) Studying teacher education: the report of the AERA panel on research and teacher education. Choice Rev Online 43(4). https://doi.org/10.5860/choice.43-2338

De Paola M, Ponzo M, Scoppa V (2013) Class size effects on student achievement: heterogeneity across abilities and fields. Educ Econ 21(2):135–153. https://doi.org/10.1080/09645292.2010.511811

Dewey J (1938) Experience and education. Simon & Schuster

Di Pietro G, Biagi F, Costa P, Karpinski Z, Mazza J (2020) The likely impact of COVID-19 on education: reflections based on the existing literature and recent international datasets. Publications Office of the European Union, Luxembourg

Duffy TM, Jonassen DH (2009) Constructivism and the technology of instruction: a conversation. Routledge, Taylor & Francis Group

Edvardsson IR, Oskarsson GK (2008) Distance education and academic achievement in business administration: the case of the University of Akureyri. Int Rev Res Open Distrib Learn 9(3). https://doi.org/10.19173/irrodl.v9i3.542

Figlio D, Rush M, Yin L (2013) Is it live or is it internet? Experimental estimates of the effects of online instruction on student learning. J Labor Econ 31(4):763–784. https://doi.org/10.3386/w16089

Fischer C, Xu D, Rodriguez F, Denaro K, Warschauer M (2020) Effects of course modality in summer session: enrollment patterns and student performance in face-to-face and online classes. Internet Higher Educ 45:100710. https://doi.org/10.1016/j.iheduc.2019.100710

Gadamer HG (2001) Education is self‐education. J Philos Educ 35(4):529–538

Garrison DR (2011) E-learning in the 21st century: a framework for research and practice. Routledge. https://doi.org/10.4324/9780203838761

Gonzalez T, de la Rubia MA, Hincz KP, Comas-Lopez M, Subirats L, Fort S, Sacha GM (2020) Influence of COVID-19 confinement on students’ performance in higher education. PLOS One 15(10). https://doi.org/10.1371/journal.pone.0239490

Hake RR (1998) Interactive-engagement versus traditional methods: a six-thousand-student survey of mechanics test data for introductory physics courses. Am J Phys 66(1):64–74. https://doi.org/10.1119/1.18809

Hall ACG, Lineweaver TT, Hogan EE, O’Brien SW (2020) On or off task: the negative influence of laptops on neighboring students’ learning depends on how they are used. Comput Educ 153:1–8. https://doi.org/10.1016/j.compedu.2020.103901

Harasim L (2017) Learning theory and online technologies. Routledge. https://doi.org/10.4324/9780203846933

Hiemstra R (1994) Self-directed learning. In WJ Rothwell & KJ Sensenig (Eds), The sourcebook for self-directed learning (pp 9–20). HRD Press

Ho DE, Kelman MG (2014) Does class size affect the gender gap? A natural experiment in law. J Legal Stud 43(2):291–321

Iglesias-Pradas S, Hernández-García Á, Chaparro-Peláez J, Prieto JL (2021) Emergency remote teaching and students’ academic performance in higher education during the COVID-19 pandemic: a case study. Comput Hum Behav 119:106713. https://doi.org/10.1016/j.chb.2021.106713

Jepsen C (2015) Class size: does it matter for student achievement? IZA World of Labor. https://doi.org/10.15185/izawol.190

Jonassen DH, Howland J, Moore J, Marra RM (2003) Learning to solve problems with technology: a constructivist perspective (2nd ed). Columbus: Prentice Hall

Kaupp R (2012) Online penalty: the impact of online instruction on the Latino-White achievement gap. J Appli Res Community Coll 19(2):3–11. https://doi.org/10.46569/10211.3/99362

Koehler MJ, Mishra P (2009) What is technological pedagogical content knowledge? Contemp Issues Technol Teacher Educ 9(1):60–70

Kofoed M, Gebhart L, Gilmore D, Moschitto R (2021) Zooming to class?: Experimental evidence on college students’ online learning during COVID-19. SSRN Electron J. https://doi.org/10.2139/ssrn.3846700

Kuhfeld M, Soland J, Tarasawa B, Johnson A, Ruzek E, Liu J (2020) Projecting the potential impact of COVID-19 school closures on academic achievement. Educ Res 49(8):549–565. https://doi.org/10.3102/0013189x20965918

Lai JW, Bower M (2019) How is the use of technology in education evaluated? A systematic review. Comput Educ 133:27–42

Meinck S, Brese F (2019) Trends in gender gaps: using 20 years of evidence from TIMSS. Large-Scale Assess Educ 7(1). https://doi.org/10.1186/s40536-019-0076-3

Radha R, Mahalakshmi K, Kumar VS, Saravanakumar AR (2020) E-Learning during lockdown of COVID-19 pandemic: a global perspective. Int J Control Autom 13(4):1088–1099

Ravizza SM, Uitvlugt MG, Fenn KM (2017) Logged in and zoned out: How laptop Internet use relates to classroom learning. Psychol Sci 28(2):171–180. https://doi.org/10.1177/095679761667731

Sadeghi M (2019) A shift from classroom to distance learning: advantages and limitations. Int J Res Engl Educ 4(1):80–88

Salmon G (2000) E-moderating: the key to teaching and learning online. Routledge. https://doi.org/10.4324/9780203816684

Shulman LS (1986) Those who understand: knowledge growth in teaching. Educ Res 15(2):4–14

Shulman LS (1987) Knowledge and teaching: foundations of the new reform. Harv Educ Rev 57(1):1–22

Tullis JG, Benjamin AS (2011) On the effectiveness of self-paced learning. J Mem Lang 64(2):109–118. https://doi.org/10.1016/j.jml.2010.11.002

Valverde-Berrocoso J, Garrido-Arroyo MDC, Burgos-Videla C, Morales-Cevallos MB (2020) Trends in educational research about e-learning: a systematic literature review (2009–2018). Sustainability 12(12):5153

Volk F, Floyd CG, Shaler L, Ferguson L, Gavulic AM (2020) Active duty military learners and distance education: factors of persistence and attrition. Am J Distance Educ 34(3):1–15. https://doi.org/10.1080/08923647.2019.1708842

Vygotsky LS (1978) Mind in society: the development of higher psychological processes. Harvard University Press

Author information

Authors and affiliations

Department of Sports and Recreation Management, King Saud University, Riyadh, Saudi Arabia

Bandar N. Alarifi

Division of Research and Doctoral Studies, Concordia University Chicago, 7400 Augusta Street, River Forest, IL, 60305, USA

Steve Song

Contributions

Dr. Bandar Alarifi collected and organized data for the five courses and wrote the manuscript. Dr. Steve Song analyzed and interpreted the data regarding student achievement and revised the manuscript. These authors jointly supervised this work and approved the final manuscript.

Corresponding author

Correspondence to Bandar N. Alarifi.

Ethics declarations

Competing interests

The author declares no competing interests.

Ethical approval

This study was approved by the Research Ethics Committee at King Saud University on 25 March 2021 (No. 4/4/255639). This research does not involve the collection or analysis of data that could be used to identify participants (including email addresses or other contact details). All information is anonymized and the submission does not include images that may identify the person. The procedures used in this study adhere to the tenets of the Declaration of Helsinki.

Informed consent

This article does not contain any studies with human participants performed by any of the authors.

Additional information

Publisher’s note Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons license, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons license and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this license, visit http://creativecommons.org/licenses/by/4.0/ .

About this article

Cite this article

Alarifi, B.N., Song, S. Online vs in-person learning in higher education: effects on student achievement and recommendations for leadership. Humanit Soc Sci Commun 11, 86 (2024). https://doi.org/10.1057/s41599-023-02590-1

Received: 07 June 2023

Accepted: 21 December 2023

Published: 09 January 2024

DOI: https://doi.org/10.1057/s41599-023-02590-1

Impact of online classes on the satisfaction and performance of students during the pandemic period of COVID 19

- Published: 21 April 2021

- Volume 26, pages 6923–6947 (2021)

- Ram Gopal 1 ,

- Varsha Singh 1 &

- Arun Aggarwal ORCID: orcid.org/0000-0003-3986-188X 2

The aim of the study is to identify the factors affecting students’ satisfaction and performance regarding online classes during the pandemic period of COVID-19 and to establish the relationship between these variables. The study is quantitative in nature, and the data were collected through an online survey from 544 respondents who were studying business management (B.B.A. or M.B.A.) or hotel management courses in Indian universities. Structural equation modeling was used to test the proposed hypotheses. The results show that the four independent factors used in the study, viz. quality of instructor, course design, prompt feedback, and expectation of students, positively impact students’ satisfaction, and that students’ satisfaction in turn positively impacts students’ performance. For educational management, these four factors are essential for achieving a high level of satisfaction and performance in online courses. The study was conducted during the COVID-19 pandemic to examine the effect of online teaching on students’ performance.

1 Introduction

Coronaviruses are a group of viruses that cause illnesses with symptoms such as cough, cold, sneezing, fever, and other respiratory problems (WHO, 2019). The disease is contagious and is spreading very fast among human beings. COVID-19 is caused by a new strain that originated in Wuhan, China, in December 2019. Coronaviruses circulate in animals, but some of them can be transmitted between animals and humans (Perlman & McIntosh, 2020). As of March 28, 2020, according to the MoHFW, a total of 909 confirmed COVID-19 cases (862 Indians and 47 foreign nationals) had been reported in India (Centers for Disease Control and Prevention, 2020). Officially, no vaccine or medicine has been evaluated to cure or stop the spread of COVID-19 (Yu et al., 2020). The COVID-19 pandemic has led to widespread closures of schools and colleges worldwide. On March 24, India declared a country-wide lockdown of schools and colleges (NDTV, 2020) to prevent the transmission of the coronavirus among students (Bayham & Fenichel, 2020). School closures in response to the COVID-19 pandemic have shed light on several issues affecting access to education. As COVID-19 cases soar, huge numbers of children, youths, and adults cannot attend schools and colleges (UNESCO, 2020). Lah and Botelho (2012) contended that the effect of school closing on students’ performance is unclear.

Similarly, school closing may also affect students because of the disruption of teacher and student networks, leading to poor performance. Bridge (2020) reported that schools and colleges are moving towards educational technologies for student learning to ease the strain during the pandemic. Hence, the present study’s objective is to develop and test a conceptual model of students’ satisfaction with online teaching during COVID-19, when both students and teachers have no option other than to use online platforms for uninterrupted learning and teaching.

UNESCO recommends distance learning programs and open educational applications that schools and teachers can use during COVID-19 school closures to teach their pupils and limit the interruption of education. Therefore, many institutes have opted for online classes (Shehzadi et al., 2020).

The e-learning framework has been increasingly used as a versatile platform for learning and teaching (Salloum & Shaalan, 2018). E-learning is defined as a new paradigm of online learning based on information technology (Moore et al., 2011). Academics, educators, and other practitioners are eager to know how e-learning, in contrast to traditional learning, can produce better outcomes and academic achievements. The answer can be sought only by analyzing student satisfaction and performance.

Many comparative studies have explored whether face-to-face (traditional) teaching or online or hybrid learning is more productive (Lockman & Schirmer, 2020; Pei & Wu, 2019; González-Gómez et al., 2016). Results of the studies show that the students perform much better in online learning than in traditional learning. Henriksen et al. (2020) highlighted the problems faced by educators while shifting from offline to online mode of teaching. In the past, several research studies had been carried out on online learning to explore student satisfaction, acceptance of e-learning, distance learning success factors, and learning efficiency (Sher, 2009; Lee, 2014; Yen et al., 2018). However, little literature is available on the factors that affect students’ satisfaction and performance in online classes during the pandemic of Covid-19 (Rajabalee & Santally, 2020). In the present study, the authors propose that course design, quality of the instructor, prompt feedback, and students’ expectations are the four prominent determinants of learning outcome and satisfaction of the students during online classes (Lee, 2014).

Course design refers to curriculum knowledge, program organization, instructional goals, and course structure (Wright, 2003). If well planned, course design increases pupils’ satisfaction with the system (Almaiah & Alyoussef, 2019). Mtebe and Raisamo (2014) proposed that effective course design helps improve performance through learners’ knowledge and skills (Khan & Yildiz, 2020; Mohammed et al., 2020). If the course is not designed effectively, it might lead to low usage of e-learning platforms by teachers and students (Almaiah & Almulhem, 2018). On the other hand, if the course is designed effectively, it will lead to higher acceptance of the e-learning system by students, and their performance also increases (Mtebe & Raisamo, 2014). Hence, to prepare these courses for online learning, many instructors who are teaching blended courses for the first time are likely to require a complete overhaul of their courses (Bersin, 2004; Ho et al., 2006).

The second factor, instructor quality, plays an essential role in students’ satisfaction in online classes. A quality instructor is a professional who understands the students’ educational needs, has unique teaching skills, and understands how to meet the students’ learning needs (Luekens et al., 2004). Marsh (1987) developed five instruments for measuring the instructor’s quality, of which the main one was Students’ Evaluation of Educational Quality (SEEQ). SEEQ is considered one of the most commonly used and widely accepted methods (Grammatikopoulos et al., 2014). It provides a very useful way of using student feedback to measure the instructor’s quality (Marsh, 1987).

The third factor that improves the student’s satisfaction level is prompt feedback (Kinicki et al., 2004 ). Feedback is defined as information given by lecturers and tutors about the performance of students. Within this context, feedback is a “consequence of performance” (Hattie & Timperley, 2007 , p. 81). In education, “prompt feedback can be described as knowing what you know and what you do not related to learning” (Simsek et al., 2017 , p.334). Christensen ( 2014 ) studied linking feedback to performance and introduced the positivity ratio concept, which is a mechanism that plays an important role in finding out the performance through feedback. It has been found that prompt feedback helps in developing a strong linkage between faculty and students which ultimately leads to better learning outcomes (Simsek et al., 2017 ; Chang, 2011 ).

The fourth factor is students’ expectation. Appleton-Knapp and Krentler (2006) measured the impact of students’ expectations on their performance and pinpointed that student expectation is important. When students’ expectations are met, this leads to a higher level of satisfaction (Bates & Kaye, 2014). These findings were backed by an earlier research model, the “Student Satisfaction Index Model” (Zhang et al., 2008). However, when students’ expectations are not fulfilled, this might lead to lower learning and satisfaction with the course. Student satisfaction is defined as students’ ability to compare the desired benefit with the observed effect of a particular product or service (Budur et al., 2019). Students whose grade expectations are high will show higher satisfaction than those with lower grade expectations.

Scrutiny of the literature shows that although different researchers have examined the factors affecting student satisfaction, no study has examined the effect of course design, quality of the instructor, prompt feedback, and students’ expectations on students’ satisfaction with online classes during the pandemic period of Covid-19. Therefore, this study explores the factors that affect students’ satisfaction and performance regarding online classes during the pandemic period of COVID-19. The pandemic compelled educational institutions, including teachers and learners, to move to an online mode with which they were not acquainted, and students were not mentally prepared for such a shift. This research therefore examines which factors affect students and how students perceived these changes, as reflected in their satisfaction level.

This paper is structured as follows: The second section provides a description of theoretical framework and the linkage among different research variables and accordingly different research hypotheses were framed. The third section deals with the research methodology of the paper as per APA guideline. The outcomes and corresponding results of the empirical analysis are then discussed. Lastly, the paper concludes with a discussion and proposes implications for future studies.

2 Theoretical framework

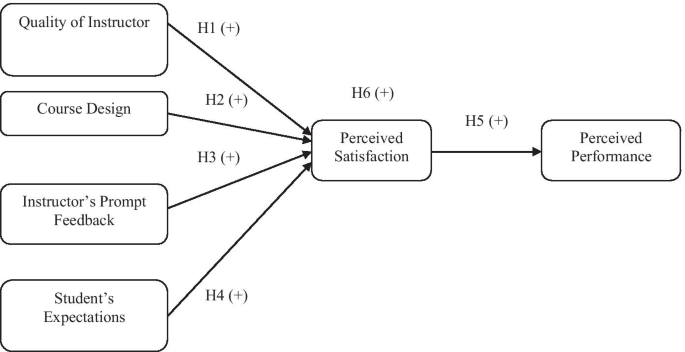

Achievement goal theory (AGT) is commonly used to understand students’ performance; it was proposed by four scholars, Carole Ames, Carol Dweck, Martin Maehr, and John Nicholls, in the late 1970s (Elliot, 2005). Elliott & Dweck (1988, p. 11) state that “an achievement goal involves a program of cognitive processes that have cognitive, affective and behavioral consequence”. This theory suggests that students’ motivation and achievement-related behaviors can be understood through the purposes and reasons they adopt while engaged in learning activities (Dweck & Leggett, 1988; Ames, 1992; Urdan, 1997). Some studies hold that there are four approaches to achieving a goal, i.e., mastery-approach, mastery-avoidance, performance-approach, and performance-avoidance (Pintrich, 1999; Elliot & McGregor, 2001; Schwinger & Stiensmeier-Pelster, 2011; Hansen & Ringdal, 2018; Mouratidis et al., 2018). The environment also affects the performance of students (Ames & Archer, 1988). Traditionally, classroom teaching is an effective method to achieve the goal (Ames & Archer, 1988; Ames, 1992; Clayton et al., 2010); however, in the modern era, internet-based teaching is also an effective tool for delivering lectures, and web-based applications are becoming modern classrooms (Azlan et al., 2020). Hence, the following section discusses the relationships between the independent and dependent variables (Fig. 1).

Fig. 1 Proposed model

3 Hypotheses development

3.1 Quality of the instructor and satisfaction of the students

An instructor who shows great enthusiasm for students’ learning has a positive impact on their satisfaction. Instructor quality is one of the most critical determinants of student satisfaction and shapes the outcome of the education process (Munteanu et al., 2010; Arambewela & Hall, 2009; Ramsden, 1991). If the teacher delivers the course effectively and influences students to do better in their studies, this leads to student satisfaction and enhances the learning process (Ladyshewsky, 2013). Furthermore, the instructor’s understanding of learners’ needs also ensures student satisfaction (Kauffman, 2015). Hence the hypothesis that the quality of instructor significantly affects the satisfaction of the students was included in this study.

H1: The quality of the instructor positively affects the satisfaction of the students.

3.2 Course design and satisfaction of students

The technological design of a course strongly influences students’ learning and satisfaction through their course expectations (Liaw, 2008; Lin et al., 2008). Active course design yields more effective student outcomes than traditional design (Black & Kassaye, 2014). Learning style is essential for effective course design (Wooldridge, 1995). When designing an online course, it is essential to keep in mind that the experience is being created for students with different learning styles. Similarly, Jenkins (2015) highlighted that course design attributes can be developed and employed to enhance student success. Hence, the hypothesis that course design significantly affects students’ satisfaction was included in this study.

H2: Course design positively affects the satisfaction of students.

3.3 Prompt feedback and satisfaction of students

This study also examines the influence of prompt feedback on satisfaction. Feedback gives students information about how effectively they are performing (Chang, 2011; Grebennikov & Shah, 2013; Simsek et al., 2017). Prompt feedback enhances the student learning experience (Brownlee et al., 2009) and boosts satisfaction (O'Donovan, 2017). It also serves as a self-evaluation tool with which students can improve their performance (Rogers, 1992). Eraut (2006) highlighted the impact of feedback on future practice and on the development of student learning. Good feedback practice benefits student learning and helps teachers improve students’ learning experience (Yorke, 2003). Hence, the hypothesis that prompt feedback significantly affects satisfaction was included in this study.

H3: Prompt feedback positively affects the satisfaction of students.

3.4 Expectations and satisfaction of students

Expectation is a crucial factor that directly influences student satisfaction. Expectation Disconfirmation Theory (EDT) (Oliver, 1980) has been used to determine the level of satisfaction based on expectations (Schwarz & Zhu, 2015). Attending to students’ expectations is the best way to improve their satisfaction (Brown et al., 2014). Recognizing student expectations makes it possible to raise the satisfaction level (ICSB, 2015). Finally, the positive approach used in many online learning classes has been shown to place high expectations on learners (Gold, 2011) and has led to successful outcomes. Hence, the hypothesis that students’ expectations significantly affect satisfaction was included in this study.

H4: Expectations of the students positively affect the satisfaction of students.

3.5 Satisfaction and performance of the students

Zeithaml (1988) describes satisfaction as the outcome of the performance of any educational institute. According to Kotler and Clarke (1986), satisfaction is the desired outcome of any aim that fulfils an individual’s aspirations. Quality interactions between instructor and students lead to student satisfaction (Malik et al., 2010; Martínez-Argüelles et al., 2016). Teaching quality and course material enhance student satisfaction through successful outcomes (Sanderson, 1995). Satisfaction relates to student performance in terms of motivation, learning, assurance, and retention (Biner et al., 1996). Mensink and King (2020) described performance as the result of joint student-teacher effort, and as an indicator of students’ interest in their studies. The critical element in education is students’ academic performance (Rono, 2013). It is therefore considered the central pillar around which the entire education system revolves. Narad and Abdullah (2016) concluded that students’ academic performance determines the success or failure of academic institutions.

Singh et al. (2016) asserted that students’ academic performance directly influences a country’s socio-economic development. Farooq et al. (2011) highlight that students’ academic performance is the primary concern of all faculties. Additionally, students’ academic performance is the main foundation for gaining knowledge and improving skills. According to Narad and Abdullah (2016), regular evaluations or examinations over a specific period of time are essential in assessing students’ academic performance for better outcomes. Hence, the hypothesis that satisfaction significantly affects the performance of the students was included in this study.

H5: Students’ satisfaction positively affects the performance of the students.

3.6 Satisfaction as mediator

Sibanda et al. (2015) applied goal theory to examine the factors influencing students’ academic performance, shedding light on the importance students attach to their satisfaction and academic achievement. According to this theory, students perform well if they know which factors affect their performance. Among the variables above, institutional factors that influence student performance through satisfaction include course design and quality of the instructor (DeBourgh, 2003; Lado et al., 2003), as well as prompt feedback and expectation (Fredericksen et al., 2000). Hence, the hypothesis that quality of the instructor, course design, prompt feedback, and student expectations significantly affect students’ performance through satisfaction was included in this study.

H6: Quality of the instructor, course design, prompt feedback, and students’ expectations affect the students’ performance through satisfaction.

H6a: Students’ satisfaction mediates the relationship between quality of the instructor and students’ performance.

H6b: Students’ satisfaction mediates the relationship between course design and students’ performance.

H6c: Students’ satisfaction mediates the relationship between prompt feedback and students’ performance.

H6d: Students’ satisfaction mediates the relationship between students’ expectations and students’ performance.
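One common way to read mediation hypotheses such as H6a to H6d is as a product of two paths: predictor to mediator (a) and mediator to outcome (b). The sketch below illustrates that idea with the Sobel approximation. It is not the SEM procedure run in AMOS for this study, the function name sobel_test is hypothetical, and the path estimates and standard errors are invented for illustration only.

```python
import math

def sobel_test(a, se_a, b, se_b):
    """Return (indirect effect, Sobel z, two-sided p) for a mediated path.

    a, se_a: estimate and standard error of predictor -> mediator
             (e.g. instructor quality -> satisfaction)
    b, se_b: estimate and standard error of mediator -> outcome
             (e.g. satisfaction -> performance)
    """
    indirect = a * b
    # First-order (Sobel) standard error of the product a*b.
    se_indirect = math.sqrt(b ** 2 * se_a ** 2 + a ** 2 * se_b ** 2)
    z = indirect / se_indirect
    # Two-sided p-value under the standard normal distribution.
    p = math.erfc(abs(z) / math.sqrt(2))
    return indirect, z, p

# Hypothetical values, NOT results from this study.
indirect, z, p = sobel_test(a=0.40, se_a=0.05, b=0.50, se_b=0.06)
print(f"indirect effect = {indirect:.3f}, z = {z:.2f}, p = {p:.4f}")
```

In practice, bootstrapped confidence intervals for the indirect effect are often preferred over the Sobel test, because the product a*b is not normally distributed in small samples.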

4.1 Participants

In this cross-sectional study, data were collected from 544 respondents enrolled in management (B.B.A. or M.B.A.) and hotel management courses. A purposive sampling technique was used. Descriptive statistics show that 48.35% of the respondents were BBA or MBA students and the rest were hotel management students. Male students made up 71% of the sample and female students 29%, so male respondents outnumbered female respondents by more than two to one. Ages ranged from 18 to 35. The dominant group, aged 18 to 22, comprised the undergraduate students (94%); the remaining 6% were postgraduate students.

4.2 Materials

The research instrument consists of two sections. The first section covers demographic variables such as discipline, gender, age group, and education level (undergraduate or postgraduate). The second section measures six factors: instructor’s quality, course design, prompt feedback, student expectations, satisfaction, and performance. These attributes were taken from previous studies (Yin & Wang, 2015; Bangert, 2004; Chickering & Gamson, 1987; Wilson et al., 1997). “Instructor quality” was measured with the seven-item scale developed by Bangert (2004). The “course design” and “prompt feedback” items were adapted from Bangert (2004); the “course design” scale consists of six items and the “prompt feedback” scale of five items. The “students’ expectation” scale consists of five items, four adapted from Bangert (2004) and one taken from Wilson et al. (1997). Students’ satisfaction was measured with six items taken from Bangert (2004), Wilson et al. (1997), and Yin and Wang (2015). “Students’ performance” was measured with the six-item scale developed by Wilson et al. (1997). All variables were assessed on a five-point Likert scale, ranging from 1 (strongly disagree) to 5 (strongly agree). Only students from India took part in the survey. A total of thirty-four questions were asked in the study to check the effect of the first four variables on students’ satisfaction and performance. For full details of the questionnaire, refer to Appendix Table 6.

The study used a descriptive research design. Instructor quality, course design, prompt feedback, and students’ expectations were the independent variables. Students’ satisfaction was the mediator, and students’ performance was the dependent variable.

4.4 Procedure

In this cross-sectional research, the respondents were selected through judgment sampling. They were informed about the objective of the study and the information-gathering process. They were assured of the confidentiality of the data, and no incentive was given to them for participating. The information used for this study was gathered through an online survey. The questionnaire was built in Google Forms and circulated by email. Students were also asked to write the name of their college, and students from fifteen colleges across India completed the survey. The data were collected during the COVID-19 pandemic, in the period of total lockdown in India. This was a suitable time to collect data on the current research topic because all colleges across India were conducting online classes. Students therefore had enough time to understand the instrument and respond to the questionnaire effectively. A total of 615 questionnaires were circulated, of which students returned 574. Thirty responses were excluded because they were unengaged. Finally, 544 questionnaires were used in the present investigation. The sample included both male and female students from different age groups and courses, i.e., undergraduate and postgraduate students of management and hotel management.
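The sample accounting in this procedure can be checked with a few lines of arithmetic. The counts below are the ones reported in the text; the variable names are illustrative, and the two rates are derived here rather than reported by the authors.

```python
# Counts reported in the Procedure section.
circulated = 615   # questionnaires sent out
returned = 574     # questionnaires returned by students
unengaged = 30     # responses excluded as unengaged

usable = returned - unengaged
response_rate = returned / circulated
usable_rate = usable / circulated

print(f"usable responses: {usable}")             # 544
print(f"response rate:    {response_rate:.1%}")  # 93.3%
print(f"usable rate:      {usable_rate:.1%}")    # 88.5%
```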

5.1 Exploratory factor analysis (EFA)

SPSS and AMOS were used to analyze the data. First, to extract the distinct factors, an exploratory factor analysis (EFA) with VARIMAX rotation was performed on the sample of 544. The exploratory analysis rendered six distinct factors. Factor one was named quality of instructor; its items included “The instructor communicated effectively”, “The instructor was enthusiastic about online teaching” and “The instructor was concerned about student learning”. Factor two was labeled course design; its items included “The course was well organized”, “The course was designed to allow assignments to be completed across different learning environments.” and “The instructor facilitated the course effectively”. Factor three was labeled prompt feedback; its items included “The instructor responded promptly to my questions about the use of Webinar” and “The instructor responded promptly to my questions about general course requirements”. Factor four was students’ expectations; its items included “The instructor provided models that clearly communicated expectations for weekly group assignments” and “The instructor used good examples to explain statistical concepts”. Factor five was students’ satisfaction; its items included “The online classes were valuable” and “Overall, I am satisfied with the quality of this course”. Factor six was students’ performance; its items included “The online classes has sharpened my analytic skills” and “Online classes really tries to get the best out of all its students”. Together, these six factors explained 67.784% of the total variance. To validate the factors extracted through EFA, the researcher performed confirmatory factor analysis (CFA) in AMOS. Finally, structural equation modeling (SEM) was used to test the hypothesized relationships.
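To show what a varimax rotation does to a loading matrix, here is a minimal pure-Python sketch using Kaiser's pairwise planar rotations. It is not the SPSS routine used in the study: statistical packages often apply Kaiser normalization before rotating, which is omitted here. The function names and the two-factor loading matrix are hypothetical and are not taken from Table 1.

```python
import math

def varimax_criterion(loadings):
    """Raw varimax criterion: sum over factors of the variance of squared loadings."""
    p, k = len(loadings), len(loadings[0])
    total = 0.0
    for j in range(k):
        squared = [row[j] ** 2 for row in loadings]
        mean = sum(squared) / p
        total += sum((s - mean) ** 2 for s in squared) / p
    return total

def varimax(loadings, max_sweeps=50, tol=1e-10):
    """Rotate a loading matrix (list of rows) by repeated pairwise planar rotations."""
    L = [row[:] for row in loadings]  # work on a copy
    p, k = len(L), len(L[0])
    for _ in range(max_sweeps):
        largest_angle = 0.0
        for j in range(k - 1):
            for m in range(j + 1, k):
                u = [row[j] ** 2 - row[m] ** 2 for row in L]
                v = [2 * row[j] * row[m] for row in L]
                A, B = sum(u), sum(v)
                C = sum(ui * ui - vi * vi for ui, vi in zip(u, v))
                D = 2 * sum(ui * vi for ui, vi in zip(u, v))
                # Angle that maximizes the criterion for this pair of factors.
                phi = 0.25 * math.atan2(D - 2 * A * B / p, C - (A * A - B * B) / p)
                largest_angle = max(largest_angle, abs(phi))
                c, s = math.cos(phi), math.sin(phi)
                for row in L:
                    x, y = row[j], row[m]
                    row[j] = x * c + y * s
                    row[m] = -x * s + y * c
        if largest_angle < tol:
            break
    return L

# Hypothetical unrotated loadings: six items on two factors.
unrotated = [
    [0.70, 0.40], [0.65, 0.45], [0.72, 0.38],
    [0.68, -0.42], [0.66, -0.45], [0.70, -0.40],
]
rotated = varimax(unrotated)
for row in rotated:
    print([round(value, 2) for value in row])
```

The rotation leaves each item's communality (the row sum of squared loadings) unchanged; it only redistributes variance so that each item loads mainly on one factor.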

5.2 Measurement model

Table 1 summarizes the findings of the EFA and CFA. The EFA rendered six distinct factors, and the CFA validated them. Table 2 shows that the proposed measurement model achieved good convergent validity (Aggarwal et al., 2018a, b). The confirmatory factor analysis showed that the standardized factor loadings were statistically significant at the 0.05 level. Further, the measurement model showed acceptable fit indices: CMIN = 710.709; df = 480; CMIN/df = 1.481, p < .001; Incremental Fit Index (IFI) = 0.979; Tucker-Lewis Index (TLI) = 0.976; Goodness of Fit Index (GFI) = 0.928; Adjusted Goodness of Fit Index (AGFI) = 0.916; Comparative Fit Index (CFI) = 0.978; Root Mean Square Residual (RMR) = 0.042; and Root Mean Squared Error of Approximation (RMSEA) = 0.030.
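Two of the reported indices can be reproduced directly from the chi-square statistic (CMIN), the degrees of freedom, and the sample size. The sketch below uses a commonly cited RMSEA formula with N - 1 in the denominator; whether AMOS uses exactly this variant is an assumption, although both N and N - 1 give the same value at three decimals here. The function name rmsea is illustrative.

```python
import math

def rmsea(chi_square, df, n):
    """Root mean squared error of approximation from chi-square, df, and sample size."""
    return math.sqrt(max(chi_square - df, 0.0) / (df * (n - 1)))

# Values reported for the measurement model.
cmin, df, n = 710.709, 480, 544

ratio = cmin / df
error = rmsea(cmin, df, n)

print(f"CMIN/df = {ratio:.3f}")  # 1.481
print(f"RMSEA   = {error:.3f}")  # 0.030
```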

For discriminant validity, the Average Variance Extracted (AVE) of each latent variable should exceed its squared correlations with all other latent variables. Discriminant validity is confirmed (Table 2) because the square root of each AVE is greater than the corresponding inter-construct correlation coefficients (Hair et al., 2006). Additionally, discriminant validity exists when each measurement indicator correlates weakly with all variables except the one with which it is theoretically associated (Aggarwal et al., 2018a, b; Aggarwal et al., 2020). The results in Table 2 show that the measurement model achieved good discriminant validity.
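The comparison described above (square root of AVE against inter-construct correlations) can be sketched as follows. The standardized loadings and the correlation are hypothetical values chosen for illustration; they are not taken from Tables 1 or 2, and the helper name ave is an assumption.

```python
import math

def ave(loadings):
    """Average variance extracted: mean of the squared standardized loadings."""
    return sum(value * value for value in loadings) / len(loadings)

# Hypothetical standardized loadings for two constructs (NOT from this study).
loadings = {
    "satisfaction": [0.78, 0.81, 0.75, 0.80],
    "performance": [0.72, 0.76, 0.79, 0.74],
}
inter_construct_corr = 0.61  # hypothetical correlation between the two constructs

sqrt_ave = {name: math.sqrt(ave(values)) for name, values in loadings.items()}

# Discriminant validity holds when each construct's sqrt(AVE) exceeds
# its correlation with the other construct.
discriminant_ok = all(root > abs(inter_construct_corr) for root in sqrt_ave.values())

for name, root in sqrt_ave.items():
    print(f"{name}: AVE = {ave(loadings[name]):.3f}, sqrt(AVE) = {root:.3f}")
print("discriminant validity supported:", discriminant_ok)
```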

5.3 Structural model

To test the proposed hypotheses, the researcher used structural equation modeling. This multivariate statistical technique combines factor analysis and multiple regression analysis, and it is used to analyze the structural relationships between measured variables and latent constructs.

Table 3 presents the fit indices of the structural model with all variables included. CMIN/DF is 2.479, and all the model fit values are within the acceptable range, which means the model attained a good fit. Other fit indices, such as GFI = 0.982 and AGFI = 0.956, are also supportive (Schumacker & Lomax, 1996; Marsh & Grayson, 1995; Kline, 2005).

Hence, the model fitted the data successfully. All co-variances among the variables and regression weights were statistically significant ( p < 0.001).