By: MIT xPRO on August 5th, 2020 3 Minute Read

Here are the Most Common Problems Being Solved by Machine Learning

Machine Learning

Although machine learning offers important new capabilities for solving today’s complex problems, many organizations may be tempted to apply machine learning techniques as a one-size-fits-all solution.

To use machine learning effectively, engineers and scientists need a clear understanding of the most common issues that machine learning can solve. In a recent MIT xPRO Machine Learning whitepaper titled “Applications For Machine Learning In Engineering and the Physical Sciences,” Professor Youssef Marzouk and fellow MIT colleagues outlined the potential and limitations of machine learning in STEM.

Here are some common challenges that can be solved by machine learning:

Accelerate processing and increase efficiency. Machine learning can wrap around existing science and engineering models to create fast and accurate surrogates, identify key patterns in model outputs, and help further tune and refine the models. All of this helps predict outcomes at new inputs and design conditions more quickly and accurately.

Quantify and manage risk. Machine learning can be used to model the probability of different outcomes in a process that cannot easily be predicted due to randomness or noise. This is especially valuable for situations where reliability and safety are paramount.

Compensate for missing data. Gaps in a data set can severely limit accurate learning, inference, and prediction. Models trained by machine learning improve with more relevant data. When used correctly, machine learning can also help synthesize missing data that round out incomplete datasets.
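As a rough illustration of filling gaps in a dataset, the sketch below uses mean imputation, which is only the simplest possible baseline and not necessarily the approach the whitepaper has in mind. The function name `impute_mean` and the sample readings are hypothetical.

```python
from statistics import mean

def impute_mean(column):
    """Replace None entries with the mean of the observed values."""
    observed = [v for v in column if v is not None]
    if not observed:
        raise ValueError("cannot impute a column with no observed values")
    fill = mean(observed)
    return [fill if v is None else v for v in column]

# Hypothetical sensor readings with two gaps.
readings = [20.0, None, 22.0, 24.0, None]
filled = impute_mean(readings)
print(filled)
```

Mean imputation keeps the column average unchanged but shrinks its variance, so model-based approaches are usually preferable when the gaps are large or not random.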

Make more accurate predictions or conclusions from your data. You can streamline your data-to-prediction pipeline by tuning how your machine learning model’s parameters are updated and learned during training. Building better models of your data will also improve the accuracy of subsequent predictions.

Solve complex classification and prediction problems. Predicting how an organism’s genome will be expressed or what the climate will be like in fifty years are examples of highly complex problems. Many modern machine learning problems take thousands or even millions of data samples (or far more) across many dimensions to build expressive and powerful predictors, often pushing far beyond traditional statistical methods.

Create new designs. There is often a disconnect between what designers envision and how products are made. It’s costly and time-consuming to simulate every variation of a long list of design variables. Machine learning can identify key variables, automatically generate good options, and help designers identify which best fits their requirements.

Increase yields. Manufacturers aim to overcome inconsistency in equipment performance and predict maintenance by applying machine learning to flag defects and quality issues before products ship to customers, improve efficiency on the production line, and increase yields by optimizing the use of manufacturing resources.

Machine learning is undoubtedly hitting its stride, as engineers and physical scientists leverage the competitive advantage of big data across industries — from aerospace, to construction, to pharmaceuticals, transportation, and energy. But it has never been more important to understand the physics-based models, computational science, and engineering paradigms upon which machine learning solutions are built.

The list above details the most common problems that organizations can solve with machine learning. For more specific applications across engineering and the physical sciences, download MIT xPRO’s free Machine Learning whitepaper.

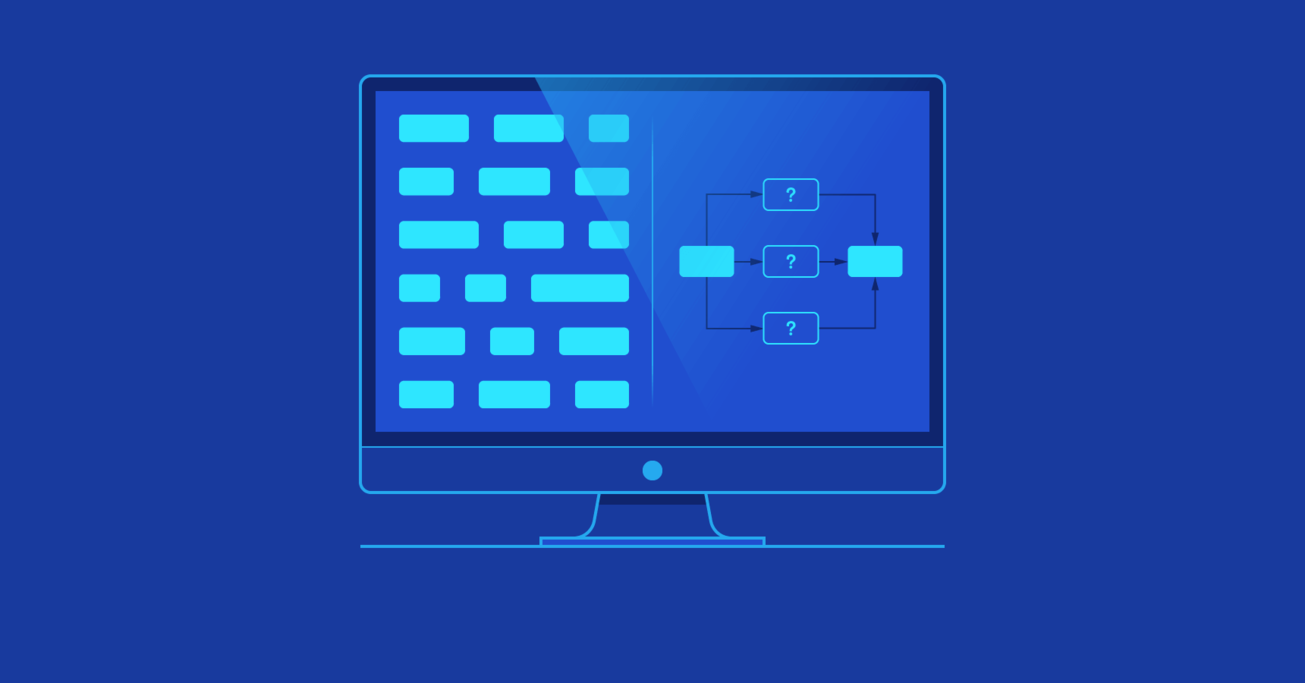

How to Approach Machine Learning Problems

How do you approach machine learning problems? Are neural networks the answer to nearly every challenge you may encounter?

In this article, Toptal Freelance Python Developer Peter Hussami explains the basic approach to machine learning problems and points out where neural networks may fall short.

By Peter Hussami

Peter’s rare math-modeling expertise includes audio and sensor analysis, ID verification, NLP, scheduling, routing, and credit scoring.

One of the main tasks of computers is to automate human tasks. Some of these tasks are simple and repetitive, such as “move X from A to B.” It gets far more interesting when the computer has to make decisions about problems that are far more difficult to formalize. That is where we start to encounter basic machine learning problems.

Historically, such algorithms were built by scientists or experts that had intimate knowledge of their field and were largely based on rules. With the explosion of computing power and the availability of large and diverse data sets, the focus has shifted toward a more computational approach.

Most popularized machine learning concepts these days have to do with neural networks, and in my experience, this created the impression in many people that neural networks are some kind of a miracle weapon for all inference problems. Actually, this is quite far from the truth. In the eyes of the statistician, they form one class of inference approaches with their associated strengths and weaknesses, and it completely depends on the problem whether neural networks are going to be the best solution or not.

Quite often, there are better approaches.

In this article, we will outline a structure for attacking machine learning problems. There is no scope for going into too much detail about specific machine learning models , but if this article generates interest, subsequent articles could offer detailed solutions for some interesting machine learning problems.

First, however, let us spend some effort showing why you should be more circumspect than to automatically think “ neural network ” when faced with a machine learning problem.

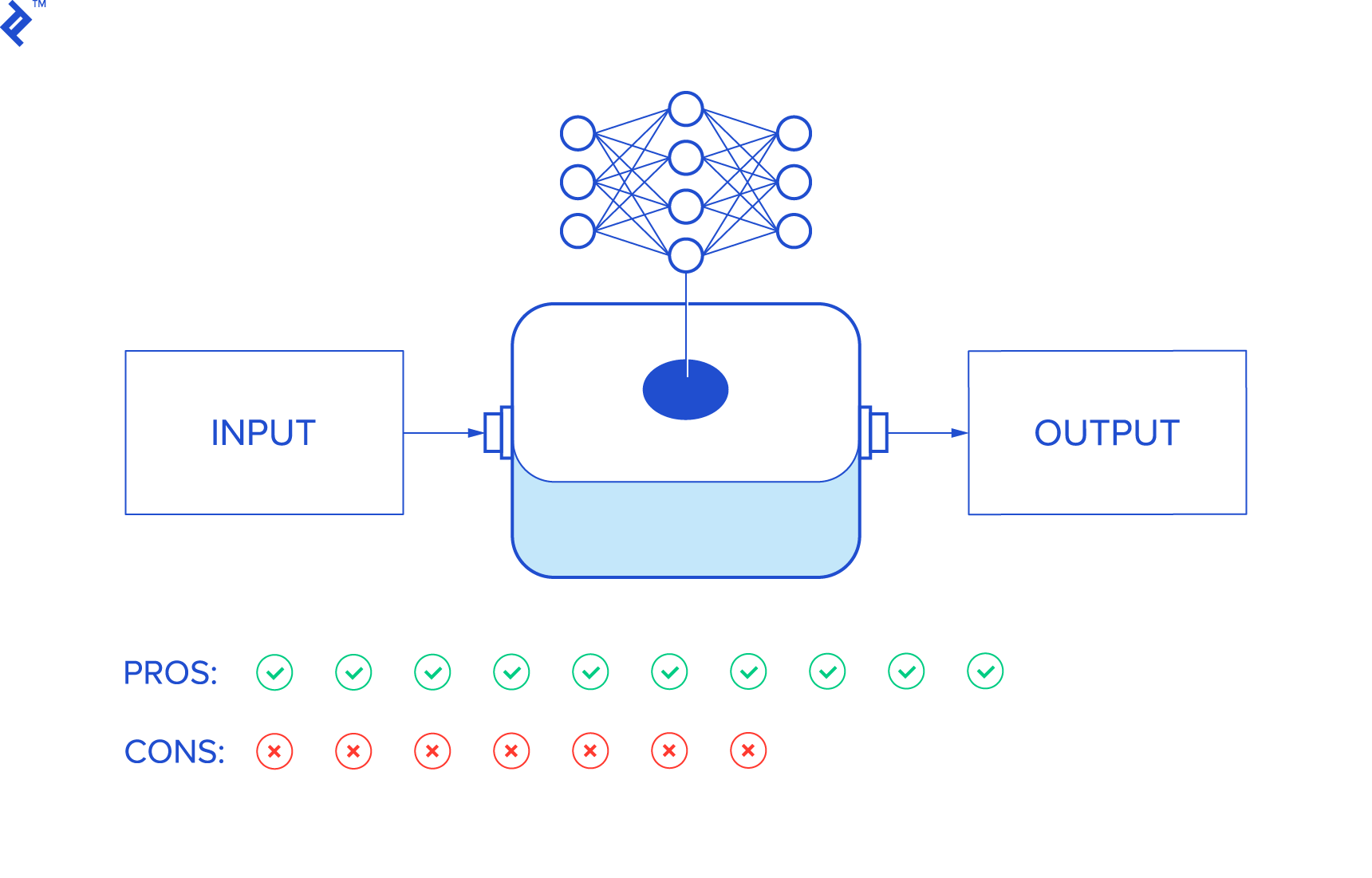

Pros and Cons of Neural Networks

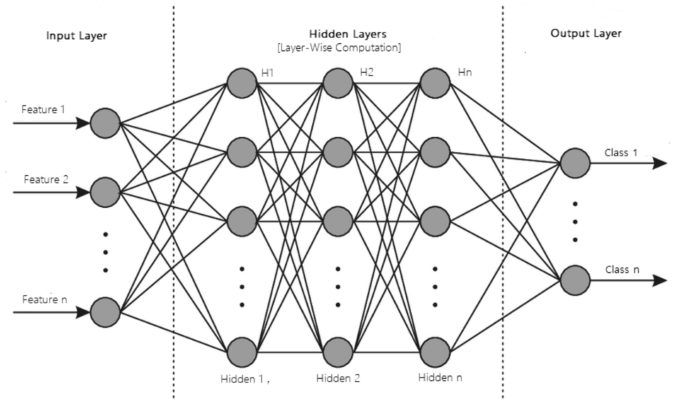

With neural networks, the inference is done through a weighted “network.” The weights are calibrated during the so-called “learning” process, and then, subsequently, applied to assign outcomes to inputs.

As simple as this may sound, all the weights are parameters to the calibrated network, and usually, that means too many parameters for a human to make sense of.

So we might as well just consider neural networks as some kind of an inference black box that connects the input to output, with no specific model in between.

Let us take a closer look at the pros and cons of this approach.

Advantages of Neural Networks

- The input is the data itself. Usable results even with little or no feature engineering.

- Trainable skill. With no feature engineering, there is no need for such hard-to-develop skills as intuition or domain expertise. Standard tools are available for generic inferences.

- Accuracy improves with the quantity of data. The more inputs it sees, the better a neural network performs.

- May outperform classical models when there is not full information about the model. Think of public sentiment, for one.

- Open-ended inference can discover unknown patterns. If you use a model and leave a consideration out of it, it will not detect the corresponding phenomenon. Neural networks might.

Successful neural network example: Google’s AI found a planet orbiting a distant star—where NASA did not—by analyzing accumulated telescope data.

Disadvantages of Neural Networks

- They require a lot of (annotated!) data. First, this amount of data is not always available. Convergence is slow. A solid model (say, in physics) can be calibrated after a few observations—with neural networks, this is out of the question. Annotation is a lot of work, not to mention that it, in itself, is not foolproof.

- No information about the inner structure of the data. Are you interested in what the inference is based on? No luck here. There are situations where manually adjusting the data improves inference by a leap, but a neural network will not be able to help with that.

- Overfitting issues. It happens often that the network has more parameters than the data justifies, which leads to suboptimal inference.

- Performance depends on information. If there is full information about a problem, a solid model tends to outperform a neural network.

- There are sampling problems. Sampling is always a delicate issue, but with a model, one can quickly develop a notion of problematic sampling. Neural networks learn only from the data, so if they get biased data, they will have biased conclusions.

An example of failure: A personal relation told me of a major corporation (that I cannot name) that was working on detecting military vehicles on aerial photos. They had images that contained such vehicles and others that did not. Most images of the former class were taken on a rainy day, while the latter were taken in sunny weather. As a result, the system learned to distinguish light from shadow.

To sum up, neural networks form one class of inference methods that have their pros and cons.

The fact that their popularity outshines all other statistical methods in the eyes of the public has likely more to do with corporate governance than anything else.

Training people to use standard tools and standardized neural network methods is a far more predictable process than hunting for domain experts and artists from various fields. This, however, does not change the fact that using a neural network for a simple, well-defined problem is really just shooting a sparrow with a cannon: It needs a lot of data, requires a lot of annotation work, and in return might just underperform when compared to a solid model. Not the best package.

Still, there is huge power in the fact that they “democratize” statistical knowledge. Once a neural network-based inference solution is viewed as a mere programming tool, it may help even those who don’t feel comfortable with complex algorithms. So, inevitably, a lot of things are now built that would otherwise not exist if we could only operate with sophisticated models.

Approaching Machine Learning Problems

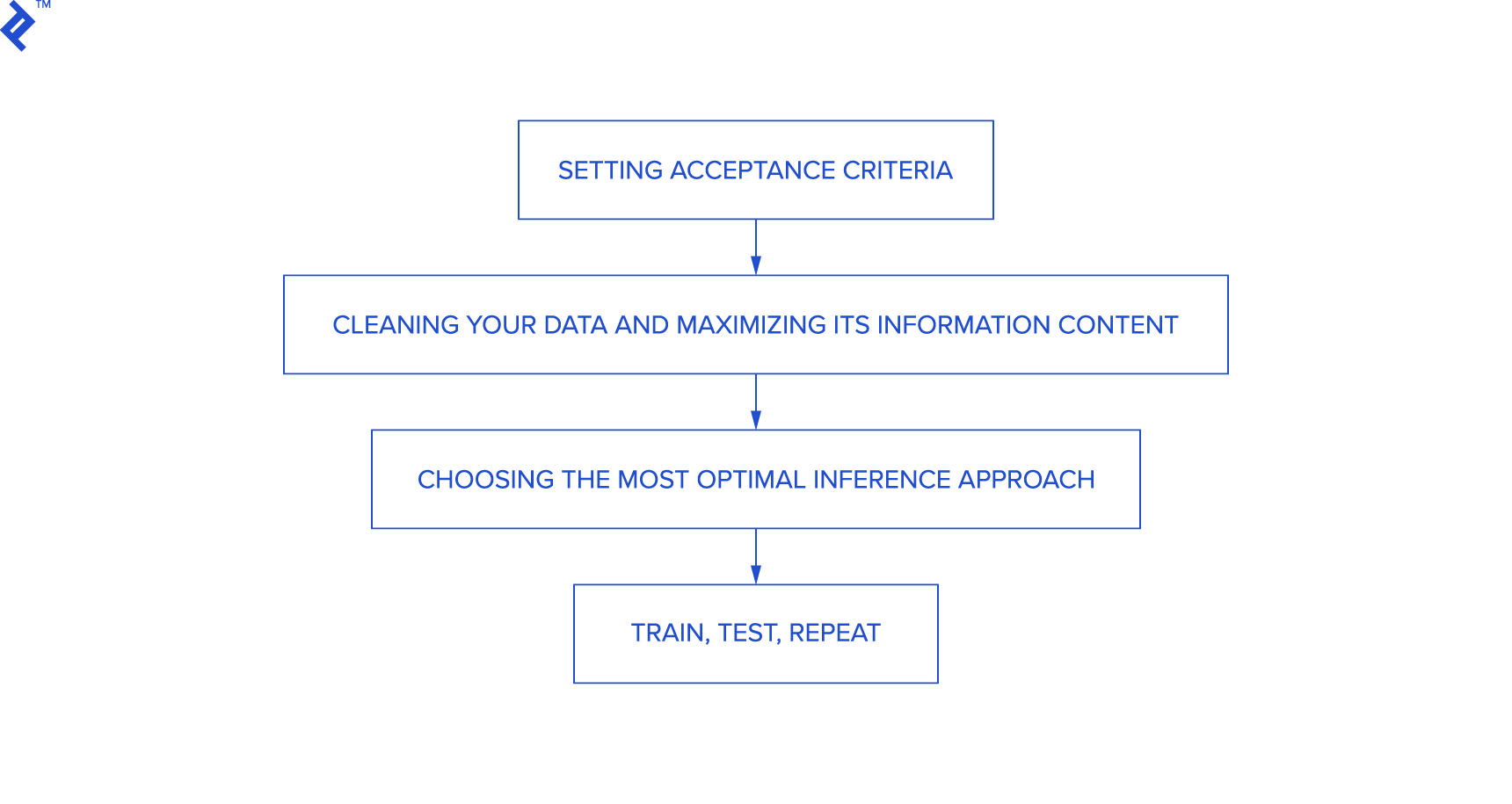

When approaching machine learning problems, these are the steps you will need to go through:

- Setting acceptance criteria

- Cleansing your data and maximizing its information content

- Choosing the most optimal inference approach

- Train, test, repeat

Let us see these items in detail.

Setting Acceptance Criteria

You should have an idea of your target accuracy as soon as possible, to the extent possible. This is going to be the target you work towards.

Cleansing Your Data and Maximizing Its Information Content

This is the most critical step. First of all, your data should have no (or few) errors. Cleansing it of these is an essential first step. Substitute missing values, try to identify patterns that are obviously bogus, eliminate duplicates and any other anomaly you might notice.

As for information, if your data is very informative (in the linear sense), then practically any inference method will give you good results. If the required information is not in there, then the result will be noise. Maximizing the information means primarily finding any useful non-linear relationships in the data and linearizing them. If that improves the inputs significantly, great. If not, then more variables might need to be added. If all of this does not yield fruit, target accuracy may suffer.

With some luck, there will be single variables that are useful. You can identify useful variables if you—for instance—plot them against the learning target variable(s) and find the plot to be function-like (i.e., narrow range in the input corresponds to narrow range in the output). This variable can then be linearized—for example, if it plots as a parabola, subtract some values and take the square root.
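The parabola case can be made concrete with a small sketch. The data here is invented for illustration: the feature is assumed to equal the target squared plus an offset of 2, so subtracting the offset and taking the square root recovers a linear relationship. The helper `pearson` is a hand-written correlation coefficient used only to compare the two versions of the feature.

```python
import math

def pearson(xs, ys):
    """Pearson correlation: a rough measure of linear information content."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = math.sqrt(sum((x - mx) ** 2 for x in xs))
    sy = math.sqrt(sum((y - my) ** 2 for y in ys))
    return cov / (sx * sy)

# Assumed toy data: the feature plots as a parabola against the target.
target = [0.0, 1.0, 2.0, 3.0, 4.0, 5.0]
feature = [2.0 + t ** 2 for t in target]

# Linearize: subtract the offset, then take the square root.
linearized = [math.sqrt(v - 2.0) for v in feature]

r_raw = pearson(feature, target)
r_lin = pearson(linearized, target)
print(f"raw: {r_raw:.3f}, linearized: {r_lin:.3f}")
```

In practice the offset and the shape of the curve are not known in advance and have to be read off the plot or estimated.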

For variables that are noisy—narrow range in input corresponds to a broad range in the output—we may try combining them with other variables.

To have an idea of the accuracy, you may want to measure conditional class probabilities for each of your variables (for classification problems) or to apply some very simple form of regression, such as linear regression (for prediction problems). If the information content of the input improves, then so will your inference, and you simply don’t want to waste too much time at this stage calibrating a model when the data is not yet ready. So keep testing as simple as possible.
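For the regression case, a quick check of this kind might look like the following sketch: an ordinary least-squares line fitted by hand, with R² as a crude readout of how much linear information the input carries. The data points and the function names `fit_line` and `r_squared` are made up for the example.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, my - slope * mx

def r_squared(xs, ys, slope, intercept):
    """Share of the target's variance explained by the fitted line."""
    my = sum(ys) / len(ys)
    ss_res = sum((y - (slope * x + intercept)) ** 2 for x, y in zip(xs, ys))
    ss_tot = sum((y - my) ** 2 for y in ys)
    return 1 - ss_res / ss_tot

# Hypothetical input variable and learning target.
xs = [1.0, 2.0, 3.0, 4.0, 5.0]
ys = [2.1, 3.9, 6.2, 7.8, 10.1]

slope, intercept = fit_line(xs, ys)
score = r_squared(xs, ys, slope, intercept)
print(f"slope={slope:.2f}, intercept={intercept:.2f}, R^2={score:.4f}")
```

Re-running such a check after each data transformation shows whether the change actually added information, without committing to a full model.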

Choosing the Most Optimal Inference Approach

Once your data is in decent shape, you can go for the inference method (the data might still be polished later, if necessary).

Should you use a model? Well, if you have good reason to believe that you can build a good model for the task, then you probably should. If you don’t think so, but there is ample data with good annotations, then you may go hands-free with a neural network. In practical machine learning applications, however, there is often not enough data for that.

Trading accuracy against coverage often pays off tremendously. Hybrid approaches are usually completely fine. Suppose the data is such that you can get near-100% accuracy on 80% of it with a simple model. This means you can demonstrate results quickly, and if your system can identify when it’s operating on the friendly 80% of the territory, then you’ve basically covered most of the problem. Your client may not yet be fully happy, but this will earn you their trust quickly. And there is nothing to prevent you from doing something similar on the remaining data: with reasonable effort you can then cover, say, 92% of the data with 97% accuracy. True, on the rest of the data, it’s a coin flip, but you have already produced something useful.

For most practical applications, this is very useful. Say, you’re in the lending business and want to decide whom to give a loan, and all you know is that on 70% of the clients your algorithm is very accurate. Great—true, the other 30% of your applicants will require more processing, but 70% can be fully automated. Or: suppose you’re trying to automate operator work for call centers, you can do a good (quick and dirty) job on the most simple tasks only, but these tasks cover 50% of the calls? Great, the call center saves money if they can automate 50% of their calls reliably.
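One way to sketch the lending example is a model that only decides when it is confident and hands everything else to a human. The scores, labels, and thresholds below are hypothetical; `route` and `coverage_and_accuracy` are illustrative names, not part of any library.

```python
def route(score, low=0.2, high=0.8):
    """Automate only confident cases; send the rest to manual processing."""
    if score >= high:
        return "approve"
    if score <= low:
        return "reject"
    return "manual_review"

def coverage_and_accuracy(scores, labels, low=0.2, high=0.8):
    """Fraction of cases decided automatically, and accuracy on those cases."""
    decisions = [route(s, low, high) for s in scores]
    automated = [(d, y) for d, y in zip(decisions, labels) if d != "manual_review"]
    # A decision is correct if "approve" coincides with label 1 (good applicant).
    correct = sum((d == "approve") == (y == 1) for d, y in automated)
    coverage = len(automated) / len(scores)
    accuracy = correct / len(automated) if automated else 0.0
    return coverage, accuracy

# Hypothetical model scores (estimated probability of repayment) and true labels.
scores = [0.95, 0.9, 0.85, 0.1, 0.05, 0.15, 0.5, 0.6, 0.4, 0.88]
labels = [1, 1, 1, 0, 0, 0, 1, 0, 0, 0]

coverage, accuracy = coverage_and_accuracy(scores, labels)
print(f"automated {coverage:.0%} of cases at {accuracy:.0%} accuracy")
```

Widening the gap between `low` and `high` lowers coverage and usually raises accuracy on the automated part, which is exactly the trade-off described above.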

To sum up: If the data is not informative enough, or the problem is too complex to handle in its entirety, think outside the box. Identify useful and easy-to-solve sub-problems until you have a better idea.

Once you have your system ready, learn, test and loop it until you’re happy with the results.

Train, Test, Repeat

After the previous steps, little of interest is left. You have the data, you have the machine learning method, so it’s time to extract parameters via learning and then test the inference on the test set. A common rule of thumb is to use about 70% of the records for training and 30% for testing.
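A 70/30 split can be sketched in a few lines. The function below is a hand-rolled illustration with a hypothetical name and a fixed seed for repeatability; it shuffles first so that any ordering in the records does not leak into the split.

```python
import random

def train_test_split(records, test_fraction=0.3, seed=0):
    """Shuffle the records and hold out a fraction of them for testing."""
    rng = random.Random(seed)
    shuffled = list(records)
    rng.shuffle(shuffled)
    n_test = round(len(shuffled) * test_fraction)
    return shuffled[n_test:], shuffled[:n_test]

# 100 hypothetical record IDs.
train, test = train_test_split(range(100))
print(len(train), len(test))
```

For time-ordered data a random shuffle is usually the wrong choice, and the split should instead keep the most recent records for testing.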

If you’re happy with the results, the task is finished. But, more likely, you developed some new ideas during the procedure, and these could help you notch up in accuracy. Perhaps you need more data? Or just more data cleansing? Or another model? Either way, chances are you’ll be busy for quite a while.

So, good luck and enjoy the work ahead!

Understanding the basics

Machine learning vs. deep learning: What's the difference?

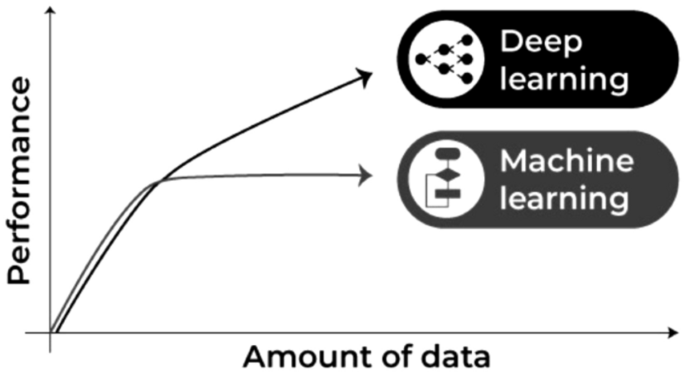

Machine learning includes all inference techniques while deep learning aims at uncovering meaningful non-linear relationships in the data. So deep learning is a subset of machine learning and also a means of automated feature engineering applied to a machine learning problem.

Which language is best for machine learning?

The ideal choice is a language that has both broad programming library support and allows you to focus on the math rather than infrastructure. The most popular language is Python, but algorithmic languages such as Matlab or R or mainstreamers like C++ and Java are all valid choices as well.

Machine learning vs. neural networks: What's the difference?

Neural networks represent just one approach within machine learning with its pros and cons as detailed above.

What is the best way to learn machine learning?

There are some good online courses and summary pages. It all depends on one’s skills and tastes. My personal advice: Think of machine learning as statistical programming. Beef up on your math and avoid all sources that equate machine learning with neural networks.

What are the advantages and disadvantages of artificial neural networks?

Some advantages: no math, feature engineering, or artisan skills required; easy to train; may uncover aspects of the problem not originally considered. Some disadvantages: require relatively more data; tedious preparation work; no explanation as to why they decide the way they do; prone to overfitting.

Peter Hussami

Budapest, Hungary

Member since July 3, 2017

Introduction to Machine Learning Problem Framing

Introduction to Machine Learning Problem Framing teaches you how to determine if machine learning (ML) is a good approach for a problem and explains how to outline an ML solution.

Except as otherwise noted, the content of this page is licensed under the Creative Commons Attribution 4.0 License , and code samples are licensed under the Apache 2.0 License . For details, see the Google Developers Site Policies . Java is a registered trademark of Oracle and/or its affiliates.

Last updated 2022-07-18 UTC.

Problem‑Solving with Machine Learning Cornell Course

Key Course Takeaways

- Define and reframe problems using machine learning (supervised learning) concepts and terminology

- Identify the applicability, assumptions, and limitations of the k-NN algorithm

- Simplify and make Python code efficient with matrix operations using NumPy, a library for the Python programming language

- Build a face recognition system using the k-nearest neighbors algorithm

- Compute the accuracy of an algorithm by implementing loss functions

Course Author

Kilian Weinberger is an Associate Professor in the Department of Computer Science at Cornell University. He received his Ph.D. from the University of Pennsylvania in Machine Learning under the supervision of Lawrence Saul and his undergraduate degree in Mathematics and Computer Science from the University of Oxford. During his career he has won several best paper awards at ICML (2004), CVPR (2004, 2017), AISTATS (2005) and KDD (2014, runner-up award). In 2011 he was awarded the Outstanding AAAI Senior Program Chair Award and in 2012 he received an NSF CAREER award. He was elected co-Program Chair for ICML 2016 and for AAAI 2018. In 2016 he was the recipient of the Daniel M Lazar ’29 Excellence in Teaching Award. Kilian Weinberger’s research focuses on Machine Learning and its applications. In particular, he focuses on learning under resource constraints, metric learning, machine learned web-search ranking, computer vision and deep learning. Before joining Cornell University, he was an Associate Professor at Washington University in St. Louis and before that he worked as a research scientist at Yahoo! Research in Santa Clara.

Who Should Enroll

- Programmers

- Data analysts

- Statisticians

- Data scientists

- Software engineers

9 Real-World Problems that can be Solved by Machine Learning

Machine Learning has gained a lot of prominence in recent years because it can be applied across scores of industries to solve complex problems effectively and quickly. Contrary to what one might expect, Machine Learning use cases are not that difficult to come across. The most common examples of problems solved by machine learning are image tagging by Facebook and spam detection by email providers.

AI for business can resolve incredible challenges across industry domains by working with suitable datasets. In this post, we will learn about some typical problems solved by machine learning and how they enable businesses to leverage their data accurately.

What is Machine Learning?

Machine learning, a sub-area of artificial intelligence, is an IT system's ability to recognize patterns in large databases and find solutions to problems without being explicitly programmed for them. It is an umbrella term for various techniques and tools that help computers learn and adapt independently.

In traditional programming, a manually written program takes input data and runs on a computer to produce the output. In Machine Learning or augmented analytics, the input data and the desired output are given to an algorithm, which creates the program. The result is a model that can be used to predict future outcomes.

Machine learning algorithms do all that and more, using statistics to find patterns in vast amounts of data of every kind: images, numbers, words, and so on. If the data can be stored digitally, it can be fed into a machine-learning algorithm to solve specific problems.

Types Of Machine Learning

Today, Machine Learning algorithms are primarily trained using three essential methods. These are categorized as three types of machine learning, as discussed below –

1. Supervised Learning

One of the most elementary types of machine learning, supervised learning, is one where data is labeled to inform the machine about the exact patterns it should look for. Although the data needs to be labeled accurately for this method to work, supervised learning is compelling and provides excellent results when used in the right circumstances.

For instance, when we press play on a Netflix show, we’re informing the Machine Learning algorithm to find similar shows based on our preference.

How it works –

- The Machine Learning algorithm here is provided with a small training dataset to work with, which is a smaller part of the bigger dataset.

- It serves to give the algorithm an idea of the problem, solution, and various data points to be dealt with.

- The training dataset here is also very similar to the final dataset in its characteristics and provides the algorithm with the labeled parameters required for the problem.

- The Machine Learning algorithm then finds relationships between the given parameters, learning how the input variables in the dataset map to the labeled output.
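The steps above can be sketched with a k-nearest-neighbors classifier, one of the simplest supervised methods: it stores the labeled training points and labels a new point by majority vote among its closest neighbors. The two-dimensional points and the labels "a" and "b" are invented for the example.

```python
import math
from collections import Counter

def knn_predict(train, query, k=3):
    """Label a query point by majority vote among its k nearest training points."""
    nearest = sorted(train, key=lambda item: math.dist(item[0], query))[:k]
    votes = Counter(label for _, label in nearest)
    return votes.most_common(1)[0][0]

# Hypothetical labeled training data: (features, label).
train = [
    ((1.0, 1.0), "a"), ((1.2, 0.8), "a"), ((0.9, 1.1), "a"),
    ((5.0, 5.0), "b"), ((5.2, 4.9), "b"), ((4.8, 5.1), "b"),
]

# Held-out labeled points, used to measure the error rate (zero-one loss).
held_out = [((1.1, 1.0), "a"), ((5.0, 5.1), "b")]
errors = sum(knn_predict(train, x) != y for x, y in held_out)
error_rate = errors / len(held_out)
print(f"error rate on held-out points: {error_rate:.2f}")
```

Here "training" is just storing the data; most other supervised methods instead fit a compact set of parameters to the labeled examples.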

2. Unsupervised Learning

Unsupervised learning, as the name suggests, has no data labels. The machine looks for patterns on its own, without being told what to look for. This means that no human labor is required to make the dataset machine-readable, which allows the program to work on much larger datasets. Compared to supervised learning, unsupervised Machine Learning services are less popular because they have fewer applications in day-to-day life.

How does it work?

- Since unsupervised learning does not have any labels to work from, it looks for hidden structures in the data.

- Relationships between data points are then inferred by the algorithm from the data alone, with no input required from human beings.

- Instead of a specific, defined, and set problem statement, unsupervised learning algorithms can adapt to the data by changing hidden structures dynamically.
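As one illustration of finding structure without labels, the sketch below runs a one-dimensional k-means clustering: it alternates between assigning each value to its nearest center and moving each center to the mean of its assigned values. The data and the starting centers are assumptions made for the example.

```python
def kmeans_1d(values, centers, iterations=10):
    """Minimal 1-D k-means: assign values to the nearest center, then re-center."""
    centers = list(centers)
    clusters = [[] for _ in centers]
    for _ in range(iterations):
        clusters = [[] for _ in centers]
        for v in values:
            idx = min(range(len(centers)), key=lambda i: abs(v - centers[i]))
            clusters[idx].append(v)
        # Move each center to the mean of its cluster (keep it if the cluster is empty).
        centers = [sum(c) / len(c) if c else centers[i] for i, c in enumerate(clusters)]
    return centers, clusters

# Unlabeled, hypothetical measurements that happen to form two groups.
values = [1.0, 1.2, 0.8, 9.0, 9.5, 8.5]
centers, clusters = kmeans_1d(values, centers=[0.0, 5.0])
print(centers, clusters)
```

The number of clusters and the starting centers are choices made by the user, and different choices can lead to different groupings.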

3. Reinforcement Learning

Reinforcement learning primarily describes a class of machine learning problems where an agent operates in an environment with no fixed training dataset. The agent must learn how to act from the feedback it receives.

- Reinforcement learning features a machine learning algorithm that improves upon itself.

- It typically learns by trial and error to achieve a clear objective.

- In this Machine Learning algorithm, favorable outputs are reinforced or encouraged, whereas non-favorable outputs are discouraged.
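A minimal sketch of this trial-and-error loop is an epsilon-greedy agent on a toy problem with three actions. The environment is an assumption made for illustration: each action pays a fixed reward, which the agent does not know in advance. Most of the time the agent repeats the action it currently rates highest, and occasionally it tries a random one.

```python
import random

def run_bandit(rewards, steps=1000, epsilon=0.1, seed=0):
    """Epsilon-greedy agent on a toy environment with a fixed reward per action."""
    rng = random.Random(seed)
    estimates = [0.0] * len(rewards)  # the agent's current value estimate per action
    counts = [0] * len(rewards)       # how often each action has been tried
    for _ in range(steps):
        if rng.random() < epsilon:
            action = rng.randrange(len(rewards))  # explore: try a random action
        else:
            # Exploit: pick the action with the highest current estimate.
            action = max(range(len(rewards)), key=lambda a: estimates[a])
        reward = rewards[action]  # feedback from the environment
        counts[action] += 1
        # Incremental mean: move the estimate toward the observed reward.
        estimates[action] += (reward - estimates[action]) / counts[action]
    return estimates, counts

estimates, counts = run_bandit(rewards=[0.2, 0.8, 0.5])
best_action = max(range(len(estimates)), key=lambda a: estimates[a])
print(best_action, counts)
```

Real reinforcement learning problems add states and delayed rewards, but the same tension between exploring and exploiting remains.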

Top 4 Issues with Implementing Machine Learning

While Machine learning is extensively used across industries to make data-driven decisions, its implementation runs into several problems that must be addressed. Here’s a list of the most common challenges organizations face when adopting ML in their operations.

1. Inadequate Training Data

Data plays a critical role in the training and processing of machine learning algorithms. Many data scientists attest that insufficient, inconsistent, and unclean data can considerably hamper the efficacy of ML algorithms.

2. Underfitting of Training Data

Underfitting occurs when a model is too simple to capture the relationship between the input and output variables, so it performs poorly even on the training data. In simpler terms, it is like trying to fit into an undersized t-shirt.

3. Overfitting of Training Data

Overfitting occurs when a model fits its training data too closely, noise included, so that it performs well on that data but poorly on new data. It is similar to wearing a pair of oversized jeans.
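Both fitting problems can be seen side by side by comparing errors on training data and on unseen data. The sketch below uses invented data (a straight line with alternating +1/-1 noise on the training set) and three deliberately simple models: a constant that ignores the input, a least-squares line, and a "memorizer" that returns the stored answer of the nearest training point.

```python
def mse(model, xs, ys):
    """Mean squared error of a model on a dataset."""
    return sum((model(x) - y) ** 2 for x, y in zip(xs, ys)) / len(xs)

# Assumed toy data: y = 2x, with alternating +1/-1 noise on the training set only.
train_x = [float(i) for i in range(10)]
train_y = [2 * x + (1 if i % 2 == 0 else -1) for i, x in enumerate(train_x)]
test_x = [x + 0.4 for x in train_x]
test_y = [2 * x for x in test_x]

mean_x = sum(train_x) / len(train_x)
mean_y = sum(train_y) / len(train_y)

def constant(x):
    """Too simple: ignores the input entirely."""
    return mean_y

slope = (sum((x - mean_x) * (y - mean_y) for x, y in zip(train_x, train_y))
         / sum((x - mean_x) ** 2 for x in train_x))
intercept = mean_y - slope * mean_x

def line(x):
    """Least-squares line: matches the true structure of the data."""
    return slope * x + intercept

def memorizer(x):
    """Too flexible: reproduces the noisy answer of the nearest training point."""
    nearest = min(range(len(train_x)), key=lambda i: abs(train_x[i] - x))
    return train_y[nearest]

results = {
    name: (mse(model, train_x, train_y), mse(model, test_x, test_y))
    for name, model in [("constant", constant), ("line", line), ("memorizer", memorizer)]
}
for name, (train_err, test_err) in results.items():
    print(f"{name:10s} train={train_err:.2f} test={test_err:.2f}")
```

The constant has a high error on both sets, the memorizer has zero training error but a noticeably worse test error than the line, and the line does reasonably on both.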

4. Delayed Implementation

ML models offer efficient results but consume a lot of time due to data overload, slow programs, and excessive requirements. Additionally, they demand timely monitoring and maintenance to deliver the best output.

9 Real-World Problems Solved by Machine Learning

Applications of Machine learning are many, including external (client-centric) applications such as product recommendation, customer service, and demand forecasts, and internal applications that help businesses improve products or speed up manual and time-consuming processes.

Machine learning algorithms are typically used in areas where the solution requires continuous improvement post-deployment. Adaptable machine learning solutions are incredibly dynamic and are adopted by companies across verticals.

Here we are discussing nine Machine Learning use cases –

1. Identifying Spam

Spam identification is one of the most basic applications of machine learning. Most of our email inboxes also have an unsolicited, bulk, or spam inbox, where our email provider automatically filters unwanted spam emails.

But how do they know that the email is spam?

They use a trained Machine Learning model to identify spam emails based on common characteristics such as the email's content, subject, and sender.

If you look at your email inbox carefully, you will realize that it is not very hard to pick out spam emails because they look very different from real emails. Machine learning techniques used nowadays can automatically filter these spam emails in a very successful way.

Spam detection is one of the best-known and most common problems solved by Machine Learning. Neural networks can employ content-based filtering to classify unwanted emails as spam. These networks are loosely inspired by the brain and learn to recognize the patterns that identify spam emails and messages.
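Content-based filtering can be illustrated without a neural network. The sketch below swaps in a naive Bayes classifier, a much simpler technique that scores a message by how often its words appeared in spam versus legitimate ("ham") training messages. The training messages are invented, and the functions `train_nb` and `classify` are illustrative names.

```python
import math
from collections import Counter

def train_nb(messages):
    """Count words per class. messages: list of (text, label), label 'spam' or 'ham'."""
    word_counts = {"spam": Counter(), "ham": Counter()}
    doc_counts = Counter()
    for text, label in messages:
        doc_counts[label] += 1
        word_counts[label].update(text.lower().split())
    vocab = set(word_counts["spam"]) | set(word_counts["ham"])
    return word_counts, doc_counts, vocab

def classify(text, model):
    """Return the class with the higher log-probability under naive Bayes."""
    word_counts, doc_counts, vocab = model
    total_docs = sum(doc_counts.values())
    scores = {}
    for label in word_counts:
        total_words = sum(word_counts[label].values())
        score = math.log(doc_counts[label] / total_docs)  # class prior
        for word in text.lower().split():
            if word in vocab:  # words never seen in training are skipped
                # Add-one (Laplace) smoothing avoids zero probabilities.
                count = word_counts[label][word] + 1
                score += math.log(count / (total_words + len(vocab)))
        scores[label] = score
    return max(scores, key=scores.get)

# Hypothetical labeled training messages.
model = train_nb([
    ("win free prize now", "spam"),
    ("free money offer", "spam"),
    ("claim your free prize", "spam"),
    ("meeting agenda for monday", "ham"),
    ("lunch at noon tomorrow", "ham"),
    ("project status report", "ham"),
])

print(classify("free prize inside", model))
print(classify("monday project meeting", model))
```

Production filters typically combine content features like these with sender reputation and other signals.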

2. Making Product Recommendations

Recommender systems are one of the most characteristic and ubiquitous machine learning use cases in day-to-day life. These systems are used everywhere by search engines, e-commerce websites (Amazon), entertainment platforms (Google Play, Netflix), and multiple web & mobile apps.

Prominent online retailers like Amazon and eBay often show a list of recommended products individually for each of their consumers. These recommendations are typically based on behavioral data such as previous purchases, item views, page views, clicks, form fill-ins, and browsing history, on item details (price, category), and on contextual data (location, language, device).

These recommender systems allow businesses to drive more traffic, increase customer engagement, reduce churn rate, deliver relevant content and boost profits. All such recommended products are based on a machine learning model’s analysis of customer’s behavioral data. It is an excellent way for online retailers to offer extra value and enjoy various upselling opportunities using machine learning.

3. Customer Segmentation

Customer segmentation, churn prediction and customer lifetime value (LTV) prediction are the main challenges faced by any marketer. Businesses have a huge amount of marketing relevant data from various sources such as email campaigns, website visitors and lead data.

Using data mining and machine learning, marketers can predict accurately how individual customers will respond to marketing offers and incentives. Using ML, savvy marketers can eliminate the guesswork involved in data-driven marketing.

For example, given the pattern of behavior of a user during a trial period and the past behaviors of all users, the chances of conversion to the paid version can be predicted. A model of this decision problem would allow a program to trigger customer interventions to persuade the customer to convert early or better engage in the trial.

4. Image & Video Recognition

Advances in deep learning (a subset of machine learning) have stimulated rapid progress in image & video recognition techniques over the past few years. They are used for multiple areas, including object detection, face recognition, text detection, visual search, logo and landmark detection, and image composition.

Since machines are good at processing images, Deep Learning models can be trained to recognize and classify the images in a dataset, in some tasks with accuracy that matches or exceeds that of humans.

Similar to image recognition, companies such as Shutterstock, eBay, Salesforce, Amazon, and Facebook use Machine Learning for video recognition, where videos are broken down frame by frame and classified as individual digital images.

5. Fraudulent Transactions

Fraudulent banking transactions are quite a common occurrence today. However, it is not feasible (in terms of cost and efficiency) to investigate every transaction for fraud, and attempting to do so would lead to a poor customer service experience.

Machine learning in finance can automatically build accurate predictive models to identify and prioritize possible fraudulent activity. Businesses can then create a data-based queue and investigate the high-priority incidents.

This allows you to deploy resources where you will see the greatest return on your investigative investment. It also helps you optimize customer satisfaction by protecting customers’ accounts and not challenging valid transactions. Fraud detection using machine learning can help banks and financial organizations save money on disputes and chargebacks, because models can be trained to flag transactions that appear fraudulent based on specific characteristics.
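The data-based queue described above can be sketched as a priority queue ordered by a model's fraud score. This is a minimal illustration, not a production design: the function name `build_review_queue`, the transaction IDs, the scores, and the 0.5 threshold are all hypothetical, and the scores are assumed to come from an already trained model.

```python
import heapq

def build_review_queue(transactions, threshold=0.5):
    """Return IDs of transactions scoring at or above `threshold`, riskiest first."""
    heap = []
    for txn_id, score in transactions:
        if score >= threshold:
            # heapq is a min-heap, so push the negated score to pop the highest first.
            heapq.heappush(heap, (-score, txn_id))
    ordered = []
    while heap:
        _, txn_id = heapq.heappop(heap)
        ordered.append(txn_id)
    return ordered

# Hypothetical model output: (transaction id, estimated fraud probability).
scored = [("t1", 0.10), ("t2", 0.92), ("t3", 0.55), ("t4", 0.71)]
queue = build_review_queue(scored, threshold=0.5)
print(queue)
```

Transactions below the threshold are never challenged, which mirrors the goal of not interrupting valid transactions; where to set the threshold is a business decision rather than something the sketch can determine.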

6. Demand Forecasting

Demand forecasting is used in multiple industries, from retail and e-commerce to manufacturing and transportation. It feeds historical data to machine learning algorithms and models to predict future demand for products, services, power, and more.

It allows businesses to efficiently collect and process data from the entire supply chain, reducing overheads and increasing efficiency.

ML-powered demand forecasting can be accurate, rapid, and transparent. Businesses can generate meaningful insights from a constant stream of supply and demand data and adapt to changes accordingly.
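As a point of reference for what "feeding historical data to a model" means, the sketch below uses simple exponential smoothing, a classical statistical baseline rather than a machine learning model from this article. The function name, the `alpha` value, and the weekly sales figures are assumptions made for illustration.

```python
def exponential_smoothing_forecast(history, alpha=0.5):
    """Forecast the next value as an exponentially weighted average of past values."""
    if not history:
        raise ValueError("history must contain at least one observation")
    level = history[0]
    for observed in history[1:]:
        # Blend the newest observation with the running estimate.
        level = alpha * observed + (1 - alpha) * level
    return level

# Hypothetical weekly unit sales.
weekly_units = [100, 120, 110, 130]
forecast = exponential_smoothing_forecast(weekly_units, alpha=0.5)
print(forecast)
```

A learned forecasting model would typically be compared against a baseline of this kind before being trusted for planning.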

7. Virtual Personal Assistant

From Alexa and Google Assistant to Cortana and Siri, we have multiple virtual personal assistants that respond to voice instructions to find information or carry out tasks such as calling someone, opening an email, or scheduling an appointment.

These virtual assistants record our voice instructions, send them to a server in the cloud, decode them using machine learning algorithms, and act accordingly.

8. Sentiment Analysis

Sentiment analysis is a machine learning application, often run in real time, that helps determine the emotion or opinion of a speaker or writer.

For instance, if you’ve written a review, an email, or any other document, a sentiment analyzer can assess the underlying opinion and tone of the text. Sentiment analysis can be used in decision-making applications, on review-based websites, and more.

9. Customer Service Automation

Managing an increasing number of online customer interactions has become a pain point for most businesses, because they simply don’t have the customer support staff to deal with the sheer number of inquiries they receive daily.

Machine learning algorithms have made it possible for chatbots and similar automated systems to fill this gap. This application of machine learning enables companies to automate routine and low-priority tasks, freeing up their employees to manage higher-level customer service tasks.

Further, machine learning technology can access the data, interpret behaviors and recognize patterns. This could also be used for customer support systems that work much like a human agent and resolve customers’ individual queries. The machine learning models behind voice-based assistants are trained on human languages and variations in the human voice, because they have to translate speech to text efficiently and then produce an on-topic, intelligent response.

If implemented the right way, machine learning can streamline the entire process of customer issue resolution and improve customer satisfaction.

Wrapping Up

As advancements in machine learning evolve, the range of use cases and applications of machine learning too will expand. To effectively navigate the business issues in this new decade, it’s worth keeping an eye on how machine learning applications can be deployed across business domains to reduce costs, improve efficiency and deliver better user experiences.

However, to implement machine learning accurately in your organization, it is imperative to have a trustworthy partner with deep-domain expertise. At Maruti Techlabs, we offer advanced machine learning services that involve understanding the complexity of varied business issues, identifying the existing gaps, and offering efficient and effective tech solutions to manage these challenges.

If you wish to learn more about how machine learning solutions can increase productivity and automate business processes for your business, get in touch with us .

Pinakin is the VP of Data Science and Technology at Maruti Techlabs. With about two decades of experience leading diverse teams and projects, his technological competence is unmatched.


Machine Learning: Algorithms, Real-World Applications and Research Directions

- Review Article

- Published: 22 March 2021

- Volume 2, article number 160 (2021)


- Iqbal H. Sarker (ORCID: orcid.org/0000-0003-1740-5517)


In the current age of the Fourth Industrial Revolution (4IR or Industry 4.0), the digital world has a wealth of data, such as Internet of Things (IoT) data, cybersecurity data, mobile data, business data, social media data, health data, etc. To intelligently analyze these data and develop the corresponding smart and automated applications, knowledge of artificial intelligence (AI), particularly machine learning (ML), is the key. Various types of machine learning algorithms exist, such as supervised, unsupervised, semi-supervised, and reinforcement learning. Besides, deep learning, which is part of a broader family of machine learning methods, can intelligently analyze data on a large scale. In this paper, we present a comprehensive view of these machine learning algorithms, which can be applied to enhance the intelligence and the capabilities of an application. Thus, this study’s key contribution is explaining the principles of different machine learning techniques and their applicability in various real-world application domains, such as cybersecurity systems, smart cities, healthcare, e-commerce, agriculture, and many more. We also highlight the challenges and potential research directions based on our study. Overall, this paper aims to serve as a reference point for both academia and industry professionals as well as for decision-makers in various real-world situations and application areas, particularly from the technical point of view.

Similar content being viewed by others

Deep Learning: A Comprehensive Overview on Techniques, Taxonomy, Applications and Research Directions

Machine learning and deep learning

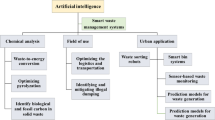

Artificial intelligence for waste management in smart cities: a review

Avoid common mistakes on your manuscript.

Introduction

We live in the age of data, where everything around us is connected to a data source, and everything in our lives is digitally recorded [ 21 , 103 ]. For instance, the current electronic world has a wealth of various kinds of data, such as Internet of Things (IoT) data, cybersecurity data, smart city data, business data, smartphone data, social media data, health data, COVID-19 data, and many more. The data, which are increasing day by day, can be structured, semi-structured, or unstructured, as discussed briefly in Sect. “Types of Real-World Data and Machine Learning Techniques”. Insights extracted from these data can be used to build various intelligent applications in the relevant domains. For instance, to build a data-driven automated and intelligent cybersecurity system, the relevant cybersecurity data can be used [ 105 ]; to build personalized context-aware smart mobile applications, the relevant mobile data can be used [ 103 ], and so on. Thus, data management tools and techniques capable of extracting insights or useful knowledge from the data in a timely and intelligent way, on which the real-world applications are based, are urgently needed.

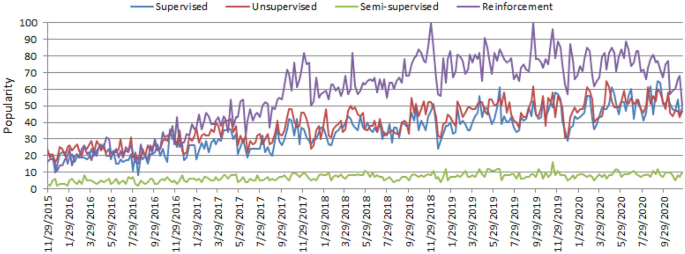

Fig. 1 The worldwide popularity score of various types of ML algorithms (supervised, unsupervised, semi-supervised, and reinforcement) in a range of 0 (min) to 100 (max) over time, where the x-axis represents the timestamp information and the y-axis represents the corresponding score

Artificial intelligence (AI), particularly machine learning (ML), has grown rapidly in recent years in the context of data analysis and computing, and typically allows applications to function in an intelligent manner [ 95 ]. ML usually provides systems with the ability to learn and improve from experience automatically without being specifically programmed, and is generally regarded as one of the most popular recent technologies in the fourth industrial revolution (4IR or Industry 4.0) [ 103 , 105 ]. “Industry 4.0” [ 114 ] is typically the ongoing automation of conventional manufacturing and industrial practices, including exploratory data processing, using new smart technologies such as machine learning automation. Thus, to intelligently analyze these data and to develop the corresponding real-world applications, machine learning algorithms are the key. The learning algorithms can be categorized into four major types, namely supervised, unsupervised, semi-supervised, and reinforcement learning [ 75 ], discussed briefly in Sect. “Types of Real-World Data and Machine Learning Techniques”. The popularity of these approaches to learning is increasing day by day, as shown in Fig. 1, based on data collected from Google Trends [ 4 ] over the last five years. The x-axis of the figure indicates the specific dates, and the corresponding popularity score, within the range of 0 (minimum) to 100 (maximum), is shown on the y-axis. According to Fig. 1, the popularity indication values for these learning types are low in 2015 and are increasing day by day. These statistics motivate us to study machine learning in this paper, which can play an important role in the real world through Industry 4.0 automation.

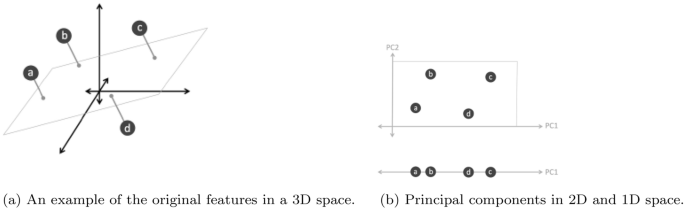

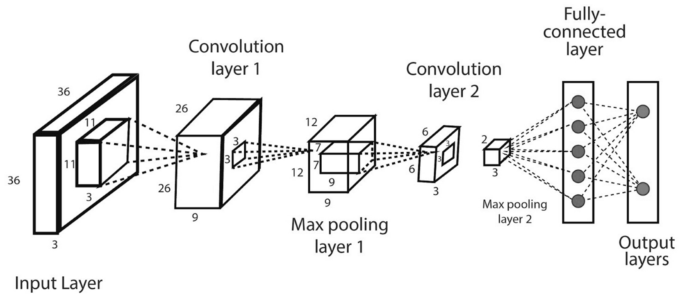

In general, the effectiveness and the efficiency of a machine learning solution depend on the nature and characteristics of the data and the performance of the learning algorithms. In the area of machine learning algorithms, classification analysis, regression, data clustering, feature engineering and dimensionality reduction, association rule learning, and reinforcement learning techniques exist to effectively build data-driven systems [ 41 , 125 ]. Besides, deep learning, which originated from the artificial neural network and is known as part of a wider family of machine learning approaches, can be used to intelligently analyze data [ 96 ]. Selecting a learning algorithm that is suitable for the target application in a particular domain is therefore challenging. The reason is that different learning algorithms serve different purposes, and even the outcomes of different learning algorithms in a similar category may vary depending on the data characteristics [ 106 ]. Thus, it is important to understand the principles of various machine learning algorithms and their applicability in various real-world application areas, such as IoT systems, cybersecurity services, business and recommendation systems, smart cities, healthcare and COVID-19, context-aware systems, sustainable agriculture, and many more, which are explained briefly in Sect. “Applications of Machine Learning”.

Based on the importance and potentiality of “Machine Learning” to analyze the data mentioned above, in this paper, we provide a comprehensive view on various types of machine learning algorithms that can be applied to enhance the intelligence and the capabilities of an application. Thus, the key contribution of this study is explaining the principles and potentiality of different machine learning techniques, and their applicability in various real-world application areas mentioned earlier. The purpose of this paper is, therefore, to provide a basic guide for those academia and industry people who want to study, research, and develop data-driven automated and intelligent systems in the relevant areas based on machine learning techniques.

The key contributions of this paper are listed as follows:

To define the scope of our study by taking into account the nature and characteristics of various types of real-world data and the capabilities of various learning techniques.

To provide a comprehensive view on machine learning algorithms that can be applied to enhance the intelligence and capabilities of a data-driven application.

To discuss the applicability of machine learning-based solutions in various real-world application domains.

To highlight and summarize the potential research directions within the scope of our study for intelligent data analysis and services.

The rest of the paper is organized as follows. The next section presents the types of data and machine learning algorithms in a broader sense and defines the scope of our study. We briefly discuss and explain different machine learning algorithms in the subsequent section followed by which various real-world application areas based on machine learning algorithms are discussed and summarized. In the penultimate section, we highlight several research issues and potential future directions, and the final section concludes this paper.

Types of Real-World Data and Machine Learning Techniques

Machine learning algorithms typically consume and process data to learn the related patterns about individuals, business processes, transactions, events, and so on. In the following, we discuss various types of real-world data as well as categories of machine learning algorithms.

Types of Real-World Data

Usually, the availability of data is considered as the key to construct a machine learning model or data-driven real-world systems [ 103 , 105 ]. Data can be of various forms, such as structured, semi-structured, or unstructured [ 41 , 72 ]. Besides, the “metadata” is another type that typically represents data about the data. In the following, we briefly discuss these types of data.

Structured: Structured data have a well-defined structure and conform to a data model following a standard order, which makes them highly organized and easily accessed and used by a person or a computer program. Structured data are typically stored in well-defined schemes such as relational databases, i.e., in a tabular format. For instance, names, dates, addresses, credit card numbers, stock information, geolocation, etc. are examples of structured data.

Unstructured: On the other hand, there is no pre-defined format or organization for unstructured data, which mostly contain text and multimedia material, making them much more difficult to capture, process, and analyze. For example, sensor data, emails, blog entries, wikis, word processing documents, PDF files, audio files, videos, images, presentations, web pages, and many other types of business documents can be considered as unstructured data.

Semi-structured: Semi-structured data are not stored in a relational database like the structured data mentioned above, but they do have certain organizational properties that make them easier to analyze. HTML, XML, JSON documents, NoSQL databases, etc., are some examples of semi-structured data.

Metadata: Metadata are not the normal form of data, but “data about data”. The primary difference between “data” and “metadata” is that data are simply the material that can classify, measure, or even document something relative to an organization’s data properties, whereas metadata describe the relevant data information, giving it more significance for data users. A basic example of a document’s metadata might be the author, the file size, the date the document was generated, keywords that describe the document, etc.

In the area of machine learning and data science, researchers use various widely used datasets for different purposes. These are, for example, cybersecurity datasets such as NSL-KDD [ 119 ], UNSW-NB15 [ 76 ], ISCX’12 [ 1 ], CIC-DDoS2019 [ 2 ], Bot-IoT [ 59 ], etc., smartphone datasets such as phone call logs [ 84 , 101 ], SMS Log [ 29 ], mobile application usages logs [ 137 ] [ 117 ], mobile phone notification logs [ 73 ] etc., IoT data [ 16 , 57 , 62 ], agriculture and e-commerce data [ 120 , 138 ], health data such as heart disease [ 92 ], diabetes mellitus [ 83 , 134 ], COVID-19 [ 43 , 74 ], etc., and many more in various application domains. The data can be in different types discussed above, which may vary from application to application in the real world. To analyze such data in a particular problem domain, and to extract the insights or useful knowledge from the data for building the real-world intelligent applications, different types of machine learning techniques can be used according to their learning capabilities, which is discussed in the following.

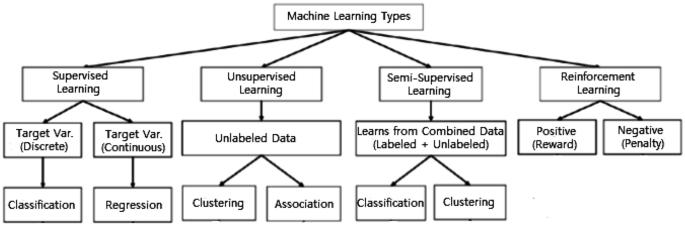

Types of Machine Learning Techniques

Machine Learning algorithms are mainly divided into four categories: Supervised learning, Unsupervised learning, Semi-supervised learning, and Reinforcement learning [ 75 ], as shown in Fig. 2 . In the following, we briefly discuss each type of learning technique with the scope of their applicability to solve real-world problems.

Fig. 2 Various types of machine learning techniques

Supervised: Supervised learning is typically the task of machine learning to learn a function that maps an input to an output based on sample input-output pairs [ 41 ]. It uses labeled training data and a collection of training examples to infer a function. Supervised learning is carried out when certain goals are identified to be accomplished from a certain set of inputs [ 105 ], i.e., a task-driven approach . The most common supervised tasks are “classification” that separates the data, and “regression” that fits the data. For instance, predicting the class label or sentiment of a piece of text, like a tweet or a product review, i.e., text classification, is an example of supervised learning.

Unsupervised: Unsupervised learning analyzes unlabeled datasets without the need for human interference, i.e., a data-driven process [ 41 ]. This is widely used for extracting generative features, identifying meaningful trends and structures, groupings in results, and exploratory purposes. The most common unsupervised learning tasks are clustering, density estimation, feature learning, dimensionality reduction, finding association rules, anomaly detection, etc.

Semi-supervised: Semi-supervised learning can be defined as a hybridization of the above-mentioned supervised and unsupervised methods, as it operates on both labeled and unlabeled data [ 41 , 105 ]. Thus, it falls between learning “without supervision” and learning “with supervision”. In the real world, labeled data could be rare in several contexts, and unlabeled data are numerous, where semi-supervised learning is useful [ 75 ]. The ultimate goal of a semi-supervised learning model is to provide a better outcome for prediction than that produced using the labeled data alone from the model. Some application areas where semi-supervised learning is used include machine translation, fraud detection, labeling data and text classification.

Reinforcement: Reinforcement learning is a type of machine learning algorithm that enables software agents and machines to automatically evaluate the optimal behavior in a particular context or environment to improve their efficiency [ 52 ], i.e., an environment-driven approach. This type of learning is based on reward or penalty, and its ultimate goal is to use insights obtained from interacting with the environment to take actions that increase the reward or minimize the risk [ 75 ]. It is a powerful tool for training AI models that can help increase automation or optimize the operational efficiency of sophisticated systems such as robotics, autonomous driving tasks, and manufacturing and supply chain logistics; however, it is not the preferred choice for solving basic or straightforward problems.

Thus, to build effective models in various application areas different types of machine learning techniques can play a significant role according to their learning capabilities, depending on the nature of the data discussed earlier, and the target outcome. In Table 1 , we summarize various types of machine learning techniques with examples. In the following, we provide a comprehensive view of machine learning algorithms that can be applied to enhance the intelligence and capabilities of a data-driven application.

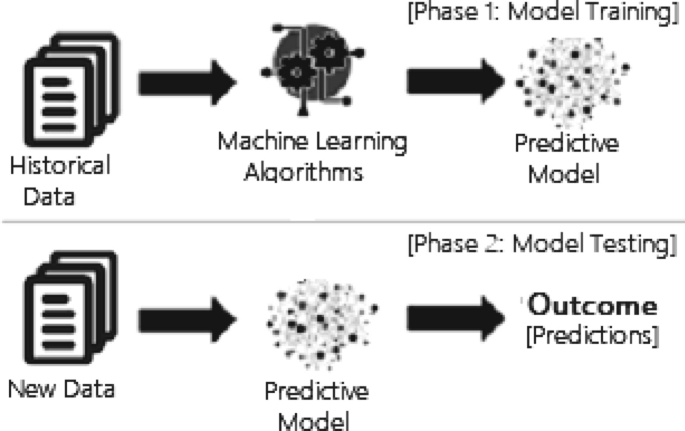

Machine Learning Tasks and Algorithms

In this section, we discuss various machine learning algorithms that include classification analysis, regression analysis, data clustering, association rule learning, feature engineering for dimensionality reduction, as well as deep learning methods. A general structure of a machine learning-based predictive model has been shown in Fig. 3 , where the model is trained from historical data in phase 1 and the outcome is generated in phase 2 for the new test data.

Fig. 3 A general structure of a machine learning based predictive model considering both the training and testing phase

Classification Analysis

Classification is a supervised learning method in machine learning and a predictive modeling problem in which a class label is predicted for a given example [ 41 ]. Mathematically, it learns a mapping function (f) from input variables (X) to output variables (Y), where the outputs are targets, labels, or categories. Classification can be carried out on structured or unstructured data. For example, spam detection in email services, with the classes “spam” and “not spam”, is a classification problem. In the following, we summarize the common classification problems.

Binary classification: It refers to the classification tasks having two class labels such as “true and false” or “yes and no” [ 41 ]. In such binary classification tasks, one class could be the normal state, while the abnormal state could be another class. For instance, “cancer not detected” is the normal state of a task that involves a medical test, and “cancer detected” could be considered as the abnormal state. Similarly, “spam” and “not spam” in the above example of email service providers are considered as binary classification.

Multiclass classification: Traditionally, this refers to those classification tasks having more than two class labels [ 41 ]. Unlike binary classification tasks, multiclass classification does not have the notion of normal and abnormal outcomes. Instead, examples are classified as belonging to one of a range of specified classes. For example, classifying the various types of network attacks in the NSL-KDD [ 119 ] dataset can be a multiclass classification task, where the attack categories are classified into four class labels: DoS (Denial of Service Attack), U2R (User to Root Attack), R2L (Remote to Local Attack), and Probing Attack.

Multi-label classification: In machine learning, multi-label classification is an important consideration where an example is associated with several classes or labels. Thus, it is a generalization of multiclass classification, where the classes involved in the problem are hierarchically structured, and each example may simultaneously belong to more than one class in each hierarchical level, e.g., multi-level text classification. For instance, Google news can be presented under the categories of a “city name”, “technology”, or “latest news”, etc. Multi-label classification includes advanced machine learning algorithms that support predicting various mutually non-exclusive classes or labels, unlike traditional classification tasks where class labels are mutually exclusive [ 82 ].
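The difference between the three problem types shows up most clearly in the shape of the target. The sketch below is illustrative only: the label values are taken from the examples above, while the helper `to_indicator` and the variable names are hypothetical.

```python
# Binary: each example carries one of exactly two labels.
binary_labels = ["spam", "not spam", "spam"]

# Multiclass: each example carries exactly one label out of more than two.
multiclass_labels = ["DoS", "Probing", "R2L", "U2R"]

# Multi-label: each example may carry several labels at once.
multilabel_targets = [{"technology", "latest news"}, {"city name"}]

def to_indicator(label_sets, classes):
    """Encode label sets as 0/1 indicator rows, one column per class."""
    return [[1 if c in labels else 0 for c in classes] for labels in label_sets]

classes = ["city name", "latest news", "technology"]
indicator = to_indicator(multilabel_targets, classes)
print(indicator)
```

In the indicator form, a row may contain more than one 1, which is exactly what distinguishes multi-label targets from multiclass targets.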

Many classification algorithms have been proposed in the machine learning and data science literature [ 41 , 125 ]. In the following, we summarize the most common and popular methods that are used widely in various application areas.

Naive Bayes (NB): The naive Bayes algorithm is based on Bayes’ theorem with the assumption of independence between each pair of features given the class [ 51 ]. It works well in many real-world situations, such as document or text classification and spam filtering, and can be used for both binary and multi-class problems. The NB classifier can be used to effectively classify noisy instances in the data and to construct a robust prediction model [ 94 ]. The key benefit is that, compared to more sophisticated approaches, it needs only a small amount of training data to estimate the necessary parameters, and it does so quickly [ 82 ]. However, its performance may suffer because of its strong assumption of feature independence. Gaussian, Multinomial, Complement, Bernoulli, and Categorical are the common variants of the NB classifier [ 82 ].
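To make the independence assumption concrete, here is a minimal multinomial naive Bayes sketch for the spam-filtering case. It is an illustration under stated assumptions, not the implementation used in any cited work: the function names, the tiny training set, and the choice of add-one (Laplace) smoothing are all assumptions.

```python
import math
from collections import Counter, defaultdict

def train_naive_bayes(documents):
    """documents: list of (tokens, label). Returns the counts needed for prediction."""
    class_counts = Counter()
    word_counts = defaultdict(Counter)
    vocabulary = set()
    for tokens, label in documents:
        class_counts[label] += 1
        word_counts[label].update(tokens)
        vocabulary.update(tokens)
    return class_counts, word_counts, vocabulary

def predict_naive_bayes(model, tokens):
    class_counts, word_counts, vocabulary = model
    total_docs = sum(class_counts.values())
    best_label, best_score = None, -math.inf
    for label in class_counts:
        # Log prior plus a sum of per-word log likelihoods (the independence assumption).
        score = math.log(class_counts[label] / total_docs)
        total_words = sum(word_counts[label].values())
        for token in tokens:
            # Add-one smoothing avoids zero probabilities for unseen words.
            count = word_counts[label][token] + 1
            score += math.log(count / (total_words + len(vocabulary)))
        if score > best_score:
            best_label, best_score = label, score
    return best_label

# Hypothetical training data.
training = [
    (["win", "money", "now"], "spam"),
    (["free", "money", "offer"], "spam"),
    (["meeting", "schedule", "today"], "not spam"),
    (["project", "meeting", "notes"], "not spam"),
]
model = train_naive_bayes(training)
print(predict_naive_bayes(model, ["free", "money"]))
```

Because the per-word likelihoods are simply multiplied (added in log space), training reduces to counting, which is why the method needs little data and runs quickly.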

Linear Discriminant Analysis (LDA): Linear Discriminant Analysis (LDA) is a linear decision boundary classifier created by fitting class conditional densities to data and applying Bayes’ rule [ 51 , 82 ]. This method is also known as a generalization of Fisher’s linear discriminant, which projects a given dataset into a lower-dimensional space, i.e., a reduction of dimensionality that minimizes the complexity of the model or reduces the resulting model’s computational costs. The standard LDA model usually fits a Gaussian density to each class, assuming that all classes share the same covariance matrix [ 82 ]. LDA is closely related to ANOVA (analysis of variance) and regression analysis, which seek to express one dependent variable as a linear combination of other features or measurements.

Logistic regression (LR): Another common probabilistic statistical model used to solve classification problems in machine learning is Logistic Regression (LR) [ 64 ]. Logistic regression typically uses a logistic function to estimate the probabilities, which is also referred to as the sigmoid function, \(g(z) = \frac{1}{1 + e^{-z}}\) (Eq. 1). It can overfit high-dimensional datasets and works well when the dataset can be separated linearly. The regularization (L1 and L2) techniques [ 82 ] can be used to avoid over-fitting in such scenarios. The assumption of linearity between the log-odds of the dependent variable and the independent variables is considered a major drawback of Logistic Regression. It can be used for both classification and regression problems, but it is more commonly used for classification.
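The role of the logistic (sigmoid) function can be sketched in a few lines: a linear score is squashed into a value between 0 and 1 and read as a probability. The weights, bias, feature values, and the 0.5 decision threshold below are hypothetical, and the function is not hardened against overflow for very large negative inputs.

```python
import math

def sigmoid(z):
    """Logistic function 1 / (1 + exp(-z)): maps a real number into (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def predict_probability(weights, bias, features):
    """Probability of the positive class for one example."""
    z = bias + sum(w * x for w, x in zip(weights, features))
    return sigmoid(z)

# Hypothetical, already-fitted parameters and one input example.
p = predict_probability([0.8, -0.4], 0.1, [2.0, 1.0])
label = 1 if p >= 0.5 else 0
print(p, label)
```

The decision boundary is the set of inputs where the linear score is zero, which is why the model works best when the classes are close to linearly separable.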

K-nearest neighbors (KNN): K-Nearest Neighbors (KNN) [ 9 ] is an “instance-based learning” or non-generalizing learning method, also known as a “lazy learning” algorithm. It does not focus on constructing a general internal model; instead, it stores all instances corresponding to the training data in n-dimensional space. KNN classifies new data points based on their similarity to the stored instances, using measures such as the Euclidean distance function [ 82 ]. Classification is computed from a simple majority vote of the k nearest neighbors of each point. It is quite robust to noisy training data, and accuracy depends on the data quality. The biggest issue with KNN is choosing the optimal number of neighbors to consider. KNN can be used for both classification and regression.
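The majority-vote step described above can be sketched directly, since KNN has no training phase beyond storing the data. The function name `knn_predict`, the sample points, and the choice of k = 3 are assumptions for illustration; `math.dist` requires Python 3.8 or later.

```python
import math
from collections import Counter

def knn_predict(training_points, query, k=3):
    """training_points: list of (feature_tuple, label). Majority vote of the k nearest."""
    by_distance = sorted(training_points, key=lambda item: math.dist(item[0], query))
    nearest_labels = [label for _, label in by_distance[:k]]
    return Counter(nearest_labels).most_common(1)[0][0]

# Hypothetical stored instances forming two well-separated groups.
points = [
    ((1, 1), "A"), ((1, 2), "A"), ((2, 1), "A"),
    ((6, 6), "B"), ((7, 6), "B"), ((6, 7), "B"),
]
print(knn_predict(points, (2, 2)))
print(knn_predict(points, (6.5, 6.5)))
```

Every prediction scans all stored points, so the cost grows with the size of the training set; this is the practical price of being a lazy learner.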

Support vector machine (SVM): In machine learning, another common technique that can be used for classification, regression, or other tasks is a support vector machine (SVM) [ 56 ]. In high- or infinite-dimensional space, a support vector machine constructs a hyper-plane or set of hyper-planes. Intuitively, the hyper-plane, which has the greatest distance from the nearest training data points in any class, achieves a strong separation since, in general, the greater the margin, the lower the classifier’s generalization error. It is effective in high-dimensional spaces and can behave differently based on different mathematical functions known as the kernel. Linear, polynomial, radial basis function (RBF), sigmoid, etc., are the popular kernel functions used in SVM classifier [ 82 ]. However, when the data set contains more noise, such as overlapping target classes, SVM does not perform well.

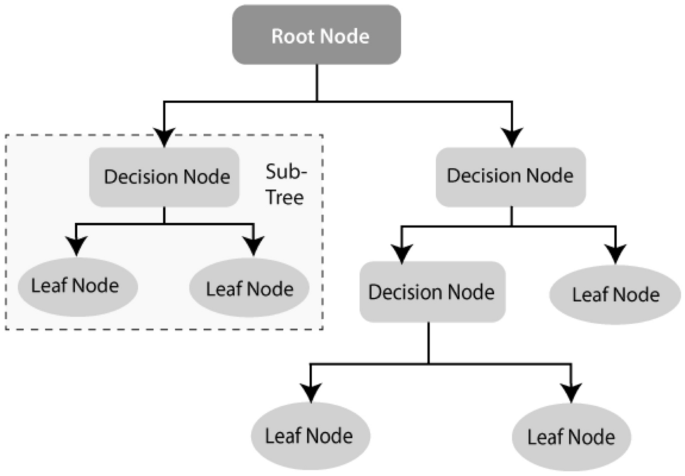

Decision tree (DT): Decision tree (DT) [ 88 ] is a well-known non-parametric supervised learning method. DT learning methods are used for both classification and regression tasks [ 82 ]. ID3 [ 87 ], C4.5 [ 88 ], and CART [ 20 ] are well-known DT algorithms. Moreover, the recently proposed BehavDT [ 100 ] and IntrudTree [ 97 ] by Sarker et al. are effective in the relevant application domains, namely user behavior analytics and cybersecurity analytics, respectively. DT classifies instances by sorting them down the tree from the root to some leaf node, as shown in Fig. 4. Starting at the root node of the tree, an instance is classified by checking the attribute defined by that node and then moving down the tree branch corresponding to the attribute value. For splitting, the most popular criteria are “gini” for the Gini impurity and “entropy” for the information gain [ 82 ]. With \(p_i\) denoting the proportion of examples of class \(i\) at a node and \(c\) the number of classes, these can be expressed mathematically as entropy \(H = -\sum_{i=1}^{c} p_i \log_2 p_i\) and Gini impurity \(G = 1 - \sum_{i=1}^{c} p_i^2\).

Fig. 4 An example of a decision tree structure
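The two splitting criteria named for decision trees, Gini impurity and entropy, can be computed from the class labels that reach a node. The sketch below uses the standard definitions (one minus the sum of squared class proportions, and the negative sum of each proportion times its base-2 logarithm); the function names are hypothetical.

```python
import math
from collections import Counter

def class_proportions(labels):
    """Fraction of the labels belonging to each class present at the node."""
    total = len(labels)
    return [count / total for count in Counter(labels).values()]

def gini_impurity(labels):
    """1 - sum(p_i^2): zero for a pure node, larger for mixed nodes."""
    return 1.0 - sum(p * p for p in class_proportions(labels))

def entropy(labels):
    """-sum(p_i * log2(p_i)): zero for a pure node, 1 bit for a 50/50 binary node."""
    return -sum(p * math.log2(p) for p in class_proportions(labels))

mixed = ["yes", "yes", "no", "no"]
pure = ["yes", "yes", "yes"]
print(gini_impurity(mixed), entropy(mixed))
print(gini_impurity(pure), abs(entropy(pure)))
```

A tree learner evaluates candidate splits by how much they reduce one of these measures; the reduction in entropy is what is usually called information gain.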

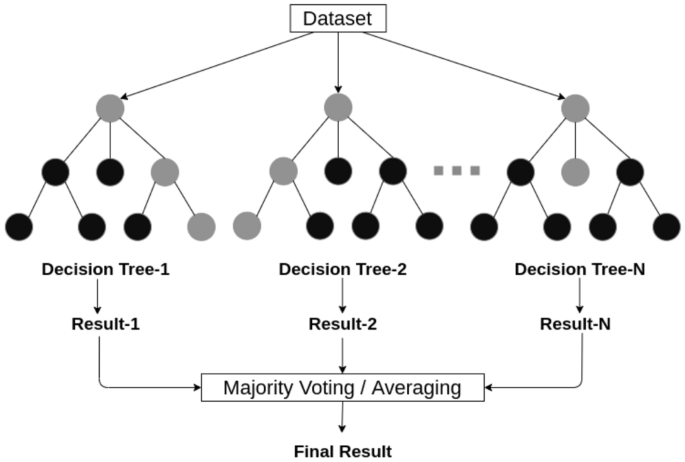

Fig. 5 An example of a random forest structure considering multiple decision trees

Random forest (RF): A random forest classifier [ 19 ] is well known as an ensemble classification technique that is used in the field of machine learning and data science in various application areas. This method uses “parallel ensembling” which fits several decision tree classifiers in parallel, as shown in Fig. 5 , on different data set sub-samples and uses majority voting or averages for the outcome or final result. It thus minimizes the over-fitting problem and increases the prediction accuracy and control [ 82 ]. Therefore, the RF learning model with multiple decision trees is typically more accurate than a single decision tree based model [ 106 ]. To build a series of decision trees with controlled variation, it combines bootstrap aggregation (bagging) [ 18 ] and random feature selection [ 11 ]. It is adaptable to both classification and regression problems and fits well for both categorical and continuous values.
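The combination of bootstrap sampling, random feature selection, and majority voting can be sketched as follows. This is a heavily simplified illustration and not a full random forest: each "tree" is a depth-one stump, one random feature is drawn per tree rather than per split, and all names, data, and the seed are assumptions.

```python
import random
from collections import Counter

def fit_stump(rows, feature):
    """Pick the threshold on one feature that misclassifies the fewest rows."""
    best = None
    for threshold in sorted({x[feature] for x, _ in rows}):
        left = [y for x, y in rows if x[feature] <= threshold]
        right = [y for x, y in rows if x[feature] > threshold]
        left_label = Counter(left).most_common(1)[0][0]
        right_label = Counter(right).most_common(1)[0][0] if right else left_label
        errors = sum(y != left_label for y in left) + sum(y != right_label for y in right)
        if best is None or errors < best[0]:
            best = (errors, feature, threshold, left_label, right_label)
    return best[1:]

def fit_forest(rows, n_trees=25, seed=0):
    rng = random.Random(seed)
    n_features = len(rows[0][0])
    forest = []
    for _ in range(n_trees):
        sample = [rng.choice(rows) for _ in rows]  # bootstrap: sample with replacement
        feature = rng.randrange(n_features)        # random feature selection
        forest.append(fit_stump(sample, feature))
    return forest

def predict_forest(forest, x):
    """Majority vote over the individual stump predictions."""
    votes = [left if x[f] <= t else right for f, t, left, right in forest]
    return Counter(votes).most_common(1)[0][0]

# Hypothetical two-feature data with two well-separated classes.
rows = [((1, 1), "low"), ((2, 1), "low"), ((1, 2), "low"), ((2, 2), "low"),
        ((8, 8), "high"), ((9, 8), "high"), ((8, 9), "high"), ((9, 9), "high")]
forest = fit_forest(rows)
print(predict_forest(forest, (1, 1)), predict_forest(forest, (9, 9)))
```

Each stump sees a slightly different sample and feature, so individual stumps can disagree; the vote averages out those differences, which is the intuition behind the reduced over-fitting.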

Adaptive Boosting (AdaBoost): Adaptive Boosting (AdaBoost) is an ensemble learning process that employs an iterative approach to improve weak classifiers by learning from their errors. It was developed by Yoav Freund et al. [ 35 ] and is also described as a “meta-learning” method. Unlike the random forest, which uses parallel ensembling, AdaBoost uses “sequential ensembling”. It combines many poorly performing classifiers into a single classifier of high accuracy. In that sense, AdaBoost is called an adaptive classifier, as it significantly improves the performance of the classifier, although in some instances it can overfit. AdaBoost is best used to boost the performance of decision trees, its usual base estimator [ 82 ], on binary classification problems; however, it is sensitive to noisy data and outliers.
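The way AdaBoost "learns from errors" is by reweighting the training examples after each weak classifier. The sketch below shows one such round using the standard binary formulation with labels in {-1, +1}; the function name and the example data are hypothetical, and the input weights are assumed to sum to 1.

```python
import math

def adaboost_round(weights, labels, predictions):
    """One AdaBoost reweighting step for labels and predictions in {-1, +1}."""
    # Weighted error of the weak classifier (weights are assumed to sum to 1).
    error = sum(w for w, y, h in zip(weights, labels, predictions) if y != h)
    # Clamp to avoid log(0) or division by zero for perfect or useless learners.
    error = min(max(error, 1e-10), 1 - 1e-10)
    alpha = 0.5 * math.log((1 - error) / error)  # vote strength of this classifier
    updated = [w * math.exp(-alpha * y * h)
               for w, y, h in zip(weights, labels, predictions)]
    total = sum(updated)
    return alpha, [w / total for w in updated]

labels = [+1, +1, -1, -1]
predictions = [+1, -1, -1, -1]  # the weak learner gets the second example wrong
weights = [0.25] * 4
alpha, new_weights = adaboost_round(weights, labels, predictions)
print(alpha, new_weights)
```

After the update the misclassified example carries half of the total weight, so the next weak classifier in the sequence is pushed to get it right. The same mechanism explains the sensitivity to outliers: a mislabeled point keeps gaining weight.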

Extreme gradient boosting (XGBoost): Gradient Boosting, like Random Forests [ 19 ] above, is an ensemble learning algorithm that generates a final model based on a series of individual models, typically decision trees. The gradient is used to minimize the loss function, similar to how neural networks [ 41 ] use gradient descent to optimize weights. Extreme Gradient Boosting (XGBoost) is a form of gradient boosting that takes more detailed approximations into account when determining the best model [ 82 ]. It computes second-order gradients of the loss function to minimize the loss and applies advanced regularization (L1 and L2) [ 82 ], which reduces over-fitting and improves model generalization and performance. XGBoost is fast and can handle large datasets well.
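How second-order gradients and L2 regularization interact can be sketched with the regularized Newton step that is commonly used to describe the value assigned to a single tree leaf, \(w^* = -G / (H + \lambda)\), where \(G\) and \(H\) are the sums of first and second derivatives of the loss over the instances in the leaf. The function names below are hypothetical and this is not the XGBoost library's code; the squared-error loss is an assumption chosen to keep the arithmetic simple.

```python
def leaf_weight(gradients, hessians, reg_lambda=1.0):
    """Regularized Newton step for one leaf: w* = -G / (H + lambda)."""
    g_sum, h_sum = sum(gradients), sum(hessians)
    return -g_sum / (h_sum + reg_lambda)


def squared_error_grad_hess(y_true, y_pred):
    """First and second derivatives of 0.5 * (y_pred - y_true)^2 w.r.t. y_pred."""
    grads = [p - y for y, p in zip(y_true, y_pred)]
    hess = [1.0] * len(y_true)
    return grads, hess


# Hypothetical leaf holding three instances, with current predictions of zero.
y_true = [3.0, 5.0, 4.0]
y_pred = [0.0, 0.0, 0.0]
grads, hess = squared_error_grad_hess(y_true, y_pred)

unregularized = leaf_weight(grads, hess, reg_lambda=0.0)  # equals the mean residual
regularized = leaf_weight(grads, hess, reg_lambda=1.0)    # shrunk toward zero
```

With \(\lambda = 0\) the leaf value is the mean residual; increasing \(\lambda\) shrinks it toward zero, which is one way the L2 penalty limits over-fitting.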

Stochastic gradient descent (SGD): Stochastic gradient descent (SGD) [ 41 ] is an iterative method for optimizing an objective function with appropriate smoothness properties, where the word ‘stochastic’ refers to random probability: each update uses the gradient computed on a randomly selected training example rather than on the full data set. This reduces the computational burden, particularly in high-dimensional optimization problems, allowing for faster iterations in exchange for a lower convergence rate. A gradient is the slope of a function; it measures how much one variable changes in response to changes in another. Mathematically, the gradient is the vector of partial derivatives of a function with respect to its input parameters. Let \(\alpha\) be the learning rate and \(J_i\) the cost of the \(i^\mathrm{th}\) training example; then Eq. ( 4 ) gives the stochastic gradient descent weight update at the \(j^\mathrm{th}\) iteration:

\[ w_j := w_j - \alpha \frac{\partial J_i}{\partial w_j} \tag{4} \]

In large-scale and sparse machine learning, SGD has been successfully applied to problems often encountered in text classification and natural language processing [ 82 ]. However, SGD is sensitive to feature scaling and requires a range of hyperparameters, such as the regularization parameter and the number of iterations.
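The per-example weight update can be sketched for a one-feature linear model. The squared-error cost \(J_i = \frac{1}{2}(w x_i + b - y_i)^2\), the function name, and the noise-free toy data are assumptions made for illustration.

```python
import random


def sgd_linear(xs, ys, alpha=0.05, epochs=500, seed=0):
    """Fit y ~ w * x + b with one weight update per training example."""
    rng = random.Random(seed)
    w, b = 0.0, 0.0
    indices = list(range(len(xs)))
    for _ in range(epochs):
        rng.shuffle(indices)              # visit examples in random order
        for i in indices:
            error = (w * xs[i] + b) - ys[i]
            # Gradient of J_i = 0.5 * error^2 with respect to w and b.
            w -= alpha * error * xs[i]
            b -= alpha * error
    return w, b


# Hypothetical noise-free data generated from y = 2x + 1.
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [2.0 * x + 1.0 for x in xs]
w, b = sgd_linear(xs, ys)
```

On this data the estimates approach `w = 2` and `b = 1`. Rescaling `xs` by a large factor would require a smaller `alpha` to keep the updates stable, which illustrates the sensitivity to feature scaling.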

Rule-based classification: The term rule-based classification can be used to refer to any classification scheme that makes use of IF-THEN rules for class prediction. Several classification algorithms with the ability to generate rules exist, such as Zero-R [ 125 ], One-R [ 47 ], decision trees [ 87 , 88 ], DTNB [ 110 ], Ripple Down Rule learner (RIDOR) [ 125 ], and Repeated Incremental Pruning to Produce Error Reduction (RIPPER) [ 126 ]. The decision tree is one of the most common rule-based classification algorithms among these techniques because it has several advantages: it is easier to interpret, can handle high-dimensional data, is simple and fast, gives good accuracy, and can produce classification rules that are clear and understandable to humans [ 127 ] [ 128 ]. The decision tree-based rules also provide significant accuracy in a prediction model for unseen test cases [ 106 ]. Since the rules are easily interpretable, these rule-based classifiers are often used to produce descriptive models that can describe a system, including its entities and their relationships.

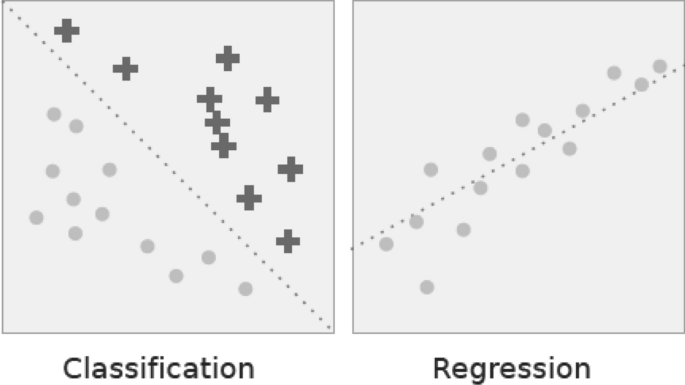

Classification vs. regression. In classification the dotted line represents a linear boundary that separates the two classes; in regression, the dotted line models the linear relationship between the two variables

Regression Analysis

Regression analysis includes several machine learning methods that make it possible to predict a continuous outcome variable ( y ) based on the value of one or more predictor variables ( x ) [ 41 ]. The most significant distinction between classification and regression is that classification predicts distinct class labels, while regression predicts a continuous quantity. Figure 6 shows an example of how classification differs from regression. Some overlaps are often found between the two types of machine learning algorithms. Regression models are now widely used in a variety of fields, including financial forecasting or prediction, cost estimation, trend analysis, marketing, time series estimation, drug response modeling, and many more. Some of the familiar types of regression algorithms are linear, polynomial, lasso, and ridge regression, which are explained briefly in the following.

Simple and multiple linear regression: This is one of the most popular ML modeling techniques as well as a well-known regression technique. In this technique, the dependent variable is continuous, the independent variable(s) can be continuous or discrete, and the form of the regression line is linear. Linear regression establishes a relationship between the dependent variable ( Y ) and one or more independent variables ( X ) using the best-fit straight line, also known as the regression line [ 41 ]. It is defined by the following equations:

\[ y = a + bx + e \tag{5} \]

\[ y = a + b_1 x_1 + b_2 x_2 + \cdots + b_n x_n + e \tag{6} \]

where a is the intercept, b is the slope of the line, and e is the error term. This equation can be used to predict the value of the target variable based on the given predictor variable(s). Multiple linear regression is an extension of simple linear regression that allows two or more predictor variables to model a response variable, y, as a linear function [ 41 ], as defined in Eq. 6, whereas simple linear regression has only one independent variable, as defined in Eq. 5.
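For the simple (one-predictor) case, the intercept a and slope b of the best-fit line can be computed in closed form by ordinary least squares. The sketch below assumes that fitting criterion; the function name and the data values are hypothetical.

```python
def fit_simple_linear(xs, ys):
    """Ordinary least-squares estimates of intercept a and slope b in y = a + b*x + e."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    b = sxy / sxx
    a = mean_y - b * mean_x
    return a, b


# Hypothetical observations that lie close to a straight line.
xs = [1.0, 2.0, 3.0, 4.0, 5.0]
ys = [2.2, 4.1, 6.2, 7.9, 10.1]
a, b = fit_simple_linear(xs, ys)

# The error term e for each observation is the residual of the fitted line.
residuals = [y - (a + b * x) for x, y in zip(xs, ys)]
```

The residuals of a least-squares fit with an intercept sum to zero, which is a quick sanity check on the estimates.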

Polynomial regression: Polynomial regression is a form of regression analysis in which the relationship between the independent variable x and the dependent variable y is not linear but is modeled as an \(n^\mathrm{th}\) degree polynomial in x [ 82 ]. The equation for polynomial regression is derived from the linear regression equation (polynomial regression of degree 1) and is defined as follows:

\[ y = b_0 + b_1 x + b_2 x^2 + \cdots + b_n x^n + e \]

Here, y is the predicted/target output, \(b_0, b_1, \ldots, b_n\) are the regression coefficients, x is an independent/input variable, and e is the error term. In simple words, if the data are not distributed linearly but instead follow an \(n^\mathrm{th}\) degree polynomial, then polynomial regression is used to obtain the desired output.
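Because the model is still linear in its coefficients, a polynomial can be fitted with the same least-squares machinery as linear regression, using powers of x as predictors. The following sketch solves the normal equations with a small Gaussian-elimination helper; the function names and data are assumptions for illustration, and a numerical library would normally be preferred for larger problems.

```python
def solve(matrix, rhs):
    """Solve a small linear system by Gaussian elimination with partial pivoting."""
    n = len(rhs)
    aug = [list(row) + [r] for row, r in zip(matrix, rhs)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for r in range(col + 1, n):
            factor = aug[r][col] / aug[col][col]
            for c in range(col, n + 1):
                aug[r][c] -= factor * aug[col][c]
    solution = [0.0] * n
    for r in range(n - 1, -1, -1):  # back substitution
        known = sum(aug[r][c] * solution[c] for c in range(r + 1, n))
        solution[r] = (aug[r][n] - known) / aug[r][r]
    return solution


def fit_polynomial(xs, ys, degree):
    """Least-squares coefficients [b_0, ..., b_n] from the normal equations."""
    powers = range(degree + 1)
    xtx = [[sum(x ** (i + j) for x in xs) for j in powers] for i in powers]
    xty = [sum((x ** i) * y for x, y in zip(xs, ys)) for i in powers]
    return solve(xtx, xty)


# Hypothetical data generated exactly from y = 1 + 2x + 3x^2.
xs = [-2, -1, 0, 1, 2]
ys = [1 + 2 * x + 3 * x ** 2 for x in xs]
coefficients = fit_polynomial(xs, ys, degree=2)
```

Here `coefficients` recovers the generating values `b_0 = 1`, `b_1 = 2`, `b_2 = 3` up to rounding.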

LASSO and ridge regression: LASSO and ridge regression are well known as powerful techniques that are typically used for building learning models in the presence of a large number of features, owing to their ability to prevent over-fitting and reduce model complexity. The LASSO (least absolute shrinkage and selection operator) regression model uses the L 1 regularization technique [ 82 ], a form of shrinkage that penalizes the “absolute value of magnitude of coefficients” ( L 1 penalty). As a result, LASSO tends to shrink some coefficients to exactly zero. Thus, LASSO regression aims to find the subset of predictors that minimizes the prediction error for a quantitative response variable. On the other hand, ridge regression uses L 2 regularization [ 82 ], which penalizes the “squared magnitude of coefficients” ( L 2 penalty). Thus, ridge regression forces the weights to be small but never sets a coefficient to zero, and so yields a non-sparse solution. Overall, LASSO regression is useful for obtaining a subset of predictors by eliminating less important features, and ridge regression is useful when a data set has “multicollinearity”, which refers to predictors that are correlated with other predictors.
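The different effects of the two penalties are easiest to see in the special case of orthonormal predictors, where each penalized coefficient has a closed form in terms of the unpenalized least-squares coefficient b. The sketch below assumes that special case and the objective scalings stated in the docstrings; the function names and coefficient values are hypothetical.

```python
def lasso_shrink(b, lam):
    """Minimizer of 0.5 * (b - beta)^2 + lam * |beta| (soft-thresholding)."""
    if b > lam:
        return b - lam
    if b < -lam:
        return b + lam
    return 0.0


def ridge_shrink(b, lam):
    """Minimizer of 0.5 * (b - beta)^2 + 0.5 * lam * beta^2 (proportional shrinkage)."""
    return b / (1.0 + lam)


# Hypothetical unpenalized coefficients: two strong and two weak predictors.
ols = [3.0, -0.4, 0.1, -2.0]
lam = 0.5
lasso = [lasso_shrink(b, lam) for b in ols]
ridge = [ridge_shrink(b, lam) for b in ols]
```

With these values the L1 penalty sets the two weak coefficients exactly to zero, whereas the L2 penalty scales every coefficient down but leaves all of them non-zero.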

Cluster Analysis

Cluster analysis, also known as clustering, is an unsupervised machine learning technique for identifying and grouping related data points in large datasets without concern for the specific outcome. It groups a collection of objects in such a way that objects in the same group, called a cluster, are in some sense more similar to each other than to objects in other groups [ 41 ]. It is often used as a data analysis technique to discover interesting trends or patterns in data, e.g., groups of consumers based on their behavior. Clustering can be used in a broad range of application areas, such as cybersecurity, e-commerce, mobile data processing, health analytics, user modeling, and behavioral analytics. In the following, we briefly discuss and summarize various types of clustering methods.

Partitioning methods: Based on the features and similarities in the data, this clustering approach categorizes the data into multiple groups or clusters. Data scientists or analysts typically determine the number of clusters to produce, either dynamically or statically, depending on the nature of the target application. The most common clustering algorithms based on partitioning methods are K-means [ 69 ], K-medoids [ 80 ], CLARA [ 55 ], etc.

Density-based methods: To identify distinct groups or clusters, this approach uses the concept that a cluster in the data space is a contiguous region of high point density, isolated from other such clusters by contiguous regions of low point density. Points that are not part of a cluster are considered noise. The typical clustering algorithms based on density are DBSCAN [ 32 ], OPTICS [ 12 ], etc. Density-based methods typically struggle with clusters of varying density and with high-dimensional data.

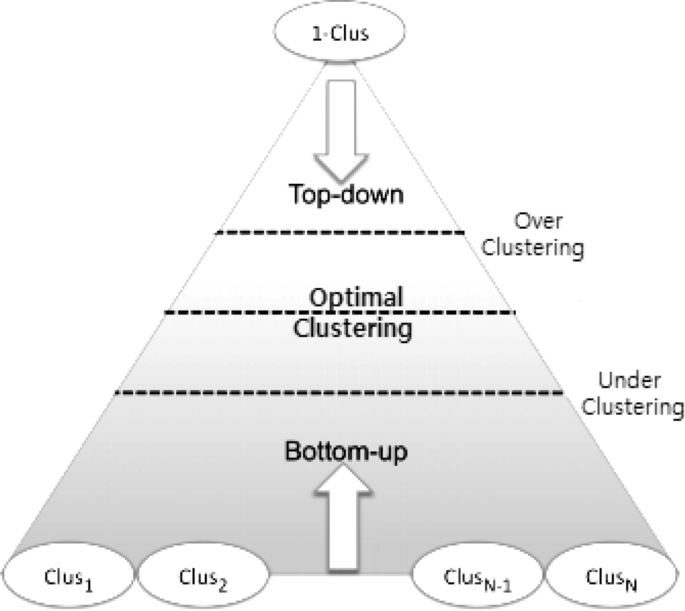

Hierarchical-based methods: Hierarchical clustering typically seeks to construct a hierarchy of clusters, i.e., a tree structure. Strategies for hierarchical clustering generally fall into two types: (i) Agglomerative: a “bottom-up” approach in which each observation begins in its own cluster and pairs of clusters are merged as one moves up the hierarchy, and (ii) Divisive: a “top-down” approach in which all observations begin in one cluster and splits are performed recursively as one moves down the hierarchy, as shown in Fig. 7. Our earlier proposed BOTS technique, Sarker et al. [ 102 ], is an example of a hierarchical, specifically bottom-up, clustering algorithm.
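The agglomerative (bottom-up) strategy can be sketched in a few lines for one-dimensional data. Single linkage (the distance between the closest members of two clusters) is an assumption chosen for brevity, as are the function name and the sample points; this is not the BOTS technique.

```python
def agglomerative(points, n_clusters):
    """Merge the two closest clusters repeatedly until `n_clusters` remain."""
    clusters = [[p] for p in points]      # every observation starts in its own cluster
    merges = []                           # merge history, i.e., the hierarchy
    while len(clusters) > n_clusters:
        best = None
        for i in range(len(clusters)):
            for j in range(i + 1, len(clusters)):
                # Single linkage: distance between the closest pair of members.
                dist = min(abs(a - b) for a in clusters[i] for b in clusters[j])
                if best is None or dist < best[0]:
                    best = (dist, i, j)
        dist, i, j = best
        merges.append((clusters[i], clusters[j], dist))
        clusters[i] = clusters[i] + clusters[j]
        del clusters[j]
    return clusters, merges


# Hypothetical one-dimensional observations.
points = [1.0, 1.5, 5.0, 5.2, 9.0]
clusters, merges = agglomerative(points, n_clusters=3)
```

The `merges` list records which clusters were combined and at what distance, which is the information a dendrogram displays.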

Grid-based methods: To deal with massive datasets, grid-based clustering is especially suitable. To obtain clusters, the principle is first to summarize the dataset with a grid representation and then to combine grid cells. STING [ 122 ], CLIQUE [ 6 ], etc. are the standard algorithms of grid-based clustering.

Model-based methods: There are mainly two types of model-based clustering algorithms: one that uses statistical learning, and the other based on a method of neural network learning [ 130 ]. For instance, GMM [ 89 ] is an example of a statistical learning method, and SOM [ 22 ] [ 96 ] is an example of a neural network learning method.

Constraint-based methods: Constraint-based clustering is a semi-supervised approach to data clustering that uses constraints to incorporate domain knowledge. Application or user-oriented constraints are incorporated to perform the clustering. The typical algorithms of this kind of clustering are COP K-means [ 121 ], CMWK-Means [ 27 ], etc.

A graphical interpretation of the widely-used hierarchical clustering (Bottom-up and top-down) technique

Many clustering algorithms with the ability to group data have been proposed in the machine learning and data science literature [ 41 , 125 ]. In the following, we summarize the popular methods that are used widely in various application areas.

K-means clustering: K-means clustering [ 69 ] is a fast, robust, and simple algorithm that provides reliable results when the clusters in a data set are well separated from each other. In this algorithm, the data points are allocated to clusters in such a way that the sum of the squared distances between the data points and their centroid is as small as possible. In other words, the K-means algorithm identifies k centroids and then assigns each data point to the nearest cluster while keeping the clusters as compact as possible. Since it begins with a random selection of cluster centers, the results can be inconsistent from run to run. Since extreme values can easily affect a mean, the K-means clustering algorithm is sensitive to outliers. K-medoids clustering [ 91 ] is a variant of K-means that is more robust to noise and outliers.
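The alternation between assigning points to the nearest centroid and recomputing each centroid as a cluster mean can be sketched as follows. This is a minimal version of the usual iterative procedure; the function names, the seed, and the toy points are assumptions for illustration.

```python
import random


def sq_dist(p, q):
    """Squared Euclidean distance between two points."""
    return sum((a - b) ** 2 for a, b in zip(p, q))


def mean(points):
    """Coordinate-wise mean of a non-empty list of points."""
    n = len(points)
    return tuple(sum(coords) / n for coords in zip(*points))


def kmeans(points, k, n_iter=100, seed=0):
    rng = random.Random(seed)
    centroids = rng.sample(points, k)          # random initial cluster centers
    for _ in range(n_iter):
        # Assignment step: each point joins the cluster of its nearest centroid.
        clusters = [[] for _ in range(k)]
        for p in points:
            nearest = min(range(k), key=lambda c: sq_dist(p, centroids[c]))
            clusters[nearest].append(p)
        # Update step: each centroid moves to the mean of its cluster.
        new_centroids = [mean(cluster) if cluster else centroids[c]
                         for c, cluster in enumerate(clusters)]
        if new_centroids == centroids:         # assignments can no longer change
            break
        centroids = new_centroids
    return centroids, clusters


# Hypothetical two-dimensional data with two well-separated groups.
group_a = [(1.0, 1.0), (1.5, 2.0), (1.0, 1.5)]
group_b = [(8.0, 8.0), (9.0, 8.5), (8.5, 9.0)]
centroids, clusters = kmeans(group_a + group_b, k=2)
```

Changing `seed` changes the initial centers; on less cleanly separated data this can lead to different final clusters, which is the inconsistency noted above.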

Mean-shift clustering: Mean-shift clustering [ 37 ] is a nonparametric clustering technique that does not require prior knowledge of the number of clusters or constraints on cluster shape. Mean-shift clustering aims to discover “blobs” in a smooth distribution or density of samples [ 82 ]. It is a centroid-based algorithm that works by updating centroid candidates to be the mean of the points in a given region. To form the final set of centroids, these candidates are filtered in a post-processing stage to remove near-duplicates. Application domains include cluster analysis in computer vision and image processing. Mean shift has the disadvantage of being computationally expensive. Moreover, in high-dimensional cases, where the number of clusters shifts abruptly, the mean-shift algorithm does not work well.

DBSCAN: Density-based spatial clustering of applications with noise (DBSCAN) [ 32 ] is a base algorithm for density-based clustering that is widely used in data mining and machine learning. It is a non-parametric density-based clustering technique, used in model building, for separating high-density clusters from low-density clusters. DBSCAN’s main idea is that a point belongs to a cluster if it is close to many points from that cluster. It can find clusters of various shapes and sizes in a vast volume of data that is noisy and contains outliers. Unlike k-means, DBSCAN does not require a priori specification of the number of clusters in the data and can find arbitrarily shaped clusters. Although k-means is much faster than DBSCAN, DBSCAN is efficient at finding high-density regions and outliers, i.e., it is robust to outliers.
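The idea that a point joins a cluster when it is close to many of that cluster's points can be made concrete with a compact sketch of the usual DBSCAN procedure. The parameter names `eps` (neighborhood radius) and `min_pts` (minimum neighbors for a dense "core" point) and the toy data are assumptions for illustration; the brute-force neighbor search is only practical for small inputs.

```python
import math


def dbscan(points, eps, min_pts):
    """Return one label per point: a cluster id (0, 1, ...) or -1 for noise."""
    def neighbors(i):
        return [j for j, q in enumerate(points) if math.dist(points[i], q) <= eps]

    labels = [None] * len(points)
    cluster = -1
    for i in range(len(points)):
        if labels[i] is not None:
            continue
        seeds = neighbors(i)
        if len(seeds) < min_pts:
            labels[i] = -1               # provisionally noise; may become a border point
            continue
        cluster += 1                     # point i is a core point: start a new cluster
        labels[i] = cluster
        queue = [j for j in seeds if j != i]
        while queue:
            j = queue.pop()
            if labels[j] == -1:
                labels[j] = cluster      # noise reachable from a core point is a border point
            if labels[j] is not None:
                continue
            labels[j] = cluster
            j_neighbors = neighbors(j)
            if len(j_neighbors) >= min_pts:   # j is also a core point: keep expanding
                queue.extend(j_neighbors)
    return labels


# Hypothetical data: two dense squares and one isolated point between them.
points = [(0, 0), (0, 1), (1, 0), (1, 1),
          (5, 5),
          (10, 10), (10, 11), (11, 10), (11, 11)]
labels = dbscan(points, eps=1.5, min_pts=3)
```

No number of clusters is passed in; the two clusters and the noise point follow from `eps` and `min_pts` alone.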

GMM clustering: Gaussian mixture models (GMMs), a distribution-based clustering approach, are often used for data clustering. A Gaussian mixture model is a probabilistic model in which all the data points are assumed to be generated by a mixture of a finite number of Gaussian distributions with unknown parameters [ 82 ]. To find the Gaussian parameters for each cluster, an optimization algorithm called expectation-maximization (EM) [ 82 ] can be used. EM is an iterative method that uses a statistical model to estimate the parameters. In contrast to k-means, Gaussian mixture models account for uncertainty and return the probability that a data point belongs to each of the k clusters. GMM clustering is more robust than k-means and works well even with non-linear data distributions.
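The EM iteration and the resulting soft cluster memberships can be sketched for a one-dimensional mixture. The function names, the initial means, the variance floor, and the data are assumptions made for illustration; a practical implementation would also monitor the log-likelihood for convergence.

```python
import math


def gaussian_pdf(x, mean, var):
    return math.exp(-((x - mean) ** 2) / (2 * var)) / math.sqrt(2 * math.pi * var)


def responsibilities(xs, weights, means, variances):
    """E-step: probability that each point was generated by each component."""
    table = []
    for x in xs:
        dens = [w * gaussian_pdf(x, m, v) for w, m, v in zip(weights, means, variances)]
        total = sum(dens)
        table.append([d / total for d in dens])
    return table


def fit_gmm_1d(xs, init_means, n_iter=50):
    k = len(init_means)
    means, variances, weights = list(init_means), [1.0] * k, [1.0 / k] * k
    for _ in range(n_iter):
        resp = responsibilities(xs, weights, means, variances)
        for c in range(k):  # M-step: re-estimate each component from its soft members
            n_c = sum(r[c] for r in resp)
            weights[c] = n_c / len(xs)
            means[c] = sum(r[c] * x for r, x in zip(resp, xs)) / n_c
            var = sum(r[c] * (x - means[c]) ** 2 for r, x in zip(resp, xs)) / n_c
            variances[c] = max(var, 1e-6)  # floor to avoid a degenerate component
    return weights, means, variances


# Hypothetical data drawn around two centers, near 1 and near 5.
xs = [1.0, 1.2, 0.8, 1.1, 0.9, 5.0, 5.2, 4.8, 5.1, 4.9]
weights, means, variances = fit_gmm_1d(xs, init_means=(0.0, 6.0))
soft = responsibilities(xs, weights, means, variances)
```

Each row of `soft` sums to one and gives the membership probabilities of one data point, in contrast to the single hard label that k-means would assign.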