Chapter Twelve: Positing a Thesis Statement and Composing a Title / Defining Key Terms

Defining Key Terms

Earlier in this course, we discussed how to conduct a library search using key terms. Here we discuss how to present key terms. Place yourself in your audience’s position and try to anticipate their need for information. Is your audience composed mostly of novices or professionals? If they are novices, you will need to provide more definition and context for your key concepts and terms.

Because disciplinary knowledge is filled with specialized terms, an ordinary dictionary is of limited value. Disciplines like psychology, cultural studies, and history use terms in ways that are often different from the way we communicate in daily life. Some disciplines have their own dictionaries of key terms. Others may have terms scattered throughout glossaries in important primary texts and textbooks.

Key terms are the “means of exchange” in disciplines. You gain entry into the discussion by demonstrating how well you know and understand them. Some disciplinary keywords can be tricky because they mean one thing in ordinary speech but can mean something different in the discipline. For instance, in ordinary speech, we use the word shadow to refer to a darker area produced by an object or person between a light source and a surface. In Jungian psychology, shadow refers to the unconscious or unknown aspects of a personality. Sometimes there is debate within a discipline about what key terms mean or how they should be used.

To avoid confusion, define all key terms in your paper before you begin a discussion about them. Even if you think your audience knows the definition of key terms, readers want to see how you understand the terms before you move ahead. If a definition is contested—meaning different writers define the term in different ways—make sure you acknowledge these differences and explain why you favor one definition over the others. Cite your sources when presenting key terms and concepts.

Key Takeaways

| Do | Don’t |
| --- | --- |
| Define key terms | Present key terms without definitions |
| Look for definitions of key terms in disciplinary texts before consulting general-use dictionaries | Assume that ordinary dictionaries will provide you with the best definitions of disciplinary terms |
| Explore the history of the term to see if its meaning has changed over time | Assume that the meaning of a term has stayed the same over years, decades, or centuries |
| If the meaning of a term is contested, present these contested definitions to your reader and explain why you favor one over the others | Present a contested term without explanation |
| Even if you think your audience knows the term, assume they care what your understanding is | Assume your audience doesn’t care about your understanding of a key term |

Strategies for Conducting Literary Research Copyright © 2021 by Barry Mauer & John Venecek is licensed under a Creative Commons Attribution-NonCommercial-ShareAlike 4.0 International License, except where otherwise noted.

Confusion to Clarity: Definition of Terms in a Research Paper

Explore the definition of terms section of a research paper to better understand key scientific terminology.

Have you ever come across a research paper and found yourself scratching your head over complex vocabulary and unfamiliar terms? It’s a hassle to fetch a dictionary and riffle through it to find what each term means.

To avoid that, many papers include a dedicated section called the ‘Definition of Terms’, which defines the terms used in the paper. Let us learn more about it in this article.

What Is The “Definition Of Terms” In A Research Paper?

The definition of terms section in a research paper provides a clear and concise explanation of key concepts, variables, and terminology used throughout the study.

In the definition of terms section, researchers typically provide precise definitions for specific technical terms, acronyms, jargon, and any other domain-specific vocabulary used in their work. This section enhances the overall quality and rigor of the research by establishing a solid foundation for communication and understanding.

Purpose Of Definition Of Terms In A Research Paper

This section aims to ensure that readers have a common understanding of the terminology employed in the research, eliminating confusion and promoting clarity. The definitions provided serve as a reference point for readers, enabling them to comprehend the context and scope of the study. It serves several important purposes:

- Enhancing clarity

- Establishing a shared language

- Providing a reference point

- Setting the scope and context

- Ensuring consistency

Benefits Of Having A Definition Of Terms In A Research Paper

Having a definition of terms section in a research paper offers several benefits that contribute to the overall quality and effectiveness of the study. These benefits include:

Clarity And Comprehension

Clear definitions enable readers to understand the specific meanings of key terms, concepts, and variables used in the research. This promotes clarity and enhances comprehension, ensuring that readers can follow the study’s arguments, methods, and findings more easily.

Consistency And Precision

Definitions provide a consistent framework for the use of terminology throughout the research paper. By clearly defining terms, researchers establish a standard vocabulary, reducing ambiguity and potential misunderstandings. This precision enhances the accuracy and reliability of the study’s findings.

Common Understanding

The definition of terms section helps establish a shared understanding among readers, including those from different disciplines or with varying levels of familiarity with the subject matter. It ensures that readers approach the research with a common knowledge base, facilitating effective communication and interpretation of the results.

Avoiding Misinterpretation

Without clear definitions, readers may interpret terms and concepts differently, leading to misinterpretation of the research findings. By providing explicit definitions, researchers minimize the risk of misunderstandings and ensure that readers grasp the intended meaning of the terminology used in the study.

Accessibility For Diverse Audiences

Research papers are often read by a wide range of individuals, including researchers, students, policymakers, and professionals. Having a definition of terms in a research paper helps the diverse audience understand the concepts better and make appropriate decisions.

Types Of Definitions

There are several types of definitions that researchers can employ in a research paper, depending on the context and nature of the study. Here are some common types of definitions:

Lexical Definitions

Lexical definitions provide the dictionary or commonly accepted meaning of a term. They offer a concise and widely recognized explanation of a word or concept. Lexical definitions are useful for establishing a baseline understanding of a term, especially when dealing with everyday language or non-technical terms.

Operational Definitions

Operational definitions define a term or concept in terms of how it is measured or observed in the study. These definitions specify the procedures, instruments, or criteria used to operationalize an abstract or theoretical concept. Operational definitions help ensure clarity and consistency in data collection and measurement.

Conceptual Definitions

Conceptual definitions provide an abstract or theoretical understanding of a term or concept within a specific research context. They often involve a more detailed and nuanced explanation, exploring the underlying principles, theories, or models that inform the concept. Conceptual definitions are useful for establishing a theoretical framework and promoting deeper understanding.

Descriptive Definitions

Descriptive definitions describe a term or concept by providing characteristics, features, or attributes associated with it. These definitions focus on outlining the essential qualities or elements that define the term. Descriptive definitions help readers grasp the nature and scope of a concept by painting a detailed picture.

Theoretical Definitions

Theoretical definitions explain a term or concept based on established theories or conceptual frameworks. They situate the concept within a broader theoretical context, connecting it to relevant literature and existing knowledge. Theoretical definitions help researchers establish the theoretical underpinnings of their study and provide a foundation for further analysis.

Types Of Terms

In research papers, various types of terms can be identified based on their nature and usage. Here are some common types of terms:

Key Term

A key term is a term that holds significant importance or plays a crucial role within the context of a research paper. It is a term that encapsulates a core concept, idea, or variable that is central to the study. Key terms are often essential for understanding the research objectives, methodology, findings, and conclusions.

Technical Term

Technical terms refer to specialized vocabulary or terminology used within a specific field of study. These terms are often precise and have specific meanings within their respective disciplines. Examples include “allele,” “hypothesis testing,” or “algorithm.”

Legal Terms

Legal terms are specific vocabulary used within the legal field to describe concepts, principles, and regulations. These terms have particular meanings within the legal context. Examples include “defendant,” “plaintiff,” “due process,” or “jurisdiction.”

Definitional Term

A definitional term refers to a word or phrase that requires an explicit definition to ensure clarity and understanding within a particular context. These terms may be technical, abstract, or have multiple interpretations.

Career Privacy Term

A career privacy term refers to a concept or idea related to the privacy of individuals in the context of their professional or occupational activities. It encompasses the protection of personal information and confidential data, and the right to control the disclosure of sensitive career-related details.

Broad Term

A broad term is a term that encompasses a wide range of related concepts, ideas, or objects. It has a broader scope and may encompass multiple subcategories or specific examples.

Steps To Writing Definitions Of Terms

When writing the definition of terms section for a research paper, you can follow these steps to ensure clarity and accuracy:

Step 1: Identify Key Terms

Review your research paper and identify the key terms that require definition. These terms are typically central to your study, specific to your field or topic, or may have different interpretations.

Step 2: Conduct Research

Conduct thorough research on each key term to understand its commonly accepted definition, usage, and any variations or nuances within your specific research context. Consult authoritative sources such as academic journals, books, or reputable online resources.

Step 3: Craft Concise Definitions

Based on your research, craft concise definitions for each key term. Aim for clarity, precision, and relevance. Define the term in a manner that reflects its significance within your research and ensures reader comprehension.

Step 4: Use Your Own Words

Paraphrase the definitions in your own words to avoid plagiarism and maintain academic integrity. While you can draw inspiration from existing definitions, rephrase them to reflect your understanding and writing style. Avoid directly copying from sources.

Step 5: Provide Examples Or Explanations

Consider providing examples, explanations, or context for the defined terms to enhance reader understanding. This can help illustrate how the term is applied within your research or clarify its practical implications.

Step 6: Order And Format

Decide on the order in which you present the definitions. You can follow alphabetical order or arrange them based on their importance or relevance to your research. Use consistent formatting, such as bold or italics, to distinguish the defined terms from the rest of the text.

Step 7: Revise And Refine

Review the definitions for clarity, coherence, and accuracy. Ensure that they align with your research objectives and are tailored to your specific study. Seek feedback from peers, mentors, or experts in your field to further refine and improve the definitions.

Step 8: Include Proper Citations

If you have drawn ideas or information from external sources, remember to provide proper citations for those sources. This demonstrates academic integrity and acknowledges the original authors.

Step 9: Incorporate The Section Into Your Paper

Integrate the definition of terms section into your research paper, typically as an early section following the introduction. Make sure it flows smoothly with the rest of the paper and provides a solid foundation for understanding the subsequent content.

By following these steps, you can create a well-crafted and informative definition of terms section that enhances the clarity and comprehension of your research paper.

In conclusion, the definition of terms in a research paper plays a critical role by providing clarity, establishing a common understanding, and enhancing communication among readers. The definition of terms section is an essential component that contributes to the overall quality, rigor, and effectiveness of a research paper.

About Sowjanya Pedada

Sowjanya is a passionate writer and an avid reader. She holds an MBA in Agribusiness Management and now works as a content writer. She loves to play with words and hopes to make a difference in the world through her writing. Apart from writing, she enjoys reading fiction and doing craftwork. She also loves to travel, explore different cuisines, and spend time with her family and friends.

Getting Started: Library Research Strategy

If you have chosen a topic, you may break the topic down into a few main concepts and then list and/or define key terms related to that concept. If you have performed some background searching, you can include some of the words that were used to describe your topic.

For example, if your topic deals with the relationship between teenage smoking and advertising in the United States, the following key terms may apply:

smoking -- tobacco -- nicotine -- cigarettes

teenage -- adolescents -- children -- teens -- youth

advertising -- marketing -- media -- commercials -- TV -- billboards

When listing the key terms or concepts of your topic, be sure to consider synonyms for these terms as well. Since research is an iterative process, you will also find additional key terms to utilize through the resources you encounter throughout your research process.
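One way to put these synonym lists to work is to combine them into a single search string. The sketch below is only an illustration: it assumes the database you are searching accepts Boolean `AND` and `OR` operators and parentheses, which is common but not universal, and the function name `build_query` is hypothetical. Synonyms for one concept are joined with `OR`, and the concepts themselves are joined with `AND`.

```python
def build_query(concept_groups):
    """Join synonyms with OR inside each concept and AND across concepts."""
    clauses = []
    for synonyms in concept_groups:
        # Quote multi-word phrases so they are searched as a unit.
        terms = [f'"{term}"' if " " in term else term for term in synonyms]
        clauses.append("(" + " OR ".join(terms) + ")")
    return " AND ".join(clauses)


# Key terms taken from the teenage smoking and advertising example above.
concepts = [
    ["smoking", "tobacco", "nicotine", "cigarettes"],
    ["teenage", "adolescents", "children", "teens", "youth"],
    ["advertising", "marketing", "media", "commercials", "TV", "billboards"],
]

query = build_query(concepts)
print(query)
```

As new key terms turn up during your research, add them to the relevant list and rebuild the query. Check your database's help pages for its exact search syntax before relying on this format.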

- Last Updated: Mar 11, 2024 4:57 PM

- URL: https://libguides.chapman.edu/strategy

Organizing Your Social Sciences Research Paper

Glossary of Research Terms

This glossary is intended to assist you in understanding commonly used terms and concepts when reading, interpreting, and evaluating scholarly research. Also included are common words and phrases defined within the context of how they apply to research in the social and behavioral sciences.

- Acculturation -- refers to the process of adapting to another culture, particularly in reference to blending in with the majority population [e.g., an immigrant adopting American customs]. However, acculturation also implies that both cultures add something to one another, but still remain distinct groups unto themselves.

- Accuracy -- a term used in survey research to refer to the match between the target population and the sample.

- Affective Measures -- procedures or devices used to obtain quantified descriptions of an individual's feelings, emotional states, or dispositions.

- Aggregate -- a total created from smaller units. For instance, the population of a county is an aggregate of the populations of the cities, rural areas, etc. that comprise the county. As a verb, it means to total data from smaller units into a larger unit.

- Anonymity -- a research condition in which no one, including the researcher, knows the identities of research participants.

- Baseline -- a control measurement carried out before an experimental treatment.

- Behaviorism -- school of psychological thought concerned with the observable, tangible, objective facts of behavior, rather than with subjective phenomena such as thoughts, emotions, or impulses. Contemporary behaviorism also emphasizes the study of mental states such as feelings and fantasies to the extent that they can be directly observed and measured.

- Beliefs -- ideas, doctrines, tenets, etc. that are accepted as true on grounds which are not immediately susceptible to rigorous proof.

- Benchmarking -- systematically measuring and comparing the operations and outcomes of organizations, systems, processes, etc., against agreed upon "best-in-class" frames of reference.

- Bias -- a loss of balance and accuracy in the use of research methods. It can appear in research via the sampling frame, random sampling, or non-response. It can also occur at other stages in research, such as while interviewing, in the design of questions, or in the way data are analyzed and presented. Bias means that the research findings will not be representative of, or generalizable to, a wider population.

- Case Study -- the collection and presentation of detailed information about a particular participant or small group, frequently including data derived from the subjects themselves.

- Causal Hypothesis -- a statement hypothesizing that the independent variable affects the dependent variable in some way.

- Causal Relationship -- the relationship established that shows that an independent variable, and nothing else, causes a change in a dependent variable. It also establishes how much of a change is shown in the dependent variable.

- Causality -- the relation between cause and effect.

- Central Tendency -- any way of describing or characterizing typical, average, or common values in some distribution.

- Chi-square Analysis -- a common non-parametric statistical test which compares an expected proportion or ratio to an actual proportion or ratio.

- Claim -- a statement, similar to a hypothesis, which is made in response to the research question and that is affirmed with evidence based on research.

- Classification -- ordering of related phenomena into categories, groups, or systems according to characteristics or attributes.

- Cluster Analysis -- a method of statistical analysis where data that share a common trait are grouped together. The data is collected in a way that allows the data collector to group data according to certain characteristics.

- Cohort Analysis -- group by group analytic treatment of individuals having a statistical factor in common to each group. Group members share a particular characteristic [e.g., born in a given year] or a common experience [e.g., entering a college at a given time].

- Confidentiality -- a research condition in which no one except the researcher(s) knows the identities of the participants in a study. It refers to the treatment of information that a participant has disclosed to the researcher in a relationship of trust and with the expectation that it will not be revealed to others in ways that violate the original consent agreement, unless permission is granted by the participant.

- Confirmability Objectivity -- the findings of the study could be confirmed by another person conducting the same study.

- Construct -- refers to any of the following: something that exists theoretically but is not directly observable; a concept developed [constructed] for describing relations among phenomena or for other research purposes; or, a theoretical definition in which concepts are defined in terms of other concepts. For example, intelligence cannot be directly observed or measured; it is a construct.

- Construct Validity -- seeks an agreement between a theoretical concept and a specific measuring device, such as observation.

- Constructivism -- the idea that reality is socially constructed. It is the view that reality cannot be understood outside of the way humans interact and that knowledge is constructed, not discovered. Constructivists believe that learning is more active and self-directed than either behaviorism or cognitive theory would postulate.

- Content Analysis -- the systematic, objective, and quantitative description of the manifest or latent content of print or nonprint communications.

- Context Sensitivity -- awareness by a qualitative researcher of factors such as values and beliefs that influence cultural behaviors.

- Control Group -- the group in an experimental design that receives either no treatment or a different treatment from the experimental group. This group can thus be compared to the experimental group.

- Controlled Experiment -- an experimental design with two or more randomly selected groups [an experimental group and control group] in which the researcher controls or introduces the independent variable and measures the dependent variable at least two times [pre- and post-test measurements].

- Correlation -- a common statistical analysis, usually abbreviated as r, that measures the degree of relationship between pairs of interval variables in a sample. The range of correlation is from -1.00 to zero to +1.00. Also, a non-cause and effect relationship between two variables.

- Covariate -- a product of the correlation of two related variables times their standard deviations. Used in true experiments to measure the difference of treatment between them.

- Credibility -- a researcher's ability to demonstrate that the object of a study is accurately identified and described based on the way in which the study was conducted.

- Critical Theory -- an evaluative approach to social science research, associated with Germany's neo-Marxist “Frankfurt School,” that aims to criticize as well as analyze society, opposing the political orthodoxy of modern communism. Its goal is to promote human emancipatory forces and to expose ideas and systems that impede them.

- Data -- factual information [as measurements or statistics] used as a basis for reasoning, discussion, or calculation.

- Data Mining -- the process of analyzing data from different perspectives and summarizing it into useful information, often to discover patterns and/or systematic relationships among variables.

- Data Quality -- this is the degree to which the collected data [results of measurement or observation] meet the standards of quality to be considered valid [trustworthy] and reliable [dependable].

- Deductive -- a form of reasoning in which conclusions are formulated about particulars from general or universal premises.

- Dependability -- being able to account for changes in the design of the study and the changing conditions surrounding what was studied.

- Dependent Variable -- a variable that varies due, at least in part, to the impact of the independent variable. In other words, its value “depends” on the value of the independent variable. For example, in the variables “gender” and “academic major,” academic major is the dependent variable, meaning that your major cannot determine whether you are male or female, but your gender might indirectly lead you to favor one major over another.

- Deviation -- the distance between the mean and a particular data point in a given distribution.

- Discourse Community -- a community of scholars and researchers in a given field who respond to and communicate to each other through published articles in the community's journals and presentations at conventions. All members of the discourse community adhere to certain conventions for the presentation of their theories and research.

- Discrete Variable -- a variable that is measured solely in whole units, such as gender and number of siblings.

- Distribution -- the range of values of a particular variable.

- Effect Size -- the amount of change in a dependent variable that can be attributed to manipulations of the independent variable. A large effect size exists when the value of the dependent variable is strongly influenced by the independent variable. It is the mean difference on a variable between experimental and control groups divided by the standard deviation on that variable of the pooled groups or of the control group alone.

- Emancipatory Research -- research conducted on and with people from marginalized groups or communities. It is led by a researcher or research team who is either an indigenous or external insider; is interpreted within intellectual frameworks of that group; and is conducted largely for the purpose of empowering members of that community and improving services for them. It also engages members of the community as co-constructors or validators of knowledge.

- Empirical Research -- the process of developing systematized knowledge gained from observations that are formulated to support insights and generalizations about the phenomena being researched.

- Epistemology -- concerns knowledge construction; asks what constitutes knowledge and how knowledge is validated.

- Ethnography -- method to study groups and/or cultures over a period of time. The goal of this type of research is to comprehend the particular group/culture through immersion into the culture or group. Research is completed through various methods but, since the researcher is immersed within the group for an extended period of time, more detailed information is usually collected during the research.

- Expectancy Effect -- any unconscious or conscious cues that convey to the participant in a study how the researcher wants them to respond. Expecting someone to behave in a particular way has been shown to promote the expected behavior. Expectancy effects can be minimized by using standardized interactions with subjects, automated data-gathering methods, and double blind protocols.

- External Validity -- the extent to which the results of a study are generalizable or transferable.

- Factor Analysis -- a statistical test that explores relationships among data. The test explores which variables in a data set are most related to each other. In a carefully constructed survey, for example, factor analysis can yield information on patterns of responses, not simply data on a single response. Larger tendencies may then be interpreted, indicating behavior trends rather than simply responses to specific questions.

- Field Studies -- academic or other investigative studies undertaken in a natural setting, rather than in laboratories, classrooms, or other structured environments.

- Focus Groups -- small, roundtable discussion groups charged with examining specific topics or problems, including possible options or solutions. Focus groups usually consist of 4-12 participants, guided by moderators to keep the discussion flowing and to collect and report the results.

- Framework -- the structure and support that may be used as both the launching point and the on-going guidelines for investigating a research problem.

- Generalizability -- the extent to which research findings and conclusions from a study conducted on specific groups or situations can be applied to the population at large.

- Grey Literature -- research produced by organizations outside of commercial and academic publishing that publish materials such as working papers, research reports, and briefing papers.

- Grounded Theory -- practice of developing other theories that emerge from observing a group. Theories are grounded in the group's observable experiences, but researchers add their own insight into why those experiences exist.

- Group Behavior -- behaviors of a group as a whole, as well as the behavior of an individual as influenced by his or her membership in a group.

- Hypothesis -- a tentative explanation based on theory to predict a causal relationship between variables.

- Independent Variable -- the conditions of an experiment that are systematically manipulated by the researcher. A variable that is not impacted by the dependent variable, and that itself impacts the dependent variable. In the earlier example of "gender" and "academic major," (see Dependent Variable) gender is the independent variable.

- Individualism -- a theory or policy having primary regard for the liberty, rights, or independent actions of individuals.

- Inductive -- a form of reasoning in which a generalized conclusion is formulated from particular instances.

- Inductive Analysis -- a form of analysis based on inductive reasoning; a researcher using inductive analysis starts with answers, but formulates questions throughout the research process.

- Insiderness -- a concept in qualitative research that refers to the degree to which a researcher has access to and an understanding of persons, places, or things within a group or community based on being a member of that group or community.

- Internal Consistency -- the extent to which all questions or items assess the same characteristic, skill, or quality.

- Internal Validity -- the rigor with which the study was conducted [e.g., the study's design, the care taken to conduct measurements, and decisions concerning what was and was not measured]. It is also the extent to which the designers of a study have taken into account alternative explanations for any causal relationships they explore. In studies that do not explore causal relationships, only the first of these definitions should be considered when assessing internal validity.

- Life History -- a record of an event/events in a respondent's life told [written down, but increasingly audio or video recorded] by the respondent from his/her own perspective in his/her own words. A life history is different from a "research story" in that it covers a longer time span, perhaps a complete life, or a significant period in a life.

- Margin of Error -- the permissible or acceptable deviation from the target or a specific value. The allowance for slight error, miscalculation, or changing circumstances in a study.

- Measurement -- process of obtaining a numerical description of the extent to which persons, organizations, or things possess specified characteristics.

- Meta-Analysis -- an analysis combining the results of several studies that address a set of related hypotheses.

- Methodology -- a theory or analysis of how research does and should proceed.

- Methods -- systematic approaches to the conduct of an operation or process. It includes steps of procedure, application of techniques, systems of reasoning or analysis, and the modes of inquiry employed by a discipline.

- Mixed-Methods -- a research approach that uses two or more methods from both the quantitative and qualitative research categories. It is also referred to as blended methods, combined methods, or methodological triangulation.

- Modeling -- the creation of a physical or computer analogy to understand a particular phenomenon. Modeling helps in estimating the relative magnitude of various factors involved in a phenomenon. A successful model can be shown to account for unexpected behavior that has been observed, to predict certain behaviors that can then be tested experimentally, and to demonstrate that a given theory cannot account for certain phenomena.

- Models -- representations of objects, principles, processes, or ideas often used for imitation or emulation.

- Naturalistic Observation -- observation of behaviors and events in natural settings without experimental manipulation or other forms of interference.

- Norm -- the norm in statistics is the average or usual performance. For example, students usually complete their high school graduation requirements when they are 18 years old. Even though some students graduate when they are younger or older, the norm is that any given student will graduate when he or she is 18 years old.

- Null Hypothesis -- the proposition, to be tested statistically, that the experimental intervention has "no effect," meaning that the treatment and control groups will not differ as a result of the intervention. Investigators usually hope that the data will demonstrate some effect from the intervention, thus allowing the investigator to reject the null hypothesis.

- Ontology -- a discipline of philosophy that explores the science of what is, the kinds and structures of objects, properties, events, processes, and relations in every area of reality.

- Panel Study -- a longitudinal study in which a group of individuals is interviewed at intervals over a period of time.

- Participant -- individuals whose physiological and/or behavioral characteristics and responses are the object of study in a research project.

- Peer-Review -- the process in which the author of a book, article, or other type of publication submits his or her work to experts in the field for critical evaluation, usually prior to publication. This is standard procedure in publishing scholarly research.

- Phenomenology -- a qualitative research approach concerned with understanding certain group behaviors from that group's point of view.

- Philosophy -- critical examination of the grounds for fundamental beliefs and analysis of the basic concepts, doctrines, or practices that express such beliefs.

- Phonology -- the study of the ways in which speech sounds form systems and patterns in language.

- Policy -- governing principles that serve as guidelines or rules for decision making and action in a given area.

- Policy Analysis -- systematic study of the nature, rationale, cost, impact, effectiveness, implications, etc., of existing or alternative policies, using the theories and methodologies of relevant social science disciplines.

- Population -- the target group under investigation. The population is the entire set under consideration. Samples are drawn from populations.

- Position Papers -- statements of official or organizational viewpoints, often recommending a particular course of action or response to a situation.

- Positivism -- a doctrine in the philosophy of science which argues that science can only deal with observable entities known directly to experience. The positivist aims to construct general laws, or theories, which express relationships between phenomena. Observation and experiment are used to show whether the phenomena fit the theory.

- Predictive Measurement -- use of tests, inventories, or other measures to determine or estimate future events, conditions, outcomes, or trends.

- Principal Investigator -- the scientist or scholar with primary responsibility for the design and conduct of a research project.

- Probability -- the chance that a phenomenon will occur randomly. As a statistical measure, it is shown as p [the "p" factor].

- Questionnaire -- structured sets of questions on specified subjects that are used to gather information, attitudes, or opinions.

- Random Sampling -- a process used in research to draw a sample of a population strictly by chance, yielding no discernible pattern beyond chance. Random sampling can be accomplished by first numbering the population, then selecting the sample according to a table of random numbers or using a random-number computer generator. The sample is said to be random because there is no regular or discernible pattern or order. Random sample selection is used under the assumption that sufficiently large samples assigned randomly will exhibit a distribution comparable to that of the population from which the sample is drawn. The random assignment of participants increases the probability that differences observed between participant groups are the result of the experimental intervention.

- Reliability -- the degree to which a measure yields consistent results. If the measuring instrument [e.g., survey] is reliable, then administering it to similar groups would yield similar results. Reliability is a prerequisite for validity. An unreliable indicator cannot produce trustworthy results.

- Representative Sample -- sample in which the participants closely match the characteristics of the population, and thus, all segments of the population are represented in the sample. A representative sample allows results to be generalized from the sample to the population.

- Rigor -- degree to which research methods are scrupulously and meticulously carried out in order to recognize important influences occurring in an experimental study.

- Sample -- the population researched in a particular study. Usually, attempts are made to select a "sample population" that is considered representative of groups of people to whom results will be generalized or transferred. In studies that use inferential statistics to analyze results or which are designed to be generalizable, sample size is critical: generally, the larger the number in the sample, the higher the likelihood of a representative distribution of the population.

- Sampling Error -- the degree to which the results from the sample deviate from those that would be obtained from the entire population, because of random error in the selection of respondents and the corresponding reduction in reliability.

- Saturation -- a situation in which data analysis begins to reveal repetition and redundancy and when new data tend to confirm existing findings rather than expand upon them.

- Semantics -- the relationship between symbols and meaning in a linguistic system. Also, the cuing system that connects what is written in the text to what is stored in the reader's prior knowledge.

- Social Theories -- theories about the structure, organization, and functioning of human societies.

- Sociolinguistics -- the study of language in society and, more specifically, the study of language varieties, their functions, and their speakers.

- Standard Deviation -- a measure of variation that indicates the typical distance between the scores of a distribution and the mean; it is determined by taking the square root of the average of the squared deviations in a given distribution. It can be used to indicate the proportion of data within certain ranges of scale values when the distribution conforms closely to the normal curve.

- Statistical Analysis -- application of statistical processes and theory to the compilation, presentation, discussion, and interpretation of numerical data.

- Statistical Bias -- characteristics of an experimental or sampling design, or the mathematical treatment of data, that systematically affects the results of a study so as to produce incorrect, unjustified, or inappropriate inferences or conclusions.

- Statistical Significance -- the probability that the difference between the outcomes of the control and experimental groups is great enough that it is unlikely to be due solely to chance. The probability that the null hypothesis can be rejected at a predetermined significance level [0.05 or 0.01].

- Statistical Tests -- researchers use statistical tests to make quantitative decisions about whether a study's data indicate a significant effect from the intervention and allow the researcher to reject the null hypothesis. That is, statistical tests show whether the differences between the outcomes of the control and experimental groups are great enough to be statistically significant. If differences are found to be statistically significant, it means that the probability [likelihood] that these differences occurred solely due to chance is relatively low. Most researchers agree that a significance value of .05 or less [i.e., if the intervention truly had no effect, differences at least this large would be expected by chance no more than 5% of the time] sufficiently determines significance.

- Subcultures -- ethnic, regional, economic, or social groups exhibiting characteristic patterns of behavior sufficient to distinguish them from the larger society to which they belong.

- Testing -- the act of gathering and processing information about individuals' ability, skill, understanding, or knowledge under controlled conditions.

- Theory -- a general explanation about a specific behavior or set of events that is based on known principles and serves to organize related events in a meaningful way. A theory is not as specific as a hypothesis.

- Treatment -- the stimulus given to a dependent variable.

- Trend Samples -- method of sampling different groups of people at different points in time from the same population.

- Triangulation -- a multi-method or pluralistic approach, using different methods in order to focus on the research topic from different viewpoints and to produce a multi-faceted set of data. Also used to check the validity of findings from any one method.

- Unit of Analysis -- the basic observable entity or phenomenon being analyzed by a study and for which data are collected in the form of variables.

- Validity -- the degree to which a study accurately reflects or assesses the specific concept that the researcher is attempting to measure. A method can be reliable, consistently measuring the same thing, but not valid.

- Variable -- any characteristic or trait that can vary from one person to another [race, gender, academic major] or for one person over time [age, political beliefs].

- Weighted Scores -- scores in which the components are modified by different multipliers to reflect their relative importance.

- White Paper -- an authoritative report that often states the position or philosophy about a social, political, or other subject, or a general explanation of an architecture, framework, or product technology written by a group of researchers. A white paper seeks to contain unbiased information and analysis regarding a business or policy problem that the researchers may be facing.
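Several of the statistical entries above (Random Sampling, Standard Deviation, Weighted Scores) describe simple calculations. As a rough illustration only, the Python sketch below follows the wording of those definitions. The function names and the data are hypothetical. The standard deviation is the population form, dividing by the number of scores as the phrase "average of the squared deviations" suggests, and dividing the weighted sum by the total weight is an added assumption that keeps the result on the original scale.

```python
import math
import random


def population_std_dev(scores):
    """Square root of the average of the squared deviations from the mean."""
    mean = sum(scores) / len(scores)
    squared_deviations = [(x - mean) ** 2 for x in scores]
    return math.sqrt(sum(squared_deviations) / len(scores))


def weighted_score(components, weights):
    """Multiply each component by its weight, then divide by the total weight.

    Dividing by the total weight is an assumption made for this sketch.
    """
    total_weight = sum(weights)
    return sum(c * w for c, w in zip(components, weights)) / total_weight


def simple_random_sample(population, n, seed=None):
    """Draw n members of the population strictly by chance, without replacement."""
    rng = random.Random(seed)  # a seed makes the draw repeatable for checking
    return rng.sample(population, n)


# Hypothetical data, for illustration only.
scores = [2, 4, 4, 4, 5, 5, 7, 9]
print(population_std_dev(scores))        # 2.0

# An exam counted three times as heavily as a quiz.
print(weighted_score([80, 90], [1, 3]))  # 87.5

# Number the population 1..100, then pick 10 members by chance.
numbered_population = list(range(1, 101))
print(simple_random_sample(numbered_population, 10, seed=1))
```

Many statistics packages report the sample standard deviation instead, which divides by one less than the number of scores, so results for small data sets may differ slightly from this sketch.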

Elliot, Mark, Fairweather, Ian, Olsen, Wendy Kay, and Pampaka, Maria. A Dictionary of Social Research Methods. Oxford, UK: Oxford University Press, 2016; Free Social Science Dictionary. Socialsciencedictionary.com [2008]; Glossary. Institutional Review Board. Colorado College; Glossary of Key Terms. Writing@CSU. Colorado State University; Glossary A-Z. Education.com; Glossary of Research Terms. Research Mindedness Virtual Learning Resource. Centre for Human Service Technology. University of Southampton; Miller, Robert L. and Brewer, John D. The A-Z of Social Research: A Dictionary of Key Social Science Research Concepts. London: SAGE, 2003; Jupp, Victor. The SAGE Dictionary of Social and Cultural Research Methods. London: SAGE, 2006.

- Last Updated: May 30, 2024 9:38 AM

- URL: https://libguides.usc.edu/writingguide

Academic Phrasebank

Defining terms.

In academic work, students are often expected to give definitions of key words and phrases in order to demonstrate to their tutors that they understand these terms clearly. More generally, however, academic writers define terms so that their readers understand exactly what is meant when certain key terms are used. When important words are not clearly understood, misinterpretation may result. In fact, many disagreements (academic, legal, diplomatic, personal) arise as a result of different interpretations of the same term. In academic writing, teachers and their students often have to explore these differing interpretations before moving on to study a topic.

Introductory phrases

- The term ‘X’ was first used by …

- The term ‘X’ can be traced back to …

- Previous studies mostly defined X as …

- The term ‘X’ was introduced by Smith in her …

- Historically, the term ‘X’ has been used to describe …

- It is necessary here to clarify exactly what is meant by …

- This shows a need to be explicit about exactly what is meant by the word ‘X’.

Simple three-part definitions

| A university is | an institution | where knowledge is produced and passed on to others |

| Social Economics may be defined as | the branch of economics | [which is] concerned with the measurement, causes, and consequences of social problems. |

| Research may be defined as | a systematic process | which consists of three elements or components: (1) a question, problem, or hypothesis, (2) data, and (3) analysis and interpretation of data. |

| Braille is | a system | of touch reading and writing for blind people in which raised dots on paper represent the letters of the alphabet. |

General meanings or application of meanings

- X can broadly be defined as …

- X can be loosely described as …

- X can be defined as … It encompasses …

- In the literature, the term tends to be used to refer to …

- In broad terms, X can be defined as any stimulus that is …

- Whereas X refers to the operations of …, Y refers to the …

- The broad use of the term ‘X’ is sometimes equated with …

- The term ‘disease’ refers to a biological event characterised by …

- Defined as …, X is now considered a worldwide problem and is associated with …

The term ‘X’ …

- refers to …

- encompasses A), B), and C).

- has come to be used to refer to …

- is generally understood to mean …

- has been used to refer to situations in which …

- carries certain connotations in some types of …

- is a relatively new name for a Y, commonly referred to as …

Indicating varying definitions

- The definition of X has evolved.

- There are multiple definitions of X.

- Several definitions of X have been proposed.

- In the field of X, various definitions of X are found.

- The term ‘X’ embodies a multitude of concepts which …

- This term has two overlapping, even slightly confusing meanings.

- Widely varying definitions of X have emerged (Smith and Jones, 1999).

- Despite its common usage, X is used in different disciplines to mean different things.

- Since the definition of X varies among researchers, it is important to clarify how the term is …

The meaning of this term …

- has evolved.

- has varied over time.

- has been extended to refer to …

- has been broadened in recent years.

- has not been consistent throughout …

- has changed somewhat from its original definition …

Indicating difficulties in defining a term

- X is a contested term.

- X is a rather nebulous term …

- X is challenging to define because …

- A precise definition of X has proved elusive.

- A generally accepted definition of X is lacking.

- Unfortunately, X remains a poorly defined term.

- There is no agreed definition on what constitutes …

- There is little consensus about what X actually means.

- There is a degree of uncertainty around the terminology in …

- These terms are often used interchangeably and without precision.

- Numerous terms are used to describe X, the most common of which are …

- The definition of X varies in the literature and there is terminological confusion.

- Smith (2001) identified four abilities that might be subsumed under the term ‘X’: a) …

- ‘X’ is a term frequently used in the literature, but to date there is no consensus about …

- X is a commonly-used notion in psychology and yet it is a concept difficult to define precisely.

- Although differences of opinion still exist, there appears to be some agreement that X refers to …

The meaning of this term …

- has been disputed.

- has been debated ever since …

- has proved to be notoriously hard to define.

- has been an object of major disagreement in …

- has been a matter of ongoing discussion among …

Specifying terms that are used in an essay or thesis

- The term ‘X’ is used here to refer to …

- In the present study, X is defined as …

- The term ‘X’ will be used solely when referring to …

- In this essay, the term ‘X’ will be used in its broadest sense to refer to all …

- In this paper, the term that will be used to describe this phenomenon is ‘X’.

- In this dissertation, the terms ‘X’ and ‘Y’ are used interchangeably to mean …

- Throughout this thesis, the term ‘X’ is used to refer to informal systems as well as …

- While a variety of definitions of the term ‘X’ have been suggested, this paper will use the definition first suggested by Smith (1968) who saw it as …

Referring to people’s definitions: author prominent

- For Smith (2001), X means …

- Smith (2001) uses the term ‘X’ to refer to …

- Smith (1954) was apparently the first to use the term …

- In 1987, psychologist John Smith popularized the term ‘X’ to describe …

- According to a definition provided by Smith (2001:23), X is ‘the maximally …

- This definition is close to those of Smith (2012) and Jones (2013) who define X as …

- Smith has shown that, as late as 1920, Jones was using the term ‘X’ to refer to particular …

- One of the first people to define nursing was Florence Nightingale (1860), who wrote: ‘… …’

- Chomsky writes that a grammar is a ‘device of some sort for producing the ….’ (1957, p.11).

- Aristotle defines the imagination as ‘the movement which results upon an actual sensation.’

- Smith et al. (2002) have provided a new definition of health: ‘health is a state of being with …

Referring to people’s definitions: author non-prominent

- X is defined by Smith (2003: 119) as ‘… …’

- The term ‘X’ is used by Smith (2001) to refer to …

- X is, for Smith (2012), the situation which occurs when …

- A further definition of X is given by Smith (1982) who describes …

- The term ‘X’ is used by Aristotle in four overlapping senses. First, it is the underlying …

- X is the degree to which an assessment process or device measures … (Smith et al., 1986).

Commenting on a definition

This definition …

- includes …

- allows for …

- highlights the …

- helps distinguish …

- takes into account …

- poses a problem for …

- will continue to evolve.

- can vary depending on …

- was agreed upon after …

- has been broadened to include …

The following definition is …

- intended to …

- modelled on …

- too simplistic:

- useful because …

- problematic as …

- inadequate since …

- in need of revision since …

- important for what it excludes.

- the most precise produced so far.

The University of Manchester Oxford Rd Manchester M13 9PL UK

Introduction to Research Methods

3 Understanding Key Research Concepts and Terms

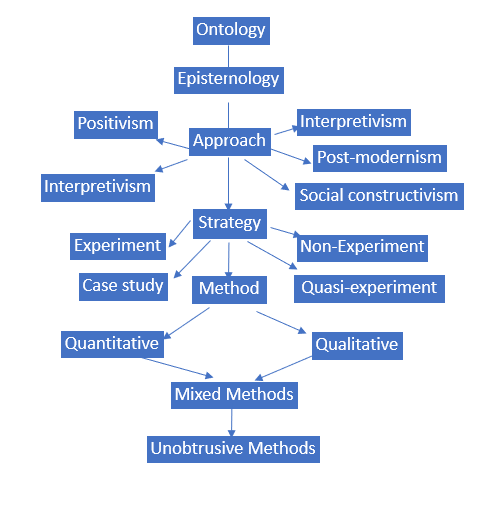

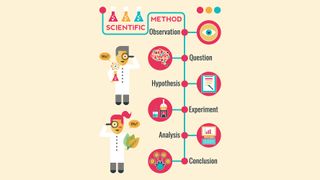

Many terms and concepts are associated with research methods, particularly as they relate to the research planning decisions you must make along the way. Throughout this textbook, you will be exposed to many of these terms and concepts. Figure 1.1 is a general chart that will help you contextualize many of these terms and also understand the research process. As you can see, Figure 1.1 begins with two key concepts, ontology and epistemology, advances through other concepts, and concludes with three research methodological approaches: qualitative, quantitative, and mixed methods.

However, it is important to note that research does not end with making decisions about the type of methods you will use. In fact, we could argue that the work is just beginning at this point. As such, Figure 1.1 does not represent an all-encompassing list of concepts and terms related to research methods. Keep in mind that each strategy has its own data collection and analysis approaches, which are associated with the various methodological approaches you choose. Figure 1.1 is meant to provide a general overview of the lay of the research land. You may want to keep this figure handy as you read through the various chapters.

Ontology & epistemology

Thinking about what you know and how you know what you know involves questions of ontology and epistemology. Perhaps you have heard these concepts before in a philosophy class? These concepts are relevant to the work of sociologists as well. As sociologists (those who undertake socially-focused research), we want to understand some aspect of our social world. Usually, we are not starting with zero knowledge. In fact, we usually start with some understanding of: 1) what is; 2) what can be known about what is; and 3) what the best mechanism happens to be for learning about what is (Schmitz, 2012). In the following sections, we will define these terms and provide an example of ontology and epistemology.

Ontology is a term of Greek origin that means the study, theory, or science of being. Ontology is concerned with what is, or the nature of reality (Saunders, Lewis, & Thornhill, 2009). It can involve some very large and difficult-to-answer questions, such as: What is the purpose of life? What, if anything, exists beyond our universe? Ontology also asks: What categories does a thing belong to? Is there such a thing as objective reality? What does the verb “to be” mean?

Ontology comprises two aspects: objectivism and subjectivism. Objectivism means that social entities exist externally to the social actors who are concerned with their existence. Subjectivism means that social phenomena are created from the perceptions and actions of the social actors who are concerned with their existence (Saunders et al., 2009). Figure 1.2 provides an example of a similar research project to be undertaken by two different students. While the projects being proposed by the students are similar, they each have different research questions. Read the scenario and then answer the questions that follow.

Subjectivist and objectivist approaches (adapted from Saunders et al., 2009)

Ana is an Emergency & Security Management Studies (ESMS) student at a local college. She is just beginning her capstone research project and she plans to do research at the City of Vancouver. Her research question is as follows: What is the role of City of Vancouver managers, working in the emergency management department, in enabling positive community relationships? She will be collecting data related to the roles and duties of managers in enabling positive community relationships.

Robert is also an ESMS student at the same college. He too will be undertaking his research at the City of Vancouver. His research question is as follows: What is the effect of the City of Vancouver’s corporate culture in enabling managers, working in the emergency management department, to develop a positive relationship with the local community? He will be collecting data related to perceptions of corporate culture and its effect on enabling positive community-emergency management department relationships.

Before the students begin collecting data, they learn that six months ago, the long-time emergency department manager and assistant manager both retired. They have been replaced by two senior staff managers who have Bachelor’s degrees in Emergency Services Management. These new managers are considered more up-to-date and knowledgeable on emergency services management, given their specialized academic training and practical on-the-job work experience in this department. The new managers have, essentially, the same job duties and operate under the same procedures as the managers they replaced. When Ana and Robert approach the managers to ask them to participate in their separate studies, the new managers state that they are new on the job and probably cannot answer the research questions, and they decline to participate. Ana and Robert are worried that they will need to start all over again with a new research project. They return to their supervisors to get their opinions on what they should do.

Before reading about their supervisors’ responses, answer the following questions:

1. Is Ana’s research question indicative of an objectivist or a subjectivist approach?

2. Is Robert’s research question indicative of an objectivist or a subjectivist approach?

3. Given your answer in question 1, which managers could Ana interview (new, old, or both) for her research study? Why?

4. Given your answer in question 2, which managers could Robert interview (new, old, or both) for his research study? Why?

Ana’s supervisor tells her that her research question is set up for an objectivist approach. Her supervisor tells her that in her study the social entity (the City) exists in reality external to the social actors (the managers). In other words, there is a formal management structure at the City that has largely remained unchanged since the old managers left and the new ones started. The procedures remain the same regardless of who occupies those positions. As such, Ana, using an objectivist approach, could state that the new managers have job descriptions which describe their duties and that they are a part of a formal structure with a hierarchy of people reporting to them and to whom they report. She could further state that this hierarchy, while unique to this organization, also resembles hierarchies found in other similar organizations. As such, she can argue that the new managers will be able to speak about the role they play in enabling positive community relationships. Their answers are likely to be no different from those of the old managers, because the management structure and the procedures remain the same. Therefore, she can go back to the new managers and ask them to participate in her research study.

Robert’s supervisor tells him that his research question is set up for a subjectivist approach because in his study the social phenomenon (the effect of corporate culture on the relationship with the community) is created from the perceptions and consequent actions of the social actors (the managers). In other words, there is a continual process of social interaction that is influenced by the corporate culture at the City, and it is these interactions that influence perceptions of the relationship with the community. The relationship is in a constant state of revision. As such, Robert, using a subjectivist approach, could state that the new managers may have had few interactions with the community members to date and therefore may not be fully cognizant of how the corporate culture affects the department’s relationship with the community. While it will be important to get the new managers’ perceptions, he will also need to speak with the previous managers to get their perceptions from the time they were employed in their positions. This is because the community-department relationship is in a state of constant revision, which is influenced by the various managers’ perceptions of the corporate culture and its effect on their ability to form positive community relationships. Therefore, he can go back to the current managers and ask them to participate in his study, and also ask the department to contact the previous managers to see if they would be willing to participate.

As you can see from the previous examples, it is the research question of each study that served to guide the decision as to whether the researcher should take a subjective or an objective ontological approach. This decision, in turn, guided their approach to the research study, including whom they should interview in order to answer their respective research questions. We will be speaking a lot more about research questions in the upcoming chapters.

Epistemology

Epistemology has to do with knowledge. Rather than dealing with questions about what is, epistemology deals with questions of how we know what is. In sociology, there are many ways to uncover knowledge. We might interview people to understand public opinion about some topic, or perhaps we’ll observe them in their natural environment. We could avoid face-to-face interaction altogether by mailing people surveys for them to complete on their own or by reading what people have to say about their opinions in newspaper editorials. These methods are all ways that sociologists gain knowledge. Each method of data collection comes with its own set of epistemological assumptions about how to find things out (Schmitz, 2012). There are two main subsections of epistemology: positivist and interpretivist philosophies. We will examine these philosophies or paradigms in the following sections.

Long Descriptions

Figure 1.1 long description: The research process.

- Interpretivism

- Post-modernism

- Social constructivism

- Non-experiment

- Quasi-experiment

- Quantitative

- Qualitative

- Mixed methods

- Unobtrusive methods

An Introduction to Research Methods in Sociology Copyright © 2019 by Valerie A. Sheppard is licensed under a Creative Commons Attribution-NonCommercial-ShareAlike 4.0 International License, except where otherwise noted.

What Is a Glossary? | Definition, Templates, & Examples

Published on May 24, 2022 by Tegan George. Revised on July 18, 2023.

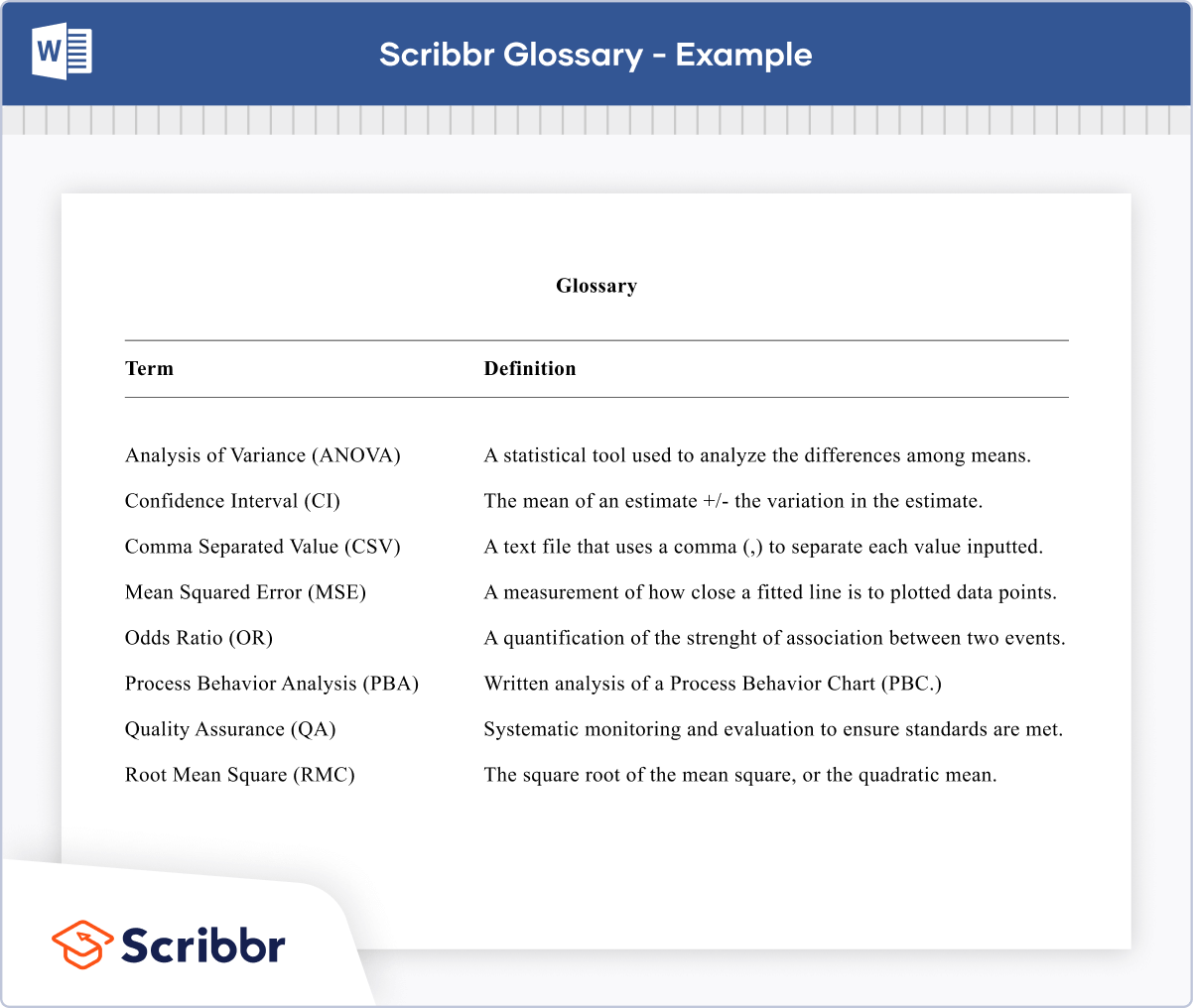

A glossary is a collection of words pertaining to a specific topic. In your thesis or dissertation, it’s a list of all terms you used that may not immediately be obvious to your reader.

Your glossary only needs to include terms that your reader may not be familiar with, and it’s intended to enhance their understanding of your work. Glossaries are not mandatory, but if you use a lot of technical or field-specific terms, it may improve readability to add one.

If you do choose to include a glossary, it should go at the beginning of your document, just after the table of contents and (if applicable) list of tables and figures or list of abbreviations. It’s helpful to place your glossary at the beginning, so your readers can familiarize themselves with key terms relevant to your thesis or dissertation topic prior to reading your work. Remember that glossaries are always in alphabetical order.
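Alphabetical order is easy to break by hand as a glossary grows. As a minimal sketch, assuming the glossary is kept as a simple mapping of terms to definitions (the entries and the `sorted_glossary` helper below are illustrative placeholders, not part of any template), a few lines of Python can put the entries in case-insensitive alphabetical order:

```python
# Hypothetical glossary entries, used only to illustrate the ordering step.
glossary = {
    "ontology": "Questions about what is.",
    "Epistemology": "Questions about how we know what is.",
    "interpretivism": "Placeholder definition for illustration.",
}

def sorted_glossary(entries):
    """Return (term, definition) pairs in case-insensitive alphabetical order."""
    return sorted(entries.items(), key=lambda item: item[0].casefold())

for term, definition in sorted_glossary(glossary):
    print(f"{term}: {definition}")
```

Sorting on `casefold()` keeps capitalized and lowercase terms interleaved correctly, which a plain sort would not do.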


Glossaries and definitions often fall into the category of common knowledge, meaning that they don’t necessarily have to be cited.

However, it’s always better to be safe than sorry when it comes to citing your sources, in order to avoid accidental plagiarism.

If you’d prefer to cite just in case, you can follow guidance for citing dictionary entries in MLA or APA Style for citations in your glossary. Remember that direct quotes should always be accompanied by a citation.

In addition to the glossary, you can also include a list of tables and figures and a list of abbreviations in your thesis or dissertation if you choose.

Include your lists in the following order:

- Table of contents

- List of figures and tables

- List of abbreviations

- Glossary


A glossary differs from both a dictionary and an index. A dictionary is a more general collection of words, while an index is a list of the contents of your work organized by page number.

Your educational institution may require a glossary, so be sure to check its specific guidelines.


George, T. (2023, July 18). What Is a Glossary? | Definition, Templates, & Examples. Scribbr. Retrieved June 10, 2024, from https://www.scribbr.com/dissertation/glossary-of-a-dissertation/


Research Tips and Tricks


Creating Key Terms

What do you search for once you've settled on your topic and research question, or at least made a start? Take the most important words in your research statement or question and use them as key terms. Use those key terms in conjunction with each other (see the section on "Combining Search Terms" for advice about how to do so). Also, use synonyms of your key terms.

Example Key Terms

Research question:

- What effect would paying college athletes have on collegiate sports?

Key terms:

- college athletes

- college sports
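To illustrate using key terms "in conjunction with each other" along with their synonyms, here is a rough sketch that builds a search string. It assumes the database accepts the common `AND`/`OR` operators and quoted phrases; syntax varies between databases, so check the one you are using. The `build_query` helper and the synonym "student athletes" are assumptions made for illustration.

```python
def build_query(term_groups):
    """Join synonyms with OR inside parentheses, then join the groups with AND."""
    clauses = []
    for synonyms in term_groups:
        quoted = [f'"{term}"' for term in synonyms]
        clauses.append("(" + " OR ".join(quoted) + ")")
    return " AND ".join(clauses)

term_groups = [
    ["college athletes", "student athletes"],  # second term is an assumed synonym
    ["college sports", "collegiate sports"],   # "collegiate sports" comes from the question
]
print(build_query(term_groups))
```

Each inner list holds one key term plus its synonyms, so adding a synonym widens the search and adding a new list narrows it.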


- Last Updated: Jan 26, 2024 10:10 AM

- URL: https://guides.library.unk.edu/research-tips

- University of Nebraska Kearney


Research Methods Key Term Glossary

Last updated 22 Mar 2021


This key term glossary provides brief definitions for the core terms and concepts covered in Research Methods for A Level Psychology.


Aim

The researcher’s area of interest – what they are looking at (e.g. to investigate helping behaviour).

Bar chart

A graph that shows the data in the form of categories (e.g. behaviours observed) that the researcher wishes to compare.

Behavioural categories

Key behaviours, or collections of behaviour, that the researcher conducting the observation will pay attention to and record

Case study

In-depth investigation of a single person, group or event, where data are gathered from a variety of sources and by using several different methods (e.g. observations & interviews).

Closed questions

Questions where there are fixed choices of responses e.g. yes/no. They generate quantitative data

Co-variables

The variables investigated in a correlation

Concurrent validity

Comparing a new test with another test of the same thing to see if they produce similar results. If they do then the new test has concurrent validity

Confidentiality

Unless agreed beforehand, participants have the right to expect that all data collected during a research study will remain confidential and anonymous.

Confounding variable

An extraneous variable that varies systematically with the IV so we cannot be sure of the true source of the change to the DV

Content analysis

Technique used to analyse qualitative data which involves coding the written data into categories – converting qualitative data into quantitative data.
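As a rough sketch of the coding step, the snippet below tallies written responses into categories. The coding scheme, the keywords and the sample responses are all hypothetical; in practice researchers usually code by reading and judging each item rather than by keyword matching, so treat this only as an illustration of turning qualitative data into counts.

```python
# Hypothetical coding scheme: category -> keywords that signal it.
CODING_SCHEME = {
    "helping": ["help", "assist", "support"],
    "aggression": ["shout", "hit", "push"],
}

def code_responses(responses, scheme):
    """Count how many responses fall into each category.

    A response counts once per category if it contains any of that
    category's keywords (simple substring match, case-insensitive).
    """
    counts = {category: 0 for category in scheme}
    for text in responses:
        lowered = text.lower()
        for category, keywords in scheme.items():
            if any(word in lowered for word in keywords):
                counts[category] += 1
    return counts

responses = [
    "She stopped to help the stranger.",
    "He began to shout and push.",
    "They offered support.",
]
print(code_responses(responses, CODING_SCHEME))
```

Substring matching is crude (for example, "hit" would also match "white"), which is one reason real coding schemes rely on trained coders.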

Control group

A group that is treated normally and gives us a measure of how people behave when they are not exposed to the experimental treatment (e.g. allowed to sleep normally).

Controlled observation

An observation study where the researchers control some variables - often takes place in laboratory setting

Correlational analysis

A mathematical technique where the researcher looks to see whether scores for two co-variables are related
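One common way to quantify such a relationship is a correlation coefficient. The sketch below computes Pearson's r from first principles; the choice of Pearson's r (rather than, say, Spearman's rho) and the two data lists are assumptions made for illustration.

```python
from math import sqrt

def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length lists of numbers."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two lists of equal length with at least 2 values")
    mean_x = sum(x) / len(x)
    mean_y = sum(y) / len(y)
    # Sum of cross-products of deviations, and sums of squared deviations.
    cross = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    ss_x = sum((a - mean_x) ** 2 for a in x)
    ss_y = sum((b - mean_y) ** 2 for b in y)
    if ss_x == 0 or ss_y == 0:
        raise ValueError("correlation is undefined when a variable does not vary")
    return cross / sqrt(ss_x * ss_y)

# Illustrative scores for two co-variables.
hours_revised = [1, 2, 3, 4, 5]
test_scores = [2, 4, 5, 4, 5]
print(round(pearson_r(hours_revised, test_scores), 2))
```

A value near +1 or -1 indicates a strong positive or negative relationship, and a value near 0 indicates little linear relationship.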

Counterbalancing

A way of trying to control for order effects in a repeated measures design, e.g. half the participants do condition A followed by B and the other half do B followed by A
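The A-then-B / B-then-A split can be sketched as follows. The `counterbalance` helper, the participant labels and the random assignment to each half are assumptions for illustration; the definition above only requires that half the participants take each order.

```python
import random

def counterbalance(participants, seed=None):
    """Assign half the participants the order A then B, the rest B then A."""
    rng = random.Random(seed)
    shuffled = list(participants)
    rng.shuffle(shuffled)  # randomise who ends up in which half
    half = len(shuffled) // 2
    orders = {}
    for person in shuffled[:half]:
        orders[person] = ("A", "B")
    for person in shuffled[half:]:
        orders[person] = ("B", "A")  # gets the extra person if the count is odd
    return orders

participants = [f"P{i}" for i in range(1, 9)]  # hypothetical participant labels
for person, order in sorted(counterbalance(participants, seed=1).items()):
    print(person, "->", " then ".join(order))
```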

Covert observation

Also known as an undisclosed observation as the participants do not know their behaviour is being observed

Critical value

The value that a test statistic is compared against to decide whether a result is significant, i.e. whether the null hypothesis can be rejected.

Debriefing

After completing the research, the true aim is revealed to the participant. The aim of debriefing is to return the person to the state they were in before they took part.

Deception

Involves misleading participants about the purpose of a study.

Demand characteristics

Occur when participants try to make sense of the research situation they are in and try to guess the purpose of the research or try to present themselves in a good way.

Dependent variable

The variable that is measured to tell you the outcome.

Descriptive statistics

Analysis of data that helps describe, show or summarize data in a meaningful way

Directional hypothesis

A one-tailed hypothesis that states the direction of the difference or relationship (e.g. boys are more helpful than girls).

Dispersion measure

A dispersion measure shows how a set of data is spread out; examples are the range and the standard deviation
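A short sketch of both measures, using Python's standard `statistics` module. The scores are illustrative, and the use of the sample standard deviation (`statistics.stdev`, which divides by n - 1) rather than the population version (`statistics.pstdev`) is an assumption; use whichever your course specifies.

```python
import statistics

def dispersion(scores):
    """Return (range, sample standard deviation) for a list of numbers."""
    data_range = max(scores) - min(scores)
    sd = statistics.stdev(scores)  # sample SD: divides by n - 1
    return data_range, sd

scores = [4, 8, 6, 5, 7]  # illustrative data
data_range, sd = dispersion(scores)
print(f"range = {data_range}, standard deviation = {sd:.2f}")
```

The range uses only the two extreme scores, whereas the standard deviation takes every score's distance from the mean into account.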

Double blind control

Participants are not told the true purpose of the research and the experimenter is also blind to at least some aspects of the research design.

Ecological validity

The extent to which the findings of a research study can be generalised to real-life settings

Ethical guidelines

These are provided by the BPS - they are the ‘rules’ by which all psychologists should operate, including those carrying out research.

Ethical issues

There are 3 main ethical issues that occur in psychological research – deception, lack of informed consent and lack of protection of participants.

Evaluation apprehension

Participants’ behaviour is distorted as they fear being judged by observers

Event sampling

A target behaviour is identified and the observer records it every time it occurs

Experimental group

The group that received the experimental treatment (e.g. sleep deprivation)

External validity

Whether it is possible to generalise the results beyond the experimental setting.

Extraneous variable

Variables that, if not controlled, may affect the DV and give a false impression that an IV has produced changes when it hasn’t.

Face validity

A simple way of assessing whether a test measures what it claims to measure, based on face value, e.g. does an IQ test look like it tests intelligence?

Field experiment

An experiment that takes place in a natural setting where the experimenter manipulates the IV and measures the DV

Histogram

A graph that is used for continuous data (e.g. test scores). There should be no space between the bars, because the data is continuous.

Hypothesis

This is a formal statement or prediction of what the researcher expects to find. It needs to be testable.

Independent groups design

An experimental design where each participant takes part in only one condition of the IV

Independent variable

The variable that the experimenter manipulates (changes).

Inferential statistics

Inferential statistics are ways of analyzing data using statistical tests that allow the researcher to make conclusions about whether a hypothesis was supported by the results.

Informed consent

Psychologists should ensure that all participants are helped to understand fully all aspects of the research before they agree (give consent) to take part

Inter-observer reliability

The extent to which two or more observers are observing and recording behaviour in the same way
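One simple way to put a number on this is the proportion of observations on which two observers recorded the same behaviour. This is only one possible index (correlating the observers' tallies is another), and the `percent_agreement` helper and the records below are hypothetical.

```python
def percent_agreement(observer_a, observer_b):
    """Proportion of observations on which two observers recorded the same code."""
    if len(observer_a) != len(observer_b) or not observer_a:
        raise ValueError("both observers need the same, non-zero number of records")
    matches = sum(1 for a, b in zip(observer_a, observer_b) if a == b)
    return matches / len(observer_a)

# Hypothetical records: the behaviour each observer coded in five time intervals.
observer_a = ["play", "play", "fight", "rest", "play"]
observer_b = ["play", "rest", "fight", "rest", "play"]
print(f"agreement = {percent_agreement(observer_a, observer_b):.0%}")
```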

Internal validity

In relation to experiments, whether the results were due to the manipulation of the IV rather than other factors such as extraneous variables or demand characteristics.

Interval level data

Data measured in fixed units with equal distance between points on the scale

Investigator effects

These result from the effects of a researcher’s behaviour and characteristics on an investigation.

Laboratory experiment

An experiment that takes place in a controlled environment where the experimenter manipulates the IV and measures the DV

Matched pairs design

An experimental design where pairs of participants are matched on important characteristics and one member is allocated to each condition of the IV
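A minimal sketch of the matching and allocation steps, assuming participants are matched on a single numeric characteristic by ranking them and pairing neighbours. The `matched_pairs` helper, the IQ scores and the random allocation within each pair are illustrative assumptions.

```python
import random

def matched_pairs(participants, key, seed=None):
    """Pair participants with similar scores, then split each pair across conditions."""
    rng = random.Random(seed)
    ranked = sorted(participants, key=key)  # neighbours have the closest scores
    allocation = {"condition_1": [], "condition_2": []}
    # Step through the ranked list two at a time; an odd participant out is left unused.
    for i in range(0, len(ranked) - 1, 2):
        pair = [ranked[i], ranked[i + 1]]
        rng.shuffle(pair)  # random allocation within the pair
        allocation["condition_1"].append(pair[0])
        allocation["condition_2"].append(pair[1])
    return allocation

# Hypothetical (participant, IQ score) records.
people = [("P1", 100), ("P2", 121), ("P3", 102), ("P4", 119)]
groups = matched_pairs(people, key=lambda person: person[1], seed=7)
print(groups)
```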