
The Promises and Challenges of Artificial Intelligence for Teachers: a Systematic Review of Research

- Original Paper

- Open access

- Published: 25 March 2022

- Volume 66, pages 616–630 (2022)


- Ismail Celik ORCID: orcid.org/0000-0002-5027-8284 1 ,

- Muhterem Dindar 2 ,

- Hanni Muukkonen 1 &

- Sanna Järvelä 2


This study provides an overview of research on teachers’ use of artificial intelligence (AI) applications and machine learning methods to analyze teachers’ data. Our analysis showed that AI offers teachers several opportunities for improved planning (e.g., by defining students’ needs and familiarizing teachers with such needs), implementation (e.g., through immediate feedback and teacher intervention), and assessment (e.g., through automated essay scoring) of their teaching. We also found that teachers have various roles in the development of AI technology. These roles include acting as models for training AI algorithms and participating in AI development by checking the accuracy of AI automated assessment systems. Our findings further underlined several challenges in AI implementation in teaching practice, which provide guidelines for developing the field.


Introduction

Artificial intelligence (AI) has been penetrating our everyday lives in various ways such as through web search engines, mobile apps, and healthcare systems (Sánchez-Prieto et al., 2020). The swift advancement of AI technologies also has important implications for learning and teaching. In fact, AI-supported instruction is expected to transform education (Zawacki-Richter et al., 2019). Thus, considerable investments have been made to integrate AI into teaching and learning (Cope et al., 2020). A significant challenge in the effective integration of AI into teaching and learning, however, is the profit orientation of most current AI applications in education. AI developers know little about learning sciences and lack pedagogical knowledge for the effective implementation of AI in teaching (Luckin & Cukurova, 2019). Moreover, AI developers often fail to consider the expectations of AI end-users in education, that is, of teachers (Cukurova & Luckin, 2018; Luckin & Cukurova, 2019). Teachers are considered among the most crucial stakeholders in AI-based teaching (Seufert et al., 2020), so their views, experiences, and expectations need to be considered for the successful adoption of AI in schools (Holmes et al., 2019). Specifically, to make AI pedagogically relevant, the advantages that it offers teachers and the challenges that teachers face in AI-based teaching need to be understood better. However, little attention has been paid to AI-based education from the perspective of teachers. Moreover, teachers’ skills in the pedagogical use of AI and the roles of teachers in the development of AI have been largely overlooked in the literature (Langran et al., 2020; Seufert et al., 2020). To address these research gaps, this study explores the promises and challenges of AI in teaching practice that have surfaced in research.
Since the field of AI-based instruction is still developing, this study can contribute to the development of comprehensive AI-based instruction systems that allow teachers to participate in the design process.

Educational Use of Artificial Intelligence

There have been several waves of emerging educational technologies over the past few decades, and now, there is artificial intelligence (AI; Bonk & Wiley, 2020). The term artificial intelligence was first mentioned in 1956 by John McCarthy (Russel & Norvig, 2010). Baker and Smith (2019) pointed out that AI does not refer to a single technology but is defined as “computers [that] perform cognitive tasks, usually associated with human minds, particularly learning and problem-solving” (p. 10). AI is a general term that refers to diverse analytical methods. These methods can be classified as machine learning, neural networks, and deep learning (Aggarwal, 2018). Machine learning is defined as the capacity of a computer algorithm to learn from data and make decisions without being explicitly programmed (Popenici & Kerr, 2017). Although numerous machine learning models exist, the two most used are supervised and unsupervised learning models (Alloghani et al., 2020). Supervised machine learning algorithms build a model from labeled sample data (or training data), while unsupervised machine learning algorithms build a model from untagged data (Alenezi & Faisal, 2020). In other words, the unsupervised model works on its own to explore patterns that were formerly undetected by humans.
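The distinction between the two model families can be illustrated with a minimal sketch. The toy data (hours studied, quiz score), the label names, and both helper algorithms (a nearest-centroid classifier and a two-cluster k-means) are assumptions chosen for illustration; none of them come from the reviewed studies.

```python
from statistics import mean

# Hypothetical toy data: (hours_studied, quiz_score) with a teacher-assigned label.
labeled = [((1.0, 40.0), "at_risk"), ((2.0, 45.0), "at_risk"),
           ((8.0, 85.0), "on_track"), ((9.0, 90.0), "on_track")]

def centroid(points):
    """Coordinate-wise mean of a list of points."""
    return tuple(mean(axis) for axis in zip(*points))

def dist2(a, b):
    """Squared Euclidean distance between two points."""
    return sum((x - y) ** 2 for x, y in zip(a, b))

# Supervised: the labels in the training data define the classes.
def fit_nearest_centroid(samples):
    by_label = {}
    for features, label in samples:
        by_label.setdefault(label, []).append(features)
    return {label: centroid(pts) for label, pts in by_label.items()}

def predict(model, features):
    return min(model, key=lambda label: dist2(model[label], features))

# Unsupervised: the same points without labels, grouped by similarity only.
def two_means(points, iterations=10):
    centers = [min(points), max(points)]  # deterministic starting centers
    groups = [[], []]
    for _ in range(iterations):
        groups = [[], []]
        for p in points:
            idx = 0 if dist2(p, centers[0]) <= dist2(p, centers[1]) else 1
            groups[idx].append(p)
        centers = [centroid(g) if g else c for g, c in zip(groups, centers)]
    return groups

model = fit_nearest_centroid(labeled)
print(predict(model, (7.5, 80.0)))
clusters = two_means([features for features, _ in labeled])
print(clusters)
```

The supervised model can name its prediction because the labels were supplied, whereas the unsupervised routine only returns two unnamed groups that a person would still need to interpret.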

AI is used in education in different ways. For instance, AI is integrated into several instructional technologies such as chatbots (Clark, 2020), intelligent tutoring, and automated grading systems (Heffernan & Heffernan, 2014). These AI-based systems offer several opportunities to all stakeholders throughout the learning and instructional process (Chen et al., 2020). Previous research conducted on the educational use of AI presented AI’s support for student collaboration and personalization of learning experiences (Luckin et al., 2016), scheduling of learning activities and adaptive feedback on learning processes (Koedinger et al., 2012), reducing teachers’ workload in collaborative knowledge construction (Roll & Wylie, 2016), predicting the probability of learners dropping out of school or being admitted into school (Popenici & Kerr, 2017), profiling students’ backgrounds (Cohen et al., 2017), monitoring student progress (Gaudioso et al., 2012; Swiecki et al., 2019), and summative assessment such as automated essay scoring (Okada et al., 2019; Vij et al., 2020; Yuan et al., 2020). Despite these opportunities, the educational use of AI lags behind expectations, unlike in other sectors (e.g., finance and health). To achieve successful AI implementation in education, various stakeholders, specifically, teachers, should participate in AI creation, development, and integration (Langran et al., 2020; Qin et al., 2020).

The Roles of Teachers in AI-based Education

The evolution of education towards digital education does not imply that people will need fewer teachers in the future (Dillenbourg, 2016). Instead of speculating about whether AI will replace teachers, understanding the advantages that AI offers teachers and how these advantages can change teachers’ roles in the classroom is more reasonable (Hrastinski et al., 2019). Salomon (1996) demonstrated this during the early stages of development of educational technology by pointing out the need to consider how learning occurs through and with computers. As for AI, Holstein et al. (2019) suggested that in the future, AI-based machines can help teachers perform what Dillenbourg (2013) emphasized as their orchestrator role in the learning and teaching process. For AI to be able to truly help teachers in this way, however, it must first learn effective orchestration of learning and teaching from teachers’ data. This is because effective teaching depends on teachers’ capability to implement appropriate pedagogical methods in their instruction (Tondeur et al., 2020), and their pedagogically meaningful and productive teaching incidents can serve as models for AI-based educational systems (Prieto et al., 2018). That is, the data collected from the learning setting orchestrated by teachers form the foundation of AI-based teaching. For example, the data may help researchers to understand when and how teaching is effectively progressing (Luckin & Cukurova, 2019; Luckin et al., 2016). To prove that the role of teachers in providing the data on features of effective learning is crucial for the development of AI algorithms, we investigated the kind of data collected from teachers and teachers’ roles in the creation of AI algorithms.

To effectively integrate AI-based education in schools, teachers must be empowered to implement such integration by endowing them with the requisite knowledge, skills, and attitudes (Häkkinen et al., 2017 ; Kirschner, 2015 ; Seufert et al., 2020 ). However, teachers’ AI-related skills have not yet been sufficiently defined because the potential of AI in education has not yet been fully exploited (Luckin et al., 2016 ). To explore teachers’ AI-related knowledge, skills, and attitudes, their engagement with AI-based systems within their teaching setting has to be investigated in detail (Dillenbourg, 2016 ; Seufert et al., 2020 ). Therefore, in this study, we reviewed empirical research on how teachers interacted with AI-based systems and how they participated in the development of AI-based education systems. We believe that our synthesis of empirical research on the topic will contribute to the identification of AI-related teaching skills and the effective implementation of AI-based education in schools with the support of teachers.

This study explored the perspective and roles of teachers in AI-based research through a systematic review of the latest research on the topic. Our specific research questions (RQs) are as follows:

RQ1—What was the distribution over time of the studies that examined teachers’ AI use?

RQ2—What data were collected from teachers in the studies on AI-based education?

RQ3—What were the roles of teachers in AI-based research?

RQ4—What advantages did AI offer teachers?

RQ5—What challenges did teachers face when using AI for education?

RQ6—Which AI methods were utilized in AI-based research that teachers participated in?

Table 1 below lists these RQs with their corresponding rationales.

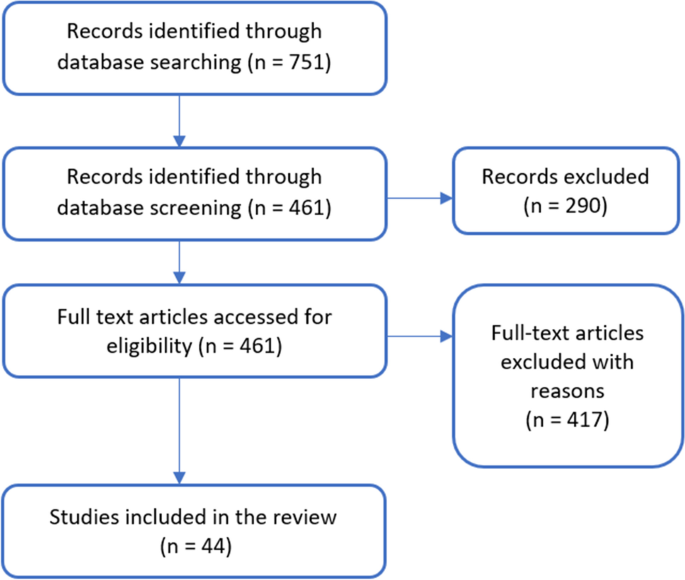

Manuscript Search and Selection Criteria

In reviews of research, several methods are used to select the studies that will be reviewed. Studies published in important journals of a given domain are selected from databases such as ProQuest (Heitink et al., 2016 ), Education Resources Information Center (ERIC), and the Social Science Citation Index (SSCI) (Akçayır & Akçayır, 2017 ; Kucuk et al., 2013 ). For this review, we selected English-language scientific studies on teachers’ AI use that were published in journals from the Web of Science (WoS) database within the last 20 years until 14 September 2020. We used this method because the field tags (e.g., the topic and research area) of the studies were easy to access from the WoS database (Luor et al., 2008 ). We used the following search string: “artificial intelligence,” “deep learning,” “reinforcement learning,” “supervised learning,” “unsupervised learning,” “neural network,” “ANN,” “natural language processing,” “fuzzy logic,” “decision trees,” “ensemble,” “Bayesian,” “clustering,” and “regularization.” To narrow our search, we used “teacher,” “teacher education,” “teacher professional development,” “K-12,” “middle school*,” “high school*,” “elementary school*,” and “kindergarten*.” We selected the search strings based on the main concepts of AI in education in past studies and literature reviews (Baran, 2014 ; Zawacki-Richter et al., 2019 ). Figure 1 presents our study search procedure.
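The way the two term lists narrow the search can be sketched as a Boolean query. The term lists are taken from the text above; joining terms with OR inside each list, joining the two lists with AND, and the quoting style are assumptions, since the exact query syntax entered into WoS is not reported.

```python
# Terms as listed in the text above.
AI_TERMS = [
    "artificial intelligence", "deep learning", "reinforcement learning",
    "supervised learning", "unsupervised learning", "neural network", "ANN",
    "natural language processing", "fuzzy logic", "decision trees",
    "ensemble", "Bayesian", "clustering", "regularization",
]
TEACHER_TERMS = [
    "teacher", "teacher education", "teacher professional development",
    "K-12", "middle school*", "high school*", "elementary school*",
    "kindergarten*",
]

def or_group(terms):
    """Join terms into one parenthesized OR group (assumed syntax)."""
    return "(" + " OR ".join(f'"{term}"' for term in terms) + ")"

def build_query(ai_terms, teacher_terms):
    """A record must match any AI term AND any teacher-related term."""
    return f"{or_group(ai_terms)} AND {or_group(teacher_terms)}"

query = build_query(AI_TERMS, TEACHER_TERMS)
print(query)
```

Keeping the two lists separate makes it easy to rerun the search with an extended vocabulary, for example when a later review adds newer AI terms.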

Fig. 1 Flow chart for the selection of articles

In our first search, we found 751 studies. Next, we checked them to see if they met our inclusion and exclusion criteria. Our inclusion criteria were as follows: (a) empirical studies on AI in pre-service and in-service teacher education and on in-service teachers’ use of AI; (b) studies on AI applications and algorithms (e.g., personal tutors, automated scoring, personal assistant; decision trees, and artificial neural networks) for teaching or analyzing teachers’ data; and (c) studies on data collected from in-service K-12 teachers or pre-service teachers. We excluded editorials, reviews, and studies conducted at the higher education level. After we applied the criteria, 44 articles remained suitable for inclusion in this study.
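The screening step can be pictured as a filter over the retrieved records. The sketch below is illustrative only: the `Record` fields, their values, and the sample records are hypothetical, and in the review itself the criteria were applied by the authors reading each study rather than by a script.

```python
from dataclasses import dataclass

@dataclass
class Record:
    # All fields are hypothetical metadata a screener might note per study.
    title: str
    doc_type: str            # "empirical", "review", or "editorial"
    population: str          # "in-service K-12", "pre-service", "higher education"
    uses_ai_method: bool     # criterion (b): AI application or algorithm involved
    has_teacher_data: bool   # criterion (c): data collected from teachers

def is_included(record: Record) -> bool:
    """Apply the exclusion rules first, then the three inclusion criteria."""
    if record.doc_type in {"editorial", "review"}:
        return False
    if record.population == "higher education":
        return False
    return (record.doc_type == "empirical"
            and record.uses_ai_method
            and record.has_teacher_data)

records = [
    Record("Essay scoring validated by teacher grades", "empirical",
           "in-service K-12", True, True),
    Record("AI in education: an editorial", "editorial",
           "in-service K-12", False, False),
    Record("Predicting university dropout", "empirical",
           "higher education", True, False),
    Record("Pre-service teacher survey analyzed with ANN", "empirical",
           "pre-service", True, True),
]

included = [r.title for r in records if is_included(r)]
print(included)
```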

Data Coding and Analysis

The publication year of the articles was noted to determine the distribution of the studies over time (RQ1). For RQ2, the following categories and category numbers were assigned to the data collected from teachers in previous AI-based research: self-report (1), video (2), interview (3), observation (4), feedback/discourse (5), grading (6), eye tracking (7), audiovisual/accelerometry (8), and log file (9). We qualitatively analyzed the content of the 44 articles to determine the advantages and challenges of AI for teachers (RQ4 and RQ5, respectively) and teachers’ roles in AI-based instruction as found in research (RQ3). We did not code the studies with a preliminary or template coding scheme, which would have unnecessarily limited them by fitting them into a pre-determined scheme (Şimşek & Yıldırım, 2011). Instead, we used an open coding process (Akçayır & Akçayır, 2017; Williamson, 2015), which followed these steps: (1) Become familiar with the whole set of articles; (2) Choose a document randomly, consider its primary meaning, and write down thoughts on that meaning in the margin of the document; (3) List all thoughts on the subject, combine similar thoughts, create three columns for key, unique, and leftover thoughts, and put each thought in the appropriate column; (4) Code the text; (5) Find the most illustrative phrases for the thoughts and turn them into categories; (6) Decide on an abbreviation for each category and alphabetize these abbreviations; (7) Incorporate the final codes and perform the initial analysis; and (8) Recode the studies if needed. To classify the AI methods (RQ6), we used previous literature reviews of AI use in diverse areas such as higher education, medicine, and business (Borges et al., 2020; Contreras & Vehi, 2018). We used investigator triangulation to ensure the reliability of the coding process (Denzin, 2017).
Accordingly, the first author coded the articles separately and then shared the codes with the second author. We negotiated disagreements by checking the code list and the relevant studies, and we updated and renamed some categories. Finally, we recoded the studies using the final code list.
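The RQ2 category numbers and the comparison of two coders' codes can be represented with a small data structure. The code-to-category mapping follows the list given above; the study identifiers and the codes assigned to them are hypothetical, and the helper functions are only one possible way to tally frequencies and surface disagreements for discussion.

```python
from collections import Counter

# Category numbers for RQ2, as listed in the text above.
DATA_TYPE_CODES = {
    1: "self-report", 2: "video", 3: "interview", 4: "observation",
    5: "feedback/discourse", 6: "grading", 7: "eye tracking",
    8: "audiovisual/accelerometry", 9: "log file",
}

# Hypothetical coding results; a study may carry more than one data type.
first_coder = {"study_A": [1], "study_B": [4, 3], "study_C": [1, 6], "study_D": [7, 8]}
second_coder = {"study_A": [1], "study_B": [4], "study_C": [1, 6], "study_D": [7, 8]}

def tally(coded):
    """Return {category: (frequency, percent of all coded data types)}."""
    counts = Counter(code for codes in coded.values() for code in codes)
    total = sum(counts.values())
    return {DATA_TYPE_CODES[code]: (n, round(100 * n / total, 1))
            for code, n in counts.most_common()}

def disagreements(coder_a, coder_b):
    """Studies whose code sets differ and therefore need negotiation."""
    return sorted(s for s in coder_a if set(coder_a[s]) != set(coder_b.get(s, [])))

frequencies = tally(first_coder)
to_discuss = disagreements(first_coder, second_coder)
print(frequencies)
print(to_discuss)
```

Counting each data type separately, rather than each study once, matches the situation described in the results where some studies contributed more than one type of teacher data.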

Results and Discussion

Distribution of the studies.

(RQ1—What was the distribution over time of the studies that examined teachers’ AI use?)

Our analysis indicated that the first study on teachers’ AI use was published in 2004. Of the 44 studies we reviewed, 22 were published in 2018 and the following years. It has been forecast that the usage of educational AI applications will increase (Qin et al., 2020; Zawacki-Richter et al., 2019). Such an increase is reflected in our finding that the publication of studies on AI-based teaching increased after 2017. Figure 2 presents the research trend on AI and teachers.

Fig. 2 Number of articles published by year

Figure 2 further indicates that research on teachers’ AI use in education intensified in the last four years. This implies that AI-based instruction by teachers is likely to become more common in the near future. Supporting this, a review of literature on the topics “AI” and “education” showed that studies published between 2015 and 2019 accounted for 70% of all the studies from Web of Science and Google Scholar since 2010 (Chen et al., 2020). The availability of AI technologies and of educational software companies to create AI-based applications is increasing rapidly all over the world (Renz & Hilbig, 2020). Accordingly, it seems likely that teachers’ use of AI in the teaching process will grow and more studies will be conducted on this topic.

On the other hand, there are still fewer studies on AI use in education than in other areas such as medicine and business (Borges et al., 2020 ; Luckin & Cukurova, 2019 ). The educational technology (EdTech) market is growing much more slowly than other markets with respect to the dynamics of digital transformation. One of the reasons for this is the resistance of decision-makers such as educators, teachers, and traditional textbook publishers to the use of AI (EdTechXGlobal Report, 2016 ). Considering this resistance, it can be argued that more AI research is needed to show the pedagogical uses of AI in instructional processes and to speed up the uptake of AI technologies in education.

Data Types Collected from Teachers

(RQ2—What data were collected from teachers in the studies on AI-based education?)

Self-reported data were the most common data collected from teachers in the AI-based education studies. The researchers collected self-reported data to predict teacher-related variables such as engagement, performance, and teaching quality. In these studies, machine learning algorithms were used instead of conventional regression analysis to reveal nonlinear relationships between variables of teaching practice. For instance, Wang et al. (2020) collected data from 165 early childhood teachers to better understand indicators of quality teacher–child interaction. Similarly, in Yoo and Rho (2020), teachers’ self-reported job satisfaction was predicted by a machine learning technique. In some AI studies, teacher grades of student assignments or essays were used to train AI algorithms. For example, Yuan et al. (2020), in developing an automated scoring approach, needed expert teachers’ grades to validate their AI-based scoring system. A notable finding from our review is that self-reported data accounted for nearly 44% of all data obtained from teachers (Fig. 3).

In 11 of the studies that we reviewed, teachers provided more than one type of data. The data were mostly collected during or after teachers’ instruction. Our review findings highlight the crucial role of teachers in the instructional process (e.g., Huang et al., 2010 ; Lu, 2019 ; McCarthy et al., 2016 ; Pelham et al., 2020 ). For example, Schwarz et al. ( 2018 ) presented an online learning environment that uses machine learning to inform teachers about learners’ critical moments in collaborative learning by sending the teachers warnings. In their study, they observed how the teacher guided several groups at different times in a mathematics classroom. In addition to observations, they collected interview data from the teachers about the effectiveness of the online environment. Our review indicates that there is a significant gap in physiological data collection in AI studies with teachers. Only one of the studies we reviewed collected physiological data, that is, data on eye tracking and audiovisual/accelerometry data from sensors worn by the teachers (Prieto et al., 2018 ). In fact, physiological data can be considered relevant and useful for providing process-oriented, objective metrics regarding the critical moments that impact the quality of teaching or learning in an educational activity (Järvelä et al., 2021 ).

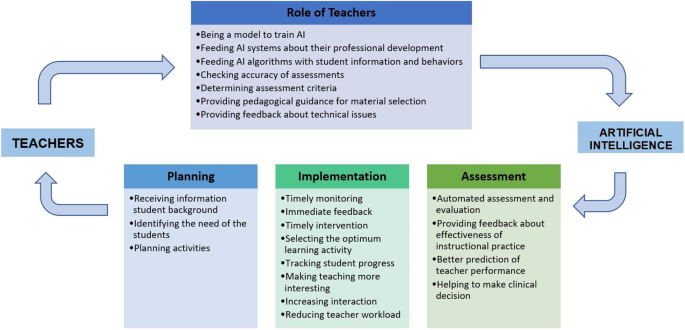

The Roles of Teachers in AI-based Research

(RQ3—What were the roles of teachers in AI-based research?)

Findings from our open-coding analysis indicate that teachers have seven roles in AI research. These roles and their descriptions are shown in Table 2. As seen from the table, teachers participated in AI research as models to train AI algorithms. This was the most common role of teachers in AI-based instruction (f = 18). This finding underlines the pivotal role of teachers in the development of AI-based education systems. For instance, Kelly et al. (2018) conducted a study to train AI algorithms to automatically detect teachers’ authentic questions in real-life classrooms. During the training of the AI algorithms, the teachers’ effective authentic questions were fed to the AI system as features. Following the AI training, the researchers tested the AI system in a different classroom and found that it successfully identified authentic questions.

Another role that teachers were observed to have in AI research was providing big data to AI systems to enable them to forecast teachers’ professional development. In this line of research, teachers mostly provided data to AI systems for the latter’s prediction of different variables of the professional development of teachers such as their job satisfaction, performance, and engagement. For example, in one study, 10,642 teachers answered a survey (Buddhtha et al., 2019 ). Then, using AI, predictors of teacher engagement were determined. Similar to other areas, big data have played an important role in education, and teachers are considered among the most important sources of big data (Ruiz-Palmero et al., 2020 ). Our findings imply that AI can effectively inform teachers of their professional development.

This study also found that teachers involved in AI research provided input information on students’ characteristics for the AI-based implementation. For example, Nikiforos et al. ( 2020 ) investigated automatic detection of learners’ aggressive behavior in a virtual learning community. The AI system utilized teacher observations of students’ behavioral characteristics to predict the students who were more likely to bully others in the online community. Our review further revealed that teachers have taken on the role of grading assignments and essays to test the accuracy of AI algorithms in grading student performance. In such studies, the accuracy rate of the AI-based assessment was determined with the help of experienced teacher assessments (Bonneton-Botté et al., 2020 ; Gaudioso et al., 2012 ; McCarthy et al., 2016 ; Yuan et al., 2020 ).
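Checking AI grading against teacher grading, as described above, amounts to comparing two score lists for the same student work. The sketch below assumes integer scores on a shared scale and uses exact and adjacent agreement as the comparison; the scores are hypothetical, and the reviewed studies may have used other accuracy measures.

```python
def agreement_rates(ai_scores, teacher_scores, tolerance=1):
    """Return (exact, adjacent) agreement between AI and teacher scores.

    exact:    share of items with identical scores
    adjacent: share of items whose scores differ by at most `tolerance`
    """
    if not ai_scores or len(ai_scores) != len(teacher_scores):
        raise ValueError("score lists must be non-empty and of equal length")
    pairs = list(zip(ai_scores, teacher_scores))
    exact = sum(a == t for a, t in pairs) / len(pairs)
    adjacent = sum(abs(a - t) <= tolerance for a, t in pairs) / len(pairs)
    return exact, adjacent

# Hypothetical scores for five essays on a 1-5 scale.
ai = [3, 4, 2, 5, 1]
teacher = [3, 5, 2, 3, 1]

exact, adjacent = agreement_rates(ai, teacher)
print(f"exact agreement: {exact:.0%}, adjacent agreement: {adjacent:.0%}")
```

Treating the experienced teacher's score as the reference is the design choice here: the AI system is judged by how closely it reproduces expert judgment, not the other way around.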

In some AI-based education studies, teachers determined the criteria for some components of AI-based systems and assessments. For example, Huang et al. ( 2010 ) investigated the effect of the learning assistance tool ICT Literacy . The tool used machine learning. In their study, experienced teachers guided the AI system by defining the criteria for effective and timely feedback. In some studies, teachers also provided pedagogical guidance on the selection of materials for AI-based implementation. For example, Fitzgerald et al. ( 2015 ) utilized AI to present learning content with varying degrees of text complexity to early-grade students. They attempted to explore early-grade text complexity features. Text complexity in the AI system was determined based on teachers’ pedagogical guidance. Furthermore, teachers commented on the usability and design of AI-based technologies (Burstein et al., 2004 ). Finally, our results revealed a notable absence of pre-service teachers as participants in AI use studies. That is, there were no studies in which pre-service teachers actively participated or interacted with AI technologies.

Advantages of AI for Teachers

(RQ4—What advantages did AI offer teachers?)

We found several advantages of AI from our review of selected empirical studies on teachers’ AI use. The open coding revealed three categories of AI advantages: planning, implementation, and assessment (see Table 3 ).

Planning

The advantages of AI related to planning involved receiving information on students’ backgrounds and assisting teachers in deciding on the learning content during lesson planning. In one study, an AI system provided teachers with background information on students’ risk factors for delinquency, such as aggression (Pelham et al., 2020). In terms of teacher assistance in planning learning content, Dalvean and Enkhbayar (2018) used machine learning to classify the readability of English fiction texts. The results of their study suggested that the classification can help English teachers to plan course content with the readability features in mind (Table 4).

Implementation

According to our review (see Table 3), the most prominent advantage of AI was timely monitoring of learning processes (f = 12). For example, Su et al. (2014) developed a sensor-based learning concentration detection system using AI in a classroom environment. The system allowed teachers to monitor the degree of students’ concentration on lesson activities. Such AI-based monitoring can help teachers to provide immediate feedback (Burstein et al., 2004; Huang et al., 2010, 2011) and quickly perform the necessary interventions (Nikiforos et al., 2020; Schwarz et al., 2018). For instance, teachers were able to discover critical moments in group learning and provide adaptive interventions for all the groups (Schwarz et al., 2018). Hence, AI systems can decrease the teaching burden on teachers by providing them with feedback and assisting them with planning interventions and with student monitoring. In several studies, these contributions to teachers were particularly emphasized (Lu, 2019; Ma et al., 2020). Therefore, we assume that reduced teaching load may be another significant advantage of AI systems in education. For example, researchers reported that teachers benefitted from an AI-based peer tutor recommender system and saved time for other activities (Ma et al., 2020).

Our findings further revealed that AI can enable teachers to select or adapt the optimum learning activity based on AI feedback. For example, in Bonneton-Botté et al. (2020), teachers decided to implement exercises such as writing letters and numbers for students with a low graphomotor level based on the feedback they received from AI. According to our synthesis, AI can also make the teaching process more interesting for teachers. Teachers reported that AI tutors facilitated enjoyable teaching experiences for them by breaking the monotony in the classroom (McCarthy et al., 2016). We also found that AI algorithms can increase opportunities for teacher-student interaction by capturing and analyzing data from productive moments (Lamb & Premo, 2015) and tracking student progress (Farhan et al., 2018).

Assessment

According to our review, AI helps teachers in exam automation and essay scoring and in decision-making on student performance. It has been found that an automated essay scoring system can not only significantly advance the effectiveness of essay scoring but also make scoring more objective (Yuan et al., 2020 ). Therefore, researchers are interested in the use of AI affordances to investigate automated systems. An important utility of AI-based applications in the context of assessment is to detect plagiarism in student essays (Dawson et al., 2020 ). Several existing AI-based systems (e.g., Turnitin) allow teachers to check the authenticity of essays submitted by students in graduate courses (Alharbi & Al-Hoorie, 2020 ). This can be considered an important utility of AI in student assessment. We coded seven studies on the advantage of exam automation and essay scoring. Six of these studies investigated the scoring of student-related outcomes (Annabestani et al., 2020 ; Huang et al., 2010 ; Tepperman et al., 2010 ; Yuan et al., 2020 ; Vij et al., 2020 ; Yang, 2012 ), and one study used AI-based systems to score teachers’ open-ended responses, to assess usable mathematics teaching knowledge (Kersting et al., 2014 ). We suggest that more studies be conducted on automatic scoring of teacher-related variables such as technological and pedagogical knowledge. Considering that classroom video analysis (CVA) assessment is capable of scoring and assessing teacher knowledge (Kersting et al., 2014 ), CVA can be used in both in-service and pre-service teacher education, particularly on micro-teaching methods. For example, natural language processing methods (Bywater et al., 2019 ) can utilize existing CVA scoring schemes to detect teachers’ verbal communication patterns in conveying instructional content to students. Furthermore, machine vision methods (Ozdemir & Tekin, 2016 ) can be applied to teachers’ video recordings to observe the patterns in their body posture. 
Such methods may provide valuable feedback to novice teachers on developing their teaching skills.

AI could also help provide teachers feedback on the effectiveness of their instructional practice (Farhan et al., 2018 ; Lamb & Premo, 2015 ). Teachers’ pedagogically meaningful teaching aspects can be modeled automatically using multiple data sources and AI (Dillenbourg, 2016 ; Prieto et al., 2018 ). Through these models, teachers can improve their instructional practices. Besides, the pedagogically effective models can train AI algorithms to make them more sophisticated.

Also, AI technologies were used to better predict or assess teacher performance or outcomes. Researchers predicted pre-service or in-service teachers’ professional development outcomes such as course achievement using machine learning algorithms, which are beneficial in revealing complex and nonlinear relationships. While seven studies collected data from in-service teachers, two studies obtained data from pre-service teachers (Akgün & Demir, 2018 ; Demir, 2015 ).

In addition, Cohen et al. ( 2017 ) conducted a study on a sample with autism spectrum disorder and another sample without. The results revealed that a machine learning tool can provide accurate and informative data for diagnosing autism spectrum disorder. In the study of Cohen et al., teachers commented on the accuracy of the tool.

Figure 4 illustrates the role of teachers in AI research and the advantages of AI for teachers. This gives us ideas about AI expectations from teachers and AI opportunities for teachers.

Fig. 4 Advantages of AI and teacher roles in AI research

Challenges in AI Use by Teachers

(RQ5—What challenges did teachers face when using AI for education?)

The challenges in teachers’ use of AI are summarized in Table 3. One of the most observed challenges is the limited technical capacity of AI. For example, AI may not be effective at scoring content that combines graphics or figures with text. Fitzgerald et al. (2015) reported that an AI-based system failed to assess the complexity of texts when they included images. The limited reliability of the AI algorithm was found to be another considerable challenge. Therefore, automated writing evaluation technologies that use AI algorithms have to be improved to provide trustworthy evaluations for teachers (Qian et al., 2020). Inefficiency of AI systems in assessment and evaluation is related more to validity than to reliability. AI-based scoring may sometimes improperly evaluate performance (Lu, 2019). Our review further indicated that AI systems may be so context-dependent that using them in varying educational settings can be challenging. For example, an AI algorithm designed to detect specific behavior in a specific online learning environment may not work in different languages (Nikiforos et al., 2020). In other words, this limitation can stem from cultural differences.

The lack of technological knowledge of teachers (Chiu & Chai, 2020 ) and the lack of technical infrastructure in schools (McCarthy et al., 2016 ) are two other challenges in integrating AI into education. It has also been reported that AI-based feedback is sometimes slow. This can lead to teacher boredom in using AI (McCarthy et al., 2016 ). Although adaptive and personalized feedback is important for teachers to reduce their workload, AI systems are not always capable of giving different kinds of feedback based on students’ needs (Burstein et al., 2004 ). Therefore, AI systems currently fall short of meeting the needs of teachers for effective feedback (Fig. 5 ).

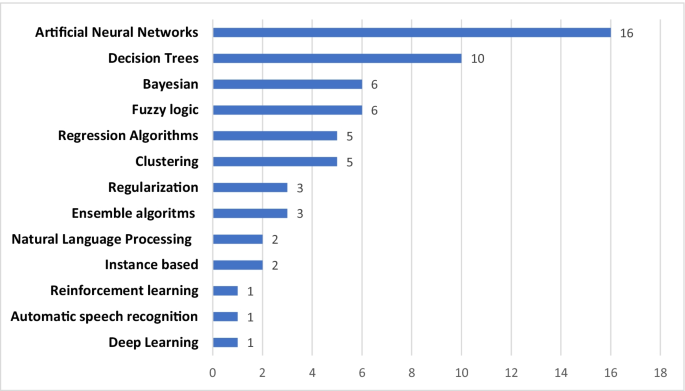

Fig. 5 AI methods in the reviewed studies

AI Methods in Research

(RQ6—Which AI methods were utilized in AI-based research that teachers participated in?)

We coded AI methods in the studies, following previous reviews (Borges et al., 2020; Contreras & Vehi, 2018; Saa et al., 2019). Artificial neural networks (ANN) appeared to have been the most used (f = 16) AI method in the education studies involving teachers. ANN is a machine learning method that is widely used in business, economics, engineering, and higher education (Musso et al., 2013). According to our review, ANN was also commonly used to process data sourced from teachers. For example, Alzahrani et al. (2020) investigated the relationship between thermal comfort and teacher performance. Through ANN analysis, they analyzed the data related to teachers’ productivity and the classroom temperature. Decision trees, another machine learning algorithm, were frequently utilized in our reviewed studies. For instance, Gaudioso et al. (2012) used decision tree algorithms on data to support teachers in detecting moments in which students were having problems in an adaptive educational system. Consistent with the frequent use of decision trees in our reviewed studies, a review of predictive machine learning methods for university students’ academic performance found that the decision tree algorithm was the most commonly used (Saa et al., 2019).

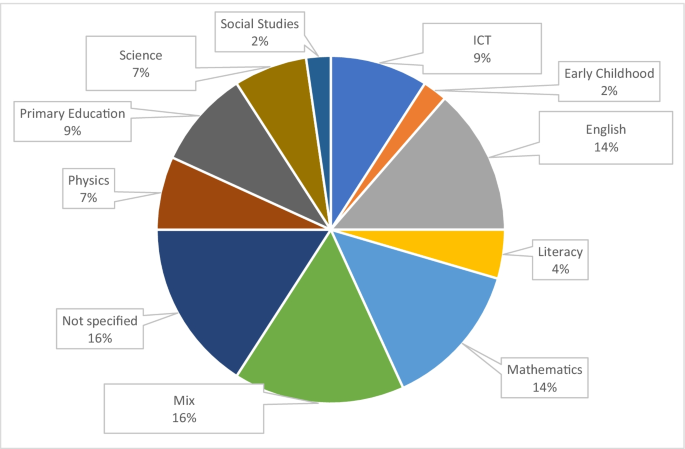

In our review, we also investigated the subject domains of teachers’ AI-based instruction. The studies with teachers from various domains accounted for 16% of all research (see Fig. 6). These studies generally had a larger sample size than the studies with teachers from a single domain (e.g., Buddhtha et al., 2019). Primary education and the English language appeared to be the domains where teachers use AI the most. Studies on automated essay scoring and adaptive feedback were conducted in English language courses. We found that 46% of all the studies we reviewed were performed in fields related to science, technology, engineering, and mathematics (STEM), while a much smaller percentage were performed in the social science and early childhood fields combined. This might be because teachers in STEM fields are more accustomed to technology use (Chai et al., 2020).

Distribution of studies by subject domain

Conclusions and Future Research

Owing to the growing interest in AI, the number of studies on teachers’ use of AI has increased in the last few years, yet more studies are needed to understand how teachers use AI. As AI continues to gain popularity in education, more research will likely focus on AI use in teachers’ instruction. Our synthesis of relevant studies shows that there has been little interest in investigating AI in pre-service teacher education. Hence, we recommend more empirical studies on pre-service teachers’ AI use. Developing AI awareness and skills among pre-service teachers may facilitate better adoption of AI-based teaching in future classrooms. As Valtonen et al. (2021) have shown, teachers’ and students’ use of emerging technologies can make a major contribution to the development of 21st-century practices in schools.

Another gap we found in our review is the limited variety of methods and data channels used in AI-based systems. It seems that AI-based systems in education do not exploit the potential of multimodal data. Most of the AI applications that teachers use utilize only self-reported and/or observation data, while different data modalities can create more opportunities to understand teaching and learning processes (Järvelä & Bannert, 2021 ). Enriching AI systems with other data types (e.g., physiological data) may give a better understanding of different layers of teaching and learning, and thus, help teachers to plan effective learning interventions, provide timely feedback and conduct more accurate assessments of students’ cognitive and emotional states during the instruction. Utilizing multimodal data can help to model more efficient and effective AI systems for education. Thus, we conclude that further work is necessary to improve the capabilities of AI systems with multimodal data.

Our review revealed that teachers have limited involvement in the development of AI-based education systems. Although in some studies, experienced teachers were recruited to train AI algorithms, further efforts are needed to involve a wider population of teachers in developing AI systems. Such involvement should go beyond training AI algorithms and involve teachers in the crucial decision-making processes on how (not) to develop AI systems for better teaching. For their part, AI developers and software companies should consider involving teachers in the development process to a greater extent.

This study showed that AI has generally been reported as beneficial to teachers’ instruction. Teachers can take advantage of AI in their planning, implementation, and assessment work. AI assists them in identifying their students’ needs so that they can determine the most suitable learning content and activities for their students. During activities such as collaborative tasks, AI can help teachers monitor their students in a timely manner and give them immediate feedback (e.g., Swiecki et al., 2019). After the instruction, AI-based automated scoring systems can help teachers with assessment (e.g., Kersting et al., 2014). These advantages mainly reduce teachers’ workload and help them to focus their attention on critical issues such as timely intervention and assessment (Vij et al., 2020). However, many of the studies reviewed were conducted to predict outcome variables (e.g., performance, engagement, and job satisfaction) through machine learning algorithms (Yoo & Rho, 2020). More studies are needed to enable AI systems to provide information and feedback on how learning processes unfold over time during teachers’ instruction. Teachers will then be able to interact with actual AI systems and better understand the opportunities they offer.

This study revealed several limitations and challenges of AI for teachers’ use such as its limited reliability, technical capacity, and applicability in multiple settings. Future empirical research is necessary to address the challenges reported in this study. We conclude that developing AI systems that are technically and pedagogically capable of contributing to quality education in diverse learning settings is yet to be achieved. To achieve this objective, multidisciplinary collaboration between multiple stakeholders (e.g., AI developers, pedagogical experts, teachers, and students) is crucial. We hope that this review will serve as a springboard for such collaboration.

*References marked with an asterisk indicate the articles included in the review.

Aggarwal, C. C. (2018). Neural networks and deep learning. Springer. https://doi.org/10.1007/978-3-319-94463-0

Akçayır, M., & Akçayır, G. (2017). Advantages and challenges associated with augmented reality for education: A systematic review of the literature. Educational Research Review, 20 , 1–11. https://doi.org/10.1016/j.edurev.2016.11.002

*Akgün, E., & Demir, M. (2018). Modeling course achievements of elementary education teacher candidates with artificial neural networks. International Journal of Assessment Tools in Education , 5 (3), 491–509. https://doi.org/10.21449/ijate.444073

Alenezi, H. S., & Faisal, M. H. (2020). Utilizing crowdsourcing and machine learning in education: Literature review. Education and Information Technologies , 1-16. https://doi.org/10.1007/s10639-020-10102-w

Alharbi, M. A., & Al-Hoorie, A. H. (2020). Turnitin peer feedback: Controversial vs. non-controversial essays. International Journal of Educational Technology in Higher Education, 17 , 1–17. https://doi.org/10.1186/s41239-020-00195-1

Alloghani, M., Al-Jumeily, D., Mustafina, J., Hussain, A., & Aljaaf, A. J. (2020). A systematic review on supervised and unsupervised machine learning algorithms for data science. In Supervised and Unsupervised Learning for Data Science (pp. 3–21). Springer, Cham. https://doi.org/10.1007/978-3-030-22475-2_1

Alzahrani, H., Arif, M., Kaushik, A., Goulding, J., & Heesom, D. (2020). Artificial neural network analysis of teachers’ performance against thermal comfort. International Journal of Building Pathology and Adaptation . https://doi.org/10.1108/IJBPA-11-2019-0098

Annabestani, M., Rowhanimanesh, A., Mizani, A., & Rezaei, A. (2020). Fuzzy descriptive evaluation system: Real, complete and fair evaluation of students. Soft Computing, 24 (4), 3025–3035. https://doi.org/10.1007/s00500-019-04078-0

Baker, T., & Smith, L. (2019). Educ-AI-tion rebooted? Exploring the future of artificial intelligence in schools and colleges . Retrieved from Nesta Foundation website: https://media.nesta.org.uk/documents/Future_of_AI_and_education_v5_WEB.pdf

Baran, E. (2014). A review of research on mobile learning in teacher education. Journal of Educational Technology & Society, 17 (4), 17–32.

*Bonneton-Botté, N., Fleury, S., Girard, N., Le Magadou, M., Cherbonnier, A., Renault, M., ... & Jamet, E. (2020). Can tablet apps support the learning of handwriting? An investigation of learning outcomes in kindergarten classroom. Computers & Education , 151 , 103831. https://doi.org/10.1016/j.compedu.2020.103831

Borges, A. F., Laurindo, F. J., Spínola, M. M., Gonçalves, R. F., & Mattos, C. A. (2020). The strategic use of artificial intelligence in the digital era: Systematic literature review and future research directions. International Journal of Information Management , 102225. https://doi.org/10.1016/j.ijinfomgt.2020.102225

Bonk, C. J., & Wiley, D. A. (2020). Preface: Reflections on the waves of emerging learning technologies. Educational Technology Research and Development, 68 (4), 1595–1612. https://doi.org/10.1007/s11423-020-09809-x

Buddhtha, S., Natasha, C., Irwansyah, E., & Budiharto, W. (2019). Building an artificial neural network with backpropagation algorithm to determine teacher engagement based on the indonesian teacher engagement index and presenting the data in a Web-Based GIS. International Journal of Computational Intelligence Systems, 12 (2), 1575–1584. https://doi.org/10.2991/ijcis.d.191101.003

Burstein, J., Chodorow, M., & Leacock, C. (2004). Automated essay evaluation: The Criterion online writing service. Ai Magazine, 25 (3), 27–27. https://doi.org/10.1609/aimag.v25i3.1774

Bywater, J. B., Chiu, J. L., Hong, J., & Sankaranarayanan, V. (2019). The teacher responding tool: Scaffolding the teacher practice of responding to student ideas in mathematics classrooms. Computers & Education, 139, 16–30. https://doi.org/10.1016/j.compedu.2019.05.004

Chai, C. S., Jong, M., & Yan, Z. (2020). Surveying Chinese teachers’ technological pedagogical STEM knowledge: A pilot validation of STEM-TPACK survey. International Journal of Mobile Learning and Organisation, 14 (2), 203–214. https://doi.org/10.1504/IJMLO.2020.106181

Chen, L., Chen, P., & Lin, Z. (2020). Artificial intelligence in education: A review. IEEE Access, 8 , 75264–75278. https://doi.org/10.1109/ACCESS.2020.2988510

Chiu, T. K., & Chai, C. S. (2020). Sustainable curriculum planning for artificial intelligence education: A self-determination theory perspective. Sustainability, 12 (14), 5568. https://doi.org/10.3390/su12145568

Clark, D. (2020). Artificial intelligence for learning: How to use AI to support employee development. Kogan Page Publishers.

*Cohen, I. L., Liu, X., Hudson, M., Gillis, J., Cavalari, R. N., Romanczyk, R. G., ... & Gardner, J. M. (2017). Level 2 Screening with the PDD Behavior Inventory: Subgroup Profiles and Implications for Differential Diagnosis. Canadian Journal of School Psychology , 32 (3-4), 299-315. https://doi.org/10.1177/0829573517721127

Contreras, I., & Vehi, J. (2018). Artificial intelligence for diabetes management and decision support: Literature review. Journal of Medical Internet Research, 20 (5), e10775. https://doi.org/10.2196/10775

Cope, B., Kalantzis, M., & Searsmith, D. (2020). Artificial intelligence for education: Knowledge and its assessment in AI-enabled learning ecologies. Educational Philosophy and Theory , 1–17.

Cukurova, M., & Luckin, R. (2018). Measuring the impact of emerging technologies in education: A pragmatic approach. Springer, Cham. https://discovery.ucl.ac.uk/id/eprint/10068777

*Dalvean, M., & Enkhbayar, G. (2018). Assessing the readability of fiction: A corpus analysis and readability ranking of 200 English fiction texts. Linguistic Research, 35, 137–170. https://doi.org/10.17250/khisli.35.201809.006

Dawson, P., Sutherland-Smith, W., & Ricksen, M. (2020). Can software improve marker accuracy at detecting contract cheating? A pilot study of the Turnitin authorship investigate alpha. Assessment & Evaluation in Higher Education, 45 (4), 473–482.

*Demir, M. (2015). Predicting pre-service classroom teachers’ civil servant recruitment examination’s educational sciences test scores using artificial neural networks. Educational Sciences: Theory & Practice, 15(5). https://doi.org/10.12738/estp.2015.5.0018

Denzin, N. K. (2017). The research act: A theoretical introduction to sociological methods . Transaction publishers.

Dillenbourg, P. (2013). Design for classroom orchestration. Computers & Education, 69, 485–492. https://doi.org/10.1016/j.compedu.2013.04.013

Dillenbourg, P. (2016). The evolution of research on digital education. International Journal of Artificial Intelligence in Education, 26 (2), 544–560. https://doi.org/10.1007/s40593-016-0106-z

EdTechXGlobal. (2016). EdTechXGlobal report 2016—Global EdTech industry report: a map for the future of education and work. Retrieved from http://ecosystem.edtechxeurope.com/2016-edtech-report

Farhan, M., Jabbar, S., Aslam, M., Ahmad, A., Iqbal, M. M., Khan, M., & Maria, M. E. A. (2018). A real-time data mining approach for interaction analytics assessment: IoT based student interaction framework. International Journal of Parallel Programming, 46 (5), 886–903. https://doi.org/10.1007/s10766-017-0553-7

Fitzgerald, J., Elmore, J., Koons, H., Hiebert, E. H., Bowen, K., Sanford-Moore, E. E., & Stenner, A. J. (2015). Important text characteristics for early-grades text complexity. Journal of Educational Psychology, 107 (1), 4. https://doi.org/10.1037/a0037289

Gaudioso, E., Montero, M., & Hernandez-Del-Olmo, F. (2012). Supporting teachers in adaptive educational systems through predictive models: A proof of concept. Expert Systems with Applications, 39 (1), 621–625. https://doi.org/10.1016/j.eswa.2011.07.052

Häkkinen, P., Järvelä, S., Mäkitalo-Siegl, K., Ahonen, A., Näykki, P., & Valtonen, T. (2017). Preparing teacher students for 21st century learning practices (PREP 21): A framework for enhancing collaborative problem solving and strategic learning skills. Teachers and Teaching: Theory and Practice, 23 (1), 25–41. https://doi.org/10.1080/13540602.2016.1203772

Heffernan, N. T., & Heffernan, C. L. (2014). The ASSISTments ecosystem: Building a platform that brings scientists and teachers together for minimally invasive research on human learning and teaching. International Journal of Artificial Intelligence in Education, 24 (4), 470–497. https://doi.org/10.1007/s40593-014-0024-x

Heitink, M. C., Van der Kleij, F. M., Veldkamp, B. P., Schildkamp, K., & Kippers, W. B. (2016). A systematic review of prerequisites for implementing assessment for learning in classroom practice. Educational Research Review, 17 , 50–62. https://doi.org/10.1016/j.edurev.2015.12.002

Holmes, W., Bialik, M., & Fadel, C. (2019). Artificial intelligence in education: Promises and Implications for Teaching and Learning . Center for Curriculum Redesign.

Holstein, K., McLaren, B. M., & Aleven, V. (2019). Co-designing a real-time classroom orchestration tool to support teacher–AI complementarity. Journal of Learning Analytics , 6 (2), 27–52. https://doi.org/10.18608/jla.2019.62.3

Hrastinski, S., Olofsson, A. D., Arkenback, C., Ekström, S., Ericsson, E., Fransson, G., ... & Utterberg, M. (2019). Critical imaginaries and reflections on artificial intelligence and robots in post digital K-12 education. Post digital Science and Education , 1 (2), 427-445. https://doi.org/10.1007/s42438-019-00046-x

*Huang, C. J., Liu, M. C., Chang, K. E., Sung, Y. T., Huang, T. H., Chen, C. H., ... & Chang, T. Y. (2010). A learning assistance tool for enhancing ICT literacy of elementary school students. Journal of Educational Technology & Society , 13 (3), 126-138.

Huang, C. J., Wang, Y. W., Huang, T. H., Chen, Y. C., Chen, H. M., & Chang, S. C. (2011). Performance evaluation of an online argumentation learning assistance agent. Computers & Education, 57 (1), 1270–1280. https://doi.org/10.1016/j.compedu.2011.01.013

Järvelä, S., & Bannert, M. (2021). Temporal and adaptive processes of regulated learning – What can multimodal data tell? Learning and Instruction, 72. https://doi.org/10.1016/j.learninstruc.2019.101268

Järvelä, S., Malmberg, J., Haataja, E., Sobocinski, M., & Kirschner, P. A. (2021). What multimodal data can tell us about the students’ regulation of their learning process. Learning and Instruction , 101203 . https://doi.org/10.1016/j.learninstruc.2019.04.004

Kelly, S., Olney, A. M., Donnelly, P., Nystrand, M., & D’Mello, S. K. (2018). Automatically measuring question authenticity in real-world classrooms. Educational Researcher, 47 (7), 451–464. https://doi.org/10.3102/0013189X18785613

Kersting, N. B., Sherin, B. L., & Stigler, J. W. (2014). Automated scoring of teachers’ open-ended responses to video prompts: Bringing the classroom-video-analysis assessment to scale. Educational and Psychological Measurement, 74 (6), 950–974. https://doi.org/10.1177/0013164414521634

Kirschner, P. A. (2015). Do we need teachers as designers of technology enhanced learning? Instructional Science, 43 (2), 309–322. https://doi.org/10.1007/s11251-015-9346-9

Koedinger, K. R., Corbett, A. T., & Perfetti, C. (2012). The Knowledge-Learning-Instruction framework: Bridging the science-practice chasm to enhance robust student learning. Cognitive Science, 36 (5), 757–798. https://doi.org/10.1111/j.1551-6709.2012.01245.x

Kucuk, S., Aydemir, M., Yildirim, G., Arpacik, O., & Goktas, Y. (2013). Educational technology research trends in Turkey from 1990 to 2011. Computers & Education, 68 , 42–50. https://doi.org/10.1016/j.compedu.2013.04.016

Lamb, R., & Premo, J. (2015). Computational modeling of teaching and learning through application of evolutionary algorithms. Computation, 3 (3), 427–443. https://doi.org/10.3390/computation3030427

Langran, E., Searson, M., Knezek, G., & Christensen, R. (2020). AI in Teacher Education. In Society for Information Technology & Teacher Education International Conference (pp. 735–740). Association for the Advancement of Computing in Education (AACE). https://www.learntechlib.org/p/215821/

Lu, X. (2019). An empirical study on the artificial intelligence writing evaluation system in China CET. Big Data, 7 (2), 121–129. https://doi.org/10.1089/big.2018.0151

Luckin, R., & Cukurova, M. (2019). Designing educational technologies in the age of AI: A learning sciences-driven approach. British Journal of Educational Technology, 50 (6), 2824–2838. https://doi.org/10.1111/bjet.12861

Luckin, R., Holmes, W., Griffiths, M., & Forcier, L. B. (2016). Intelligence unleashed: An argument for AI in education . Pearson Education.

Luor, T., Johanson, R. E., Lu, H. P., & Wu, L. L. (2008). Trends and lacunae for future computer assisted learning (CAL) research: An assessment of the literature in SSCI journals from 1998–2006. Journal of the American Society for Information Science and Technology, 59 (8), 1313–1320. https://doi.org/10.1002/asi.20836

Ma, Z. H., Hwang, W. Y., & Shih, T. K. (2020). Effects of a peer tutor recommender system (PTRS) with machine learning and automated assessment on vocational high school students’ computer application operating skills. Journal of Computers in Education, 7 (3), 435–462. https://doi.org/10.1007/s40692-020-00162-9

McCarthy, T., Rosenblum, L. P., Johnson, B. G., Dittel, J., & Kearns, D. M. (2016). An artificial intelligence tutor: A supplementary tool for teaching and practicing braille. Journal of Visual Impairment & Blindness, 110 (5), 309–322. https://doi.org/10.1177/0145482X1611000503

Musso, M. F., Kyndt, E., Cascallar, E. C., & Dochy, F. (2013). Predicting general academic performance and identifying the differential contribution of participating variables using artificial neural networks. Frontline Learning Research, 1(1), 42–71. https://doi.org/10.14786/flr.v1i1.13

Nikiforos, S., Tzanavaris, S., & Kermanidis, K. L. (2020). Virtual learning communities (VLCs) rethinking: Influence on behavior modification—bullying detection through machine learning and natural language processing. Journal of Computers in Education, 7 , 531–551. https://doi.org/10.1007/s40692-020-00166-5

Okada, A., Whitelock, D., Holmes, W., & Edwards, C. (2019). e-Authentication for online assessment: A mixed-method study. British Journal of Educational Technology, 50 (2), 861–875.

Ozdemir, O., & Tekin, A. (2016). Evaluation of the presentation skills of the pre-service teachers via fuzzy logic. Computers in Human Behavior, 61 , 288–299. https://doi.org/10.1016/j.chb.2016.03.013

Pelham, W. E., Petras, H., & Pardini, D. A. (2020). Can machine learning improve screening for targeted delinquency prevention programs? Prevention Science, 21 (2), 158–170. https://doi.org/10.1007/s11121-019-01040-2

Popenici, S. A., & Kerr, S. (2017). Exploring the impact of artificial intelligence on teaching and learning in higher education. Research and Practice in Technology Enhanced Learning, 12 (1), 1–13. https://doi.org/10.1186/s41039-017-0062-8

Prieto, L. P., Sharma, K., Kidzinski, Ł, Rodríguez-Triana, M. J., & Dillenbourg, P. (2018). Multimodal teaching analytics: Automated extraction of orchestration graphs from wearable sensor data. Journal of Computer Assisted Learning, 34 (2), 193–203. https://doi.org/10.1111/jcal.12232

Qian, L., Zhao, Y., & Cheng, Y. (2020). Evaluating China’s automated essay scoring system iWrite. Journal of Educational Computing Research, 58 (4), 771–790. https://doi.org/10.1177/0735633119881472

Qin, F., Li, K., & Yan, J. (2020). Understanding user trust in artificial intelligence-based educational systems: Evidence from China. British Journal of Educational Technology, 51 (5), 1693–1710. https://doi.org/10.1111/bjet.12994

Renz, A., & Hilbig, R. (2020). Prerequisites for artificial intelligence in further education: Identification of drivers, barriers, and business models of educational technology companies. International Journal of Educational Technology in Higher Education, 17 , 1–21. https://doi.org/10.1186/s41239-020-00193-3

Roll, I., & Wylie, R. (2016). Evolution and revolution in artificial intelligence in education. International Journal of Artificial Intelligence in Education, 26 (2), 582–599. https://doi.org/10.1007/s40593-016-0110-3

Ruiz-Palmero, J., Colomo-Magaña, E., Ríos-Ariza, J. M., & Gómez-García, M. (2020). Big data in education: Perception of training advisors on its use in the educational system. Social Sciences, 9 (4), 53. https://doi.org/10.3390/socsci9040053

Russell, S., & Norvig, P. (2010). Artificial intelligence: A modern approach. Pearson Education.

Saa, A. A., Al-Emran, M., & Shaalan, K. (2019). Factors affecting students’ performance in higher education: A systematic review of predictive data mining techniques. Technology, Knowledge and Learning, 24 (4), 567–598. https://doi.org/10.1007/s10758-019-09408-7

Salomon, G. (1996). Studying novel learning environments as patterns of change. In S. Vosniadou, E. De Corte, R. Glaser & H. Mandl (Eds.), International Perspectives on the design of Technology Supported Learning. NJ: Lawrence Erlbaum Associates.

Swiecki, Z., Ruis, A. R., Gautam, D., Rus, V., & Williamson Shaffer, D. (2019). Understanding when students are active-in-thinking through modeling-in-context. British Journal of Educational Technology, 50 (5), 2346–2364. https://doi.org/10.1111/bjet.12869

Sánchez-Prieto, J. C., Cruz-Benito, J., Therón Sánchez, R., & García Peñalvo, F. J. (2020). Assessed by machines: Development of a TAM-based tool to measure ai-based assessment acceptance among students. International Journal of Interactive Multimedia and Artificial Intelligence, 6 (4), 80–86. https://doi.org/10.9781/ijimai.2020.11.009

Schwarz, B. B., Prusak, N., Swidan, O., Livny, A., Gal, K., & Segal, A. (2018). Orchestrating the emergence of conceptual learning: A case study in a geometry class. International Journal of Computer-Supported Collaborative Learning, 13 (2), 189–211. https://doi.org/10.1007/s11412-018-9276-z

Seufert, S., Guggemos, J., & Sailer, M. (2020). Technology-related knowledge, skills, and attitudes of pre-and in-service teachers: The current situation and emerging trends. Computers in Human Behavior, 115 , 106552. https://doi.org/10.1016/j.chb.2020.106552

Şimşek, H., & Yıldırım, A. (2011). Qualitative research methods in social sciences . Seçkin Publishing.

Su, Y. N., Hsu, C. C., Chen, H. C., Huang, K. K., & Huang, Y. M. (2014). Developing a sensor-based learning concentration detection system. Engineering Computations., 31 (2), 216–230. https://doi.org/10.1108/EC-01-2013-0010

Tepperman, J., Lee, S., Narayanan, S., & Alwan, A. (2010). A generative student model for scoring word reading skills. IEEE Transactions on Audio, Speech, and Language Processing, 19 (2), 348–360. https://doi.org/10.1109/TASL.2010.2047812

Tondeur, J., Scherer, R., Siddiq, F., & Baran, E. (2020). Enhancing pre-service teachers’ technological pedagogical content knowledge (TPACK): A mixed-method study. Educational Technology Research and Development, 68 (1), 319–343. https://doi.org/10.1007/s11423-019-09692-1

Valtonen, T., Hoang, N., Sointu, E., Näykki, P., Virtanen, A., Pöysä-Tarhonen, J., Häkkinen, P., Järvelä, S., Mäkitalo, K., & Kukkonen, J. (2021). How pre-service teachers perceive their 21st-century skills and dispositions: A longitudinal perspective. Computers in Human Behavior, 116 , 106643. https://doi.org/10.1016/j.chb.2020.106643

Vij, S., Tayal, D., & Jain, A. (2020). A machine learning approach for automated evaluation of short answers using text similarity based on WordNet graphs. Wireless Personal Communications, 111 (2), 1271–1282. https://doi.org/10.1007/s11277-019-06913-x

Wang, S., Hu, B. Y., & LoCasale-Crouch, J. (2020). Modeling the nonlinear relationship between structure and process quality features in Chinese preschool classrooms. Children and Youth Services Review, 109 , 104677. https://doi.org/10.1016/j.childyouth.2019.104677

Williamson, M. (2015). “I wasn’t reinventing the wheel, just operating the tools”: The evolution of the writing processes of online first-year composition students (unpublished doctoral dissertation). Arizona State University.

Yang, C. H. (2012). Fuzzy fusion for attending and responding assessment system of affective teaching goals in distance learning. Expert Systems with Applications, 39 (3), 2501–2508. https://doi.org/10.1016/j.eswa.2011.08.102

*Yoo, J. E., & Rho, M. (2020). Exploration of predictors for Korean teacher job satisfaction via a machine learning technique, Group Mnet. Frontiers in Psychology, 11, 441. https://doi.org/10.3389/fpsyg.2020.00441

*Yuan, S., He, T., Huang, H., Hou, R., & Wang, M. (2020). Automated Chinese essay scoring based on deep learning. CMC-Computers Materials & Continua , 65 (1), 817–833. https://doi.org/10.32604/cmc.2020.010471

Zawacki-Richter, O., Marín, V. I., Bond, M., & Gouverneur, F. (2019). Systematic review of research on artificial intelligence applications in higher education–where are the educators? International Journal of Educational Technology in Higher Education, 16 (1), 39. https://doi.org/10.1186/s41239-019-0171-0

Open Access funding provided by University of Oulu including Oulu University Hospital.

Author information

Authors and affiliations.

Learning and Learning Processes Research Unit, Faculty of Education, University of Oulu, 90014, Oulu, Finland

Ismail Celik & Hanni Muukkonen

Learning and Educational Technology Research Unit, Faculty of Education, University of Oulu, 90014, Oulu, Finland

Muhterem Dindar & Sanna Järvelä

Corresponding author

Correspondence to Ismail Celik .

Ethics declarations

Human and animal rights.

There were no human participants and/or animals.

Informed Consent

This study is a literature review; therefore, no informed consent was needed.

Conflict of Interest

There are no potential conflicts of interest.

Additional information

Publisher's note.

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article's Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article's Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/ .

About this article

Celik, I., Dindar, M., Muukkonen, H. et al. The Promises and Challenges of Artificial Intelligence for Teachers: a Systematic Review of Research. TechTrends 66 , 616–630 (2022). https://doi.org/10.1007/s11528-022-00715-y

Accepted : 07 March 2022

Published : 25 March 2022

Issue Date : July 2022

DOI : https://doi.org/10.1007/s11528-022-00715-y

- Artificial intelligence in education

- Systematic review

- Teacher professional development

- Technology integration

Classroom Q&A

With Larry Ferlazzo

In this EdWeek blog, an experiment in knowledge-gathering, Ferlazzo will address readers’ questions on classroom management, ELL instruction, lesson planning, and other issues facing teachers. Send your questions to [email protected]. Read more from this blog.

Research Studies That Teachers Can Get Behind

Today’s post is the latest in a series on research findings that could be useful to teachers.

Reading Motivation

Julia B. Lindsey is a foundational literacy expert and the author of the Scholastic title, Reading Above the Fray: Reliable, Research-based Routines for Developing Decoding Skills :

Lately, I’ve noticed that many conversations about children’s foundational reading skills are disconnected from conversations about children’s motivation to read. When foundational skills and motivation are mentioned together, there seem to be two very different positions: either there’s a concern that motivation will get in the way of teaching skills, or there’s a concern that focusing on skills will harm children’s motivation.

The simple fact is that these shouldn’t be either-or conversations, and many of us worry about both foundational skills and long-term reading motivation. So, what should educators focus on the most in the early years? Just like many areas of reading instruction, we can look to the research to understand more about the relationship between foundational skills and reading motivation.

A recent meta-analysis of motivation and reading achievement found that, on average across more than 132 studies, early reading was a stronger predictor of later motivation than early motivation was of later reading (Toste et al., 2020). In other words, children’s skill in reading is likely to help drive their motivation over time, but their motivation may not drive their growth in skill to the same degree. Highly motivated young readers need knowledge and skills, not only motivation, to drive their continued growth in reading.

This idea has recently been confirmed and extended. Just last year, researchers published a study investigating the literacy skills over time of several thousand twins (van Bergen et al., 2022). Among other findings, the researchers found that early literacy skills impacted later literacy enjoyment, but early enjoyment did not impact later skill. Strong, early skills are likely to lead to motivation and enjoyment over time. But motivation without support to develop children’s skills is unlikely to lead to long-term skill or enjoyment.

How can we navigate conversations about foundational skills and reading motivation? We can acknowledge these critical research findings that tell us supporting young readers in acquiring excellent skills is likely a powerful way to support their long-term motivation. Though we can certainly continue to address children’s motivation in other research-based ways throughout their reading lives, it is critical to know: Skills are not a motivation killer; they are a motivation driver!

Teaching ELLs

Irina McGrath, Ph.D., is an assistant principal at Newcomer Academy in the Jefferson County district in Kentucky and the president of KYTESOL. She is also an adjunct professor at the University of Louisville, Indiana University Southeast, and Bellarmine University. She is a co-creator of the ELL2.0 site that offers free resources for teachers of English learners:

The topic of retaining new learning in a second language deserves greater attention than it has received so far. Retention refers to one’s ability to remember learning over time and recall it when necessary, which can be difficult. Humans tend to forget information easily.

German psychologist Hermann Ebbinghaus discovered in the 1880s that information is quickly forgotten without any reinforcement or connections to prior knowledge. In just one hour, people can forget about 56 percent of the information, approximately 66 percent after one day, and as much as 75 percent after six days. Various factors influence these percentages, including one’s prior knowledge of the topic, the difficulty of the material, the initial degree of learning, and the learning strategies used.
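To make those figures concrete, here is a minimal Python sketch that turns the forgetting percentages quoted above into the share of information still retained. The function name and data layout are illustrative assumptions, and the numbers are only the ones quoted in this post, not values re-derived from Ebbinghaus’s data.

```python
# Forgetting percentages exactly as quoted in the paragraph above.
FORGOTTEN_PERCENT = {
    "1 hour": 56,
    "1 day": 66,
    "6 days": 75,
}


def retained_percent(forgotten: int) -> int:
    """Return the share of information still remembered, in percent."""
    return 100 - forgotten


for delay, forgotten in FORGOTTEN_PERCENT.items():
    print(f"After {delay}: about {retained_percent(forgotten)}% retained")
```

Seen this way, the point is that less than half of new information may still be available to a student an hour after a lesson unless it is reinforced.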

Learning in a second language can be challenging: students have to comprehend new concepts and ideas, and they have to do so in a non-native language, which makes retaining information even harder.

Fortunately, researchers are making progress in identifying ways to support English learners in retaining and recalling information in their second or third language. An increasing number of studies are now focusing on specific strategies to enhance retention among ELs. One such effective strategy is the use of mnemonic devices, which have been found to improve ELs’ vocabulary retention by an average of 9 percent (Hill, 2022).

A 2021 study by Karatas, Özemir, and Ullman demonstrated that when students studied vocabulary words in their second language and utilized memory-enhancement techniques like spacing and retrieval practice, they experienced significant improvements in both learning and retention of the new vocabulary words.

Spacing allows learners to study across multiple sessions instead of cramming information into a single session, facilitating continuous built-in review and reducing the risk of learning burnout. Retrieval practice, for its part, involves active recall of information rather than passive engagement, such as quietly reviewing or rereading learned materials.
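For teachers who plan lessons in a spreadsheet or script, a spaced schedule can be sketched in a few lines of Python. This is only an illustration: the function name, the start date, and the gaps of 1, 3, 7, and 14 days are assumptions chosen for the example, not a schedule taken from the study cited above.

```python
from datetime import date, timedelta


def spaced_review_dates(first_session: date, gaps_in_days=(1, 3, 7, 14)):
    """Return session dates, with each gap counted from the previous session.

    The default gaps are an illustrative assumption, not a researched schedule.
    """
    dates = [first_session]
    for gap in gaps_in_days:
        dates.append(dates[-1] + timedelta(days=gap))
    return dates


# Hypothetical start date for a new vocabulary set.
schedule = spaced_review_dates(date(2024, 9, 2))
for session_number, day in enumerate(schedule, start=1):
    # Each session begins with retrieval: students try to recall the words
    # before looking at their notes.
    print(f"Session {session_number}: {day.isoformat()}")
```

Each session in such a plan would open with retrieval practice, with students recalling the words from memory before checking them, so that spacing and retrieval work together.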

Recognizing the power of research-based strategies that promote retention of new learning in English learners is key to ensuring that valuable instructional time is not wasted and information does not fade away into the depths of forgetfulness.

Supporting Student Home Languages

Stephanie Dewing, Ph.D., is an associate professor of clinical education and the chair of the bilingual authorization program at the University of Southern California’s Rossier School of Education. A former classroom teacher in Ecuador and the United States, Stephanie specializes in language and literacy development and dual-language instruction, with an emphasis on newcomers:

Did you know that multilingualism can delay the onset of Alzheimer’s and dementia by up to five years?! This is just one of the many benefits associated with having a bilingual or multilingual brain. As a language teacher, I often get asked by other educators and multilingual families if it is best to use English only at home and in school so students do not get “confused” between the different languages. Thanks to decades of research, we can answer this question with more confidence: no. It’s best to maintain the home language(s)! The brain is an amazing organ that, over time, will figure out which language is most appropriate to use with which person and in which context. ¿Increíble, no?

Several studies have found that the development of the first language, or L1, is beneficial to the development of English and other subsequent languages. For example, Umansky and Reardon (2014) did a longitudinal study over 12 years that looked at the reclassification patterns of Latino English learners. Reclassification is when students who are identified as English learners demonstrate proficiency in English based on state exams and other bodies of evidence. What they found was that those who were enrolled in dual-language programs tended to reclassify a bit more slowly at the elementary level, but by the end of high school, they had a higher likelihood of becoming proficient in English and reclassifying (Umansky & Reardon, 2014).

The benefits of focusing on L1 acquisition in the early grades were undeniable. Riches and Genesee (2006) also argued for the importance of early literacy experiences and found through their research that those who develop literacy in their first language(s) develop skills that transfer to literacy development in English (or other additional languages). In fact, English learners who had developed literacy in their L1 were found to progress more quickly and successfully in English literacy development than those who had no prior L1 literacy.

One study even found that L1 reading ability was the best predictor of L2, or second language, reading achievement in later grades (Riches & Genesee, 2006). Having bilingual or multilingual repertoires from which to draw is an asset, which means that whenever the opportunity presents itself, we should encourage families to maintain their home languages and engage in literacy-based activities in those languages.

Knowing which resources are available, such as print resources in different languages at local libraries, multilingual apps or websites, audiobooks in other languages, etc., can empower educators and families to reach this goal.

Finally, it is important to note the role that time plays in these studies. Language development takes time, and our patience and support are essential. When we give this incredible process the time, attention, and recognition it deserves (and start early!), we are not only setting our multilingual learners up for greater success, but we are helping to shape a more global, multilingual world, which is something to celebrate!

Thanks to Julia, Irina, and Stephanie for contributing their thoughts!

Today’s post answered this question:

What are one to three research findings that you think teachers should know about but that you also think that many of them do not?

Part One in this series featured responses from Ron Berger, Wendi Pillars, and Marina Rodriguez.

In Part Two, Erica Silva, Min Oh, and Marilyn Chu contributed their answers.

Consider contributing a question to be answered in a future post. You can send one to me at [email protected]. When you send it in, let me know if I can use your real name if it’s selected or if you’d prefer remaining anonymous and have a pseudonym in mind.

You can also contact me on Twitter at @Larryferlazzo.

Just a reminder: you can subscribe and receive updates from this blog via email. And if you missed any of the highlights from the first 12 years of this blog, you can see a categorized list here.

The opinions expressed in Classroom Q&A With Larry Ferlazzo are strictly those of the author(s) and do not reflect the opinions or endorsement of Editorial Projects in Education, or any of its publications.

Teacher and Teaching Effects on Students’ Attitudes and Behaviors

David Blazar

Harvard Graduate School of Education

Matthew A. Kraft

Brown University

Research has focused predominantly on how teachers affect students’ achievement on tests despite evidence that a broad range of attitudes and behaviors are equally important to their long-term success. We find that upper-elementary teachers have large effects on self-reported measures of students’ self-efficacy in math, and happiness and behavior in class. Students’ attitudes and behaviors are predicted by teaching practices most proximal to these measures, including teachers’ emotional support and classroom organization. However, teachers who are effective at improving test scores often are not equally effective at improving students’ attitudes and behaviors. These findings lend empirical evidence to well-established theory on the multidimensional nature of teaching and the need to identify strategies for improving the full range of teachers’ skills.

1. Introduction

Empirical research on the education production function traditionally has examined how teachers and their background characteristics contribute to students’ performance on standardized tests (Hanushek & Rivkin, 2010; Todd & Wolpin, 2003). However, a substantial body of evidence indicates that student learning is multidimensional, with many factors beyond their core academic knowledge as important contributors to both short- and long-term success.¹ For example, psychologists find that emotion and personality influence the quality of one’s thinking (Baron, 1982) and how much a child learns in school (Duckworth, Quinn, & Tsukayama, 2012). Longitudinal studies document the strong predictive power of measures of childhood self-control, emotional stability, persistence, and motivation on health and labor market outcomes in adulthood (Borghans, Duckworth, Heckman, & Ter Weel, 2008; Chetty et al., 2011; Moffitt et al., 2011). In fact, these sorts of attitudes and behaviors are stronger predictors of some long-term outcomes than test scores (Chetty et al., 2011).

Consistent with these findings, decades’ worth of theory also have characterized teaching as multidimensional. High-quality teachers are thought and expected not only to raise test scores but also to provide emotionally supportive environments that contribute to students’ social and emotional development, manage classroom behaviors, deliver accurate content, and support critical thinking (Cohen, 2011; Lampert, 2001; Pianta & Hamre, 2009). In recent years, two research traditions have emerged to test this theory using empirical evidence. The first tradition has focused on observations of classrooms as a means of identifying unique domains of teaching practice (Blazar, Braslow, Charalambous, & Hill, 2015; Hamre et al., 2013). Several of these domains, including teachers’ interactions with students, classroom organization, and emphasis on critical thinking within specific content areas, aim to support students’ development in areas beyond their core academic skill. The second research tradition has focused on estimating teachers’ contribution to student outcomes, often referred to as “teacher effects” (Chetty, Friedman, & Rockoff, 2014; Hanushek & Rivkin, 2010). These studies have found that, as with test scores, teachers vary considerably in their ability to impact students’ social and emotional development and a variety of observed school behaviors (Backes & Hansen, 2015; Gershenson, 2016; Jackson, 2012; Jennings & DiPrete, 2010; Koedel, 2008; Kraft & Grace, 2016; Ladd & Sorensen, 2015; Ruzek et al., 2015). Further, weak to moderate correlations between teacher effects on different student outcomes suggest that test scores alone cannot identify teachers’ overall skill in the classroom.

Our study is among the first to integrate these two research traditions, which largely have developed in isolation. Working at the intersection of these traditions, we aim both to minimize threats to internal validity and to open up the “black box” of teacher effects by examining whether certain dimensions of teaching practice predict students’ attitudes and behaviors. We refer to these relationships between teaching practice and student outcomes as “teaching effects.” Specifically, we ask three research questions:

- To what extent do teachers impact students’ attitudes and behaviors in class?

- To what extent do specific teaching practices impact students’ attitudes and behaviors in class?

- Are teachers who are effective at raising test-score outcomes equally effective at developing positive attitudes and behaviors in class?