
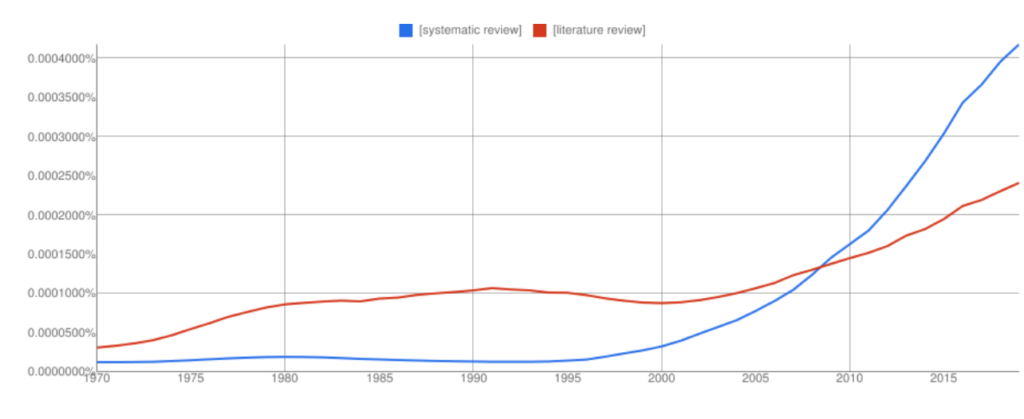

| | Narrative Literature Review | Systematic Literature Review |
|---|---|---|
| | Broad | Narrow |
| | Not specified, potentially biased | Comprehensive sources and search approach explicitly specified |
| | Not usually specified, potentially biased | Uniformly applied preselected inclusion/exclusion criteria |
| | Variable | Rigorous critical evaluation |
| | Often qualitative, quantitative through meta-analysis* | Often qualitative, quantitative through meta-analysis* |

\* Meta-analysis is a method of statistically combining the results of multiple studies in order to arrive at a quantitative conclusion about a body of literature and is most often used to assess the clinical effectiveness of healthcare interventions ("Meta-analysis", 2008).

Steps for a Systematic Review

- Develop an answerable question

- Check for recent systematic reviews

- Agree on specific inclusion and exclusion criteria

- Develop a system to organize data and notes

- Devise reproducible search methods

- Launch and track exhaustive search

- Organize search results

- Reproduce search results

- Abstract data into a standardized format

- Synthesize data using statistical methods (meta-analysis)

- Write about what you found
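The statistical synthesis step above can be illustrated with a small sketch. It assumes a fixed-effect, inverse-variance model, which is only one of several ways to pool results, and the effect sizes and standard errors are hypothetical values invented for illustration, not data from any review.

```python
import math


def fixed_effect_pool(effects, std_errors):
    """Pool study effects with inverse-variance weights (fixed-effect model).

    Returns the pooled effect and its standard error.
    """
    if not effects or len(effects) != len(std_errors):
        raise ValueError("need one standard error per effect, and at least one study")
    # Each study is weighted by the inverse of its variance,
    # so more precise studies contribute more to the pooled estimate.
    weights = [1.0 / (se ** 2) for se in std_errors]
    total_weight = sum(weights)
    pooled = sum(w * e for w, e in zip(weights, effects)) / total_weight
    pooled_se = math.sqrt(1.0 / total_weight)
    return pooled, pooled_se


# Hypothetical effect sizes and standard errors from three studies.
effects = [0.30, 0.10, 0.25]
std_errors = [0.10, 0.20, 0.10]

pooled, pooled_se = fixed_effect_pool(effects, std_errors)
# Approximate 95% confidence interval under a normal assumption.
ci_low = pooled - 1.96 * pooled_se
ci_high = pooled + 1.96 * pooled_se
print(f"pooled effect = {pooled:.3f}, 95% CI = ({ci_low:.3f}, {ci_high:.3f})")
```

A real review would usually also check heterogeneity between studies before settling on a fixed-effect model; that step is left out here to keep the sketch short.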

To learn more, see this presentation.

Timeline for a Cochrane Review

Table reproduced from Cochrane systematic reviews handbook.

Recommended Guidelines

The Cochrane Handbook for Systematic Reviews of Interventions is the official document that describes in detail the process of preparing and maintaining Cochrane systematic reviews on the effects of healthcare interventions.

PRISMA (Preferred Reporting Items for Systematic Reviews and Meta-Analyses) is an evidence-based minimum set of items for reporting in systematic reviews and meta-analyses. PRISMA focuses on the reporting of reviews evaluating randomized trials, but can also be used as a basis for reporting systematic reviews of other types of research, particularly evaluations of interventions.

The JBI Reviewers' Manual is designed to provide authors with a comprehensive guide to conducting JBI systematic reviews. It describes in detail the process of planning, undertaking and writing up a systematic review of qualitative, quantitative, economic, text and opinion based evidence. It also outlines JBI support mechanisms for those doing review work and opportunities for publication and training. The JBI Reviewers' Manual should be used in conjunction with the JBI SUMARI User Guide.

These standards are for systematic reviews of comparative effectiveness research of therapeutic medical or surgical interventions.

Green, S., & Higgins, J. P. T. (editors). (2011). Chapter 2: Preparing a Cochrane review. In J. P. T. Higgins, & S. Green (Eds.). Cochrane Handbook for Systematic Reviews of Interventions (Version 5.1.0). Available from http://handbook.cochrane.org

Meta-Analysis. (2008). In W. A. Darity, Jr. (Ed.), International Encyclopedia of the Social Sciences (2nd ed., Vol. 5, pp. 104-105). Detroit: Macmillan Reference USA.


- Last Updated: Aug 16, 2024 4:29 PM

- URL: https://libguides.uta.edu/c.php?g=1388834


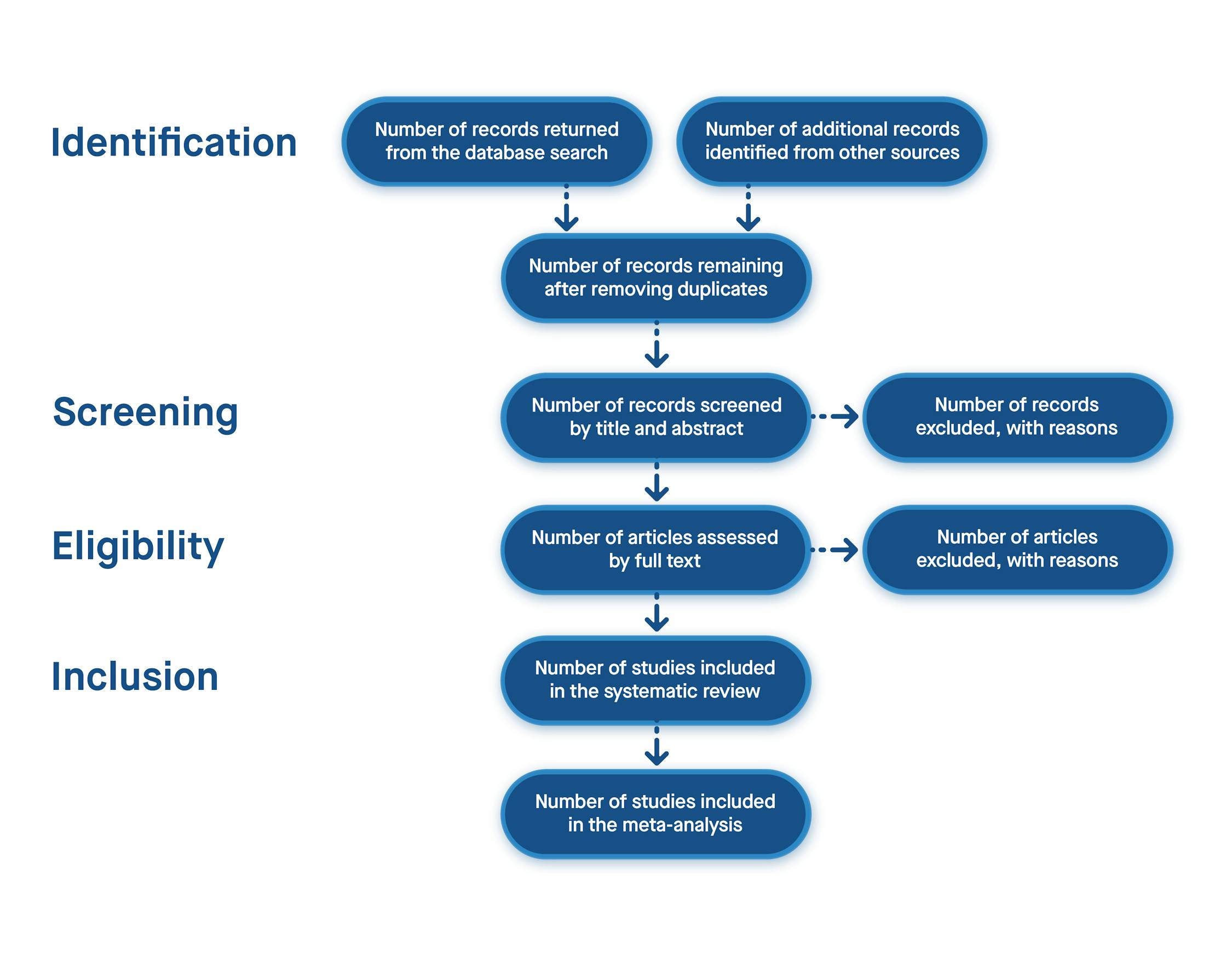

What is the difference between a systematic review and a systematic literature review?

By Carol Hollier on 07-Jan-2020 14:23:00

For those not immersed in systematic reviews, understanding the difference between a systematic review and a systematic literature review can be confusing. It helps to realise that a “systematic review” is a clearly defined thing, but ambiguity creeps in around the phrase “systematic literature review” because people can and do use it in a variety of ways.

A systematic review is a research study of research studies. To qualify as a systematic review, a review needs to adhere to standards of transparency and reproducibility. It will use explicit methods to identify, select, appraise, and synthesise empirical results from different but similar studies. The study will be done in stages:

- In stage one, the question, which must be answerable, is framed

- Stage two is a comprehensive literature search to identify relevant studies

- In stage three the identified literature’s quality is scrutinised and decisions made on whether or not to include each article in the review

- In stage four the evidence is summarised and, if the review includes a meta-analysis, the data extracted; in the final stage, findings are interpreted. [1]

Some reviews also state what degree of confidence can be placed on that answer, using the GRADE scale. By going through these steps, a systematic review provides a broad evidence base on which to make decisions about medical interventions, regulatory policy, safety, or whatever question is analysed. By documenting each step explicitly, the review is not only reproducible, but can be updated as more evidence on the question is generated.

Sometimes when people talk about a “systematic literature review”, they are using the phrase interchangeably with “systematic review”. However, people can also use the phrase systematic literature review to refer to a literature review that is done in a fairly systematic way, but without the full rigor of a systematic review.

For instance, for a systematic review, reviewers would strive to locate relevant unpublished studies in grey literature and possibly by contacting researchers directly. Doing this is important for combatting publication bias, which is the tendency for studies with positive results to be published at a higher rate than studies with null results. It is easy to understand how this well-documented tendency can skew a review’s findings, but someone conducting a systematic literature review in the loose sense of the phrase might, for lack of resource or capacity, forgo that step.

Another difference might be in who is doing the research for the review. A systematic review is generally conducted by a team including an information professional for searches and a statistician for meta-analysis, along with subject experts. Team members independently evaluate the studies being considered for inclusion in the review and compare results, adjudicating any differences of opinion. In contrast, a systematic literature review might be conducted by one person.

Overall, while a systematic review must comply with set standards, you would expect any review called a systematic literature review to strive to be quite comprehensive.
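The independent evaluation described above, where team members screen the same studies separately and then compare results, can be sketched as follows. The study IDs and decisions are hypothetical, and the function names are illustrative rather than taken from any review tool.

```python
def find_disagreements(reviewer_a, reviewer_b):
    """Return the study IDs on which two reviewers' include/exclude decisions differ."""
    if reviewer_a.keys() != reviewer_b.keys():
        raise ValueError("both reviewers must screen the same set of studies")
    return sorted(study for study in reviewer_a if reviewer_a[study] != reviewer_b[study])


def agreement_rate(reviewer_a, reviewer_b):
    """Fraction of studies on which the two reviewers made the same decision."""
    disagreements = find_disagreements(reviewer_a, reviewer_b)
    return 1.0 - len(disagreements) / len(reviewer_a)


# Hypothetical screening decisions: True = include, False = exclude.
decisions_a = {"study-01": True, "study-02": False, "study-03": True, "study-04": False}
decisions_b = {"study-01": True, "study-02": True, "study-03": True, "study-04": False}

to_adjudicate = find_disagreements(decisions_a, decisions_b)
print("needs adjudication:", to_adjudicate)
print("raw agreement:", agreement_rate(decisions_a, decisions_b))
```

Raw agreement is used here only because it is easy to read; a team might prefer a chance-corrected statistic when reporting how consistent the screening was.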
A systematic literature review would contrast with what is sometimes called a narrative or journalistic literature review, where the reviewer’s search strategy is not made explicit, and evidence may be cherry-picked to support an argument.

FSTA is a key tool for systematic reviews and systematic literature reviews in the sciences of food and health.

The patents indexed help find results of research not otherwise publicly available because it has been done for commercial purposes.

The FSTA thesaurus will surface results that would be missed with keyword searching alone. Since the thesaurus is designed for the sciences of food and health, it is the most comprehensive for the field.

All indexing and abstracting in FSTA is in English, so you can do your searching in English yet pick up non-English language results, and get those results translated if they meet the criteria for inclusion in a systematic review.

FSTA includes grey literature (conference proceedings) which can be difficult to find, but is important to include in comprehensive searches.

FSTA content has a deep archive. It goes back to 1969 for farm to fork research, and back to the late 1990s for food-related human nutrition literature. Systematic reviews (and any literature review) should include not just the latest research but all relevant research on a question.

You can also use FSTA to find literature reviews. FSTA allows you to easily search for review articles (both narrative and systematic reviews) by using the subject heading or thesaurus term "REVIEWS" and an appropriate free-text keyword.

On the Web of Science or EBSCO platform, an FSTA search for reviews about cassava would look like this: DE "REVIEWS" AND cassava.

On the Ovid platform using the multi-field search option, the search would look like this: reviews.sh. AND cassava.af.
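The two search syntaxes quoted above follow a regular pattern, which the sketch below reproduces as plain string building. The helper names `ebsco_query` and `ovid_query` are hypothetical and belong to no platform API. The sketch only mirrors the string shapes shown in the text and does not cover other platform syntax such as phrase quoting or field limits.

```python
def ebsco_query(descriptor, *keywords):
    """Build a query in the shape: DE "DESCRIPTOR" AND keyword ...

    Mirrors the Web of Science / EBSCO example quoted in the text.
    """
    parts = [f'DE "{descriptor.upper()}"'] + list(keywords)
    return " AND ".join(parts)


def ovid_query(descriptor, *keywords):
    """Build a query in the shape: descriptor.sh. AND keyword.af. ...

    Mirrors the Ovid multi-field example quoted in the text.
    """
    parts = [f"{descriptor.lower()}.sh."] + [f"{keyword}.af." for keyword in keywords]
    return " AND ".join(parts)


print(ebsco_query("reviews", "cassava"))
print(ovid_query("reviews", "cassava"))
```

Keeping the search strings in code like this is one way to record them exactly, which helps when a search has to be documented and rerun later.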
In 2011 FSTA introduced the descriptor META-ANALYSIS, making it easy to search specifically for systematic reviews that include a meta-analysis published from that year onwards.

On the EBSCO or Web of Science platform, an FSTA search for systematic reviews with meta-analyses about staphylococcus aureus would look like this: DE "META-ANALYSIS" AND staphylococcus aureus.

On the Ovid platform using the multi-field search option, the search would look like this: meta-analysis.sh. AND staphylococcus aureus.af.

Systematic reviews with meta-analyses published before 2011 are included in the REVIEWS controlled vocabulary term in the thesaurus. An easy way to locate pre-2011 systematic reviews with meta-analyses is to search the subject heading or thesaurus term "REVIEWS" AND meta-analysis as a free-text keyword AND another appropriate free-text keyword.

On the Web of Science or EBSCO platform, the FSTA search would look like this: DE "REVIEWS" AND meta-analysis AND carbohydrate*

On the Ovid platform using the multi-field search option, the search would look like this: reviews.sh. AND meta-analysis.af. AND carbohydrate*.af.

Related resources:

- Literature Searching Best Practise Guide

- Predatory publishing: Investigating researchers’ knowledge & attitudes

- The IFIS Expert Guide to Journal Publishing


Systematic Reviews: Types of reviews

Systematic literature reviews

Using a systematic approach in conducting a literature review

A literature review may be undertaken in a systematic way using a rigorous and structured search strategy in order to be comprehensive, without necessarily attempting to include all available research on a particular topic, as in a systematic review.

Why be systematic?

This approach can:

- Provide a robust overview of the available literature on your topic

- Ensure relevant literature is identified and key publications are not overlooked

- Reduce irrelevant search results through search planning

- Help you to create a reproducible search strategy.

In addition, applying a systematic approach will allow you to work more efficiently.

Not every review is a systematic review. Be sure to select the review type that matches the purpose and scope of your project. All reviews should be methodical and done in a careful and deliberate manner with a defined protocol.

Questions to ask yourself:

- What is the purpose of this review?

- What is the research question?

- How long do I have to complete it?

- Am I doing it alone or part of a team?

- How much of the literature do I need to capture?

- Does my literature search have to be transparent and replicable?

- Are there standard methods that need to be followed?

Types of reviews

- Systematic review

- Rapid review

- Umbrella review

- Scoping review

A systematic review attempts to identify, appraise and synthesize all the empirical evidence that meets pre-specified eligibility criteria to answer a given research question. Researchers conducting systematic reviews use explicit methods aimed at minimizing bias in order to produce more reliable findings that can be used to inform decision making.

An essential step in the early development of a systematic review is the development of a review protocol. A protocol pre-defines the objectives and methods of the systematic review which allows transparency of the process. It must be done prior to conducting the systematic review as it is important in restricting the presence of reporting bias. The protocol is a completely separate document to the systematic review report.

Adapted from: JBI Manual for Evidence Synthesis

In summary, a systematic review:

- Addresses a specific question

- Uses specified methodology

- Assesses quality of the literature

- Requires a team and long term commitment

What is a rapid review?

The Cochrane Rapid Reviews Methods Group has proposed the following definition: “A form of knowledge synthesis that accelerates the process of conducting a traditional systematic review through streamlining or omitting specific methods to produce evidence for stakeholders in a resource-efficient manner.”

Rapid reviews are usually undertaken when decision makers have urgent and emerging needs which require evidence produced on a short time frame. Typically, to compensate for the short time frame of a rapid review, methodological rigour may be sacrificed. For example, the grey literature may not be sought and preference may be given to the more readily available research published and written in English.

A rapid review follows most of the principal steps of a systematic review, using systematic and transparent methods to identify, select, critically appraise and analyze data from relevant research. However, to provide timely evidence, some of the components of a systematic review process are either simplified or omitted. There are various approaches for simplifying the review components, such as by reducing the number of databases, assigning a single reviewer in each step while another reviewer verifies the results, excluding or limiting the use of grey literature, or by narrowing the scope of the review. In general, a rapid review takes about four months or less.

Adapted from: Health Evaluation and Applied Research Development (HEARD). (June 25th, 2018). Rapid reviews versus systematic reviews. https://www.heardproject.org/news/rapid-review-vs-systematic-review-what-are-the-differences/

Umbrella reviews are sometimes referred to as a "review of reviews". They are an attempt to identify and appraise, extract and summarise all the evidence from research syntheses related to a topic or question.

Umbrella reviews may:

- Include analyses of different interventions for the same problem or condition.

- Analyse the same intervention and condition, but different outcomes.

- Analyse the same intervention but different conditions, problems or populations.

Umbrella reviews offer the possibility to address a broad scope of issues related to the topic of interest.

In summary, an umbrella review:

- Is a systematic review of systematic reviews

- Synthesizes systematic reviews of the same topic

- Assesses scope and quality of individual systematic reviews

"Scoping reviews, a type of knowledge synthesis, follow a systematic approach to map evidence on a topic and identify main concepts, theories, sources, and knowledge gaps" (Tricco, et al., 2018).

"Scoping reviews conducted as precursors to systematic reviews may enable authors to identify the nature of a broad field of evidence so that ensuing reviews can be assured of locating adequate numbers of relevant studies for inclusion" (Munn, Z., Peters, M., Stern, C., Tufanaru, C., McArthur, A., & Aromataris, E., 2018).

A scoping review may be undertaken as a preliminary exercise prior to the conduct of a systematic review, or as a stand alone review.

A scoping review may be used:

- As a precursor to a systematic review.

- To identify the types of available evidence in a given field.

- To identify and analyse knowledge gaps.

- To clarify key concepts/ definitions in the literature.

- To examine how research is conducted on a certain topic or field.

- To identify key characteristics or factors related to a concept.

Adapted from: JBI Manual for Evidence Synthesis, chapter 11 Scoping reviews. https://doi.org/10.46658/JBIMES-20-01

Getting started: Cochrane: Scoping reviews: what they are and how you can do them

Reporting: The PRISMA extension for scoping reviews was published in 2018. The checklist contains 20 essential reporting items and 2 optional items to include when completing a scoping review. Scoping reviews serve to synthesize evidence and assess the scope of literature on a topic. Among other objectives, scoping reviews help determine whether a systematic review of the literature is warranted.

A traditional literature review or narrative review examines and evaluates the scholarly literature on a topic. Literature reviews often do not answer one specific question; rather, they usually bring together a summary of the literature in a qualitative manner.

A literature review may be undertaken in a systematic way in order to be comprehensive, without being a systematic review. It is important to recognise the differences between the two and determine which type of review is best suited to your needs, or whether one of the other reviews detailed here is more applicable.

Narrative reviews:

- provide a (generally qualitative) summary of the relevant literature, as determined by the author.

- do not necessarily provide an analysis of the literature or its quality.

- usually do not include a description of the methodology of the search process.

- refer to key journal literature without going into the grey literature.

- don't always answer a specific research question.

- are not protocol driven.

Barnard, M. (2015). Research essentials: How to undertake a literature review. Nursing Children and Young People, 27(10), 12-12. doi:10.7748/ncyp.27.10.12.s15

Bettany-Saltikov, J. (2010). Learning how to undertake a systematic review: Part 1. Nursing Standard, 24(40), 47-55.

Grant, M.J., & Booth, A. (2009). A typology of reviews: An analysis of 14 review types and associated methodologies. Health Information and Libraries Journal, 26(2), 91-108. doi:10.1111/j.1471-1842.2009.00848.x

Kowalczyk, N., & Truluck, C. (2013). Literature reviews and systematic reviews: What is the difference? Radiologic Technology, 85(2), 219-222.

Munn, Z., Peters, M., Stern, C., Tufanaru, C., McArthur, A., & Aromataris, E. (2018). Systematic review or scoping review? Guidance for authors when choosing between a systematic or scoping review approach. BMC Medical Research Methodology, 18(1), 1-7. doi:10.1186/s12874-018-0611-x

Munn, Z., Stern, C., Aromataris, E., Lockwood, C., & Jordan, Z. (2018). What kind of systematic review should I conduct? A proposed typology and guidance for systematic reviewers in the medical and health sciences. BMC Medical Research Methodology, 18(1), 5. https://doi.org/10.1186/s12874-017-0468-4

Pawson, R., Greenhalgh, T., Harvey, G., & Walshe, K. (2005). Realist review: A new method of systematic review designed for complex policy interventions. Journal of Health Services Research and Policy, 10(3), 21-34. https://doi.org/10.1258/1355819054308530

Robinson, P., & Lowe, J. (2015). Literature reviews vs systematic reviews. Australian and New Zealand Journal of Public Health, 39(2), 103-103. doi:10.1111/1753-6405.12393

Tricco, A., Lillie, E., Zarin, W., O'Brien, K., Colquhoun, H., Levac, D., . . . Straus, S. (2018). PRISMA extension for scoping reviews (PRISMA-ScR): Checklist and explanation. Annals of Internal Medicine, 169(7), 467-467.


- Last Updated: May 15, 2024 11:15 AM

- URL: https://ecu.au.libguides.com/systematic-reviews

About Systematic Reviews

Understanding the Differences Between a Systematic Review vs Literature Review

Let’s look at these differences in further detail.

Goal of the Review

The objective of a literature review is to provide context or background information about a topic of interest. Hence the methodology is less comprehensive and not exhaustive. The aim is to provide an overview of a subject as an introduction to a paper or report. This overview is obtained firstly through evaluation of existing research, theories, and evidence, and secondly through individual critical evaluation and discussion of this content.

A systematic review attempts to answer specific clinical questions (for example, the effectiveness of a drug in treating an illness). Answering such questions comes with a responsibility to be comprehensive and accurate. Failure to do so could have life-threatening consequences. The need to be precise then calls for a systematic approach. The aim of a systematic review is to establish authoritative findings from an account of existing evidence using objective, thorough, reliable, and reproducible research approaches and frameworks.

Level of Planning Required

The methodology involved in a literature review is less complicated and requires a lower degree of planning. For a systematic review, the planning is extensive and requires defining robust pre-specified protocols. It first starts with formulating the research question and scope of the research.
The PICO approach (population, intervention, comparison, and outcomes) is used in designing the research question. Planning also involves establishing strict eligibility criteria for inclusion and exclusion of the primary resources to be included in the study. Every stage of the systematic review methodology is pre-specified to the last detail, even before starting the review process. It is recommended to register the protocol of your systematic review to avoid duplication. Journal publishers now look for registration in order to ensure the reviews meet predefined criteria for conducting a systematic review [1].

Search Strategy for Sourcing Primary Resources

Quality Assessment of the Collected Resources

A rigorous appraisal of collected resources for the quality and relevance of the data they provide is a crucial part of the systematic review methodology. A systematic review usually employs a dual independent review process, which involves two reviewers evaluating the collected resources based on pre-defined inclusion and exclusion criteria. The idea is to limit bias in selecting the primary studies. Such a strict review system is generally not a part of a literature review.

Presentation of Results

Most literature reviews present their findings in narrative or discussion form. These are textual summaries of the results used to critique or analyze a body of literature about a topic serving as an introduction. For this reason, literature reviews are sometimes also called narrative reviews.

A systematic review requires a higher level of rigor, transparency, and often peer-review. The results of a systematic review can be interpreted as numeric effect estimates using statistical methods or as a textual summary of all the evidence collected.
Meta-analysis is employed to provide the necessary statistical support to evidence outcomes. Meta-analyses are usually conducted to examine the evidence present on a condition and treatment. The aims of a meta-analysis are to determine whether an effect exists, whether the effect is positive or negative, and establish a conclusive estimate of the effect [2].

Using statistical methods in generating the review results increases confidence in the review. Results of a systematic review are then used by clinicians to prescribe treatment or for pharmacovigilance purposes. The results of the review can also be presented as a qualitative assessment when the end goal is issuing recommendations or guidelines.

Risk of Bias

Literature reviews are mostly used by authors to provide background information with the intended purpose of introducing their own research later. Since the search for included primary resources is also less exhaustive, it is more prone to bias.

One of the main objectives for conducting a systematic review is to reduce bias in the evidence outcome. Extensive planning, strict eligibility criteria for inclusion and exclusion, and a statistical approach for computing the result reduce the risk of bias.

Intervention studies consider risk of bias as the “likelihood of inaccuracy in the estimate of causal effect in that study.” In systematic reviews, assessing the risk of bias is critical in providing accurate assessments of overall intervention effect [3].

With numerous review methods available for analyzing, synthesizing, and presenting existing scientific evidence, it is important for researchers to understand the differences between the review methods. Choosing the right method for a review is crucial in achieving the objectives of the research.

[1] “Systematic Review Protocols and Protocol Registries | NIH Library,” www.nihlibrary.nih.gov. https://www.nihlibrary.nih.gov/services/systematic-review-service/systematic-review-protocols-and-protocol-registries

[2] A. B. Haidich, “Meta-analysis in medical research,” Hippokratia, vol. 14, no. Suppl 1, pp. 29–37, Dec. 2010, [Online]. Available: https://www.ncbi.nlm.nih.gov/pmc/articles/PMC3049418/


A systematic literature review of modalities, trends, and limitations in emotion recognition, affective computing, and sentiment analysis

3.2.2. Unimodal Speech Data Approaches

- Several articles mention the use of transfer learning for speech emotion recognition. This technique involves training models on one dataset and applying them to another. This can improve the efficiency of emotion recognition across different datasets.

- Some articles discuss multitask learning models, which are designed to simultaneously learn multiple related tasks. In the context of speech emotion recognition, this approach may help capture commonalities and differences across different datasets or emotions.

- Data augmentation techniques, mentioned in multiple articles, generate additional training data from existing data, which can improve model performance and generalization.

- Attention mechanisms are a common trend for improving emotion recognition. Attention models allow the model to focus on specific features or segments of the input data that are most relevant for recognizing emotions, such as in multi-level attention-based approaches.

- Many articles discuss the use of deep learning models, such as convolutional neural networks (CNNs), recurrent neural networks (RNNs), and some variants like “Two-Stage Fuzzy Fusion Based-Convolution Neural Network”, “Deep Convolutional LSTM”, and “Attention-Oriented Parallel CNN Encoders”.

- While deep learning is prevalent, some articles explore novel feature engineering methods, such as modulation spectral features and wavelet packet information gain entropy, to enhance emotion recognition.

- From the list of articles on unimodal emotion recognition through speech, 7.14% address the challenge of recognizing emotions across different datasets or corpora. This is an important trend for making emotion recognition models more versatile.

- A few articles focus on making emotion recognition models more interpretable and explainable, which is crucial for real-world applications and understanding how the model makes its predictions.

- Ensemble methods, which combine multiple models to make predictions, are mentioned in several articles as a way to improve the performance of emotion recognition systems.

- Some articles discuss emotion recognition in specific contexts, such as call/contact centers, school violence detection, depression detection, analysis of podcast recordings, noisy environment analysis, in-the-wild sentiment analysis, and speech emotion segmentation of vowel-like and non-vowel-like regions. This indicates a trend toward applying emotion recognition in diverse applications.
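Data augmentation, one of the trends listed above, can be illustrated with a minimal sketch. It assumes two generic transformations, additive Gaussian noise and a circular time shift, applied to a signal stored as a plain list of samples. These are common illustrative choices and not necessarily the methods used in the reviewed articles, and all function names and parameter values are hypothetical.

```python
import random


def add_noise(signal, noise_std, rng):
    """Return a copy of the signal with zero-mean Gaussian noise added to each sample."""
    return [sample + rng.gauss(0.0, noise_std) for sample in signal]


def time_shift(signal, shift):
    """Circularly shift the signal; a positive shift moves samples to the right."""
    if not signal:
        return []
    k = shift % len(signal)
    return signal[-k:] + signal[:-k] if k else list(signal)


def augment(signal, n_copies, noise_std=0.01, max_shift=3, seed=0):
    """Generate n_copies perturbed variants of one signal (shift, then noise)."""
    rng = random.Random(seed)  # seeded so the augmentation is reproducible
    variants = []
    for _ in range(n_copies):
        shifted = time_shift(signal, rng.randint(-max_shift, max_shift))
        variants.append(add_noise(shifted, noise_std, rng))
    return variants


# Hypothetical toy "waveform" standing in for a speech segment.
waveform = [0.0, 0.5, 1.0, 0.5, 0.0, -0.5, -1.0, -0.5]
augmented = augment(waveform, n_copies=3)
print(len(augmented), "augmented copies of length", len(augmented[0]))
```

Each variant keeps the label of the original recording, so the training set grows without any new annotation.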

3.2.3. Unimodal Text Data Approaches

3.2.4. Unimodal Physiological Data Approaches

- Attention and self-attention mechanisms: These suggest that researchers are paying attention to the relevance of different parts of EEG signals for emotion recognition.

- Generative adversarial networks (GANs): Used for generating synthetic EEG data in order to improve the robustness and generalization of the models.

- Semi-supervised learning and domain transfer: Allow emotion recognition with limited datasets or datasets that are applicable to different domains, suggesting a concern for scalability and generalization of models.

- Interpretability and explainability: There is a growing interest in models that are interpretable and explainable, suggesting a concern for understanding how models make decisions and facilitating user trust in them.

- Utilization of transformers and capsule networks: Newer neural network architectures such as transformers and capsule networks are being explored for emotion recognition, indicating an interest in enhancing the modeling and representation capabilities of EEG signals.

- Although studies with a unimodal physiological approach using signals other than EEG, such as ECG, EDA, HR, and PPG, are still scarce, these signals can provide information about the cardiovascular system and the body’s autonomic response to emotions. Their main limitation is that they may not be as specific or sensitive in detecting subtle or changing emotions. Noise and artifacts, such as motion, can degrade the quality of these signals in practical situations, and the signals can also be influenced by non-emotional factors, such as physical exercise and fatigue. Various studies explore the use of ECG and PPG signals for emotion recognition and stress classification. Techniques such as CNNs, LSTMs, attention mechanisms, self-supervised learning, and data augmentation are employed to analyze these signals and extract meaningful features for emotion recognition tasks. Bayesian deep learning frameworks are used for probabilistic modeling and uncertainty estimation in emotion prediction from HB data. These approaches aim to enhance human–computer interaction, improve mental health monitoring, and develop personalized systems for emotion recognition based on individual user characteristics.

3.3. Multi-Physical Data Approaches

- Most studies employ CNNs and RNNs, while others utilize variations of general neural networks, such as spiking neural networks (SNN) and tree-based neural networks. SNNs represent and transmit information through discrete bursts of neuronal activity, known as “spikes” or “pulses”, unlike conventional neural networks, which process information in continuous values. Additionally, several studies leverage advanced analysis models such as the stacked ensemble model and multimodal fusion models, which focus on integrating diverse sources of information to enhance decision-making. Transfer learning models and hybrid attention networks aim to capitalize on knowledge from related tasks or domains to improve performance in a target task. Attention-based neural networks prioritize capturing relevant information and patterns within the data. Semi-supervised and contrastive learning models offer alternative learning paradigms by incorporating both labeled and unlabeled data.

- The studies address diverse applications, including sarcasm, sentiment, and emotion recognition in conversations, financial distress prediction, performance evaluation in job interviews, emotion-based location recommendation systems, user experience (UX) analysis, and emotion detection in video games and educational settings. This suggests that emotion recognition through multi-physical data analysis has a wide spectrum of applications in everyday life.

- Various audio and video signal processing techniques are employed, including pitch analysis, facial feature detection, cross-attention, and representational learning.
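The contrast drawn above between spiking and conventional neural networks can be made concrete with a minimal sketch of a single leaky integrate-and-fire neuron, a common textbook spiking model. This is an illustration under assumed parameters (`threshold`, `leak`, and `reset` are hypothetical values), not the neuron model of any specific reviewed study.

```python
def lif_spike_train(input_current, threshold=1.0, leak=0.9, reset=0.0):
    """Simulate one leaky integrate-and-fire neuron in discrete time.

    Returns a list of 0/1 values, one per time step: 1 where the neuron
    emits a spike, 0 otherwise.
    """
    potential = reset
    spikes = []
    for current in input_current:
        # Leaky integration: the old potential decays, new input is added.
        potential = leak * potential + current
        if potential >= threshold:
            spikes.append(1)   # discrete spike instead of a continuous output
            potential = reset  # membrane potential resets after firing
        else:
            spikes.append(0)
    return spikes

# Constant input of 0.5 per step (made-up value) for six time steps.
spikes = lif_spike_train([0.5] * 6)
silent = lif_spike_train([0.0] * 5)
```

With these assumed parameters the neuron fires on every third step, so the information is carried by the timing of discrete events rather than by a continuous activation value.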

3.4. Multi-Physiological Data Approaches

- The fusion of physiological signals, such as EEG, ECG, PPG, GSR, EMG, BVP, EOG, respiration, temperature, and movement signals, is a predominant trend in these studies. The combination of multiple physiological signals allows for a richer representation of emotions.

- Most studies apply deep learning models, such as CNNs, RNNs, and autoencoder neural networks (AE), for the processing and analysis of these signals. Supervised and unsupervised learning approaches are also used.

- These studies focus on a variety of applications, such as emotion recognition in healthcare environments, brain–computer interfaces for music, emotion detection in interactive virtual environments, stress assessment in mobility environments for visually impaired people, among others. This indicates that emotion recognition based on physiological signals has applications in healthcare, technology, and beyond.

- Some studies focus on personalized emotion recognition, suggesting tailoring of models for each individual. This may be relevant for personalized health and wellness applications. Others focus on interactive applications and virtual environments useful for entertainment and virtual therapy.

- It is important to mention that the studies within this classification are quite limited in comparison to the previously described modalities. Although it appears that they are using similar physiological signals, the databases differ in terms of their approaches and generation methods. Therefore, there is an opportunity to establish a protocol for generating these databases, allowing for meaningful comparisons among studies.

3.5. Multi-Physical–Physiological Data Approaches

- Studies tend to combine multiple types of signals, such as EEG, facial expressions, voice signals, GSR, and other physiological data. Combining signals aims to take advantage of the complementarity of different modalities to improve accuracy in emotion detection.

- Machine learning models, in particular CNNs, are widely used in signal fusion for emotion recognition. CNN models can effectively process data from multiple modalities.

- Applications are also being explored in the health and wellness domain, such as emotion detection for emotional health analysis of people in smart environments.

- The use of standardized and widely accepted databases is important for comparing results between different studies; however, these are still limited.

- The trend towards non-intrusive sensors and wireless technology enables data collection in more natural and less intrusive environments, which facilitates the practical application of these systems in everyday environments.
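One simple way to combine signals from different modalities, as described in the list above, is feature-level fusion: each modality's features are normalized and then concatenated per sample before classification. The sketch below is illustrative only. The function names and the toy EEG and GSR values are hypothetical, and the reviewed studies use a variety of more elaborate fusion schemes.

```python
import statistics

def zscore_columns(rows):
    """Standardize each feature column to zero mean and unit variance."""
    columns = list(zip(*rows))
    means = [statistics.fmean(c) for c in columns]
    # Guard against constant columns, whose standard deviation is zero.
    stds = [statistics.pstdev(c) or 1.0 for c in columns]
    return [[(v - m) / s for v, m, s in zip(row, means, stds)] for row in rows]

def fuse_features(*modalities):
    """Feature-level fusion: normalize each modality, then concatenate
    the per-sample feature vectors."""
    normalized = [zscore_columns(m) for m in modalities]
    return [sum(parts, []) for parts in zip(*normalized)]

# Three samples with two EEG features and one GSR feature (made-up values).
eeg = [[10.0, 200.0], [12.0, 220.0], [14.0, 240.0]]
gsr = [[0.1], [0.3], [0.5]]
fused = fuse_features(eeg, gsr)
```

Normalizing before concatenation keeps a modality with large numeric ranges from dominating the fused vector, which is one reason this step is usually applied per modality.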

4. Discussion

- Facial expression analysis approaches are currently being applied across various domains, including naturalistic settings (“in the wild”), on-road driver monitoring, virtual reality environments, smart homes, IoT and edge devices, and assistive robots. There is also a focus on mental health assessment, including autism, depression, and schizophrenia, and distinguishing between genuine and unfelt facial expressions of emotion. Efforts are being made to improve performance in processing faces acquired at a distance despite the challenges posed by low-quality images. Furthermore, there is an emerging interest in utilizing facial expression analysis in human–computer interaction (HCI), learning environments, and multicultural contexts.

- The recognition of emotions through speech and text has experienced tremendous growth, largely due to the abundance of information facilitated by advancements in technology and social media. This has enabled individuals to express their opinions and sentiments through various media, including podcast recordings, live videos, and readily available data sources such as social media platforms like Twitter, Facebook, Instagram, and blogs. Additionally, researchers have utilized unconventional sources like stock market data and tourism-related reviews. The variety and richness of these data sources indicate a wide range of segments where such emotion recognition analyses can be applied effectively.

- EEG signals continue to be a prominent modality for emotion recognition due to their highly accurate insights into emotional states. Between 2022 and 2023, studies in this field experienced exponential growth. The identified trends include utilizing EEG for enhancing human–computer interaction and recognizing emotions in various contexts, such as patients with consciousness disorders, movie viewing, virtual environments, and driving scenarios. EEG is also being used for detecting and monitoring mental health issues. There is a growing focus on personalization, leading towards more individualized and user-specific emotion recognition systems. Other physiological signals, such as ECG, EDA, and HR, are also gaining attention, albeit at a slower pace.

- Among the multi-physical, multi-physiological, and multi-physical–physiological approaches, the multi-physical approach appears to be laying the groundwork, as evidenced by the larger number of studies in this area. The other two approaches, which incorporate fusions with physiological signals, are still relatively scarce but seem to be paving the way for future research. Multimodal approaches, which integrate both physical and physiological signals, are finding diverse applications in emotion recognition. These range from healthcare systems, individual and group mood research, personality recognition, pain intensity recognition, anxiety detection, work stress detection, stress classification and security monitoring in public spaces, to vehicle security monitoring, movie audience emotion recognition, applications for autism spectrum disorder detection, music interfacing, and virtual environments.

- Bidirectional encoder representations from transformers (BERT): Used in sentiment analysis and emotion recognition from text, BERT models can understand the context of words in sentences by pre-training on a large text corpus and then fine-tuning for specific tasks like sentiment analysis.

- CNNs: These are commonly applied in facial emotion recognition, emotion recognition from physiological signals, and even in speech emotion recognition by analyzing spectrograms.

- RNNs and variants (LSTM, GRU): These models are suited for sequential data like speech and text. LSTMs and GRUs are particularly effective in speech emotion recognition and sentiment analysis of time-series data.

- Graph convolutional networks (GCNs): Applied in emotion recognition from EEG signals and conversation-based emotion recognition, these can model relational data and capture the complex dependencies in graph-structured data, like brain connectivity patterns or conversational contexts.

- Attention mechanisms and transformers: Enhancing the ability of models to focus on relevant parts of the data, attention mechanisms are integral to models like transformers for tasks that require understanding the context, such as sentiment analysis in long documents or emotion recognition in conversations.

- Ensemble models: Combining predictions from multiple models to improve accuracy, ensemble methods are used in multimodal emotion recognition, where inputs from different modalities (e.g., audio, text, and video) are integrated to make more accurate predictions.

- Autoencoders and generative adversarial networks (GANs): For tasks like data augmentation in emotion recognition from EEG or for generating synthetic data to improve model robustness, these unsupervised learning models can learn compact representations of data or generate new data samples, respectively.

- Multimodal fusion models: In applications requiring the integration of multiple data types (e.g., speech, text, and video for emotion recognition), fusion models combine features from different modalities to capture more comprehensive information for prediction tasks.

- Transfer learning: Utilizing pre-trained models on large datasets and fine-tuning them for specific affective computing tasks, transfer learning is particularly useful in scenarios with limited labeled data, such as sentiment analysis in niche domains.

- Spatio-temporal models: For tasks that involve data with both spatial and temporal dimensions (like video-based emotion recognition or physiological signal analysis), models that capture spatio-temporal dynamics are employed, combining approaches like CNNs for spatial features and RNNs/LSTMs for temporal features.
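The ensemble and decision-level fusion ideas in the list above can be sketched as a weighted average of the class probabilities produced by separate per-modality models ("soft voting"). The example is a minimal illustration: the `soft_vote` helper, the label set, and the probability values are hypothetical and do not come from any reviewed study.

```python
def soft_vote(prob_by_model, weights=None):
    """Weighted average of per-model class-probability vectors.

    Returns (fused_probabilities, index_of_most_likely_class).
    """
    n_models = len(prob_by_model)
    weights = weights or [1.0 / n_models] * n_models
    total = sum(weights)
    n_classes = len(prob_by_model[0])
    fused = [
        sum(w * probs[c] for w, probs in zip(weights, prob_by_model)) / total
        for c in range(n_classes)
    ]
    best = max(range(n_classes), key=fused.__getitem__)
    return fused, best

labels = ["angry", "happy", "neutral"]
# Made-up outputs of three unimodal classifiers for one utterance.
audio = [0.6, 0.3, 0.1]
text = [0.2, 0.5, 0.3]
video = [0.3, 0.5, 0.2]
fused, idx = soft_vote([audio, text, video])
```

Here the audio model alone would predict "angry", but the fused prediction is "happy" because two of the three modalities agree. Passing unequal `weights` is one way to favor a modality that is known to be more reliable.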

5. Conclusions

Author contributions, institutional review board statement, informed consent statement, data availability statement, acknowledgments, conflicts of interest.

- Zhou, T.H.; Liang, W.; Liu, H.; Wang, L.; Ryu, K.H.; Nam, K.W. EEG Emotion Recognition Applied to the Effect Analysis of Music on Emotion Changes in Psychological Healthcare. Int. J. Environ. Res. Public Health 2022, 20, 378.

- Hajek, P.; Munk, M. Speech Emotion Recognition and Text Sentiment Analysis for Financial Distress Prediction. Neural Comput. Appl. 2023, 35, 21463–21477.

- Naim, I.; Tanveer, M.d.I.; Gildea, D.; Hoque, M.E. Automated Analysis and Prediction of Job Interview Performance. IEEE Trans. Affect. Comput. 2018, 9, 191–204.

- Ayata, D.; Yaslan, Y.; Kamasak, M.E. Emotion Recognition from Multimodal Physiological Signals for Emotion Aware Healthcare Systems. J. Med. Biol. Eng. 2020, 40, 149–157.

- Maithri, M.; Raghavendra, U.; Gudigar, A.; Samanth, J.; Barua, D.P.; Murugappan, M.; Chakole, Y.; Acharya, U.R. Automated Emotion Recognition: Current Trends and Future Perspectives. Comput. Methods Programs Biomed. 2022, 215, 106646.

- Du, Z.; Wu, S.; Huang, D.; Li, W.; Wang, Y. Spatio-Temporal Encoder-Decoder Fully Convolutional Network for Video-Based Dimensional Emotion Recognition. IEEE Trans. Affect. Comput. 2021, 12, 565–578.

- Montero Quispe, K.G.; Utyiama, D.M.S.; dos Santos, E.M.; Oliveira, H.A.B.F.; Souto, E.J.P. Applying Self-Supervised Representation Learning for Emotion Recognition Using Physiological Signals. Sensors 2022, 22, 9102.

- Zhang, Y.; Wang, J.; Liu, Y.; Rong, L.; Zheng, Q.; Song, D.; Tiwari, P.; Qin, J. A Multitask Learning Model for Multimodal Sarcasm, Sentiment and Emotion Recognition in Conversations. Inf. Fusion 2023, 93, 282–301.

- Leong, S.C.; Tang, Y.M.; Lai, C.H.; Lee, C.K.M. Facial Expression and Body Gesture Emotion Recognition: A Systematic Review on the Use of Visual Data in Affective Computing. Comput. Sci. Rev. 2023, 48, 100545.

- Aranha, R.V.; Correa, C.G.; Nunes, F.L.S. Adapting Software with Affective Computing: A Systematic Review. IEEE Trans. Affect. Comput. 2021, 12, 883–899.

- Kratzwald, B.; Ilić, S.; Kraus, M.; Feuerriegel, S.; Prendinger, H. Deep Learning for Affective Computing: Text-Based Emotion Recognition in Decision Support. Decis. Support. Syst. 2018, 115, 24–35.

- Ab. Aziz, N.A.; K., T.; Ismail, S.N.M.S.; Hasnul, M.A.; Ab. Aziz, K.; Ibrahim, S.Z.; Abd. Aziz, A.; Raja, J.E. Asian Affective and Emotional State (A2ES) Dataset of ECG and PPG for Affective Computing Research. Algorithms 2023, 16, 130.

- Jung, T.-P.; Sejnowski, T.J. Utilizing Deep Learning Towards Multi-Modal Bio-Sensing and Vision-Based Affective Computing. IEEE Trans. Affect. Comput. 2022, 13, 96–107.

- Shah, S.; Ghomeshi, H.; Vakaj, E.; Cooper, E.; Mohammad, R. An Ensemble-Learning-Based Technique for Bimodal Sentiment Analysis. Big Data Cogn. Comput. 2023, 7, 85.

- Tang, J.; Hou, M.; Jin, X.; Zhang, J.; Zhao, Q.; Kong, W. Tree-Based Mix-Order Polynomial Fusion Network for Multimodal Sentiment Analysis. Systems 2023, 11, 44.

- Khamphakdee, N.; Seresangtakul, P. An Efficient Deep Learning for Thai Sentiment Analysis. Data 2023, 8, 90.

- Jo, A.-H.; Kwak, K.-C. Speech Emotion Recognition Based on Two-Stream Deep Learning Model Using Korean Audio Information. Appl. Sci. 2023, 13, 2167.

- Abdulrahman, A.; Baykara, M.; Alakus, T.B. A Novel Approach for Emotion Recognition Based on EEG Signal Using Deep Learning. Appl. Sci. 2022, 12, 10028.

- Middya, A.I.; Nag, B.; Roy, S. Deep Learning Based Multimodal Emotion Recognition Using Model-Level Fusion of Audio–Visual Modalities. Knowl. Based Syst. 2022, 244, 108580.

- Ali, M.; Mosa, A.H.; Al Machot, F.; Kyamakya, K. EEG-Based Emotion Recognition Approach for e-Healthcare Applications. In Proceedings of the 2016 Eighth International Conference on Ubiquitous and Future Networks (ICUFN), Vienna, Austria, 5–8 July 2016; pp. 946–950.

- Zepf, S.; Hernandez, J.; Schmitt, A.; Minker, W.; Picard, R.W. Driver Emotion Recognition for Intelligent Vehicles. ACM Comput. Surv. (CSUR) 2020, 53, 1–30.

- Zaman, K.; Zhaoyun, S.; Shah, B.; Hussain, T.; Shah, S.M.; Ali, F.; Khan, U.S. A Novel Driver Emotion Recognition System Based on Deep Ensemble Classification. Complex. Intell. Syst. 2023, 9, 6927–6952.

- Du, Y.; Crespo, R.G.; Martínez, O.S. Human Emotion Recognition for Enhanced Performance Evaluation in E-Learning. Prog. Artif. Intell. 2022, 12, 199–211.

- Alaei, A.; Wang, Y.; Bui, V.; Stantic, B. Target-Oriented Data Annotation for Emotion and Sentiment Analysis in Tourism Related Social Media Data. Future Internet 2023, 15, 150.

- Caratù, M.; Brescia, V.; Pigliautile, I.; Biancone, P. Assessing Energy Communities’ Awareness on Social Media with a Content and Sentiment Analysis. Sustainability 2023, 15, 6976.

- Bota, P.J.; Wang, C.; Fred, A.L.N.; Placido Da Silva, H. A Review, Current Challenges, and Future Possibilities on Emotion Recognition Using Machine Learning and Physiological Signals. IEEE Access 2019, 7, 140990–141020.

- Egger, M.; Ley, M.; Hanke, S. Emotion Recognition from Physiological Signal Analysis: A Review. Electron. Notes Theor. Comput. Sci. 2019, 343, 35–55.

- Shu, L.; Xie, J.; Yang, M.; Li, Z.; Li, Z.; Liao, D.; Xu, X.; Yang, X. A Review of Emotion Recognition Using Physiological Signals. Sensors 2018, 18, 2074.

- Canal, F.Z.; Müller, T.R.; Matias, J.C.; Scotton, G.G.; de Sa Junior, A.R.; Pozzebon, E.; Sobieranski, A.C. A Survey on Facial Emotion Recognition Techniques: A State-of-the-Art Literature Review. Inf. Sci. 2022, 582, 593–617.

- Assabumrungrat, R.; Sangnark, S.; Charoenpattarawut, T.; Polpakdee, W.; Sudhawiyangkul, T.; Boonchieng, E.; Wilaiprasitporn, T. Ubiquitous Affective Computing: A Review. IEEE Sens. J. 2022, 22, 1867–1881.

- Schmidt, P.; Reiss, A.; Dürichen, R.; Van Laerhoven, K. Wearable-Based Affect Recognition—A Review. Sensors 2019, 19, 4079.

- Rouast, P.V.; Adam, M.T.P.; Chiong, R. Deep Learning for Human Affect Recognition: Insights and New Developments. IEEE Trans. Affect. Comput. 2021, 12, 524–543.

- Ahmed, N.; Aghbari, Z.A.; Girija, S. A Systematic Survey on Multimodal Emotion Recognition Using Learning Algorithms. Intell. Syst. Appl. 2023, 17, 200171.

- Kitchenham, B. Procedures for Performing Systematic Reviews; Keele University: Keele, UK, 2004; Volume 33, pp. 1–26.

- Mollahosseini, A.; Hasani, B.; Mahoor, M.H. AffectNet: A Database for Facial Expression, Valence, and Arousal Computing in the Wild. IEEE Trans. Affect. Comput. 2019, 10, 18–31.

- Al Jazaery, M.; Guo, G. Video-Based Depression Level Analysis by Encoding Deep Spatiotemporal Features. IEEE Trans. Affect. Comput. 2021, 12, 262–268.

- Kollias, D.; Zafeiriou, S. Exploiting Multi-CNN Features in CNN-RNN Based Dimensional Emotion Recognition on the OMG in-the-Wild Dataset. IEEE Trans. Affect. Comput. 2021, 12, 595–606.

- Li, S.; Deng, W. A Deeper Look at Facial Expression Dataset Bias. IEEE Trans. Affect. Comput. 2022, 13, 881–893.

- Kulkarni, K.; Corneanu, C.A.; Ofodile, I.; Escalera, S.; Baro, X.; Hyniewska, S.; Allik, J.; Anbarjafari, G. Automatic Recognition of Facial Displays of Unfelt Emotions. IEEE Trans. Affect. Comput. 2021, 12, 377–390.

- Punuri, S.B.; Kuanar, S.K.; Kolhar, M.; Mishra, T.K.; Alameen, A.; Mohapatra, H.; Mishra, S.R. Efficient Net-XGBoost: An Implementation for Facial Emotion Recognition Using Transfer Learning. Mathematics 2023, 11, 776.

- Mukhiddinov, M.; Djuraev, O.; Akhmedov, F.; Mukhamadiyev, A.; Cho, J. Masked Face Emotion Recognition Based on Facial Landmarks and Deep Learning Approaches for Visually Impaired People. Sensors 2023, 23, 1080.

- Babu, E.K.; Mistry, K.; Anwar, M.N.; Zhang, L. Facial Feature Extraction Using a Symmetric Inline Matrix-LBP Variant for Emotion Recognition. Sensors 2022, 22, 8635.

- Mustafa Hilal, A.; Elkamchouchi, D.H.; Alotaibi, S.S.; Maray, M.; Othman, M.; Abdelmageed, A.A.; Zamani, A.S.; Eldesouki, M.I. Manta Ray Foraging Optimization with Transfer Learning Driven Facial Emotion Recognition. Sustainability 2022, 14, 14308.

- Bisogni, C.; Cimmino, L.; De Marsico, M.; Hao, F.; Narducci, F. Emotion Recognition at a Distance: The Robustness of Machine Learning Based on Hand-Crafted Facial Features vs Deep Learning Models. Image Vis. Comput. 2023, 136, 104724.

- Sun, Q.; Liang, L.; Dang, X.; Chen, Y. Deep Learning-Based Dimensional Emotion Recognition Combining the Attention Mechanism and Global Second-Order Feature Representations. Comput. Electr. Eng. 2022, 104, 108469.

- Sudha, S.S.; Suganya, S.S. On-Road Driver Facial Expression Emotion Recognition with Parallel Multi-Verse Optimizer (PMVO) and Optical Flow Reconstruction for Partial Occlusion in Internet of Things (IoT). Meas. Sens. 2023, 26, 100711.

- Barra, P.; De Maio, L.; Barra, S. Emotion Recognition by Web-Shaped Model. Multimed. Tools Appl. 2023, 82, 11321–11336.

- Bhattacharya, A.; Choudhury, D.; Dey, D. Edge-Enhanced Bi-Dimensional Empirical Mode Decomposition-Based Emotion Recognition Using Fusion of Feature Set. Soft Comput. 2018, 22, 889–903.

- Lucey, P.; Cohn, J.F.; Kanade, T.; Saragih, J.; Ambadar, Z.; Matthews, I. The Extended Cohn-Kanade Dataset (CK+): A Complete Dataset for Action Unit and Emotion-Specified Expression. In Proceedings of the 2010 IEEE Computer Society Conference on Computer Vision and Pattern Recognition-Workshops, San Francisco, CA, USA, 13–18 June 2010; pp. 94–101.

- Zhao, G.; Huang, X.; Taini, M.; Li, S.Z.; Pietikäinen, M. Facial Expression Recognition from Near-Infrared Videos. Image Vis. Comput. 2011, 29, 607–619.

- Barros, P.; Churamani, N.; Lakomkin, E.; Siqueira, H.; Sutherland, A.; Wermter, S. The OMG-Emotion Behavior Dataset. In Proceedings of the 2018 International Joint Conference on Neural Networks (IJCNN), Rio de Janeiro, Brazil, 8–13 July 2018; pp. 1–7.

- Ullah, Z.; Qi, L.; Hasan, A.; Asim, M. Improved Deep CNN-Based Two Stream Super Resolution and Hybrid Deep Model-Based Facial Emotion Recognition. Eng. Appl. Artif. Intell. 2022, 116, 105486.

- Zheng, W.; Zong, Y.; Zhou, X.; Xin, M. Cross-Domain Color Facial Expression Recognition Using Transductive Transfer Subspace Learning. IEEE Trans. Affect. Comput. 2018, 9, 21–37.

- Tan, K.L.; Lee, C.P.; Lim, K.M. RoBERTa-GRU: A Hybrid Deep Learning Model for Enhanced Sentiment Analysis. Appl. Sci. 2023, 13, 3915.

- Ren, M.; Huang, X.; Li, W.; Liu, J. Multi-Loop Graph Convolutional Network for Multimodal Conversational Emotion Recognition. J. Vis. Commun. Image Represent. 2023, 94, 103846.

- Mai, S.; Hu, H.; Xu, J.; Xing, S. Multi-Fusion Residual Memory Network for Multimodal Human Sentiment Comprehension. IEEE Trans. Affect. Comput. 2022, 13, 320–334.

- Yang, L.; Jiang, D.; Sahli, H. Integrating Deep and Shallow Models for Multi-Modal Depression Analysis—Hybrid Architectures. IEEE Trans. Affect. Comput. 2021, 12, 239–253.

- Mocanu, B.; Tapu, R.; Zaharia, T. Multimodal Emotion Recognition Using Cross Modal Audio-Video Fusion with Attention and Deep Metric Learning. Image Vis. Comput. 2023, 133, 104676.

- Noroozi, F.; Marjanovic, M.; Njegus, A.; Escalera, S.; Anbarjafari, G. Audio-Visual Emotion Recognition in Video Clips. IEEE Trans. Affect. Comput. 2019, 10, 60–75.

- Davison, A.K.; Lansley, C.; Costen, N.; Tan, K.; Yap, M.H. SAMM: A Spontaneous Micro-Facial Movement Dataset. IEEE Trans. Affect. Comput. 2018, 9, 116–129.

- Happy, S.L.; Routray, A. Fuzzy Histogram of Optical Flow Orientations for Micro-Expression Recognition. IEEE Trans. Affect. Comput. 2019, 10, 394–406.

- Schmidt, P.; Reiss, A.; Duerichen, R.; Marberger, C.; Van Laerhoven, K. Introducing WESAD, a Multimodal Dataset for Wearable Stress and Affect Detection. In Proceedings of the 20th ACM International Conference on Multimodal Interaction, New York, NY, USA, 2 October 2018; ACM: New York, NY, USA, 2018; pp. 400–408.

- Miranda-Correa, J.A.; Abadi, M.K.; Sebe, N.; Patras, I. AMIGOS: A Dataset for Affect, Personality and Mood Research on Individuals and Groups. IEEE Trans. Affect. Comput. 2021, 12, 479–493.

- Subramanian, R.; Wache, J.; Abadi, M.K.; Vieriu, R.L.; Winkler, S.; Sebe, N. ASCERTAIN: Emotion and Personality Recognition Using Commercial Sensors. IEEE Trans. Affect. Comput. 2018, 9, 147–160.

- Koelstra, S.; Muhl, C.; Soleymani, M.; Lee, J.-S.; Yazdani, A.; Ebrahimi, T.; Pun, T.; Nijholt, A.; Patras, I. DEAP: A Database for Emotion Analysis; Using Physiological Signals. IEEE Trans. Affect. Comput. 2012, 3, 18–31.

- Zhang, Y.; Cheng, C.; Wang, S.; Xia, T. Emotion Recognition Using Heterogeneous Convolutional Neural Networks Combined with Multimodal Factorized Bilinear Pooling. Biomed. Signal Process Control 2022, 77, 103877.

- The PRISMA 2020 Statement: An Updated Guideline for Reporting Systematic Reviews. Available online: https://www.prisma-statement.org/prisma-2020-statement (accessed on 12 August 2024).

| Database | Resulted Studies with Key Terms | After Years Filter | After Article Type | Relevant Order |
|---|---|---|---|---|
| IEEE | 2112 | 1152 | 536 | 200 |
| Springer | 4121 | 1808 | 1694 | 200 |
| Science Direct | 1041 | 582 | 480 | 200 |
| MDPI | 686 | 643 | 635 | 200 |

| Database | Quantity |
|---|---|
| IEEE | 148 |
| Springer | 112 |
| Science Direct | 166 |
| MDPI | 183 |

| Modality | 2018 | 2019 | 2020 | 2021 | 2022 | 2023 | Total |
|---|---|---|---|---|---|---|---|
| Multi-physical | 8 | 6 | | 8 | 22 | 27 | 71 |
| Multi-physical–physiological | 2 | | | 3 | 6 | 7 | 18 |
| Multi-physiological | 2 | | 6 | 3 | 6 | 4 | 21 |
| Unimodal | 37 | 26 | 29 | 37 | 176 | 194 | 499 |
| Total | 49 | 32 | 35 | 51 | 210 | 232 | 609 |

| Article Title | Databases Used | Ref. |
|---|---|---|
| AffectNet: A Database for Facial Expression, Valence, and Arousal Computing in the Wild. | AffectNet | [ ] |
| Video-Based Depression Level Analysis by Encoding Deep Spatiotemporal Features. | AVEC2013, AVEC2014 | [ ] |
| Exploiting Multi-CNN Features in CNN-RNN Based Dimensional Emotion Recognition on the OMG in-the-Wild Dataset. | Aff-Wild, Aff-Wild2, OMG | [ ] |
| A Deeper Look at Facial Expression Dataset Bias. | CK+, JAFFE, MMI, Oulu-CASIA, AffectNet, FER2013, RAF-DB 2.0, SFEW 2.0 | [ ] |
| Automatic Recognition of Facial Displays of Unfelt Emotions. | CK+, OULU-CASIA, BP4D | [ ] |
| Spatio-Temporal Encoder-Decoder Fully Convolutional Network for Video-Based Dimensional Emotion Recognition. | OMG, RECOLA, SEWA | [ ] |
| Efficient Net-XGBoost: An Implementation for Facial Emotion Recognition Using Transfer Learning. | CK+, FER2013, JAFFE, KDEF | [ ] |
| Masked Face Emotion Recognition Based on Facial Landmarks and Deep Learning Approaches for Visually Impaired People. | AffectNet | [ ] |
| Facial Feature Extraction Using a Symmetric Inline Matrix-LBP Variant for Emotion Recognition. | JAFFE | [ ] |
| Manta Ray Foraging Optimization with Transfer Learning Driven Facial Emotion Recognition. | CK+, FER-2013 | [ ] |
| Emotion recognition at a distance: The robustness of machine learning based on hand-crafted facial features vs deep learning models. | CK+ | [ ] |
| Deep learning-based dimensional emotion recognition combining the attention mechanism and global second-order feature representations. | AffectNet | [ ] |
| On-road driver facial expression emotion recognition with parallel multi-verse optimizer (PMVO) and optical flow reconstruction for partial occlusion in internet of things (IoT). | CK+, KMU-FED | [ ] |
| Emotion recognition by web-shaped model. | CK+, KDEF | [ ] |
| Edge-enhanced bi-dimensional empirical mode decomposition-based emotion recognition using fusion of feature set | eNTERFACE, CK, JAFFE | [ ] |
| A novel driver emotion recognition system based on deep ensemble classification | AffectNet, CK+, DFER, FER-2013, JAFFE, and custom dataset | [ ] |

1. Facial emotion recognition for mental health assessment (depression, schizophrenia)
2. Emotion analysis in human-computer interaction
3. Emotion recognition in the context of autism
4. Driver emotion recognition for intelligent vehicles
5. Assessment of emotional engagement in learning environments
6. Facial emotion recognition for apparent personality trait analysis
7. Facial emotion recognition for gender, age, and ethnicity estimation
8. Emotion recognition in virtual reality and smart homes
9. Emotion recognition in healthcare and clinical settings
10. Emotion recognition in real-world and COVID-19 masked scenarios
11. Personalized and group-based emotion recognition
12. Music-enhanced emotion recognition
13. Cross-dataset emotion recognition
14. Emotion recognition performance assessment from faces acquired at a distance
15. Facial emotion recognition for IoT and edge devices
16. Idiosyncratic bias in emotion recognition
17. Emotion recognition in socially assistive robots
18. In the wild facial emotion recognition
19. Video-based emotion recognition
20. Spatio-temporal emotion recognition in videos
21. Spontaneous emotion recognition
22. Emotion recognition using facial components
23. Comparing emotion recognition from genuine and unfelt facial expressions

| Database Name | Description | Advantages | Limitation |
|---|---|---|---|
| MELD (Multimodal Emotion Lines Dataset) [ ] | Focuses on emotion recognition in movie dialogues. It contains transcriptions of dialogues and their corresponding audio and video tracks. Emotions are labeled at the sentence and speaker levels. | Large amount of data, multimodal (text, audio, video). | Emotions induced by movies. Manually labeled. |
| IEMOCAP (Interactive Emotional Dyadic Motion Capture), 2005 [ ] | Focuses on emotional interactions between two individuals during acting sessions. It contains video and audio recordings of actors performing emotional scenes. | Realistic data, emotional interactions, a wide range of emotions. | Not real induced emotions (acting). |
| CMU-MOSI (Multimodal Corpus of Sentiment Intensity), 2014, 2017 [ ] | Focuses on sentiment intensity in speeches and interviews. It includes transcriptions of audio and video, along with sentiment annotations. Updated in the 2017 CMU-MOSEI. | Emotions are derived from real speeches and interviews. | Relatively small size. |
| AVEC (Affective Behavior in the Context of E-Learning with Social Signals), 2007–2016 [ ] | AVEC is a series of competitions focused on the detection of emotions and behaviors in the context of online learning. It includes video and audio data of students participating in e-learning activities. | Emotions are naturally induced during online learning activities. | Context-specific data, enables emotion assessment in e-learning settings. |
| RAVDESS (The Ryerson Audio-Visual Database of Emotional Speech and Song), 2016 [ ] | Audio and video database that focuses on emotion recognition in speech and song. It includes performances by actors expressing various emotions. | Diverse data in terms of emotions, modalities, and contexts. | Does not contain natural dialogues. |
| SAVEE (Surrey Audio–Visual Expressed Emotion), 2010 [ ] | Focuses on emotion recognition in speech. It contains recordings of speakers expressing emotions through phrases and words. | Clean audio data. | |
| SAMM (Spontaneous Micro-expression Dataset) [ ] | Focuses on spontaneous micro-expressions that last only a fraction of a second. It contains videos of people expressing emotions in real emotional situations. | Real spontaneous micro-expressions. | |
| CASME (Chinese Academy of Sciences Micro-Expression) [ ] | Focus on the detection of micro-expressions in response to emotional stimuli. They contain videos of micro-expressions. | Induced by emotional stimuli. | Not multicultural. |

| Database Name | Description | Advantages | Limitation |

|---|

WESAD (Wearable Stress and Affect Detection)

[ ] | It focuses on stress and affect recognition from physiological signals like ECG, EMG, and EDA, as well as motion signals from accelerometers. Data were collected while participants performed tasks and experienced emotions in a controlled laboratory setting, wearing wearable sensors. | Facilitates the development of wearable emotion recognition systems. | The dataset is relatively small, and participant diversity may be limited. | AMIGOS

[ ] | It is a multimodal dataset for personality traits and mood. Emotions are induced by emotional videos in two social contexts: one with individual viewers and one with groups of viewers. Participants’ EEG, ECG, and GSR signals were recorded using wearable sensors. Frontal HD videos and full-body videos in RGB and depth were also recorded. | Participants’ emotions were scored by self-assessment of valence, arousal, control, familiarity, liking, and basic emotions felt during the videos, as well as external assessments of valence and arousal. | Reduced number of participants. | DREAMER

[ ] | Records physiological ECG, EMG, and EDA signals and self-reported emotional responses. Collected during the presentation of emotional video clips. | Enables the study of emotional responses in a controlled environment and their comparison with self-reported emotions. | Emotions may be biased towards those induced by video clips, and the dataset size is limited. | | ASCERTAIN [ ] | Focus on linking personality traits and emotional states through physiological responses like EEG, ECG, GSR, and facial activity data while participants watched emotionally charged movie clips. | Suitable for studying emotions in stressful situations and their impact on human activity. | The variety of emotions induced is limited. | | DEAP (Database for Emotion Analysis using Physiological Signals), [ , ] | Includes physiological signals like EEG, ECG, EMG, and EDA, as well as audiovisual data.

Data were collected by exposing participants to audiovisual stimuli designed to elicit various emotions. | Provides a diverse range of emotions and physiological data for emotion analysis. | The size of the database is small. | MAHNOB-HCI (Multimodal Human Computer Interaction Database for Affect Analysis and Recognition)

[ , ]. | Includes multimodal data, such as audio, video, physiological, ECG, EDA, and kinematic data.

Data were collected while participants engaged in various human–computer interaction scenarios. | Offers a rich dataset for studying emotional responses during interactions with technology. | | | The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content. |

García-Hernández, R.A.; Luna-García, H.; Celaya-Padilla, J.M.; García-Hernández, A.; Reveles-Gómez, L.C.; Flores-Chaires, L.A.; Delgado-Contreras, J.R.; Rondon, D.; Villalba-Condori, K.O. A Systematic Literature Review of Modalities, Trends, and Limitations in Emotion Recognition, Affective Computing, and Sentiment Analysis. Appl. Sci. 2024, 14, 7165. https://doi.org/10.3390/app14167165