GPT Essay Checker for Students

How to Interpret the Result of AI Detection

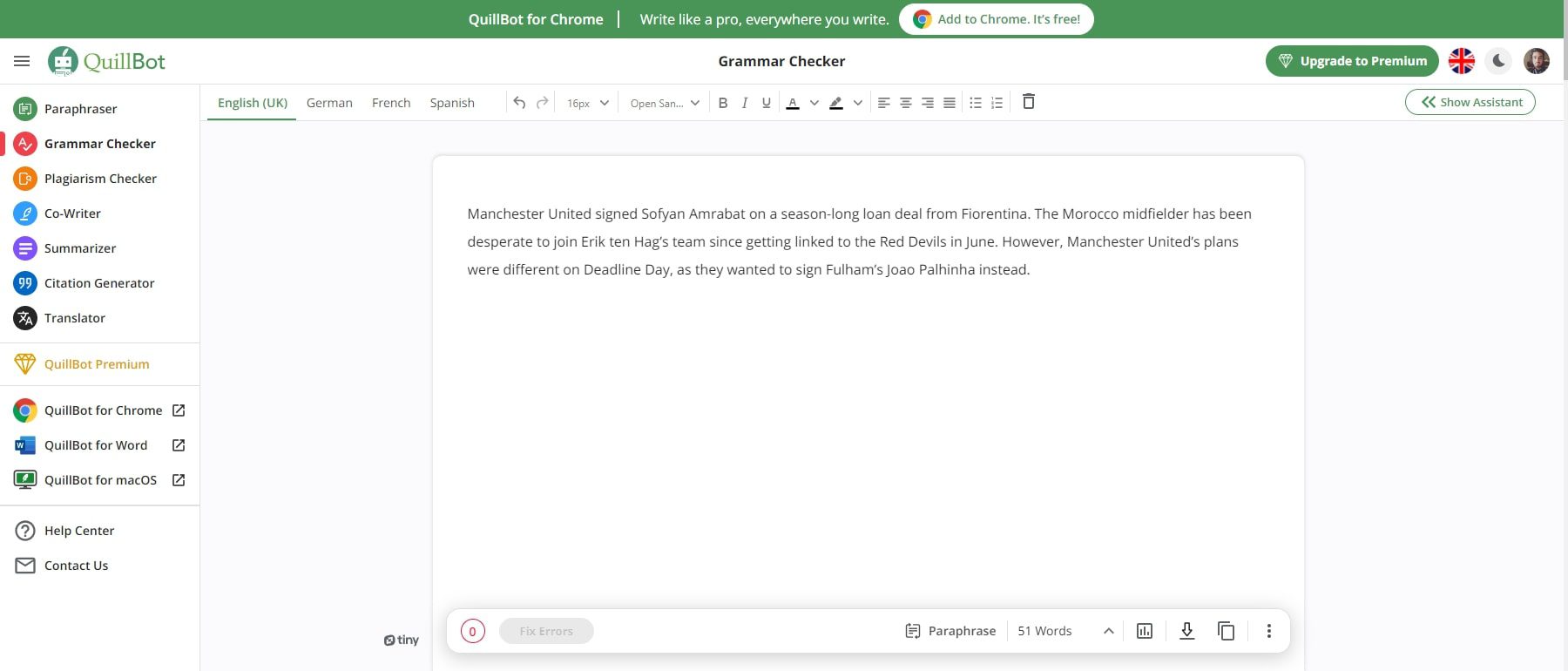

To use our GPT checker, you won’t need to do any preparation work!

It takes just three steps:

- Copy and paste the text you want analyzed.

- Click the button.

- Follow the prompts to interpret the result.

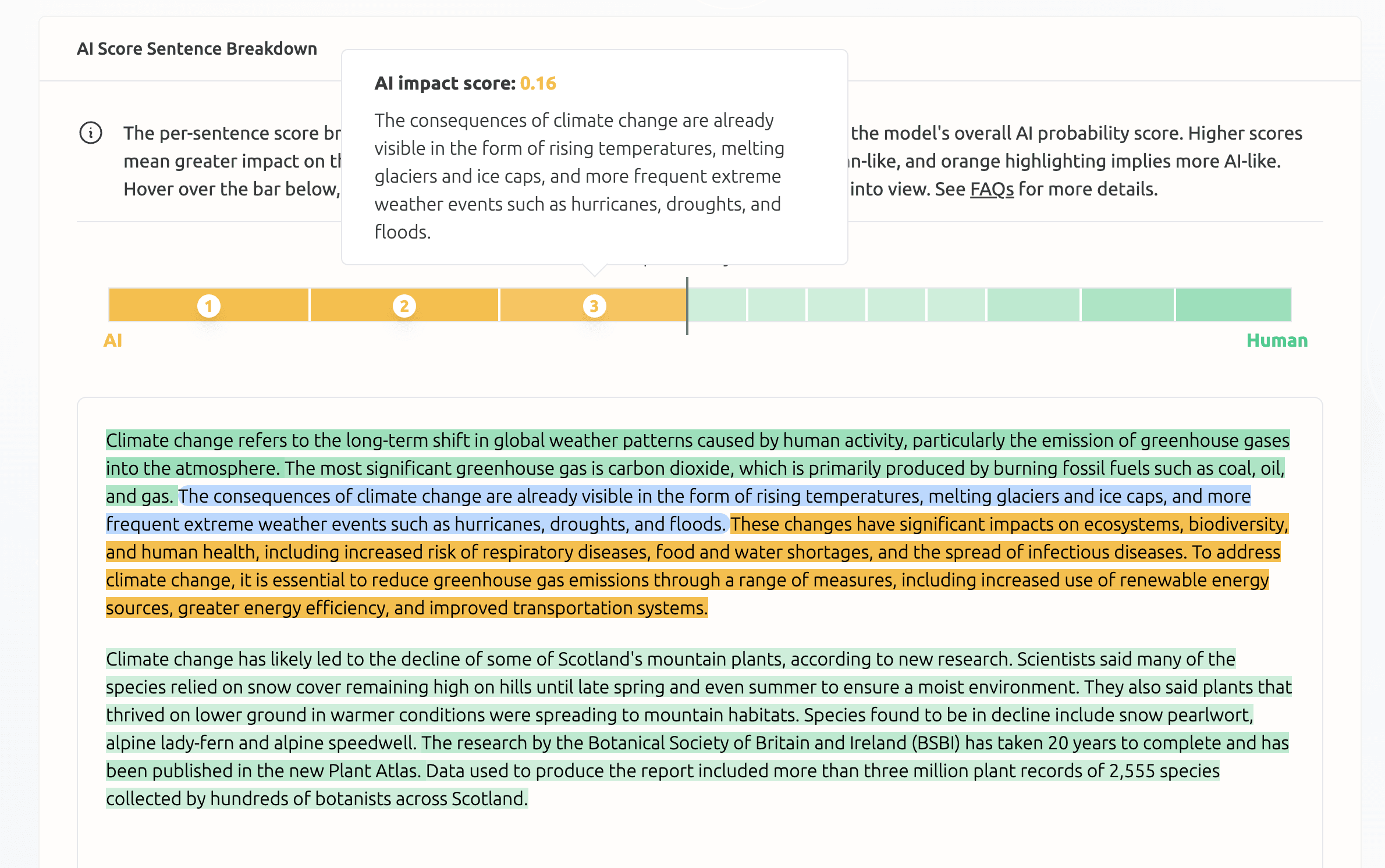

Our AI detector doesn’t give a definitive answer. It is only a free beta version that will be improved later. For now, it provides a preliminary conclusion and highlights the analyzed text using the color-coding system shown above the analysis.

It is up to you to decide whether the text was written by a human or an AI:

- Your text was likely generated by an AI if it is mostly red with some orange words. This means that the word choice throughout the document is highly predictable rather than unique.

- Your text looks unique and human-made if our GPT essay checker highlights plenty of words in orange, green, and blue.
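The color scale can be pictured as a bucketing of word predictability. The sketch below assumes each word comes with a rank, its position in some language model's list of likely next words (0 means the model's top guess). The rank thresholds, the 60% cut-off, and the ordering from red through orange and green to blue are illustrative assumptions modeled on the description above, not this checker's documented method.

```python
# Hypothetical thresholds: a word's "rank" is its position in a language
# model's list of predicted next words (0 = the model's top guess).
BUCKETS = [
    (10, "red"),       # among the model's 10 most likely choices
    (100, "orange"),
    (1000, "green"),
]
FALLBACK = "blue"      # anything rarer counts as unpredictable


def color_for_rank(rank: int) -> str:
    """Map a predicted-word rank to a highlight color."""
    for limit, color in BUCKETS:
        if rank < limit:
            return color
    return FALLBACK


def color_share(ranks: list[int]) -> dict[str, float]:
    """Return the fraction of words that fall into each color."""
    counts = {color: 0 for _, color in BUCKETS}
    counts[FALLBACK] = 0
    for rank in ranks:
        counts[color_for_rank(rank)] += 1
    total = len(ranks) or 1  # avoid dividing by zero on empty input
    return {color: n / total for color, n in counts.items()}


def looks_generated(ranks: list[int], red_threshold: float = 0.6) -> bool:
    """Hypothetical rule of thumb: mostly red suggests AI-generated text."""
    return color_share(ranks)["red"] >= red_threshold
```

For example, `color_share([0, 2, 1, 5, 40, 0, 3])` puts six of the seven words in red, so `looks_generated` returns `True` for that input.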


ChatGPT in Essay Writing – the Shortcomings

- The tool knows nothing about events after 2021, so recent history is not its strong suit. It sometimes gets earlier events wrong, too. For instance, if you ask about Heathrow Terminal 1, the program may tell you it is operating, although it has been closed since 2015.

- The reliability of its answers is questionable. The AI learned from information on the web, which abounds in fake news, bias, and conspiracy theories.

- References also need to be checked. The links the tool generates are sometimes incorrect and sometimes even fake.

- Two AI-generated essays on the same topic can be very similar. Although a plagiarism checker will likely consider both texts original, your teacher will easily see the same structure and arguments.

- ChatGPT essay detectors are under active development. Traditional plagiarism checkers are not good at spotting texts written by ChatGPT, but this does not mean that an AI-generated piece cannot be detected at all.

🕵 How Do GPT Checkers Work?

AI-generated text is highly predictable, because the model picks each word according to how often it tends to follow the preceding words.

Paradoxically, the very thing that makes such text sound natural is what makes it easy for ChatGPT detectors to spot.

Again, conventional plagiarism checkers do not work here simply because the text is original: it does not copy any existing source. At the same time, it is too similar to hundreds of other texts on the same topic.

Here’s an everyday analogy. Children grow up to resemble their parents, but can we prove that a particular person is the child of two particular people? Not without a genetic test. This GPT essay checker works like a paternity test for written content.

❓ GPT Essay Checker FAQ

Updated: Oct 25th, 2023



This page contains a free online GPT checker for essays and other academic writing. Because it is based on new technology, this AI essay detector is much more effective at spotting AI-generated text than traditional plagiarism checkers. With this AI checker, you can find out whether an academic text was written by a human or a chatbot. We also provide a guide on how to interpret the results of the analysis, but it is up to you to draw your own conclusions.

Ensuring Authenticity: Accurate AI Detector for All

Empowering individuals worldwide, our AI detector brings clarity to a landscape saturated with AI-generated content. Our cutting-edge technology sets the benchmark in AI detection and is trained to identify a spectrum of models, including ChatGPT, GPT-4, Bard, LLaMA, and more.


The Most Accurate AI Content Detector

Advanced AI Detection System

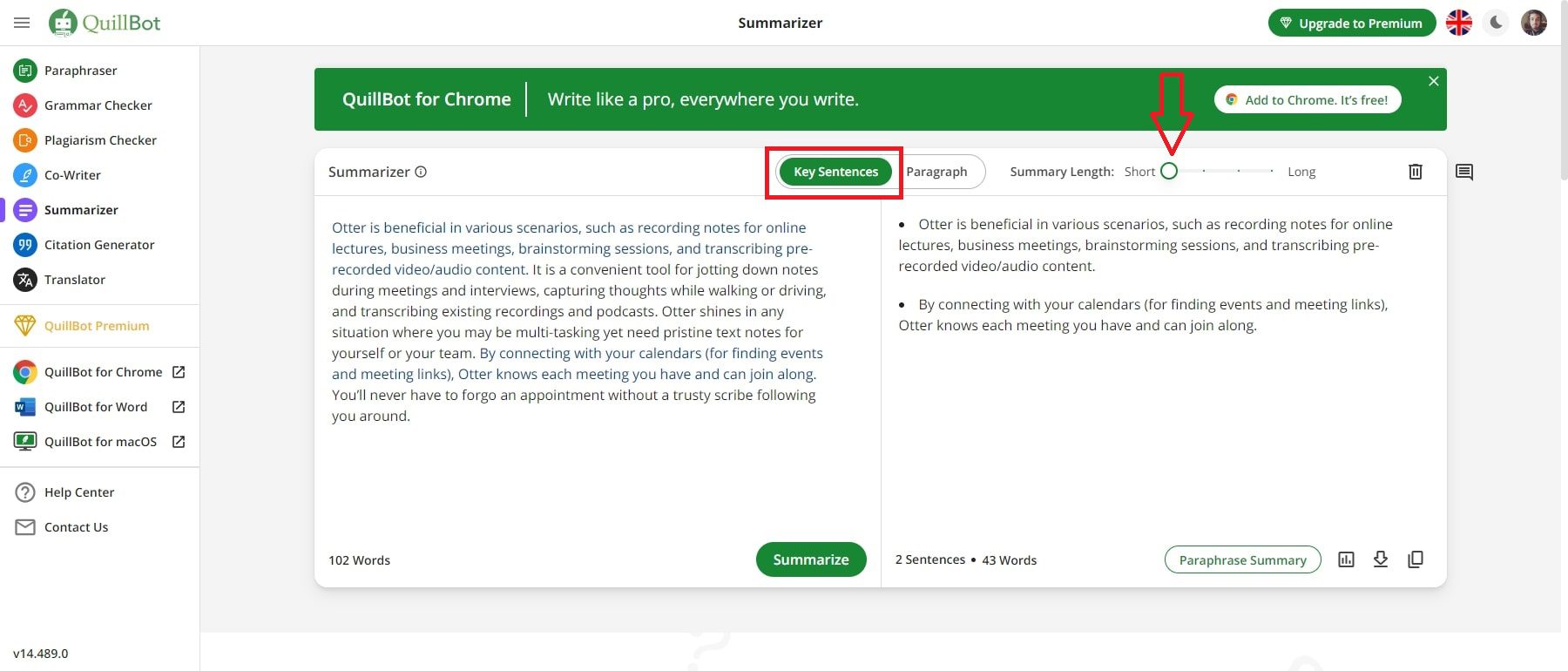

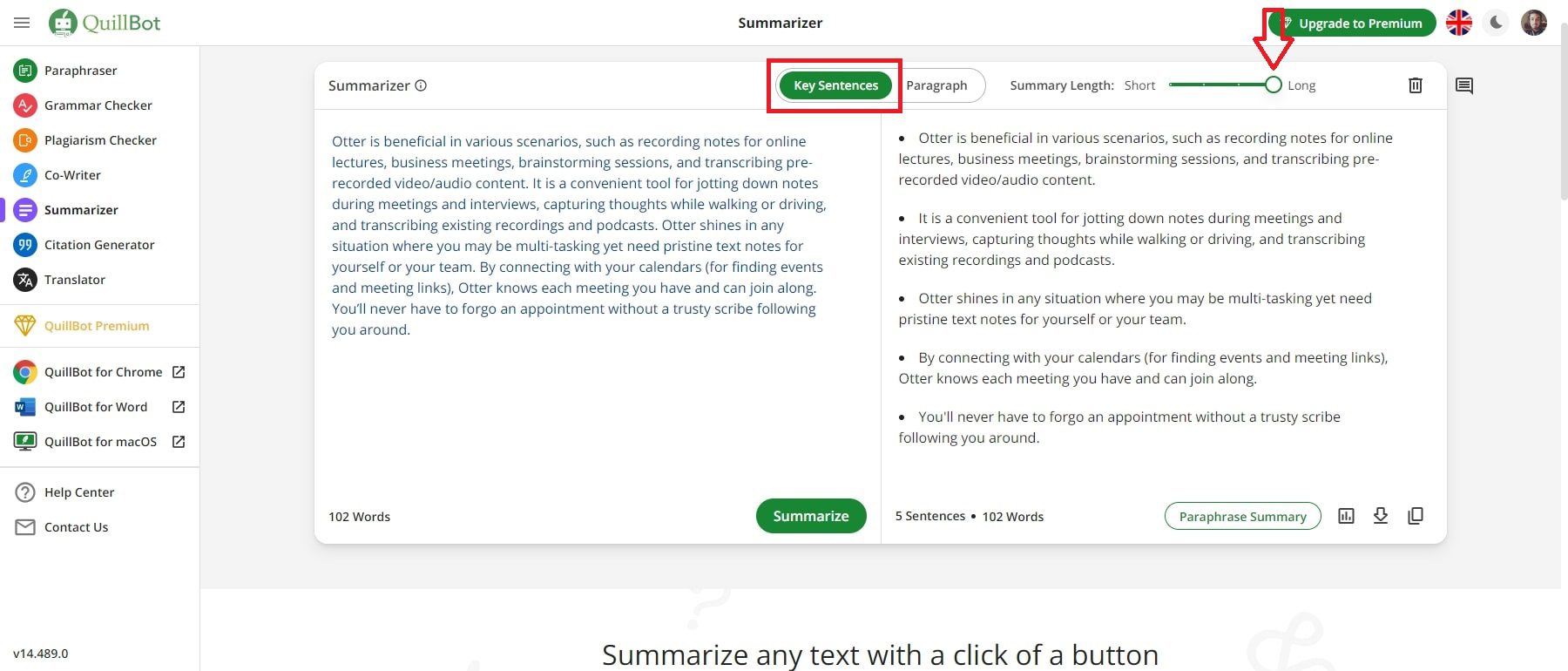

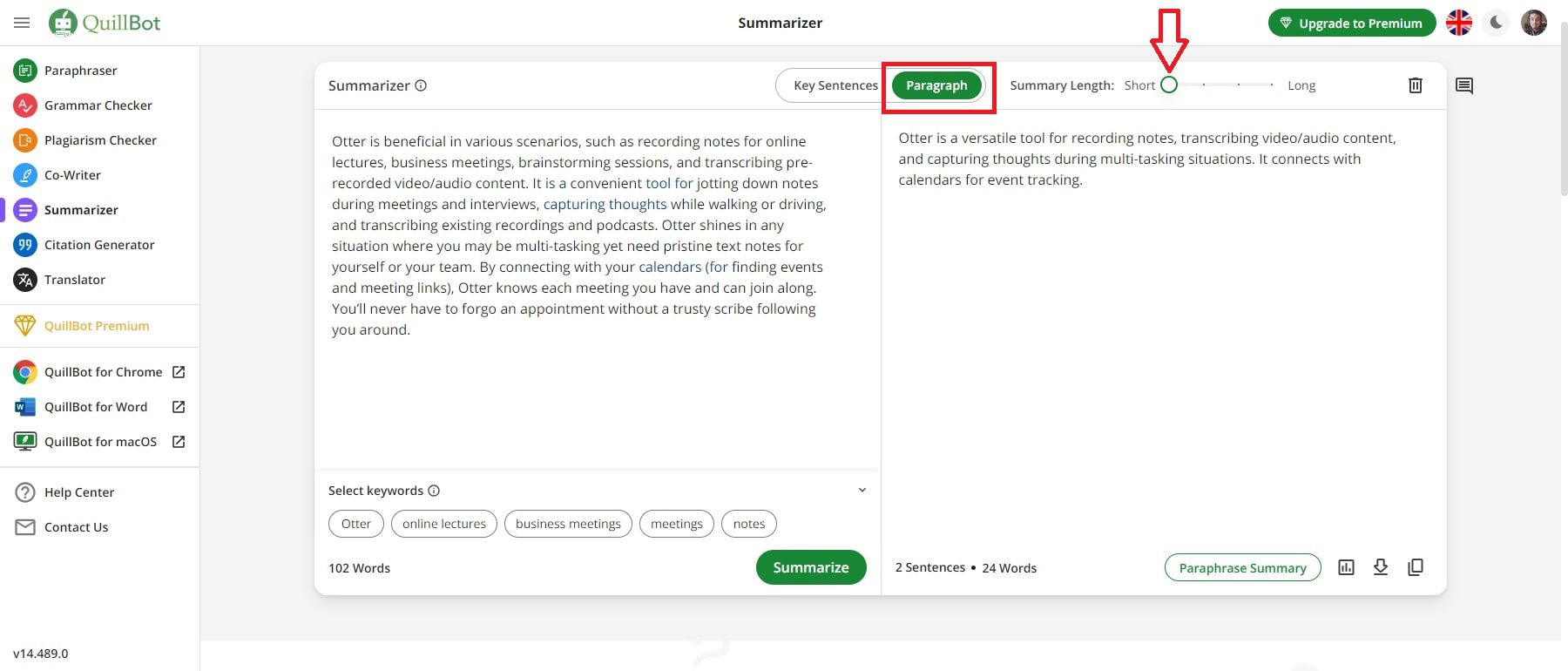

Sentence- and phrase-level analysis for better understanding

Color-coded highlights for easier interpretation


Streamlined and Reliable AI Detector Solution – Get Started for Free

Join the thriving community of users who rely on our advanced AI detector, and explore the capabilities that set our AI text detector apart.

Multilingual AI Detection

Our advanced AI detection models, trained on multiple languages, ensure accurate detection of AI-generated content.

Report generation

Effortlessly generate detailed reports based on AI detection results, providing comprehensive insights into the integrity of your content.

Text insights

Gain valuable insights into your text content with our AI-powered analysis, enabling you to make informed decisions and enhance your content strategy.

The Most Advanced AI Detection System

Our AI writing detector performs a holistic analysis of the text, aiming for high accuracy across all supported languages. The Detailed Insights feature highlights the factors that push a text toward an AI label, so you can focus your efforts when making your content AI plagiarism-free.

State-of-the-art

We use an ensemble of state-of-the-art AI systems, including very large language models trained on millions of samples. Paired with the most sophisticated language-understanding algorithms, this allows our AI detector to distinguish between AI- and human-written content with unprecedented accuracy.

Over 80 languages

At Isgen, we value transparency and equal access for all. Whereas other AI detectors on the market typically support only English or a very limited number of languages, with lower accuracy, our AI Content Detector supports over 80 languages.

Detailed Insights

With Detailed Insights, you know exactly which parts of the text, down to the sentence and phrase level, are perceived as AI-generated and how they affect the overall AI Score. This lets you focus your efforts when making your content AI plagiarism-free.

Deep Insights

Our Deep Insights pipeline is state-of-the-art in AI detection and model interpretability. This is particularly important for educators and content writers who need to pinpoint the parts of a text most likely to be AI-generated.

With deep insights, you know precisely which sentences in the whole text are AI, Mixed, or written by a Human.

Phrase-level insights let you explore even further, highlighting which parts of a sentence are likely contributing to a higher AI score.

Combined, these provide a granular view of detection results with color-coded highlights. The impact score list lets you easily scroll through the top AI or Mixed sentences.

Our models can detect text written by any closed- or open-source AI model, including GPT-4, ChatGPT, Claude AI, Gemini, Microsoft Copilot, LLaMA, Grok, and Mistral. Isgen reports an accuracy of 96.4% on a benchmark where the most widely used AI detector on the market scores 81.22%. Our AI detection tool has a false positive rate of nearly 0%, so you can rely on the model's results without worrying that your own writing will be wrongly labeled as AI. Our multilingual AI content detector beats every other detector out there by a huge margin: our study found most multilingual AI detectors on the market so inadequate and inconsistent that we could not even compare the results directly, as a random model would have performed better than those detectors.

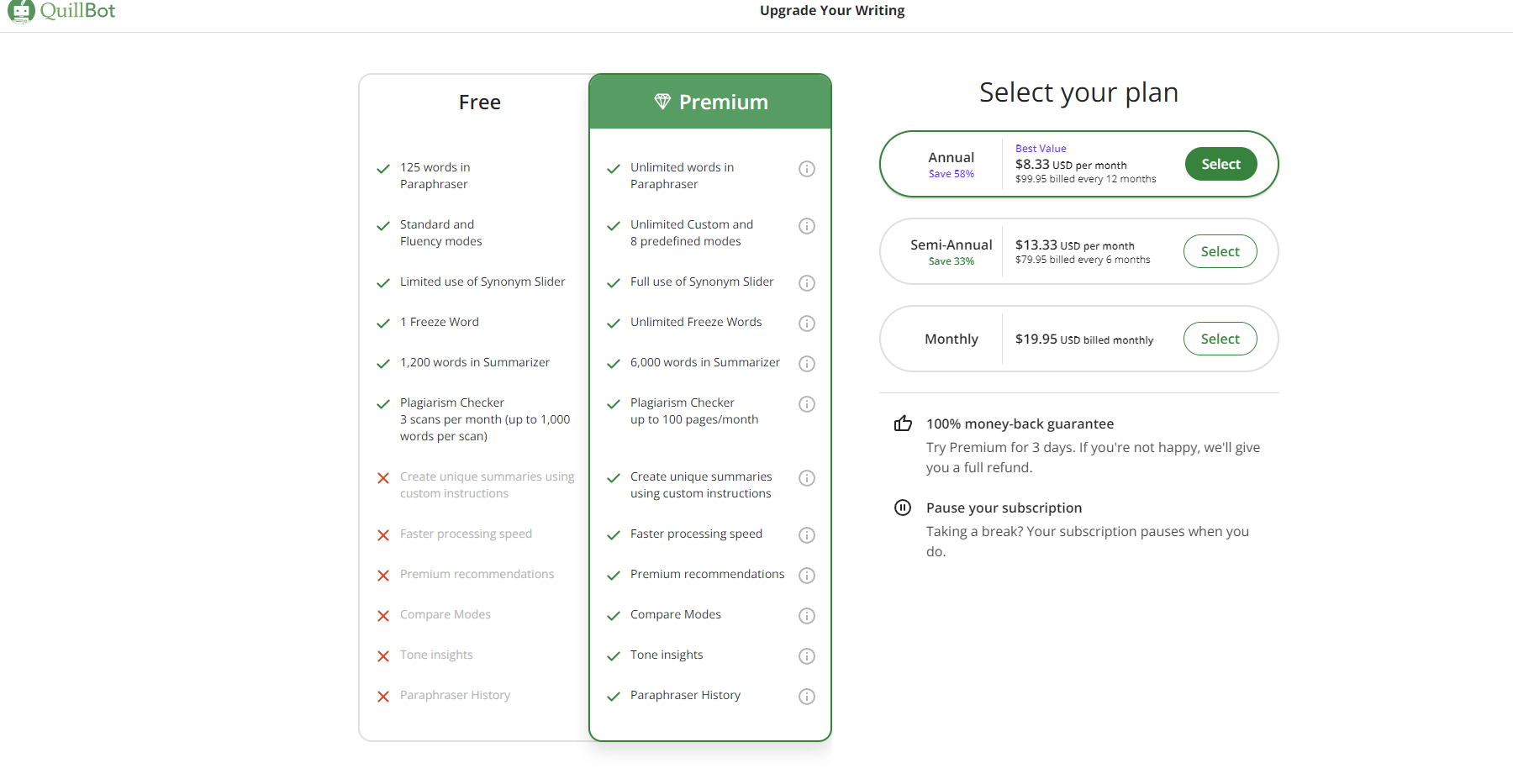

Choose your plan

Choose your ideal plan for effective AI content detection. Tailored for educators, students, and creators, our plans offer precision at great value. Start enhancing your content with global AI content detection today.

- $0 / month: 12,000 words per month; 50 calls per day; basic AI detection system; basic insights; single file upload.

- $8 / month: 150,000 words per month; 200 calls per day; advanced AI detection system; Detailed Insights (word level); batch file upload.

- $14 / month: 350,000 words per month; unlimited calls per day; priority support; early access to new/experimental features.

- $23 / month: 600,000 words per month.

Unlock more of Isgen

Integrate our most powerful AI Text Detector with your own tools and workflow. Get access to Detailed Insights, AI Removal Assistant, and more upcoming features through the API.

The first-ever AI-generated video and deepfake detector on the market. Sign up now to get early access.

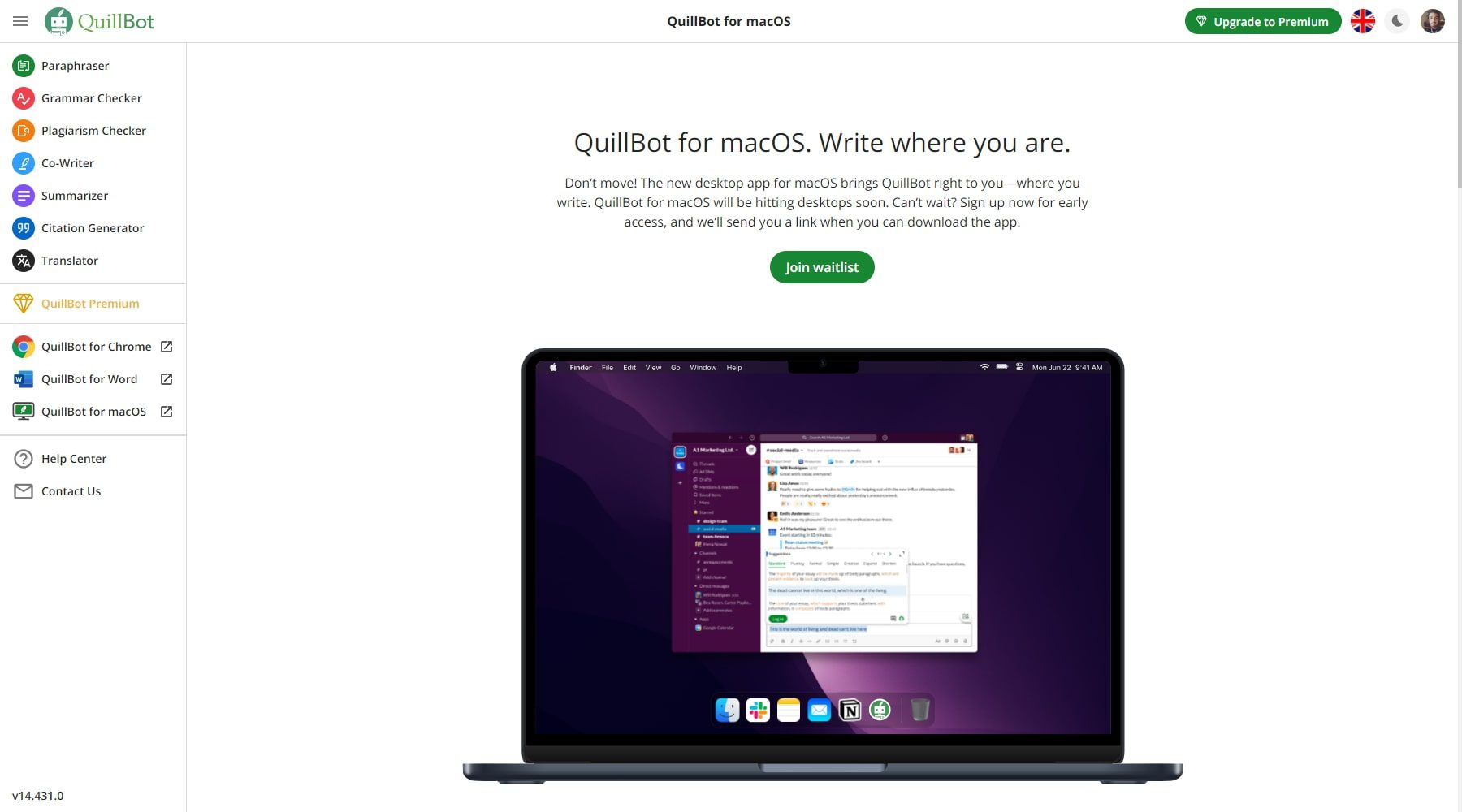

Use our AI text detector anywhere on the internet to stay certain of what you are reading and ensure authenticity and human authorship. You can also use it directly in Google Docs to see statistics about your writing as you go.

Frequently asked questions

What is Isgen?

Isgen is a multilingual-first artificial intelligence company dedicated to promoting transparency by enabling everyone to validate AI-generated content. We provide detailed insights for students, writers, and content developers, highlighting down to the word level why a particular piece could be perceived as AI-written, so they can evaluate their text and ensure human authorship. As a company, isgen.ai is committed to providing equal access to all, offering the most accurate multilingual AI detection solution available on the market today.

How do I detect AI-generated content?

You can assess AI-generated content using our AI Content Detector tool. To do this, simply paste the text directly into the input box, upload a file, or provide a URL to a web page you wish to scan for AI plagiarism. The tool will automatically detect multi-lingual content and use the relevant model for processing.

Can I use Isgen’s AI Detector for free?

Yes. You can check for AI-generated content using the free AI detector provided by Isgen.ai, which lets you try out our detection capabilities at no cost.

Are AI detectors 100% accurate?

Is GPT-4 content detectable?

Yes. Large language models (LLMs) such as ChatGPT tend to write in a characteristic manner that can be distinguished from human writing. Their text typically has low perplexity (each word is easy for a language model to predict) and low burstiness (that predictability varies little from sentence to sentence), and they tend to follow the same patterns and strategies to present ideas. These characteristics make their output easier to identify.
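Perplexity and burstiness can be made concrete. Perplexity is the exponential of the average negative log-probability a model assigns to each token; burstiness is often described as how much that value varies between sentences. The minimal sketch below assumes per-token probabilities have already been obtained from some language model (the numbers are invented) and uses the population standard deviation of sentence perplexities as one possible burstiness measure, not necessarily the one any particular detector uses.

```python
import math
import statistics


def perplexity(token_probs: list[float]) -> float:
    """exp of the mean negative log-probability of the tokens."""
    nll = [-math.log(p) for p in token_probs]
    return math.exp(sum(nll) / len(nll))


def burstiness(sentences: list[list[float]]) -> float:
    """Spread of per-sentence perplexity (one of several possible definitions)."""
    scores = [perplexity(s) for s in sentences]
    return statistics.pstdev(scores)


# Invented probabilities for illustration only.
flat = [[0.6, 0.5, 0.55], [0.6, 0.5, 0.55]]      # every sentence equally predictable
varied = [[0.6, 0.5, 0.55], [0.05, 0.2, 0.01]]   # one sentence far less predictable
print(burstiness(flat) < burstiness(varied))
```

Low perplexity together with low burstiness is the pattern the answer above associates with LLM output; a real detector would combine such signals with many others.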

How Does Your AI Generated Text Detector Work?

Our AI-generated text detector uses advanced machine learning algorithms and models trained on millions of samples. To reach a conclusion, the software examines sentence structure, word choice, and patterns. It then assigns each phrase in the input a probability, with a confidence level, of having been generated by AI or written by a human.

Does Isgen only detect ChatGPT output?

No. Isgen employs highly complex algorithms and compares the input text with millions of samples. Our AI writing detector has seen outputs from major large language models, including Mistral, Llama, Claude, Gemini/Bard, GPT-3.5, and GPT-4, and we continuously update our algorithm with data from newer models.

Can you detect a combination of human and AI-generated content?

Yes. Isgen's algorithm looks at the input text as a whole, as well as paragraph by paragraph, sentence by sentence, and even at the word level. To keep false positives as low as possible, we employ multiple techniques that examine the text from different perspectives, both semantically and syntactically. As a result, mixes of AI and human text can be detected.
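To illustrate how sentence-level scores could be rolled up into a document label that allows for mixed authorship, here is a hypothetical sketch. The `SentenceScore` type, the 0.5 cut-off, the 20%/80% shares, and the HUMAN/MIXED/AI labels are assumptions for illustration, not Isgen's published method. A `top_sentences` helper also mimics the idea of an impact-ranked list of sentences.

```python
from dataclasses import dataclass


@dataclass
class SentenceScore:
    text: str
    ai_probability: float  # assumed to come from some sentence-level model


def classify_document(sentences: list[SentenceScore], ai_cutoff: float = 0.5,
                      low: float = 0.2, high: float = 0.8) -> tuple[str, float]:
    """Label a document from the share of sentences judged AI-written."""
    if not sentences:
        raise ValueError("no sentences to classify")
    flagged = sum(1 for s in sentences if s.ai_probability >= ai_cutoff)
    share = flagged / len(sentences)
    if share >= high:
        label = "AI"
    elif share <= low:
        label = "HUMAN"
    else:
        label = "MIXED"  # a real mix of human and AI sentences
    return label, share


def top_sentences(sentences: list[SentenceScore], k: int = 3) -> list[SentenceScore]:
    """Sentences with the highest AI probability first (a stand-in for impact)."""
    return sorted(sentences, key=lambda s: s.ai_probability, reverse=True)[:k]
```

With two of four sentences above the cut-off, `classify_document` returns `("MIXED", 0.5)`; checking several granularities like this is what lets a detector report a mix rather than a single verdict.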

I am an educator and have found AI-Generated text in my student's work. Should I penalize the student based on the results?

AI detectors, including Isgen, are not 100% accurate, and false positives are possible. We have trained our models on millions of pieces of educational content, so the chance of misclassification is very low. However, if you suspect a false positive, you can do the following:

- Use our Deep and Detailed Insights tool to see which parts of the text were flagged as AI with the highest impact scores. Those parts alone may be what is pushing up the AI Score.

- To assess the student's understanding of their writing, give them a chance to show that the piece was not generated by AI. This could take the form of an in-person assessment, an explanation of how they came up with the ideas for the writing or compiled the piece, or the version history of the editor they used.

- The student may have used AI to develop some ideas or create a first draft. Our models analyze not only the wording (syntax) but also how an AI model would typically structure a given topic. So even if the student wrote most of the content, it could still be flagged as AI if it follows an AI model's suggested structure. In such cases, let the student explain their use of AI, and check whether it falls within the rules of the institution.

Do you store my data?

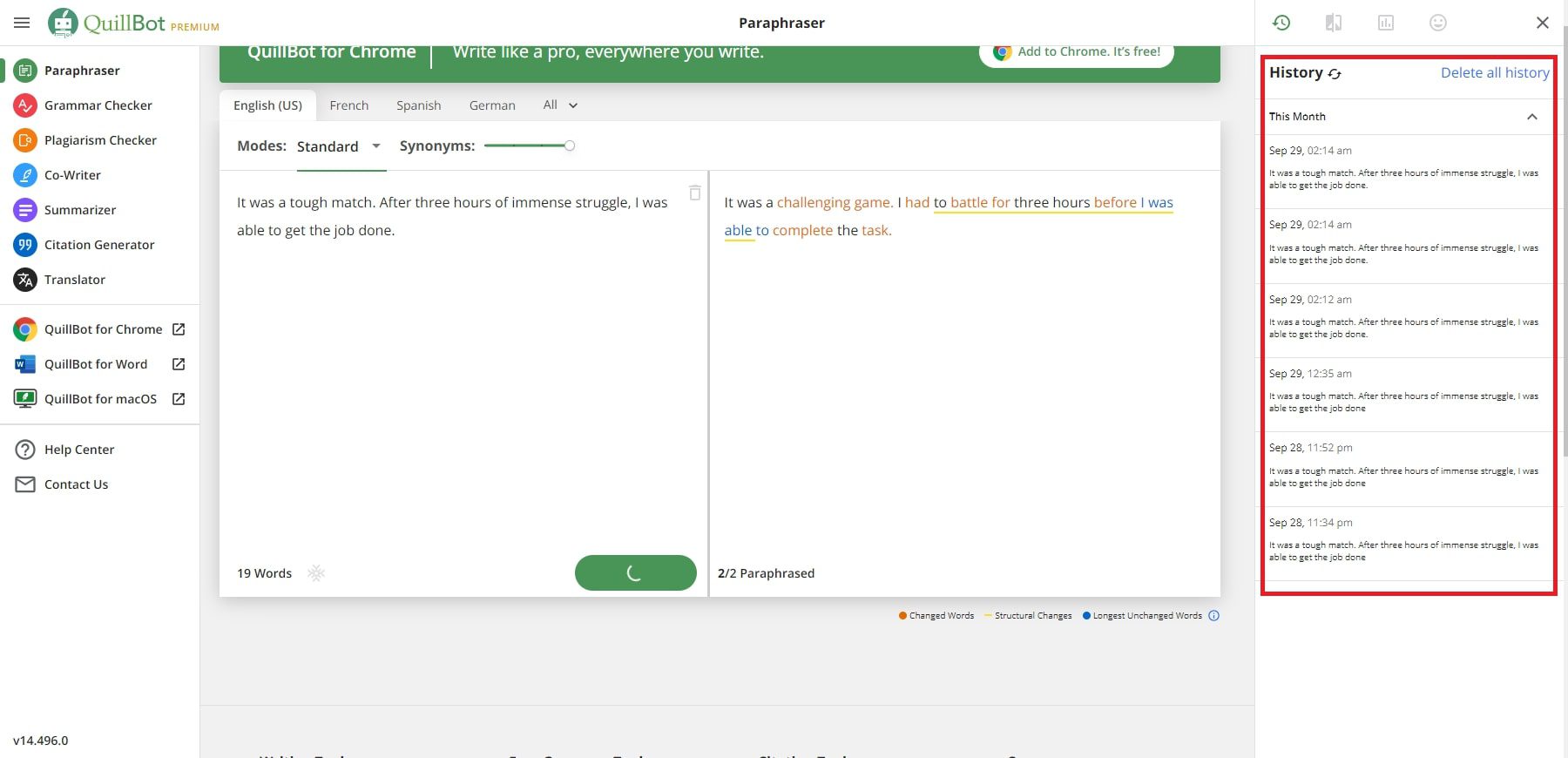

No, we do not store your input data, with one exception: input history is kept for users on a paid plan, and it can be deleted at any time.

Why Use an AI Detector?

An AI detection tool helps verify the authenticity and originality of published work. Generative AI and machine learning make it simple to fabricate text, which can lead to plagiarism and harm the author’s reputation at work and at school. An open AI detector such as Isgen assesses originality by examining whether text was written by a human or an AI. The software also offers advice on how to improve the text, which benefits writers and other content producers.

Supported languages

Isgen was developed using a multilingual-first approach. As a result, we offer exceptional accuracy in the following languages.

Support for more languages is being added


More Than an AI Detector: Preserve What's Human

Since launching its AI detector in 2022, GPTZero has incorporated the latest research in detecting ChatGPT, GPT-4, Google Gemini, LLaMA, and new AI models, and in investigating their sources.

Was this text written by a human or AI?

Our Commitment as the Leader in AI detection

Internationally recognized as the leader in AI detection, we set the standard for responding to AI-generated content with precision and reliability.

The First in Detection

GPTZero developed the first public open AI detector in 2022. We are first in terms of reliability and first to help when it matters.

Results You Can Trust

AI detection is more than accuracy. GPTZero was the first to introduce sentence highlighting for interpretability. Today, our AI report is unique in explaining why text was flagged as AI.

Best In-Class Benchmarking

GPTZero partners with Penn State for independent benchmarking that continues to show best-in-class accuracy and reliability across AI models.

Cutting Edge Research

Top PhD and AI researchers from Princeton, Caltech, MILA, Vector, and OpenAI work with GPTZero to keep your solution up to date.

Debiased AI Detection

Our research team worked on Stanford University AI data to address AI biases, launching the first de-biased AI detection model in July 2023.

Military Grade Security

We uphold the highest data security standards with SOC 2 and SOC 3 certifications, meeting rigorous security benchmarks.

Discover our Detection Dashboard

Our dashboard was developed specifically with educators' needs in mind. Access a premium model with the highest-grade fidelity and interpretability.

Access a deeper scan with unprecedented levels of AI text analysis.

Source scanning

Scan documents for plagiarism and run our AI copyright check.

Easily scan dozens of files at once, organize, save, and download reports.

Leading research in AI content detection modeling

Our AI detection model contains 7 components that process text to determine whether it was written by AI. We use a multi-step approach that aims for maximum accuracy with the fewest false positives. Our model specializes in detecting content from ChatGPT, GPT-4, Gemini, Claude, and LLaMA models.

Quantify AI with Deep Scan

Unlock More of GPTZero

Origin Chrome extension

Use our AI detection tool as you browse the internet for AI content. Create a Writing Report on Google Docs to view statistics about your writing.

Writing Report

With our Writing Report, you can see behind the scenes of a Google Doc, including writing statistics, AI content, and a video of your writing process.

AI detection API

We built an easy-to-use API for organizations to detect AI content. Integrate GPTZero’s AI detection abilities into your own tools and workflow.

AI Text Detection and Analysis Trusted by Leading Organizations

GPTZero reviews

GPTZero was the only consistent performer, classifying AI-generated text correctly. As for the rest … not so much.

GPTZero has been incomparably more accurate than any of the other AI checkers. For me, it’s the best solution to build trust with my clients.

This tool is a magnifying glass to help teachers get a closer look behind the scenes of a document, ultimately creating a better exchange of ideas that can help kids learn.

The granular detail provided by GPTZero allows administrators to observe AI usage across the institution. This data is helping guide us on what type of education, parameters, and policies need to be in place to promote an innovative and healthy use of AI.

After talking to the class, each student we compiled with GPTZero as possibly using AI ended up telling us they did, which made us extremely confident in GPTZero’s capabilities.

Sign up for GPTZero. Its feedback aligns well with my sense of what is going on in the writing - almost line-for-line.

I'm a huge fan of the writing reports that let me verify my documents are human-written. The writing video, in particular, is a great way to visualize the writing process!

Excellent chrome extension. I ran numerous tests on human written content and the results were 100% accurate.

Outstanding! This is an extraordinary tool to not only assess the end result but to view the real-time process it took to write the document.

GPTZero is the best AI detection tool for teachers and educators.

General FAQs about our AI Detector

Everything you need to know about GPTZero and our ChatGPT detector. Can’t find an answer? You can talk to our customer service team.

What is GPTZero?

GPTZero is the leading AI detector for checking whether a document was written by a large language model such as ChatGPT. GPTZero detects AI on sentence, paragraph, and document level. Our model was trained on a large, diverse corpus of human-written and AI-generated text, with a focus on English prose. To date, GPTZero has served over 2.5 million users around the world, and works with over 100 organizations in education, hiring, publishing, legal, and more.

How do I use GPTZero?

Simply paste in the text you want to check, or upload your file, and we'll return an overall detection for your document, as well as sentence-by-sentence highlighting of sentences where we've detected AI. Unlike other detectors, we help you interpret the results with a description of the result, instead of just returning a number.

To use our AI detector on larger texts or a batch of files, sign up for a free account on our Dashboard.

If you want to run the AI detector as you browse, you can download our Chrome extension, Origin, which scans the entire page in one click.

When should I use GPTZero?

Our users have seen the use of AI-generated text proliferate into education, certification, hiring and recruitment, social writing platforms, disinformation, and beyond. We've created GPTZero as a tool to highlight the possible use of AI in writing text. In particular, we focus on classifying AI use in prose.

Overall, our classifier is intended to be used to flag situations in which a conversation can be started (for example, between educators and students) to drive further inquiry and spread awareness of the risks of using AI in written work.

Does GPTZero only detect ChatGPT outputs?

No, GPTZero works robustly across a range of AI language models, including but not limited to ChatGPT, GPT-4, GPT-3, GPT-2, LLaMA, and AI services based on those models.

Why GPTZero over other detection models?

- GPTZero is the most accurate AI detector across use-cases, verified by multiple independent sources, including TechCrunch, which called us the best and most reliable AI detector after testing seven others.

- GPTZero builds and constantly improves our own technology. In our competitor analysis, we found that not only does GPTZero perform better, some competitor services are actually just forwarding the outputs of free, open-source models without additional training.

- In contrast to many other models, GPTZero is fine-tuned for student writing and academic prose, which has brought large accuracy improvements for this use case.

What are the limitations of the classifier?

The nature of AI-generated content is changing constantly, so these results should not be used to punish students. We recommend that educators use our behind-the-scenes Writing Reports as part of a holistic assessment of student work. There are always edge cases in which AI text is classified as human and human text is classified as AI. Rather than punishing students, we recommend that educators give students the opportunity to demonstrate their understanding in a controlled environment and craft assignments that cannot be solved with AI.

The accuracy of our model increases as more text is submitted to the model. As such, the accuracy of the model on the document-level classification will be greater than the accuracy on the paragraph-level, which is greater than the accuracy on the sentence level.

The accuracy of our model also increases for text similar in nature to our dataset. While we train on a highly diverse set of human and AI-generated text, the majority of our dataset is in English prose, written by adults.

Our classifier is not trained to identify AI-generated text that has been heavily modified after generation (although we estimate this is currently a minority of AI-generation uses).

Currently, our classifier can sometimes flag other machine-generated or highly procedural text as AI-generated, so it should be used on the more descriptive portions of a text.

What can I do as an educator to reduce the risk of AI misuse?

- Help students understand the risks of using AI in their work (to learn more, see this article) and the value of learning to express themselves. For example, in real-life, real-time collaboration, pitching, and debate, how does your class improve their ability to communicate when AI is not available?

- Ask students to write about personal experiences and how they relate to the text, or reflect on their learning experience in your class.

- Ask students to critique the default answer given by ChatGPT to your question.

- Require that students cite real, primary sources of information to back up their specific claims, or ask them to write about recent events.

- Assess students based on a live discussion with their peers, and use peer assessment tools (such as the one provided by our partner, Peerceptiv).

- Ask students to complete their assignments in class or in an interactive way, and shift lectures to be take-home.

- Ask students to produce multiple drafts of their work that they can revise as peers or through the educator, to help students understand that assignments are meant to teach a learning process.

- Ask students to produce work in a medium that is difficult to generate, such as PowerPoint presentations, visual displays, videos, or audio recordings.

- Set expectations for your students that you will be checking the work through an AI detector like GPTZero, to deter misuse of AI.

I'm an educator who has found AI-generated text by my students. What do I do?

Firstly, at GPTZero, we don't believe that any AI detector is perfect. There are always edge cases in which AI text is classified as human and human text is classified as AI. Nonetheless, we recommend that educators do the following when they get a positive detection:

- Ask students to demonstrate their understanding in a controlled environment, whether that is through an in-person assessment, or through an editor that can track their edit history (for instance, using our Writing Reports through Google Docs). Check out our list of several recommendations on types of assignments that are difficult to solve with AI.

- Ask the student if they can produce artifacts of their writing process, whether it is drafts, revision histories, or brainstorming notes. For example, if the editor they used to write the text has an edit history (such as Google Docs), and it was typed out with several edits over a reasonable period of time, it is likely the student work is authentic. You can use GPTZero's Writing Reports to replay the student's writing process, and view signals that indicate the authenticity of the work.

- See if there is a history of AI-generated text in the student's work. We recommend looking for a long-term pattern of AI use, as opposed to a single instance, in order to determine whether the student is using AI.

What data did you train your model on?

Our model is trained on millions of documents spanning various domains of writing, including creative writing, scientific writing, blogs, news articles, and more. We test our models on a never-before-seen set of human and AI articles from a section of our large-scale dataset, in addition to a smaller set of challenging articles that are outside its training distribution.

How do I use and interpret the results from your API?

To see the full schema and try examples yourself, check out our API documentation.

Our API returns a document_classification field, which indicates the most likely classification of the document. The possible values are HUMAN_ONLY, MIXED, and AI_ONLY. We also provide a probability for each classification in the class_probabilities field, whose keys are human, ai, and mixed. To get the probability of the most likely classification, use the predicted_class field to look it up in class_probabilities. The class probability corresponding to the predicted class can be interpreted as the chance that the detector is correct in its classification: for example, 90% means that on similar documents our detector is correct 90% of the time. Lastly, each prediction comes with a confidence_category field, which can be high, medium, or low. Confidence categories are tuned so that when confidence_category is high, 99.1% of human articles are classified as human and 98.4% of AI articles are classified as AI.
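A minimal sketch of reading these fields in Python follows. The payload is invented, and the function assumes the four fields named above sit together in one document object; the real response may nest them differently, so check the API documentation before relying on this structure.

```python
import json

DOCUMENT_CLASSES = {"HUMAN_ONLY", "MIXED", "AI_ONLY"}


def summarize_detection(document: dict) -> str:
    """Summarize one document's detection fields in a single line.

    Assumes `document` holds the four fields named in the FAQ at its top
    level; the surrounding response structure is not shown here.
    """
    label = document["document_classification"]
    if label not in DOCUMENT_CLASSES:
        raise ValueError(f"unexpected classification: {label!r}")
    predicted = document["predicted_class"]            # "human", "ai" or "mixed"
    probability = document["class_probabilities"][predicted]
    confidence = document["confidence_category"]       # "high", "medium" or "low"
    return f"{label}: {probability:.0%} likely correct ({confidence} confidence)"


# Invented example payload, for illustration only.
sample = json.loads("""
{
  "document_classification": "AI_ONLY",
  "predicted_class": "ai",
  "class_probabilities": {"human": 0.04, "ai": 0.93, "mixed": 0.03},
  "confidence_category": "high"
}
""")
print(summarize_detection(sample))
```

Using predicted_class as the key keeps the reported probability tied to the label the detector actually chose, rather than taking the largest value by hand.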

Additionally, we highlight sentences that have been detected as written by AI. API users can access this highlighting through the highlight_sentence_for_ai field. The sentence-level classification should not be used on its own to conclude that an essay contains AI-generated text (such as ChatGPT plagiarism). Rather, when a document receives a MIXED or AI_ONLY classification, the highlighted sentences indicate where in the document we believe this occurred.
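Following that advice, a client could show highlighted sentences only when the document-level label is MIXED or AI_ONLY. The sketch below assumes, hypothetically, that the document carries a "sentences" list whose items have a "sentence" string and a boolean highlight_sentence_for_ai flag; that layout is an assumption, not confirmed by the text above.

```python
def highlighted_sentences(document: dict) -> list[str]:
    """Return AI-highlighted sentences, but only for MIXED or AI_ONLY documents.

    Hypothetical layout: document["sentences"] is a list of dicts, each with
    a "sentence" string and a boolean "highlight_sentence_for_ai" field.
    """
    if document.get("document_classification") not in {"MIXED", "AI_ONLY"}:
        # Sentence highlights alone should not be read as evidence of AI use.
        return []
    return [
        item["sentence"]
        for item in document.get("sentences", [])
        if item.get("highlight_sentence_for_ai")
    ]


example = {
    "document_classification": "MIXED",
    "sentences": [
        {"sentence": "I spent the summer in Lisbon.", "highlight_sentence_for_ai": False},
        {"sentence": "In conclusion, travel broadens horizons.", "highlight_sentence_for_ai": True},
    ],
}
print(highlighted_sentences(example))
```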

Are you storing data from API calls?

No. We do not store or collect the documents passed into any calls to our API; we chose to err on the side of caution with data from organizations using our API. However, we do store inputs from calls made from our dashboard. This data is used only in aggregate by GPTZero to further improve the service for our users. You can refer to our privacy policy for more details.

How do I cite GPTZero for an academic paper?

You can use the following BibTeX citation:

MIT Technology Review


How to spot AI-generated text

The internet is increasingly awash with text written by AI software. We need new tools to detect it.

By Melissa Heikkilä

This sentence was written by an AI—or was it? OpenAI’s new chatbot, ChatGPT, presents us with a problem: How will we know whether what we read online is written by a human or a machine?

Since it was released in late November, ChatGPT has been used by over a million people. It has the AI community enthralled, and it is clear the internet is increasingly being flooded with AI-generated text. People are using it to come up with jokes, write children’s stories, and craft better emails.

ChatGPT is OpenAI’s spin-off of its large language model GPT-3, which generates remarkably human-sounding answers to questions that it’s asked. The magic—and danger—of these large language models lies in the illusion of correctness. The sentences they produce look right—they use the right kinds of words in the correct order. But the AI doesn’t know what any of it means. These models work by predicting the most likely next word in a sentence. They haven’t a clue whether something is correct or false, and they confidently present information as true even when it is not.
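The idea of "predicting the most likely next word" can be shown with a toy model. The sketch below counts which word follows which in a tiny training text (a bigram model, vastly simpler than GPT-3, which uses a neural network over much longer contexts) and always appends the most frequent continuation. Nothing in it checks whether the output is true, which is the point made above.

```python
from collections import Counter, defaultdict


def train_bigrams(text: str) -> dict[str, Counter]:
    """Count, for every word, which words follow it in the training text."""
    words = text.lower().split()
    follows: dict[str, Counter] = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows


def generate(follows: dict[str, Counter], start: str, length: int) -> str:
    """Greedily append the most frequent next word, up to `length` times."""
    out = [start]
    for _ in range(length):
        options = follows.get(out[-1])
        if not options:
            break  # no known continuation for this word
        out.append(options.most_common(1)[0][0])
    return " ".join(out)


model = train_bigrams("the cat sat on the mat . the cat sat down .")
print(generate(model, "the", 2))
```

Because "cat" follows "the" most often and "sat" always follows "cat", the call produces "the cat sat": fluent-looking output driven purely by frequency.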

In an already polarized, politically fraught online world, these AI tools could further distort the information we consume. If they are rolled out into the real world in real products, the consequences could be devastating.

We’re in desperate need of ways to differentiate between human- and AI-written text in order to counter potential misuses of the technology, says Irene Solaiman, policy director at AI startup Hugging Face, who used to be an AI researcher at OpenAI and studied AI output detection for the release of GPT-3’s predecessor GPT-2.

New tools will also be crucial to enforcing bans on AI-generated text and code, like the one recently announced by Stack Overflow, a website where coders can ask for help. ChatGPT can confidently regurgitate answers to software problems, but it’s not foolproof. Getting code wrong can lead to buggy and broken software, which is expensive and potentially chaotic to fix.

A spokesperson for Stack Overflow says that the company’s moderators are “examining thousands of submitted community member reports via a number of tools including heuristics and detection models” but would not go into more detail.

In reality, detecting AI-generated text is incredibly difficult, and the ban is likely almost impossible to enforce.

Today’s detection tool kit

There are various ways researchers have tried to detect AI-generated text. One common method is to use software to analyze different features of the text—for example, how fluently it reads, how frequently certain words appear, or whether there are patterns in punctuation or sentence length.
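As a rough illustration of such surface features, the sketch below computes mean sentence length, the spread of sentence lengths, and punctuation marks per word. It is a hypothetical example of the feature-extraction step only; in practice, features like these would be fed to a trained classifier, and fluency in particular needs a language model rather than simple counts.

```python
import re
import statistics


def style_features(text: str) -> dict[str, float]:
    """Compute a few simple surface features of the kind detectors examine."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    lengths = [len(s.split()) for s in sentences]        # words per sentence
    words = re.findall(r"[A-Za-z']+", text)
    punctuation = re.findall(r"[,;:!?.\-]", text)
    return {
        "mean_sentence_length": statistics.mean(lengths) if lengths else 0.0,
        "sentence_length_stdev": statistics.pstdev(lengths) if lengths else 0.0,
        "punctuation_per_word": len(punctuation) / len(words) if words else 0.0,
    }


print(style_features("One two three. Four five six."))
```

A very even sentence length (a standard deviation near zero) is one of the patterns the paragraph above alludes to, though no single feature is conclusive.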

“If you have enough text, a really easy cue is the word ‘the’ occurs too many times,” says Daphne Ippolito, a senior research scientist at Google Brain, the company’s research unit for deep learning.

Because large language models work by predicting the next word in a sentence, they are more likely to use common words like “the,” “it,” or “is” instead of wonky, rare words. This is exactly the kind of text that automated detector systems are good at picking up, Ippolito and a team of researchers at Google found in research they published in 2019.

But Ippolito’s study also showed something interesting: the human participants tended to think this kind of “clean” text looked better and contained fewer mistakes, and thus that it must have been written by a person.

In reality, human-written text is riddled with typos and is incredibly variable, incorporating different styles and slang, while “language models very, very rarely make typos. They’re much better at generating perfect texts,” Ippolito says.

“A typo in the text is actually a really good indicator that it was human written,” she adds.

Large language models themselves can also be used to detect AI-generated text. One of the most successful ways to do this is to retrain the model on some texts written by humans and others created by machines, so it learns to differentiate between the two, says Muhammad Abdul-Mageed, who is the Canada Research Chair in natural-language processing and machine learning at the University of British Columbia and has studied detection.
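Retraining a large language model is beyond a short example. The underlying idea (learn from labeled human and machine text, then classify new text) can still be sketched with a much simpler, swapped-in technique: a multinomial naive Bayes classifier over word counts. This is not the method Abdul-Mageed describes. The class name `NaiveBayesDetector` and the four training sentences are invented for illustration, and a usable detector would need thousands of labeled examples.

```python
import math
import re
from collections import Counter

def tokenize(text: str) -> list[str]:
    return re.findall(r"[a-z']+", text.lower())

class NaiveBayesDetector:
    """Two-class multinomial naive Bayes with add-one (Laplace) smoothing."""

    def fit(self, texts, labels):
        self.labels = sorted(set(labels))
        doc_counts = Counter(labels)
        # Log prior: how common each label is in the training set.
        self.log_prior = {lab: math.log(doc_counts[lab] / len(labels)) for lab in self.labels}
        self.word_counts = {lab: Counter() for lab in self.labels}
        for text, lab in zip(texts, labels):
            self.word_counts[lab].update(tokenize(text))
        self.vocab = set().union(*self.word_counts.values())
        self.totals = {lab: sum(c.values()) for lab, c in self.word_counts.items()}
        return self

    def predict(self, text: str) -> str:
        v = len(self.vocab)
        scores = {}
        for lab in self.labels:
            score = self.log_prior[lab]
            for word in tokenize(text):
                # Smoothing keeps unseen words from driving the probability to zero.
                score += math.log((self.word_counts[lab][word] + 1) / (self.totals[lab] + v))
            scores[lab] = score
        return max(scores, key=scores.get)

# Four made-up training sentences; a real detector needs far more data.
texts = [
    "honestly i kinda loved it lol",
    "ugh the bus was late again",
    "It is important to note that the topic is complex.",
    "In conclusion, it is essential to consider the various factors.",
]
labels = ["human", "human", "ai", "ai"]
detector = NaiveBayesDetector().fit(texts, labels)
print(detector.predict("It is essential to note the factors."))
print(detector.predict("ugh i loved the bus lol"))
```

The design mirrors the quoted approach only at a high level. A fine-tuned language model learns far richer cues than word counts, which is why it tends to outperform a classifier like this one.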

Scott Aaronson, a computer scientist at the University of Texas who is on secondment as a researcher at OpenAI for a year, has meanwhile been developing watermarks for longer pieces of text generated by models such as GPT-3: “an otherwise unnoticeable secret signal in its choices of words, which you can use to prove later that, yes, this came from GPT,” he writes in his blog.

A spokesperson for OpenAI confirmed that the company is working on watermarks, and said its policies state that users should clearly indicate text generated by AI “in a way no one could reasonably miss or misunderstand.”
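The source does not spell out how Aaronson's scheme works, so the Python sketch below swaps in a simplified form of a different, publicly described idea, often called a "green list" watermark. A secret key and the previous token pseudorandomly mark about half of the possible next tokens as "green". The generator quietly prefers green tokens, and a detector holding the key checks whether a text contains far more green tokens than chance would predict. The key, the 50-word vocabulary, and the first-green-candidate rule are all assumptions for illustration.

```python
import hashlib
import math

GAMMA = 0.5  # assumed share of candidate tokens that count as "green" at each step
KEY = "hypothetical-secret-key"  # stands in for a key only the model provider knows

def is_green(prev_token: str, token: str, key: str = KEY) -> bool:
    """Pseudorandomly decide whether `token` is green, seeded by the previous token and the key."""
    digest = hashlib.sha256(f"{key}|{prev_token}|{token}".encode()).digest()
    # Interpret the first 8 bytes as a number in [0, 1) and compare with GAMMA.
    return int.from_bytes(digest[:8], "big") / 2**64 < GAMMA

def pick_token(prev_token: str, candidates: list[str], key: str = KEY) -> str:
    """Toy generation step: take the first green candidate if there is one."""
    for candidate in candidates:
        if is_green(prev_token, candidate, key):
            return candidate
    return candidates[0]

def watermark_z_score(tokens: list[str], key: str = KEY) -> float:
    """How far the green-token count sits above what unwatermarked text gives by chance."""
    n = len(tokens) - 1  # number of (previous, current) pairs
    if n <= 0:
        return 0.0
    green = sum(is_green(p, t, key) for p, t in zip(tokens, tokens[1:]))
    return (green - GAMMA * n) / math.sqrt(n * GAMMA * (1 - GAMMA))

vocab = [f"w{i}" for i in range(50)]
watermarked = ["<s>"]
for step in range(200):
    # Pretend these are the model's 50 candidates, best first.
    candidates = vocab[step % 50:] + vocab[:step % 50]
    watermarked.append(pick_token(watermarked[-1], candidates))
plain = ["<s>"] + [vocab[(7 * i) % 50] for i in range(200)]

print(round(watermark_z_score(watermarked), 1))  # large: nearly every token is green
print(round(watermark_z_score(plain), 1))        # no bias toward green, so typically small
```

The z-score grows with the square root of the text length, which is one reason watermarks are easier to verify in longer pieces of text. Paraphrasing that replaces many tokens would dilute the signal.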

But these technical fixes come with big caveats. Most of them don’t stand a chance against the latest generation of AI language models, as they are built on GPT-2 or other earlier models. Many of these detection tools work best when there is a lot of text available; they will be less effective in some concrete use cases, like chatbots or email assistants, which rely on shorter conversations and provide less data to analyze. And using large language models for detection also requires powerful computers and access to the AI model itself, which tech companies don’t allow, Abdul-Mageed says.

The bigger and more powerful the model, the harder it is to build AI models to detect what text is written by a human and what isn’t, says Solaiman.

“What’s so concerning now is that [ChatGPT has] really impressive outputs. Detection models just can’t keep up. You’re playing catch-up this whole time,” she says.

Training the human eye

There is no silver bullet for detecting AI-written text, says Solaiman. “A detection model is not going to be your answer for detecting synthetic text in the same way that a safety filter is not going to be your answer for mitigating biases,” she says.

To have a chance of solving the problem, we’ll need improved technical fixes and more transparency around when humans are interacting with an AI, and people will need to learn to spot the signs of AI-written sentences.

“What would be really nice to have is a plug-in to Chrome or to whatever web browser you’re using that will let you know if any text on your web page is machine generated,” Ippolito says.

Some help is already out there. Researchers at Harvard and IBM developed a tool called Giant Language Model Test Room (GLTR), which supports humans by highlighting passages that might have been generated by a computer program.
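GLTR's core idea is to ask, for each word, how highly a language model ranked it among possible next words, and to color the word by that rank. The Python sketch below reproduces only the bucketing step. The real tool gets ranks from GPT-2 given the preceding context. Here a toy unigram frequency table stands in for the model, and the 10/100/1000 cut-offs and color names are assumed to mirror GLTR's display.

```python
from collections import Counter

# Assumed rank cut-offs and colors: top-10, top-100, top-1000, everything else.
BUCKETS = [(10, "green"), (100, "yellow"), (1000, "red")]

def bucket(rank: int) -> str:
    """Map a 1-based model rank to a color bucket."""
    for limit, color in BUCKETS:
        if rank <= limit:
            return color
    return "purple"

def unigram_ranks(reference_text: str) -> dict:
    """Toy stand-in for a language model: rank words by frequency in a reference text."""
    counts = Counter(reference_text.lower().split())
    return {word: i + 1 for i, (word, _) in enumerate(counts.most_common())}

def highlight(text: str, ranks: dict, unknown_rank: int = 10**6):
    """Return (word, color) pairs plus the share of words in each bucket."""
    colored = [(w, bucket(ranks.get(w, unknown_rank))) for w in text.lower().split()]
    shares = Counter(color for _, color in colored)
    total = len(colored) or 1
    return colored, {c: shares[c] / total for c in ("green", "yellow", "red", "purple")}

ranks = unigram_ranks("the cat sat on the mat and the dog sat on the rug")
colored, shares = highlight("the cat sat on a zebra", ranks)
print(colored)
print(shares)
```

A passage dominated by green words is the pattern GLTR draws a reader's attention to; the human still makes the final call.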

But AI is already fooling us. Researchers at Cornell University found that people rated fake news articles generated by GPT-2 as credible about 66% of the time.

Another study found that untrained humans were able to correctly spot text generated by GPT-3 only at a level consistent with random chance.

The good news is that people can be trained to be better at spotting AI-generated text, Ippolito says. She built a game to test how many sentences a computer can generate before a player catches on that it’s not human, and found that people got gradually better over time.

“If you look at lots of generative texts and you try to figure out what doesn’t make sense about it, you can get better at this task,” she says. One way is to pick up on implausible statements, like the AI saying it takes 60 minutes to make a cup of coffee.


AI Detector

Paste your content below, and we’ll tell you if any of it has been AI-generated within seconds with exceptional accuracy.

Certain platforms, like Grammarly, use ChatGPT and other genAI models for key functionalities, so content produced with those features can be flagged as potential AI content.

An award-winning enterprise AI detector that has you fully covered.

With over 99% accuracy, model coverage that includes ChatGPT, Gemini, and Claude, and detection in 30 languages, you’re fully covered with the market’s most comprehensive AI detector and checker.

Combined with the award-winning Plagiarism Detector, Codeleaks Source Code Detector, and the all-new Writing Assistant, it’s the only full-spectrum suite dedicated to creating truly original, error-free content.

AI Detection Nearly A Decade In the Making

Since 2015, the Copyleaks AI engine has been learning how humans write by collecting and analyzing trillions of pages from user-submitted inputs and more.

We Are Looking For Human Content, Not AI

Our AI engine has been trained to learn the patterns of human writing. Therefore, when the known patterns of human writing are disrupted, our AI flags it as potential AI content.

No one wants to worry about false positives that can lead to false accusations. We tested over 20k human-written papers, and the false positive rate was 0.2%, the lowest of any AI detector available.
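Rates like this are easier to judge as counts. The short Python sketch below turns the claimed 0.2% false positive rate into an expected number of wrongly flagged papers, and into the chance that a writer who submits ten human-written essays is flagged at least once. Treating each check as independent is a simplification made here, not a claim from the vendor.

```python
def expected_false_flags(n_human_texts: int, false_positive_rate: float) -> float:
    """Expected number of human-written texts that a detector wrongly flags as AI."""
    return n_human_texts * false_positive_rate

def chance_of_any_false_flag(n_texts: int, false_positive_rate: float) -> float:
    """Probability that at least one of n human-written texts is flagged,
    assuming (as a simplification) that each check is independent."""
    return 1 - (1 - false_positive_rate) ** n_texts

print(expected_false_flags(20_000, 0.002))           # about 40 papers
print(round(chance_of_any_false_flag(10, 0.002), 3)) # about 0.02
```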

In addition, we are continually testing our AI Model and retraining it with new data and feedback, helping to improve accuracy.

Get Started Today With the AI Detector

Key Features

Complete AI Model Coverage

AI detection across all AI models, including ChatGPT, Gemini, and Claude. Plus, once newer models are released, we can automatically detect them.

Unprecedented Speed and Accuracy

The AI Detector has over 99% overall accuracy and a 0.2% false positive rate, the lowest of any platform.

Plagiarism and Paraphrased AI Detection

The AI Detector is the only platform with a high confidence rate in detecting AI-generated text that has been potentially plagiarized and/or paraphrased.

AI-generated Source Code Detection

Ensure full transparency with the only AI detector tool that detects AI-generated source code, provides code licensing alerts, and more.

Detect Interspersed AI Content

Have full transparency around the presence of AI-generated content even if the text has been interspersed with human-written content.

Military-Grade Security

All Copyleaks products boast military-grade security along with GDPR compliance and both SOC 2 and SOC 3 certification.

AI Detection Across Multiple Languages

- Chinese (Simplified)

- Chinese (Traditional)

- Vietnamese


AI Detector Use Cases

AI Model Training

Safeguard your AI by ensuring your models are trained exclusively on human-written content, not AI-generated.

Academic Integrity

Verify originality and ensure authenticity within all your academic content, from long-form essays to source code and everything in between.

Mitigate risks, protect your copyright and IP, and establish guardrails for responsible GenAI adoption across your organization.

Publishing & Copywriting

Ensure that what you’re publishing is original and authentic content, avoiding potential search engine penalties and other risks.

Oakland University Case Study

Learn how Oakland University adopted Copyleaks and changed the conversation around AI and plagiarism among their faculty and staff.


AI Detector is even better when paired.

Each of our dynamic product offerings is strong on its own. However, they are truly powerful when you use them together.

Get award-winning plagiarism detection in over 100 languages, identify multiple forms of paraphrasing, and much more.

Proactively mitigate risk and have complete transparency with the only solution that detects AI-generated code and more.

Enforce enterprise-wide policies, ensure responsible generative AI adoption, and proactively mitigate all potential risks.

A revolutionary solution for efficiently and accurately grading tens of thousands of standardized tests at the state, national, and university-wide levels.

Frequently Asked Questions

When a language model writes a sentence, it generates each word from statistical patterns learned from its pre-training data, which is simply not how a human writes. The difference becomes apparent when the text is analyzed against a vast corpus of human writing.

We can recognize AI text patterns utilizing multiple techniques.

Since 2015, we’ve collected, ingested, and analyzed trillions of crawled and user-sourced content pages from thousands of universities and enterprises worldwide to train our models to understand how humans write as opposed to AI.

Also, utilizing AI technology, our AI detector can accurately recognize the presence of other AI-generated text and the signals it leaves behind, adding an additional layer of accuracy.

There are several significant differences between other AI detector tools and ours.

For example:

• Credible data at scale, coupled with machine learning and widespread adoption, allows us to continually refine and improve our ability to understand complex text patterns, resulting in over 99% accuracy—higher than any other AI detector—and improving daily.

• As an enterprise-based platform, we’re the only solution with seamless API and LMS integrations, allowing you to bring the power of AI Detector directly to your native platform and at scale.

• The AI Detector is the only platform that can read source code, including AI-generated code. Furthermore, it can detect source code at the function level, helping identify when code has been plagiarized or modified, even if certain variables have been altered or entire portions have been changed.

• Our AI Detector does not flag Grammarly’s non-AI features, which include spell check and grammar, as AI. Another AI detector, in its own analysis, flagged 20% of Grammarly’s non-AI features as AI content, an astounding 20% false positive rate.

• Our AI Detector, like all of Copyleaks’ products, is fully GDPR-compliant and SOC 2- and SOC 3-certified, a global standard audited by KPMG, the leading auditing organization for certification, ensuring the privacy and protection of personal data.
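Copyleaks does not describe how its function-level code matching works. As a hedged illustration of the general idea of spotting copied code even when variables are renamed, the Python sketch below uses the standard `ast` module to replace every function, argument, and variable name with a placeholder, then compares the resulting syntax trees. The helper names (`fingerprint`, `same_structure`) are hypothetical, and this simple normalization only catches renaming, not reordered or rewritten logic.

```python
import ast

class _RenameIdentifiers(ast.NodeTransformer):
    """Replace function, argument, and variable names with v0, v1, ... in order of first use."""

    def __init__(self):
        self.aliases = {}

    def _alias(self, name: str) -> str:
        return self.aliases.setdefault(name, f"v{len(self.aliases)}")

    def visit_FunctionDef(self, node):
        node.name = self._alias(node.name)
        self.generic_visit(node)
        return node

    def visit_arg(self, node):
        node.arg = self._alias(node.arg)
        self.generic_visit(node)
        return node

    def visit_Name(self, node):
        node.id = self._alias(node.id)
        return node

def fingerprint(source: str) -> str:
    """Dump the identifier-normalized syntax tree as a comparable string."""
    tree = _RenameIdentifiers().visit(ast.parse(source))
    return ast.dump(tree)  # line/column attributes are excluded by default

def same_structure(a: str, b: str) -> bool:
    return fingerprint(a) == fingerprint(b)

original = """
def total(xs):
    s = 0
    for x in xs:
        s += x
    return s
"""
renamed = """
def add_up(items):
    acc = 0
    for item in items:
        acc += item
    return acc
"""
different = """
def total(xs):
    return max(xs)
"""
print(same_structure(original, renamed))    # True
print(same_structure(original, different))  # False
```

The sketch handles only plain `def` functions; async functions, lambdas, and attribute names would need their own visitors.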

The chance for content written by a human to be falsely labeled as AI-generated content is 0.2%, the lowest of any AI detector available. Nevertheless, we strive to inspire authenticity and digital trust by creating secure environments to share ideas and learn confidently, and that comes with the responsibility to ensure complete accuracy, particularly around false accusations. To address this, we have taken several precautions, including:

- Our detection and the algorithms that power it are designed to detect human-written text rather than AI-generated text. Detecting AI text directly tends to give lower accuracy and increases the likelihood of false positives.

- To help accelerate our learning and refine the models used, we implemented a feedback loop where users can rate the accuracy of the results, which allows us to continually use examples of false positives, rare as they may be, to improve.

- We only introduce new model detection after thorough testing. Once our internal testing reaches a high confidence threshold, we leverage beta testers, giving an additional layer of assurance.

Yes. In July 2023, four researchers from across the globe published a study on the Cornell Tech-owned arXiv, declaring Copyleaks AI Detector the most accurate for checking and detecting Large Language Models (LLM) generated text. Since then, additional independent third-party studies have been released, each one highlighting the accuracy and efficiency of the AI Detector.


Only certain features of writing assistants can cause your content to be flagged by the AI Detector as AI-generated.

For example, Grammarly has a genAI-driven feature that rewrites your content to help improve it, shorten it, etc. As a result, this reworked content could get flagged as AI since it was rewritten by genAI.

However, the Copyleaks Writing Assistant does not cause content to be flagged as AI, and neither do Grammarly’s changes that fix grammatical errors, mechanical issues, etc., because these features use little or no genAI.


At Copyleaks, our products are routinely undergoing independent verification of privacy, security, and compliance control to achieve certifications against global standards to earn and retain the trust of the millions of Copyleaks users worldwide. Our current Copyleaks certifications and compliance standards include SOC2 & SOC3, GDPR, PCI Payment Card Industry Data Security Standard, and NIST Risk Management Framework (RMF). Please visit our Compliance and Certifications and Security Practices pages to learn more.

Yes. Our detection report highlights the specific elements of the text written by a human and those written by AI, even if the text has been interspersed.


Detect AI-Generated Text in Seconds for Free!

Have doubts about whether your content is 100% human-written? Enter your text and find out whether it was written by real people or generated by AI.

Instant Results

No registration is required. Upload the text and get results in seconds.

Detailed Report

Get a report indicating AI-written text along with comprehensive statistics.

100% Data Protection

We do not store your uploads nor do we share any of your information.

What Is AI Detector?

ChatGPT and similar tools are becoming increasingly popular. While they can be handy for specific purposes, it's still vital to understand that AI-created text may result in various penalties.

We have created our ChatGPT finder based on the same language patterns that ChatGPT and other similar AI-writers use, and we have trained our tool to distinguish between patterns of human-written and AI-generated text. You will receive a real-time report on how much of the content is fake.

Using AI-detector.net, you can be sure your texts are completely authentic and contain zero AI-written text.

Best Free AI Text Checker

AI-detector.net will provide you with detailed results within only a few seconds.

No need to pay or register—just paste the text, and you’ll get the result.

We use the same technology as ChatGPT to provide the most precise results.

Our ChatGPT detector can be used for various content types: essays, articles, and more.

Descriptive

You will get a detailed report with highlighted content that was likely written by AI.

Confidentiality

We care about your privacy and do not store any of your texts or personal information.

Who Is AI Detector for?

It is vital to know what content has been written by AI or humans, whether you’re looking at a blog post, browsing the Internet, or reading a college essay. Our free ChatGPT detector can help you to check any type of text.

Marketing and SEO-content

The vast majority of search engines penalize content if they recognize it as AI-generated. Use our AI text checker to verify that you’re posting only human-written content and to detect if your writers used any AI tools in the process.

Academic writing

Find out if your essays or theses include any signs of AI content tools usage. Copy and paste any assignment into the box above and find out within a few seconds whether it is AI-generated or written by a real human.

Business writing

Avoid misleading or inaccurate information in your emails, reports, or other texts, which may occur due to the use of ChatGPT or similar tools. Our AI detector will help you to protect your brand reputation and deliver clear messages to your customers.

How Does the AI Content Checker Work?

Our free AI content detector allows you to assess any text within a few clicks and get the results in seconds.

What Technologies Can the AI Checker Detect?

With the rise in popularity of various AI text generation platforms, it is vital to know whether content was written by humans or created by an AI platform. We have incorporated as many technologies as possible into our tool to detect potential issues in any given piece of content.

ChatGPT AI Detector

This AI chatbot, launched by OpenAI in November 2022, quickly gained users’ attention for its detailed responses. However, it often provides inaccurate facts and false answers.

Our ChatGPT essay checker can easily detect the use of this technology so that you can be sure what was artificially created with the help of this algorithm.

GPT-3 and GPT-4 Detector

Our free service can detect GPT-4 output as well as responses from earlier versions of ChatGPT. We have implemented a state-of-the-art algorithm that incorporates keyword extraction and sentiment analysis. This helps us identify texts made with pre-trained language models.

The AI-Detector.net model uses contextual and structural clues to recognize machine-generated texts.

Other AI-Writing Tools

There are many online writing tools that use GPT-3 or similar natural language processing models. We have built our AI Detector to use topic modeling and to find flag words and language patterns that are typical of artificial intelligence and uncommon for humans. That’s why it can easily verify whether something was written by AI or real people and show it to you in a detailed report.

Check Our Other Tools for Your Writing!

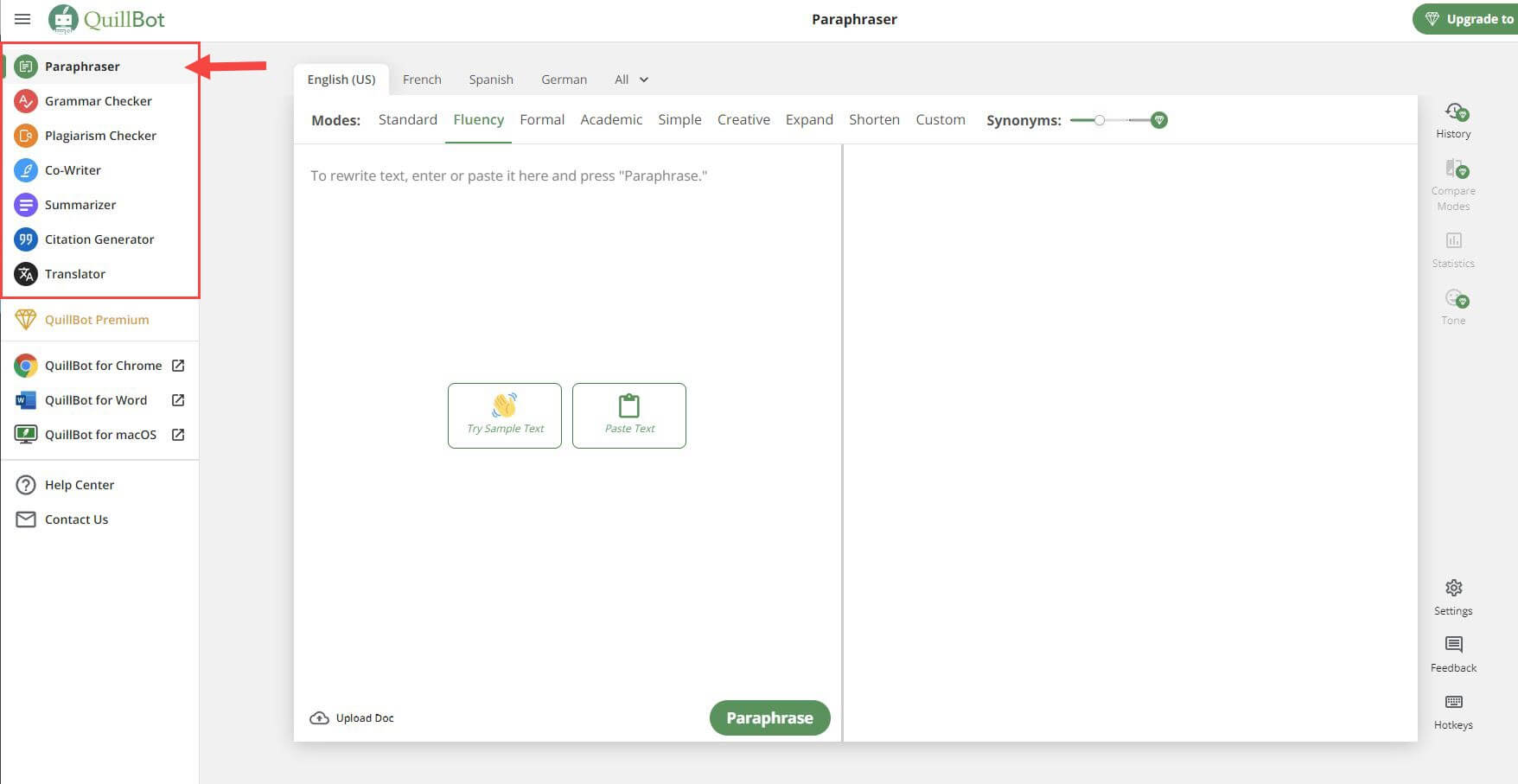

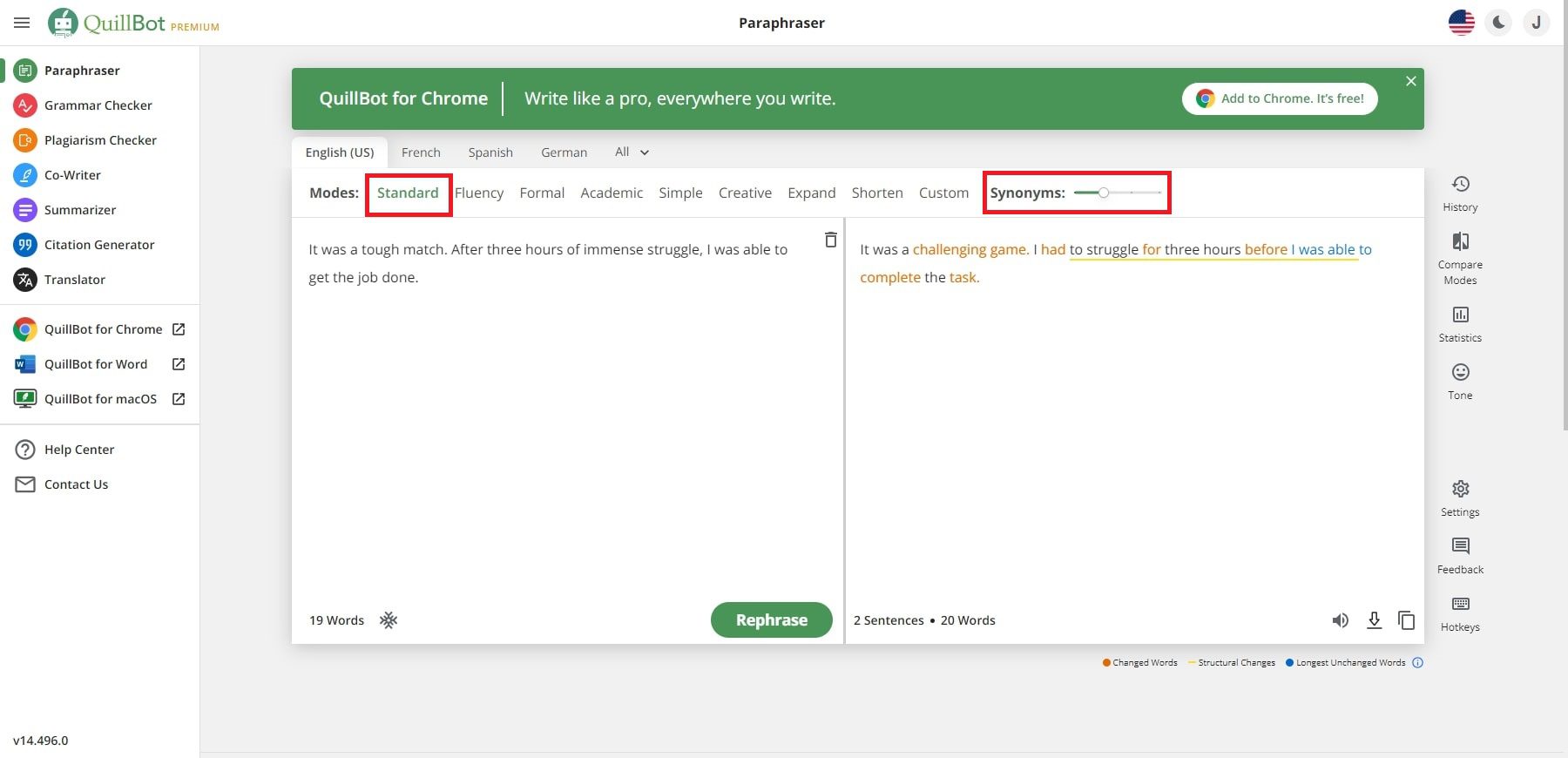

Make your text unique with our free rephrasing tool.

Main Idea Finder

Summarize a lengthy text into a shorter piece a flash with the help of our free online tool.

Random Topic Generator

Grab the list of ideas and research questions for your writing.

Reword generator

Use our AI-powered online paraphraser to rephrase any text in no time.

Essay Conclusion Generator

Stuck with conclusion? No worries! Make a brief summary for your paper within a click.

Thesis Statement Generator

Create a perfect thesis statement for your paper with our free thesis statement generator.

Text Summarizer

Condense any text into a brief summary within a few clicks.

Sentence Rewriter

Paraphrase any sentence or paragraph within a few seconds with our free sentence rewriter.

Thesis Checker

Make a perfect thesis that fits your paper with only a couple of clicks.

Essay Topic Generator

Grab a bunch of unique essay topic ideas in a flash using our handy online tool.

Thesis Maker

Craft a perfect thesis statement for any paper in three simple steps.

Research Title Generator

Can’t pick up a catchy title for your research paper? Use our title generator to get the list of ideas.

Research Question Generator

Get a list of research questions for your next project in no time with this online tool.

Rewrite My Essay

Rewrite any paper in a few clicks with this free online paraphrasing tool.

Summary Writer

Extract key ideas from any paper or article in seconds with the help of our free tool.

Thesis Statement Finder

Make a strong thesis statement for any paper using our free online thesis generator.

Ready to Check Your Content?

Try our AI detection tool out for free!

Have questions about our AI detector or found an error on our website? Use this form to reach us!

Get in Touch

Student Creates App to Detect Essays Written by AI

In response to the text-generating bot ChatGPT, the new tool measures sentence complexity and variation to predict whether an author was human


Margaret Osborne

Daily Correspondent


In November, artificial intelligence company OpenAI released a powerful new bot called ChatGPT, a free tool that can generate text about a variety of topics based on a user’s prompts. The AI quickly captivated users across the internet, who asked it to write anything from song lyrics in the style of a particular artist to programming code.

But the technology has also sparked concerns of AI plagiarism among teachers, who have seen students use the app to write their assignments and claim the work as their own. Some professors have shifted their curricula because of ChatGPT, replacing take-home essays with in-class assignments, handwritten papers or oral exams, reports Kalley Huang for the New York Times.

“[ChatGPT] is very much coming up with original content,” Kendall Hartley, a professor of educational training at the University of Nevada, Las Vegas, tells Scripps News. “So, when I run it through the services that I use for plagiarism detection, it shows up as a zero.”

Now, a student at Princeton University has created a new tool to combat this form of plagiarism: an app that aims to determine whether text was written by a human or AI. Twenty-two-year-old Edward Tian developed the app, called GPTZero, while on winter break and unveiled it on January 2. Within the first week of its launch, more than 30,000 people used the tool, per NPR’s Emma Bowman. On Twitter, it has garnered more than 7 million views.

GPTZero uses two variables to determine whether the author of a particular text is human: perplexity, or how complex the writing is, and burstiness, or how variable it is. Text that’s more complex with varied sentence length tends to be human-written, while prose that is more uniform and familiar to GPTZero tends to be written by AI.

But the app, while almost always accurate, isn’t foolproof. Tian tested it out using BBC articles and text generated by AI when prompted with the same headline. He tells BBC News’ Nadine Yousif that the app determined the difference with a less than 2 percent false positive rate.

“This is at the same time a very useful tool for professors, and on the other hand a very dangerous tool—trusting it too much would lead to exacerbation of the false flags,” writes one GPTZero user, per the Guardian’s Caitlin Cassidy.

Tian is now working on improving the tool’s accuracy, per NPR. And he’s not alone in his quest to detect plagiarism: OpenAI is also working on ways to make ChatGPT’s text easy to identify.

“We don’t want ChatGPT to be used for misleading purposes in schools or anywhere else,” a spokesperson for the company tells the Washington Post’s Susan Svrluga in an email. “We’re already developing mitigations to help anyone identify text generated by that system.” One such idea is a watermark, or an unnoticeable signal that accompanies text written by a bot.

Tian says he’s not against artificial intelligence, and he’s even excited about its capabilities, per BBC News. But he wants more transparency surrounding when the technology is used.

“A lot of people are like … ‘You’re trying to shut down a good thing we’ve got going here!’” he tells the Post. “That’s not the case. I am not opposed to students using AI where it makes sense. … It’s just we have to adopt this technology responsibly.”


Margaret Osborne is a freelance journalist based in the southwestern U.S. Her work has appeared in the Sag Harbor Express and has aired on WSHU Public Radio.


How Do AI Detectors Work? | Methods & Reliability

Published on May 1, 2023 by Jack Caulfield . Revised on September 6, 2023.

AI detectors (also called AI writing detectors or AI content detectors) are tools designed to detect when a text was partially or entirely generated by artificial intelligence (AI) tools such as ChatGPT.

AI detectors may be used to detect when a piece of writing is likely to have been generated by AI. This is useful, for example, to educators who want to check that their students are doing their own writing or moderators trying to remove fake product reviews and other spam content.

However, these tools are quite new and experimental, and they’re generally considered somewhat unreliable for now. Below, we explain how they work, how reliable they really are, and how they’re being used.


AI detectors are usually based on language models similar to those used in the AI writing tools they’re trying to detect. The language model essentially looks at the input and asks “Is this the sort of thing that I would have written?” If the answer is “yes,” it concludes that the text is probably AI-generated.

Specifically, the models look for two things in a text: perplexity and burstiness. The lower these two variables are, the more likely the text is to be AI-generated. But what do these unusual terms mean?

Perplexity is a measure of how unpredictable a text is: how likely it is to perplex (confuse) the average reader (i.e., make no sense or read unnaturally).

- AI language models aim to produce texts with low perplexity, which are more likely to make sense and read smoothly but are also more predictable.

- Human writing tends to have higher perplexity: more creative language choices, but also more typos.
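In practice, detectors measure perplexity with respect to a language model rather than a human reader. The standard definition, for a text of $N$ tokens $w_1, \dots, w_N$ scored by a model $p$, is the exponentiated average negative log-probability of each token given the tokens before it:

$$
\mathrm{PPL}(w_1,\dots,w_N) = \exp\!\left(-\frac{1}{N}\sum_{i=1}^{N}\log p\left(w_i \mid w_1,\dots,w_{i-1}\right)\right)
$$

A text that the model finds predictable assigns high probability to each token and therefore gets a low perplexity.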

Language models work by predicting what word would naturally come next in a sentence and inserting it. For example, in the sentence “I couldn’t get to sleep last …” there are more and less plausible continuations, as shown in the table below.

| Example continuation | Perplexity |
|---|---|
| I couldn’t get to sleep last | Probably the most likely continuation |
| I couldn’t get to sleep last | Less likely, but it makes grammatical and logical sense |
| I couldn’t get to sleep last | The sentence is coherent but quite unusually structured and long-winded |
| I couldn’t get to sleep last | Grammatically incorrect and illogical |

Low perplexity is taken as evidence that a text is AI-generated.
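The sketch below makes the idea measurable with a deliberately tiny model: an add-one-smoothed bigram model trained on four made-up sentences, used to score two continuations of the example sentence. Real detectors use large neural language models; the corpus, the `train_bigram` helper, and the whitespace tokenization are assumptions for illustration. Note that the first test sentence appears verbatim in the toy corpus, which exaggerates how predictable it looks.

```python
import math
from collections import Counter

def train_bigram(sentences):
    """Count word pairs in a (toy) training corpus; '<s>' marks the start of a sentence."""
    contexts, bigrams, vocab = Counter(), Counter(), set()
    for sentence in sentences:
        tokens = ["<s>"] + sentence.lower().split()
        vocab.update(tokens[1:])
        contexts.update(tokens[:-1])
        bigrams.update(zip(tokens, tokens[1:]))
    return contexts, bigrams, vocab

def perplexity(sentence, model):
    """Perplexity of `sentence` under an add-one-smoothed bigram model."""
    contexts, bigrams, vocab = model
    tokens = ["<s>"] + sentence.lower().split()
    if len(tokens) < 2:
        raise ValueError("need at least one word to score")
    v = len(vocab)
    log_prob = 0.0
    for prev, cur in zip(tokens, tokens[1:]):
        # P(cur | prev) = (count(prev, cur) + 1) / (count(prev as context) + V)
        log_prob += math.log((bigrams[(prev, cur)] + 1) / (contexts[prev] + v))
    return math.exp(-log_prob / (len(tokens) - 1))

corpus = [
    "I couldn't get to sleep last night",
    "I went to bed late last night",
    "we stayed up late last night",
    "I saw them last summer",
]
model = train_bigram(corpus)
print(perplexity("I couldn't get to sleep last night", model))
print(perplexity("I couldn't get to sleep last pleased", model))
```

The second sentence differs only in its final word, yet its perplexity is higher because the model has never seen "pleased" follow "last".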

Burstiness is a measure of variation in sentence structure and length—something like perplexity, but on the level of sentences rather than words:

- A text with little variation in sentence structure and sentence length has low burstiness.

- A text with greater variation has high burstiness.

AI text tends to be less “bursty” than human text. Because language models predict the most likely word to come next, they tend to produce sentences of average length (say, 10–20 words) and with conventional structures. This is why AI writing can sometimes seem monotonous.

Low burstiness indicates that a text is likely to be AI-generated.
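Burstiness has no single agreed formula. One simple proxy, assumed here rather than taken from any particular detector, is the coefficient of variation of sentence lengths: the standard deviation of the word counts per sentence divided by their mean. The Python sketch below also splits sentences with a naive regular expression, which is another simplifying assumption.

```python
import re
import statistics

def sentence_lengths(text: str) -> list[int]:
    """Word counts per sentence, splitting naively after '.', '!' or '?'."""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    return [len(s.split()) for s in sentences]

def burstiness(text: str) -> float:
    """Coefficient of variation of sentence length; 0.0 means every sentence has the same length."""
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0  # not enough sentences to measure variation
    return statistics.pstdev(lengths) / statistics.mean(lengths)

uniform = "The cat sat down. The dog ran off. The bird flew up."
varied = ("Stop. The storm that rolled in last night knocked out power "
          "across the whole valley. We waited.")
print(burstiness(uniform))  # 0.0
print(burstiness(varied))
```

Dividing by the mean keeps the score comparable between writers who habitually use long or short sentences; a raw standard deviation would not.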

A potential alternative: Watermarks

OpenAI, the company behind ChatGPT, claims to be working on a “watermarking” system where text generated by the tool could be given an invisible watermark that can then be detected by another system to know for sure that a text was AI-generated.

However, this system has not been developed yet, and the details of how it might work are unknown. It’s also not clear whether the proposed watermarks will remain when the generated text is edited. So while this may be a promising method of AI detection in the future, we just don’t know yet.


In our experience, AI detectors normally work well, especially with longer texts, but can easily fail if the AI output was prompted to be less predictable or was edited or paraphrased after being generated. And detectors can easily misidentify human-written text as AI-generated if it happens to match the criteria (low perplexity and burstiness).

Our research into the best AI detectors indicates that no tool can provide complete accuracy; the highest accuracy we found was 84% in a premium tool and 68% in the best free tool.

These tools give a useful indication of how likely it is that a text was AI-generated, but we advise against treating them as evidence on their own. As language models continue to develop, it’s likely that detection tools will always have to race to keep up with them.

Even the more confident providers usually admit that their tools can’t be used as definitive evidence that a text is AI-generated, and universities so far don’t put much faith in them.

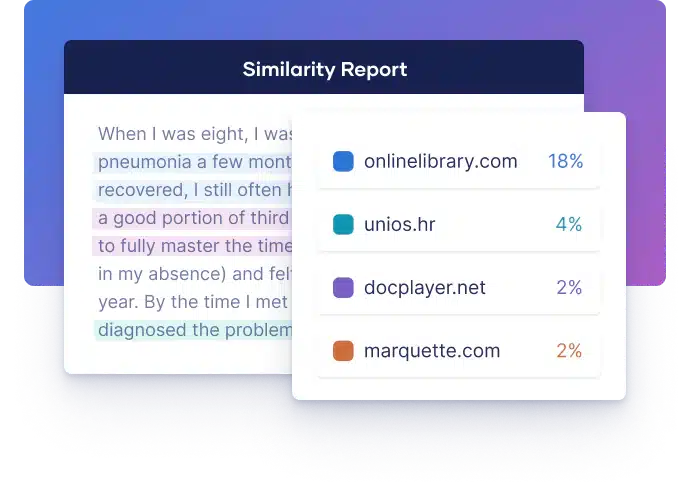

AI detectors and plagiarism checkers may both be used by universities to discourage academic dishonesty, but they differ in terms of how they work and what they’re looking for:

- AI detectors try to find text that looks like it was generated by an AI writing tool. They do this by measuring specific characteristics of the text (perplexity and burstiness)—not by comparing it to a database.

- Plagiarism checkers try to find text that is copied from a different source. They do this by comparing the text to a large database of previously published sources, student theses, and so on, and detecting similarities—not by measuring specific characteristics of the text.
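One well-known way to implement the database comparison described above is to break texts into overlapping word sequences ("shingles") and measure how many they share. The Python sketch below uses word trigrams and Jaccard similarity against a tiny in-memory dictionary. The choice of trigrams, the `best_match` helper, and the two source texts are assumptions for illustration; production checkers add indexing (such as hashing) to search very large collections quickly.

```python
import re

def shingles(text: str, n: int = 3) -> set:
    """Set of overlapping n-word sequences in the text."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}

def jaccard(a: set, b: set) -> float:
    """Shared shingles divided by all distinct shingles (0.0 = nothing in common)."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)

def best_match(submission: str, database: dict, n: int = 3):
    """Return the most similar source (the database must be non-empty) and its score."""
    sub = shingles(submission, n)
    scores = {name: jaccard(sub, shingles(doc, n)) for name, doc in database.items()}
    name = max(scores, key=scores.get)
    return name, scores[name]

database = {
    "source_a": "the quick brown fox jumps over the lazy dog",
    "source_b": "language models predict the next word in a sentence",
}
print(best_match("a quick brown fox jumps over the lazy dog today", database))
```

Unlike the perplexity and burstiness measures, nothing here depends on how the text was produced; it only asks whether the wording already exists somewhere in the database.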

However, we’ve found that plagiarism checkers do flag parts of AI-generated texts as plagiarism. This is because AI writing draws on sources that it doesn’t cite. While it usually generates original sentences, it may also include sentences directly copied from existing texts, or at least very similar.

This is most likely to happen with popular or general-knowledge topics and less likely with more specialized topics that have been written about less. Moreover, as more AI-generated text appears online, AI writing may become more likely to be flagged as plagiarism—simply because other similarly worded AI-generated texts already exist on the same topic.

So, while plagiarism checkers aren’t designed to double as AI detectors, they may still flag AI writing as partially plagiarized in many cases. But they’re certainly less effective at finding AI writing than an AI detector.

AI detectors are intended for anyone who wants to check whether a piece of text might have been generated by AI. Potential users include:

- Educators (teachers and university instructors) who want to check that their students’ work is original

- Publishers who want to ensure that they only publish human-written content

- Recruiters who want to ensure that candidates’ cover letters are their own writing

- Web content writers who want to publish AI-generated content but are concerned that it may rank lower in search engines if it is identified as AI writing

- Social media moderators, and others fighting automated misinformation, who want to identify AI-generated spam and fake news

Because of concerns about their reliability, most users are reluctant to rely fully on AI detectors for now. However, detectors are already gaining popularity as a way to gauge whether a text was AI-generated when the user already suspects it.

As well as using AI detectors, you can also learn to spot the identifying features of AI writing yourself. It’s difficult to do so reliably—human writing can sometimes seem robotic, and AI writing tools are more and more convincingly human—but you can develop a good instinct for it.

The specific criteria that AI detectors use—low perplexity and burstiness—are quite technical, but you can try to spot them manually by looking for text:

- That reads monotonously, with little variation in sentence structure or length

- With predictable, generic word choices and few surprises
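Burstiness can be roughly approximated in a few lines of code. The sketch below uses one simple proxy: the coefficient of variation of sentence length, which is the standard deviation divided by the mean. Commercial detectors don't necessarily use this formula, and the function names are hypothetical. The sentence splitting is also naive, so abbreviations such as "e.g." will throw it off. A score near 0 corresponds to the monotonous, evenly sized sentences described in the first bullet.

```python
import re
import statistics


def sentence_lengths(text: str) -> list[int]:
    """Naively split after ., ! or ? and count the words in each sentence."""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    return [len(s.split()) for s in sentences]


def burstiness(text: str) -> float:
    """Coefficient of variation of sentence length (population stdev / mean).
    Close to 0 means the sentences are all roughly the same length."""
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0  # not enough sentences to measure variation
    return statistics.pstdev(lengths) / statistics.mean(lengths)


flat = "The cat sat on the mat. The dog lay on the rug. The bird sang in the tree."
varied = "Stop. The storm rolled in faster than anyone on the pier had expected. We ran."
print(round(burstiness(flat), 2), round(burstiness(varied), 2))  # 0.0 0.99
```

In the example, the three six-word sentences have no variation at all. The second passage mixes sentences of 1, 12, and 2 words, so it scores much higher.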

You can also use approaches that AI detectors don’t, by watching out for:

- Overly polite language: Chatbots like ChatGPT are designed to play the role of a helpful assistant, so their language is very polite and formal by default—not very conversational.

- Hedging language: Look for a lack of bold, original statements and for a tendency to overuse generic hedging phrases: “It’s important to note that …,” “X is widely regarded as …,” “X is considered …,” “Some might say that …”

- Inconsistency in voice: If you know the usual writing style and voice of the person whose writing you’re checking (e.g., a student), then you can usually see when they submit something that reads very differently from how they normally write.

- Unsourced or incorrectly cited claims: In the context of academic writing, it’s important to cite your sources. AI writing tools tend not to do this or to do it incorrectly (e.g., citing nonexistent or irrelevant sources).

- Logical errors: AI writing, although it’s increasingly fluent, may not always be coherent in terms of its actual content. Look for points where the text contradicts itself, makes an implausible statement, or presents disjointed arguments.
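The hedging phrases from this list are easy to count automatically. The hypothetical helper below searches for the phrases quoted in the "Hedging language" item and reports how many appear per 100 words. The pattern list and the per-100-words measure are assumptions made for illustration. A high score is only a prompt to read more closely, not evidence of AI use.

```python
import re

# The generic hedging phrases quoted in the list ("X" can be any subject, so it is left out).
HEDGING_PATTERNS = [
    r"\bit['’]s important to note that\b",
    r"\bis widely regarded as\b",
    r"\bis considered\b",
    r"\bsome might say that\b",
]


def hedging_rate(text: str) -> float:
    """Hedging phrases per 100 words: a rough reading aid, not a detector."""
    lowered = text.lower()
    hits = sum(len(re.findall(pattern, lowered)) for pattern in HEDGING_PATTERNS)
    word_count = len(lowered.split())
    return 100 * hits / word_count if word_count else 0.0


sample = ("It's important to note that the policy is widely regarded as fair. "
          "Some might say that it is considered strict.")
print(hedging_rate(sample))  # 20.0
```

The sample contains 4 of the phrases in 20 words.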

In general, just trying out some AI writing tools, seeing what kinds of texts they can generate, and getting used to their style of writing are good ways to improve your ability to spot text that may be AI-generated.

AI image and video generators such as DALL-E, Midjourney, and Synthesia are also gaining popularity, and it’s increasingly important to be able to detect AI images and videos (also called “deepfakes”) to prevent them from being used to spread misinformation.

Due to the technology’s current limitations, there are some obvious giveaways in a lot of AI-generated images and videos: anatomical errors like hands with too many fingers; unnatural movements; inclusion of nonsensical text; and unconvincing faces.

But as these AI images and videos become more advanced, they may become harder to detect manually. Some AI image and video detectors are already out there: for example, Deepware, Intel’s FakeCatcher, and Illuminarty. We haven’t tested the reliability of these tools.


AI detectors aim to identify the presence of AI-generated text (e.g., from ChatGPT) in a piece of writing, but they can’t do so with complete accuracy. In our comparison of the best AI detectors, we found that the 10 tools we tested had an average accuracy of 60%. The best free tool had 68% accuracy, and the best premium tool had 84%.

Because of how AI detectors work, they can never guarantee 100% accuracy, and there is always at least a small risk of false positives (human text being marked as AI-generated). Therefore, these tools should not be relied upon to provide absolute proof that a text is or isn’t AI-generated. Rather, they can provide a good indication in combination with other evidence.
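A small false-positive rate can still produce many wrongly flagged texts when most of the texts being checked were written by humans. The worked example below uses purely hypothetical numbers, not figures from any tool we tested. In this scenario, 1,000 essays are checked, 10% of them are AI-written, the detector catches 90% of those, and it wrongly flags 2% of the human-written ones. The function name `flag_breakdown` is illustrative.

```python
def flag_breakdown(n_texts: int, ai_share: float, sensitivity: float,
                   false_positive_rate: float) -> dict[str, float]:
    """Expected outcomes when a detector screens a batch of texts.

    ai_share: fraction of texts that really are AI-generated
    sensitivity: fraction of AI texts the detector flags
    false_positive_rate: fraction of human texts the detector wrongly flags
    """
    ai_texts = n_texts * ai_share
    human_texts = n_texts - ai_texts
    true_positives = ai_texts * sensitivity
    false_positives = human_texts * false_positive_rate
    flagged = true_positives + false_positives
    return {
        "flagged": flagged,
        "false_positives": false_positives,
        # Of all flagged texts, what share was actually written by humans?
        "human_share_of_flags": false_positives / flagged if flagged else 0.0,
    }


# Hypothetical scenario: 1,000 essays, 10% AI-written, 90% caught, 2% false positives.
result = flag_breakdown(1000, 0.10, 0.90, 0.02)
print({k: round(v, 2) for k, v in result.items()})
# {'flagged': 108.0, 'false_positives': 18.0, 'human_share_of_flags': 0.17}
```

Under these assumptions, 18 of the 108 flagged essays (about 1 in 6) were written by humans. This is why a flag works best as one piece of evidence among several.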

Tools called AI detectors are designed to label text as AI-generated or human. AI detectors work by looking for specific characteristics in the text, such as a low level of randomness in word choice and sentence length. These characteristics are typical of AI writing, allowing the detector to make a good guess at when text is AI-generated.
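"Low randomness in word choice" is usually measured as perplexity: how surprised a language model is by each word, on average. Detectors are generally understood to use a large language model for this. The sketch below substitutes a much simpler model so that it runs without external libraries. It uses a unigram (single-word frequency) model with add-one smoothing, trained on a tiny reference text. The function names and the reference text are hypothetical. The point is only to show that familiar, predictable wording gives a lower score.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    return re.findall(r"[a-z']+", text.lower())


def train_unigram(reference_text: str) -> Counter:
    """Count how often each word appears in a reference text."""
    return Counter(tokenize(reference_text))


def perplexity(text: str, counts: Counter) -> float:
    """exp(average negative log-probability per word) under a unigram model.

    Add-one smoothing, with one extra vocabulary slot shared by all unseen
    words, keeps every probability above zero.
    """
    words = tokenize(text)
    if not words:
        return float("inf")
    total = sum(counts.values())
    vocab_size = len(counts) + 1  # +1 for the shared "unseen word" slot
    log_prob = sum(math.log((counts[w] + 1) / (total + vocab_size)) for w in words)
    return math.exp(-log_prob / len(words))


counts = train_unigram("the cat sat on the mat the dog sat on the rug")
print(round(perplexity("the cat sat on the mat", counts), 2))  # lower: familiar words
print(round(perplexity("zebras juggle quantum marmalade", counts), 2))  # higher: every word unseen
```

A real detector would compute these probabilities with a model trained on huge amounts of text and would combine the result with other signals, such as burstiness.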

But these tools can’t guarantee 100% accuracy. Check out our comparison of the best AI detectors to learn more.

You can also manually watch for clues that a text is AI-generated—for example, a very different style from the writer’s usual voice or a generic, overly polite tone.

Yes, in some contexts it may be appropriate to cite ChatGPT in your work, especially if you use it as a primary source (e.g., you’re studying the abilities of AI language models).

Some universities may also require you to cite or acknowledge it if you used it to help you in the research or writing process (e.g., to help you develop research questions). Check your institution’s guidelines.

Since ChatGPT isn’t always trustworthy and isn’t a credible source, you should not cite it as a source of factual information.

In APA Style, you can cite a ChatGPT response as a personal communication, since the answers it gave you are not retrievable for other users. Cite it like this in the text: (ChatGPT, personal communication, February 11, 2023).
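If you cite several ChatGPT conversations, you can generate this in-text format consistently. The hypothetical helper below only reproduces the pattern given in the example. It does not check any other APA rules.

```python
from datetime import date

MONTHS = ["January", "February", "March", "April", "May", "June", "July",
          "August", "September", "October", "November", "December"]


def apa_personal_communication(source: str, when: date) -> str:
    """In-text APA citation for a personal communication, following the pattern
    (Source, personal communication, Month Day, Year)."""
    # An explicit month list avoids locale-dependent output from strftime("%B").
    return f"({source}, personal communication, {MONTHS[when.month - 1]} {when.day}, {when.year})"


print(apa_personal_communication("ChatGPT", date(2023, 2, 11)))
# (ChatGPT, personal communication, February 11, 2023)
```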

You can access ChatGPT by signing up for a free account:

- Follow this link to the ChatGPT website.

- Click on “Sign up” and fill in the necessary details (or use your Google account). It’s free to sign up and use the tool.

- Type a prompt into the chat box to get started!

A ChatGPT app is also available for iOS, and an Android app is planned for the future. The app works similarly to the website, and you log in with the same account for both.

It’s not clear whether ChatGPT will stop being available for free in the future—and if so, when. The tool was originally released in November 2022 as a “research preview.” It was released for free so that the model could be tested on a very large user base.

The framing of the tool as a “preview” suggests that it may not be available for free in the long run, but so far, no plans have been announced to end free access to the tool.

A premium version, ChatGPT Plus, is available for $20 a month and provides access to features like GPT-4, a more advanced version of the model. It may be that this is the only way OpenAI (the publisher of ChatGPT) plans to monetize it and that the basic version will remain free. Or it may be that the high costs of running the tool’s servers lead them to end the free version in the future. We don’t know yet.

Cite this Scribbr article


Caulfield, J. (2023, September 06). How Do AI Detectors Work? | Methods & Reliability. Scribbr. Retrieved June 7, 2024, from https://www.scribbr.com/ai-tools/how-do-ai-detectors-work/


Jack Caulfield


Jack Caulfield (Scribbr Team)



A new tool helps teachers detect if AI wrote an assignment

Janet W. Lee

Several big school districts such as New York and Los Angeles have blocked access to a new chatbot that uses artificial intelligence to produce essays. One student has a new tool to help.

Copyright © 2023 NPR. All rights reserved. Visit our website terms of use and permissions pages at www.npr.org for further information.


AI content detector

Use our free AI detector to check up to 5,000 words, and decide if you want to make adjustments before you publish. Read the disclaimer first.

AI content detection is only available in the Writer app as an API. Find out more in our help center article.

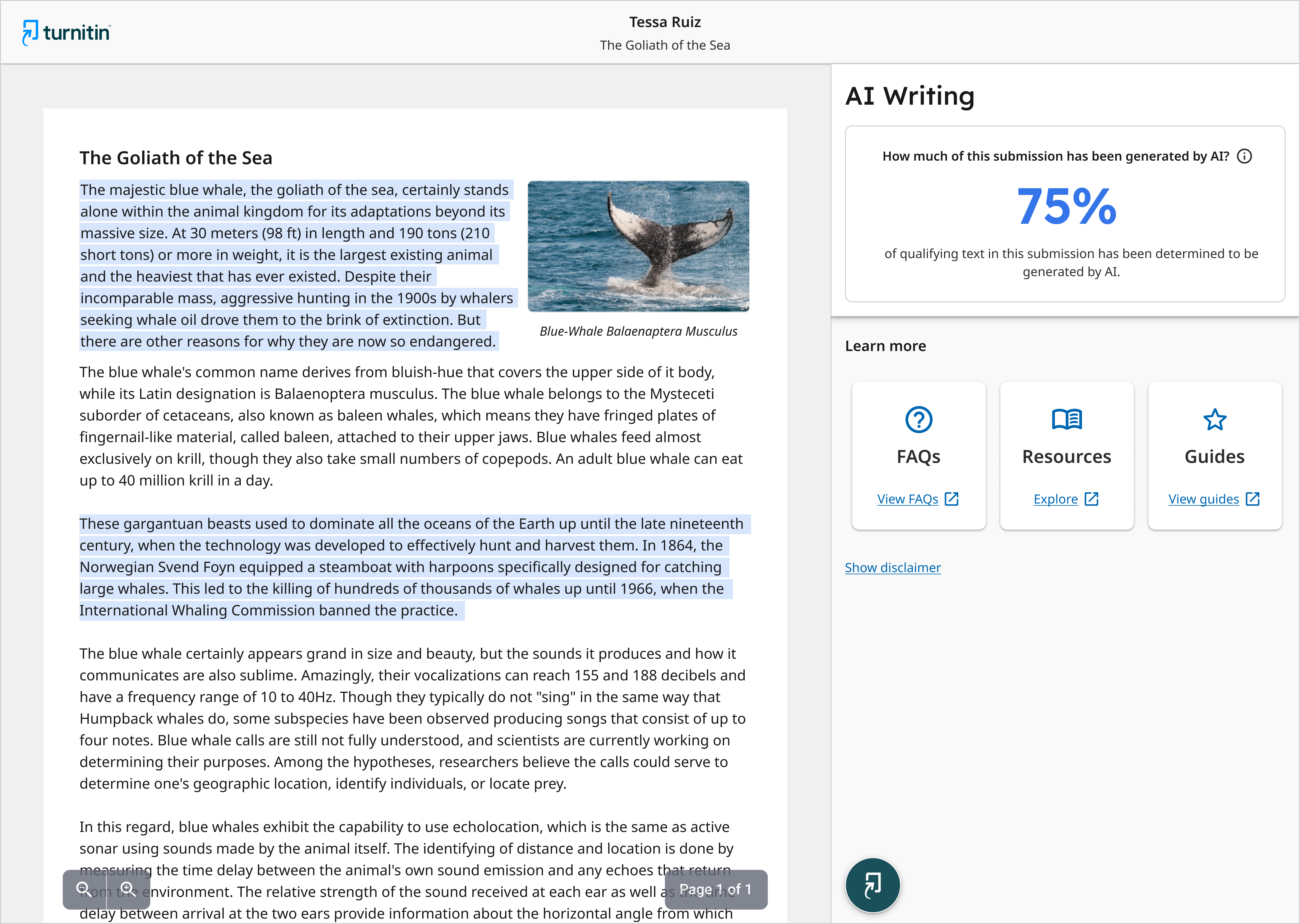

Turnitin's AI writing detection available now

Turnitin’s AI writing detection helps educators, publishers, and researchers identify when AI writing tools such as ChatGPT may have been used in submitted work.

Academic integrity in the age of AI writing

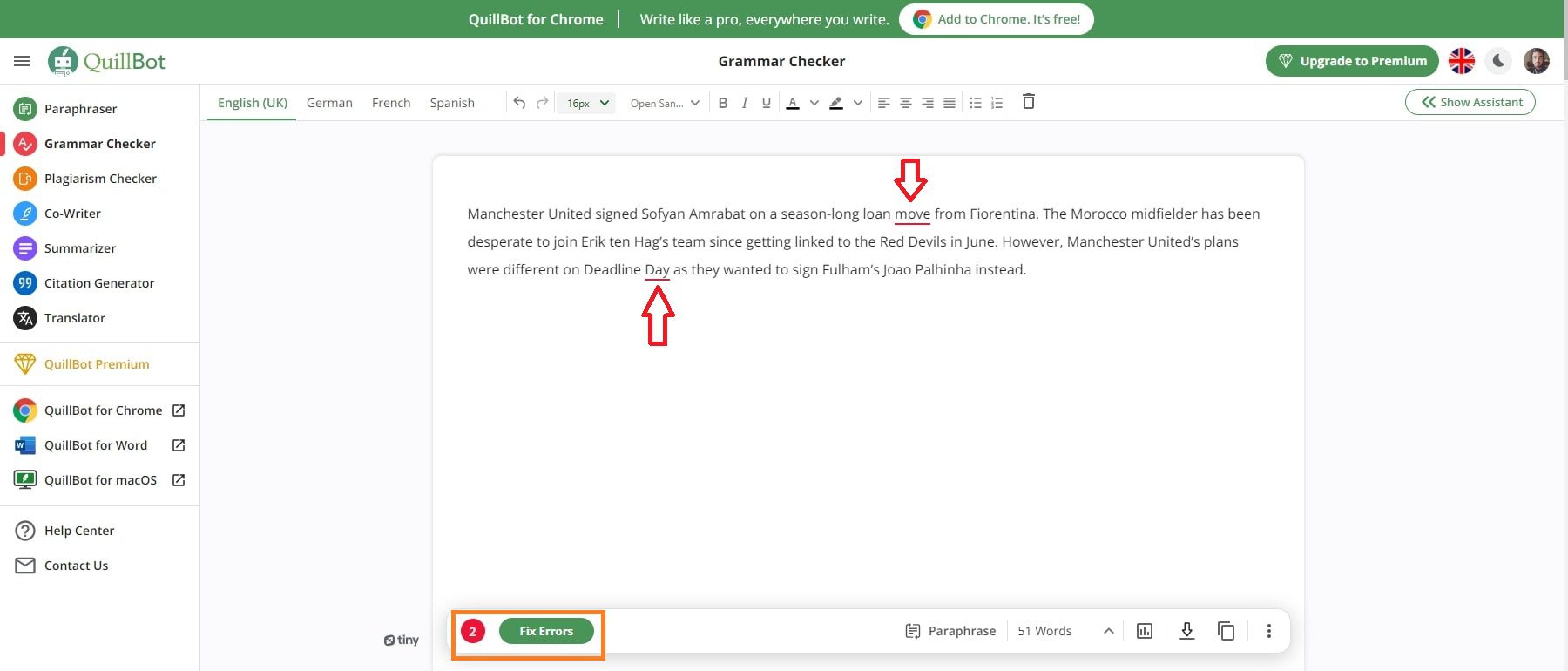

Over the years, academic integrity has been both supported and tested by technology. Today, educators are facing a new frontier with AI writing and ChatGPT.

Here at Turnitin, we believe that AI can be a positive force that, when used responsibly, has the potential to support and enhance the learning process. We also believe that equitable access to AI tools is vital, which is why we’re working with students and educators to develop such technology. However, it is important to acknowledge new challenges alongside the opportunities.

We recognize that for educators, there is an immediate need to know when and where AI and AI writing tools have been used by students. This is why we are now offering AI detection capabilities for educators in our products.

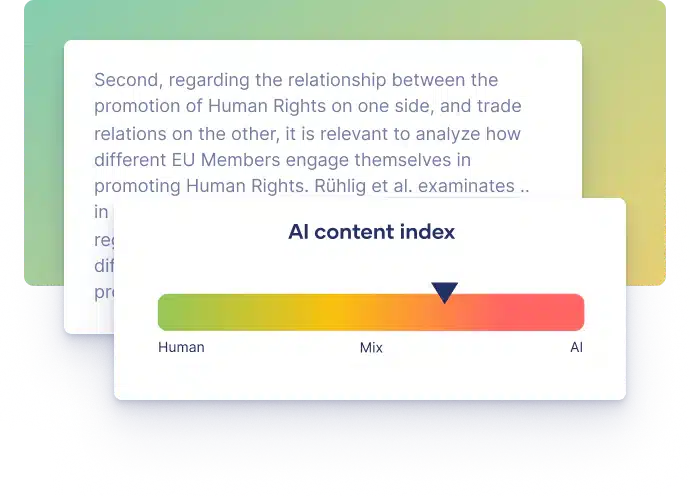

Gain insights on how much of a student’s submission is authentic, human writing versus AI-generated from ChatGPT or other tools.

Reporting identifies likely AI-written text and provides information educators need to determine their next course of action. We’ve designed our solution with educators, for educators.

AI writing detection complements Turnitin’s similarity checking workflow and is integrated with your LMS, providing a seamless, familiar experience.

Turnitin’s AI writing detection capability, available with Originality, helps educators identify AI-generated content in student work while safeguarding the interests of students.

Turnitin AI Innovation Lab

Welcome to the Turnitin AI Innovation Lab, a hub for new and upcoming product developments in the area of AI writing. You can follow our progress on detection initiatives for AI writing, ChatGPT, and AI-paraphrasing.

Understanding the false positive rate for sentences of our AI writing detection capability

We’d like to share more insight into our sentence-level false positive rate and tips on how to use our AI writing detection metrics.

Understanding false positives within our AI writing detection capabilities

We’d like to share some insight on how our AI detection model deals with false positives and what constitutes a false positive.

Have questions? Read these FAQs on Turnitin’s AI writing detection capabilities

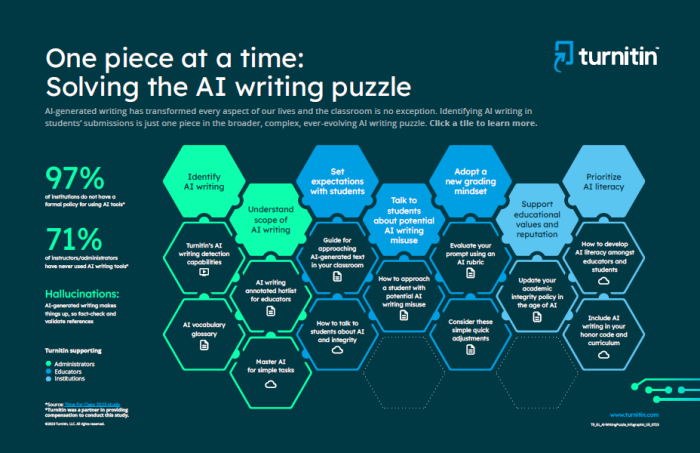

Helping solve the AI writing puzzle, one piece at a time

AI-generated writing has transformed every aspect of our lives, including the classroom. However, identifying AI writing in students’ submissions is just one piece in the broader, complex, ever-evolving AI writing puzzle.

Research corner