Control Group vs. Experimental Group: Everything You Need To Know About The Difference Between Control Group And Experimental Group

As someone who is deeply interested in the field of research, you may have heard the terms control group and experimental group thrown around a lot. If you’re not very familiar with these terms, it can be daunting to determine the role they play in research and why they are so important. In layman’s terms, a control group is a group that does not receive any experimental treatment and is used as a benchmark for the group that does receive the treatment. Meanwhile, the experimental group is a group that receives the treatment and is compared to the control group that does not receive the treatment. To put it simply, the main difference between a control group and an experimental group is whether or not they receive the experimental treatment.


What Is Control Group?

A control group is a group in an experiment that does not receive the experimental treatment and is used as a comparison for the group that does receive the treatment. It is a critical aspect of experimental research to determine whether the treatment caused the outcome rather than another factor. The control group ensures that any observed effects can be attributed to the treatment and not a result of other variables. The quality of the control group can affect the validity of the experiment. Therefore, researchers must carefully design and select participants for the control group to ensure that it accurately represents the population and provides meaningful results. Overall, control groups are essential to gain accurate and reliable results in experimental research.

What Is Experimental Group?

Control Group vs. Experimental Group Similarities

The control group and experimental group are two essential components of any research study. The main similarity between these groups is that they are both used to assess the effects of a treatment or intervention. The control group is intended to provide a baseline measurement of the outcomes that are expected in the absence of the intervention. In contrast, the experimental group is exposed to the intervention or treatment and is observed for any changes or improvements in outcomes. In summary, both groups serve as comparisons for one another, and their use increases the credibility and validity of research findings.

Control Group vs. Experimental Group Pros and Cons

Experimental Group Pros

The Experimental Group, in scientific studies and experimentation, is a group that receives the experimental treatment and is compared to a control group that does not receive the treatment. There are several advantages or pros of this group. First, the experimental group allows researchers to determine the effectiveness of a new treatment or procedure. Second, it helps in identifying side effects of the treatment on the subjects. Third, it provides clear evidence regarding the cause and effect relationships between variables. Additionally, the experimental group enables researchers to validate their findings and test the hypothesis. These benefits make the Experimental Group essential in accurately assessing the effectiveness of new treatments or procedures.

Experimental Group Cons

Comparison Table: 5 Key Differences Between Control Group and Experimental Group

| Aspect | Control Group | Experimental Group |
| --- | --- | --- |
| Purpose | Used as a comparison to the experimental group | Receives the intervention being tested |
| Treatment | Receives no intervention or a placebo | Receives the treatment being tested |
| Randomization | Randomly selected from the population being studied | Randomly selected from the population being studied |
| Sample Size | Large enough to provide statistical power | Large enough to provide statistical power |
| Analysis | Statistical analysis is performed to compare outcomes | Statistical analysis is performed to compare outcomes |


The Importance of Experimental and Control Groups in Research Design


Have you ever wondered how scientists ensure the treatments or interventions they study actually cause the outcomes they observe? The secret lies in their research design, specifically in the use of experimental and control groups. These groups are the backbone of psychological experiments, and they play a critical role in helping researchers determine the effectiveness of new therapies, the impact of social changes, or the potential benefits of educational programs.

Understanding experimental and control groups

At the heart of any psychological experiment, you’ll find two key components: the experimental group and the control group. The experimental group consists of participants who receive the treatment or experience the manipulation that the researchers are testing. In contrast, the control group does not receive the treatment or experience the manipulation. By comparing these two groups, researchers can isolate the effects of the treatment from other factors that might influence the outcomes.

Why control groups matter

Control groups serve as a benchmark for comparison. Imagine a study exploring the effects of a new educational program on student performance. Without a control group, which continues with the standard curriculum, it would be difficult to conclude whether observed improvements in the experimental group were due to the new program or other variables like maturation, the placebo effect, or even seasonal changes.

The power of random assignment

Random assignment is the process of allocating participants to either the experimental or control group by chance. This method is crucial because it helps balance out any pre-existing differences between group members. For instance, if one group inadvertently had more participants with a natural aptitude for the subject matter, it would skew the results. Random assignment minimizes this risk, leading to more reliable and valid findings.
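As a rough illustration of the idea above, random assignment can be sketched in a few lines of Python. The function name `randomly_assign`, the participant labels, and the even two-way split are assumptions made for this example, not a procedure taken from any particular study.

```python
import random

def randomly_assign(participants, seed=None):
    """Shuffle participants and split them into two groups of (near-)equal size.

    Hypothetical helper for illustration: chance alone decides who ends up
    in which group, so pre-existing differences tend to balance out.
    """
    rng = random.Random(seed)      # seeded only so the example is repeatable
    shuffled = list(participants)  # copy, so the caller's list is untouched
    rng.shuffle(shuffled)
    half = len(shuffled) // 2
    return {"experimental": shuffled[:half], "control": shuffled[half:]}

# Example: 20 made-up participant IDs split into two groups of 10.
groups = randomly_assign([f"P{i:02d}" for i in range(1, 21)], seed=42)
print(len(groups["experimental"]), len(groups["control"]))
```

Every participant lands in exactly one group. With an odd number of participants, this sketch puts the extra person in the control group.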

Controlling for extraneous variables

Extraneous variables are factors other than the independent variable that might affect the dependent variable. These can include participant characteristics, environmental conditions, and researchers’ biases. Effective research design, with well-structured experimental and control groups, helps control these extraneous variables, ensuring that the treatment is the only difference between the groups.

Types of extraneous variables

Different types of extraneous variables can threaten the validity of an experiment. Some common examples include:

- Participant variables: Differences in participants’ age, gender, or background.

- Situational variables: Variations in the environment where the experiment takes place, such as time of day or room temperature.

- Researcher variables: The researcher’s behavior or expectations influencing the results.

Strategies to control extraneous variables

Researchers can use several strategies to control for extraneous variables, such as:

- Standardization: Keeping procedures consistent for all participants.

- Blinding: Preventing participants or researchers from knowing who is in the experimental or control group.

- Matching: Pairing participants in the experimental and control groups based on certain characteristics.

Enhancing validity through comparisons

Validity refers to the accuracy of an experiment’s results. By comparing the experimental group to the control group, researchers can strengthen the validity of their findings. This comparison helps to ensure that the changes observed in the experimental group are indeed due to the treatment and not some other factor.

Internal versus external validity

Internal validity is the degree to which an experiment accurately establishes a cause-and-effect relationship between the treatment and the observed outcome. External validity, on the other hand, is the extent to which the results can be generalized to other settings, populations, or times. Both types of validity are crucial for the overall credibility of the research.

Improving validity with control groups

Control groups help improve both internal and external validity. For internal validity, they provide a baseline to measure the treatment’s effect. For external validity, if the control group is representative of the wider population, the findings are more likely to hold true in real-world settings.

Real-world implications of experimental and control groups

The use of experimental and control groups extends far beyond the laboratory. In the real world, these research designs inform public policy, healthcare decisions, educational reforms, and more. By rigorously testing new interventions against control conditions, researchers can make evidence-based recommendations that have the potential to improve countless lives.

Examples of experimental research in action

Consider the following scenarios where experimental and control groups have made a significant impact:

- Medical trials: Testing new medications or treatments to ensure they are both safe and effective before they are approved for public use.

- Education: Evaluating the effectiveness of new teaching methods or curricula to enhance student learning outcomes.

- Psychology: Investigating the efficacy of different therapeutic approaches for mental health conditions.

Challenges and ethical considerations

While experimental and control groups are powerful tools, they also come with challenges and ethical considerations. Researchers must navigate issues such as participant consent, the potential for harm, and the equitable distribution of the treatment under study.

Experimental and control groups are the cornerstone of rigorous psychological research. They allow scientists to draw meaningful conclusions about cause and effect, control for extraneous variables, and enhance the validity of their findings. As a result, this research design is not just an academic exercise; it has profound implications for our understanding of human behavior and the improvement of society.

What do you think? How might the principles of experimental and control groups be applied to evaluate the effectiveness of decisions in your own life? Can you think of a situation where a control group might have given you clearer insights into the results of your actions?



Experimental Design: Types, Examples & Methods

Saul McLeod, PhD

Editor-in-Chief for Simply Psychology

BSc (Hons) Psychology, MRes, PhD, University of Manchester

Saul McLeod, PhD., is a qualified psychology teacher with over 18 years of experience in further and higher education. He has been published in peer-reviewed journals, including the Journal of Clinical Psychology.


Olivia Guy-Evans, MSc

Associate Editor for Simply Psychology

BSc (Hons) Psychology, MSc Psychology of Education

Olivia Guy-Evans is a writer and associate editor for Simply Psychology. She has previously worked in healthcare and educational sectors.


Experimental design refers to how participants are allocated to different groups in an experiment. Types of design include repeated measures, independent groups, and matched pairs designs.

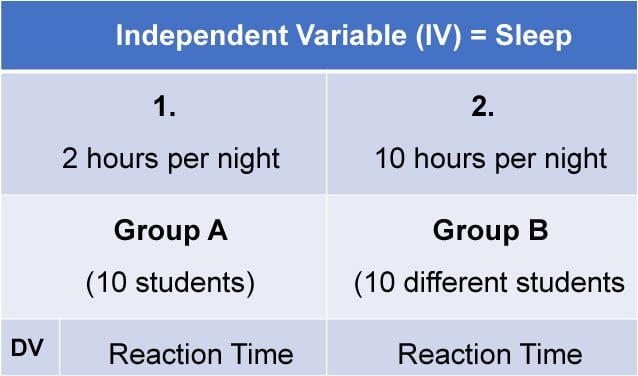

Probably the most common way to design an experiment in psychology is to divide the participants into two groups, the experimental group and the control group, and then introduce a change to the experimental group, not the control group.

The researcher must decide how to allocate the sample to the different experimental groups. For example, if there are 10 participants, will all 10 take part in both conditions (i.e., repeated measures), or will the participants be split in half and take part in only one condition each?

Three types of experimental designs are commonly used:

1. Independent Measures

Independent measures design, also known as between-groups, is an experimental design where different participants are used in each condition of the independent variable. This means that each condition of the experiment includes a different group of participants.

This should be done by random allocation, ensuring that each participant has an equal chance of being assigned to one group.

Independent measures involve using two separate groups of participants, one in each condition.

- Con: More people are needed than with the repeated measures design (i.e., more time-consuming).

- Pro: Avoids order effects (such as practice or fatigue) as people participate in one condition only. If a person is involved in several conditions, they may become bored, tired, and fed up by the time they come to the second condition or become wise to the requirements of the experiment!

- Con: Differences between participants in the groups may affect results, for example, variations in age, gender, or social background. These differences are known as participant variables (i.e., a type of extraneous variable).

- Control: After the participants have been recruited, they should be randomly assigned to their groups. This should ensure the groups are similar, on average (reducing participant variables).

2. Repeated Measures Design

Repeated Measures design is an experimental design where the same participants participate in each independent variable condition. This means that each experiment condition includes the same group of participants.

Repeated Measures design is also known as within-groups or within-subjects design.

- Pro: As the same participants are used in each condition, participant variables (i.e., individual differences) are reduced.

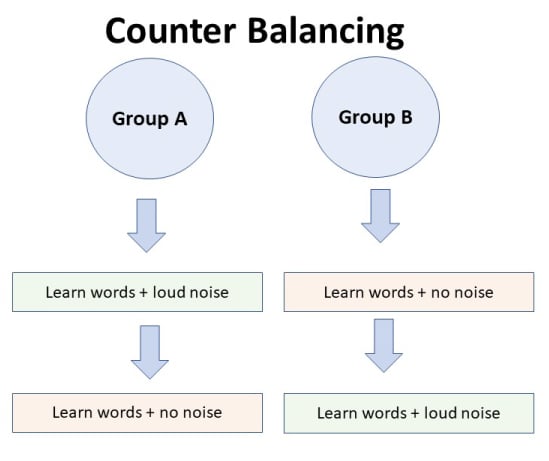

- Con: There may be order effects. Order effects refer to the order of the conditions affecting the participants’ behavior. Performance in the second condition may be better because the participants know what to do (i.e., practice effect). Or their performance might be worse in the second condition because they are tired (i.e., fatigue effect). This limitation can be controlled using counterbalancing.

- Pro: Fewer people are needed as they participate in all conditions (i.e., saves time).

- Control: To combat order effects, the researcher counterbalances the order of the conditions, alternating the order in which participants perform the different conditions of the experiment.

Counterbalancing

Suppose we used a repeated measures design in which all of the participants first learned words in “loud noise” and then learned them in “no noise.”

We expect the participants to learn better in “no noise” because of order effects, such as practice. However, a researcher can control for order effects using counterbalancing.

The sample would be split into two groups, with the experimental condition labeled ‘A’ and the control condition labeled ‘B.’ For example, group 1 does ‘A’ then ‘B,’ and group 2 does ‘B’ then ‘A.’ This is to balance out order effects.

Although order effects occur for each participant, they balance each other out in the results because they occur equally in both groups.
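The counterbalancing scheme described above can be sketched as follows. This is a minimal illustration under assumed names (`counterbalance`, condition labels "A" and "B"); it covers only the two-condition case and is not a prescribed procedure.

```python
import random

def counterbalance(participants, conditions=("A", "B"), seed=None):
    """Give half the participants the order A-then-B and the other half B-then-A.

    Hypothetical helper: participants are shuffled first so that chance,
    not the researcher, decides who receives which order.
    """
    rng = random.Random(seed)
    shuffled = list(participants)
    rng.shuffle(shuffled)
    orders = [tuple(conditions), tuple(reversed(conditions))]
    # Alternate the two orders down the shuffled list so each is used equally often.
    return {person: orders[i % 2] for i, person in enumerate(shuffled)}

schedule = counterbalance([f"P{i}" for i in range(10)], seed=7)
for person, order in sorted(schedule.items()):
    print(person, "->", " then ".join(order))
```

With an even number of participants, each order is used exactly as often as the other, which is what lets practice and fatigue effects cancel out across the sample.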

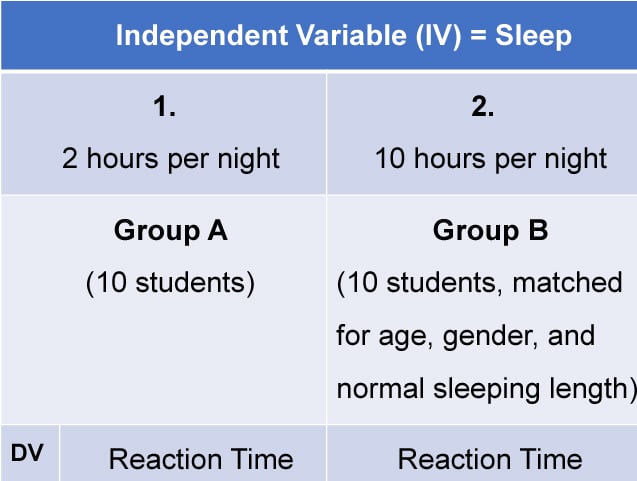

3. Matched Pairs Design

A matched pairs design is an experimental design where pairs of participants are matched in terms of key variables, such as age or socioeconomic status. One member of each pair is then placed into the experimental group and the other member into the control group.

One member of each matched pair must be randomly assigned to the experimental group and the other to the control group.

- Con: If one participant drops out, you lose two participants’ data.

- Pro: Reduces participant variables because the researcher has tried to pair up the participants so that each condition has people with similar abilities and characteristics.

- Con: Very time-consuming trying to find closely matched pairs.

- Pro: It avoids order effects, so counterbalancing is not necessary.

- Con: Impossible to match people exactly unless they are identical twins!

- Control: Members of each pair should be randomly assigned to conditions. However, this does not solve all these problems.
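A matched pairs allocation can be sketched roughly as below. The function name `matched_pairs`, the single numeric matching variable, and the rule of pairing neighbours in rank order are all assumptions made for this illustration. Real studies often match on several characteristics at once.

```python
import random

def matched_pairs(scores, seed=None):
    """Pair participants with similar scores, then split each pair at random.

    `scores` maps a participant ID to the value of the matching variable
    (for example, a pre-test score). Hypothetical helper for illustration.
    """
    rng = random.Random(seed)
    ranked = sorted(scores, key=scores.get)  # neighbours have similar scores
    experimental, control = [], []
    # Walk the ranking two at a time; an unpaired last participant is left out.
    for i in range(0, len(ranked) - 1, 2):
        pair = [ranked[i], ranked[i + 1]]
        rng.shuffle(pair)                    # chance decides who gets the treatment
        experimental.append(pair[0])
        control.append(pair[1])
    return experimental, control

# Made-up scores on a matching variable.
scores = {"P1": 12, "P2": 30, "P3": 14, "P4": 29, "P5": 21, "P6": 22}
experimental, control = matched_pairs(scores, seed=0)
print(experimental, control)
```

Because each pair is split between the two groups, losing one participant effectively removes the whole pair from the analysis, which is the drop-out cost noted in the list above.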

Experimental design refers to how participants are allocated to an experiment’s different conditions (or IV levels). There are three types:

1. Independent measures / between-groups: Different participants are used in each condition of the independent variable.

2. Repeated measures / within-groups: The same participants take part in each condition of the independent variable.

3. Matched pairs: Each condition uses different participants, but they are matched in terms of important characteristics, e.g., gender, age, intelligence, etc.

Learning Check

Read about each of the experiments below. For each experiment, identify (1) which experimental design was used; and (2) why the researcher might have used that design.

1. To compare the effectiveness of two different types of therapy for depression, depressed patients were assigned to receive either cognitive therapy or behavior therapy for a 12-week period.

The researchers attempted to ensure that the patients in the two groups had similar severity of depressed symptoms by administering a standardized test of depression to each participant, then pairing them according to the severity of their symptoms.

2. To assess the difference in reading comprehension between 7 and 9-year-olds, a researcher recruited each group from a local primary school. They were given the same passage of text to read and then asked a series of questions to assess their understanding.

3. To assess the effectiveness of two different ways of teaching reading, a group of 5-year-olds was recruited from a primary school. Their level of reading ability was assessed, and then they were taught using scheme one for 20 weeks.

At the end of this period, their reading was reassessed, and a reading improvement score was calculated. They were then taught using scheme two for a further 20 weeks, and another reading improvement score for this period was calculated. The reading improvement scores for each child were then compared.

4. To assess the effect of organization on recall, a researcher randomly assigned student volunteers to two conditions.

Condition one attempted to recall a list of words that were organized into meaningful categories; condition two attempted to recall the same words, randomly grouped on the page.

Experiment Terminology

Ecological validity

The degree to which an investigation represents real-life experiences.

Experimenter effects

These are the ways that the experimenter can accidentally influence the participant through their appearance or behavior.

Demand characteristics

The clues in an experiment that lead participants to think they know what the researcher is looking for (e.g., the experimenter’s body language).

Independent variable (IV)

The variable the experimenter manipulates (i.e., changes), which is assumed to have a direct effect on the dependent variable.

Dependent variable (DV)

The variable the experimenter measures. This is the outcome (i.e., the result) of a study.

Extraneous variables (EV)

All variables which are not independent variables but could affect the results (DV) of the experiment. Extraneous variables should be controlled where possible.

Confounding variables

Variable(s) that have affected the results (DV), apart from the IV. A confounding variable could be an extraneous variable that has not been controlled.

Random Allocation

Randomly allocating participants to independent variable conditions means that all participants should have an equal chance of taking part in each condition.

The principle of random allocation is to avoid bias in how the experiment is carried out and limit the effects of participant variables.

Order effects

Changes in participants’ performance due to their repeating the same or similar test more than once. Examples of order effects include:

(i) practice effect: an improvement in performance on a task due to repetition, for example, because of familiarity with the task;

(ii) fatigue effect: a decrease in performance of a task due to repetition, for example, because of boredom or tiredness.


Controlled experiments


Introduction

How are hypotheses tested?

- One pot of seeds gets watered every afternoon.

- The other pot of seeds doesn't get any water at all.

Control and experimental groups

Controlled experiment case study: CO2 and coral bleaching

- What your control and experimental groups would be

- What your independent and dependent variables would be

- What results you would predict in each group

Experimental setup

- Some corals were grown in tanks of normal seawater, which is not very acidic (pH around 8.2). The corals in these tanks served as the control group.

- Other corals were grown in tanks of seawater that were more acidic than usual due to addition of CO2. One set of tanks was medium-acidity (pH about 7.9), while another set was high-acidity (pH about 7.65). Both the medium-acidity and high-acidity groups were experimental groups.

- In this experiment, the independent variable was the acidity (pH) of the seawater. The dependent variable was the degree of bleaching of the corals.

- The researchers used a large sample size and repeated their experiment. Each tank held 5 fragments of coral, and there were 5 identical tanks for each group (control, medium-acidity, and high-acidity). Note: None of these tanks was "acidic" on an absolute scale. That is, the pH values were all above the neutral pH of 7.0. However, the two groups of experimental tanks were moderately and highly acidic to the corals, that is, relative to their natural habitat of plain seawater.
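To make the scale of this setup concrete, the layout described above can be enumerated in a short Python sketch. The dictionary keys and variable names are made up for this example, and the pH figures are the approximate values quoted in the text, not measured data.

```python
# Approximate target pH for each group, as quoted in the description above.
groups = {"control": 8.2, "medium-acidity": 7.9, "high-acidity": 7.65}
TANKS_PER_GROUP = 5      # 5 identical tanks for each group
FRAGMENTS_PER_TANK = 5   # each tank held 5 coral fragments

# One record per coral fragment: which group, which tank, which fragment.
layout = [
    {"group": group, "ph": ph, "tank": tank, "fragment": fragment}
    for group, ph in groups.items()
    for tank in range(1, TANKS_PER_GROUP + 1)
    for fragment in range(1, FRAGMENTS_PER_TANK + 1)
]

print(len(layout))  # 3 groups x 5 tanks x 5 fragments = 75
```

Listing the design this way shows the independent variable (the `ph` assigned to each group) separately from the units on which the dependent variable, the degree of bleaching, would be recorded.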

Analyzing the results


Control Group

First Online: 01 January 2020

Sven Hilbert

A control group is one of multiple groups in an experimental treatment study, used as a baseline for the estimation of the effect of interest in the other groups.

Introduction

Experimental treatment studies are designed to estimate the effect of a particular treatment on one or more variables. Typically, the variables of interest are observed before and after treatment to detect changes that occurred in between. The two observations of the variables are called pretest and posttest to indicate their temporal position before and after the treatment. However, any differences between pre- and posttest need not be caused by the treatment. Therefore, experimental treatment studies use at least two groups: the experimental group receives the treatment while the control group does not. The effect of the treatment can be estimated by comparing the change observed in the treatment group with the change observed in the control group.
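The comparison described in the last sentence above can be written out as a small calculation. This is a sketch with invented numbers and an assumed function name (`treatment_effect`). It computes only a simple difference in mean change and does not test whether that difference is statistically significant.

```python
from statistics import mean

def treatment_effect(exp_pre, exp_post, ctl_pre, ctl_post):
    """Estimate the effect as (mean change in the experimental group)
    minus (mean change in the control group).

    Each argument is a list of scores, one per participant, with pretest
    and posttest lists in the same participant order. Hypothetical helper.
    """
    exp_change = mean([post - pre for pre, post in zip(exp_pre, exp_post)])
    ctl_change = mean([post - pre for pre, post in zip(ctl_pre, ctl_post)])
    return exp_change - ctl_change

# Invented scores: the experimental group improves by 5 on average,
# the control group by 2, so the estimated treatment effect is 3.
effect = treatment_effect(
    exp_pre=[10, 12, 14], exp_post=[15, 16, 20],
    ctl_pre=[11, 12, 13], ctl_post=[12, 14, 16],
)
print(effect)  # 3
```

Subtracting the control group's change removes whatever improvement would have occurred without the treatment, such as practice on the test.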

Treatment Groups as Independent Variables in an...


Author information

Authors and affiliations.

Department of Psychology, Psychological Methods and Assessment, Münich, Germany

Sven Hilbert

Faculty of Psychology, Educational Science, and Sport Science, University of Regensburg, Regensburg, Germany

Psychological Methods and Assessment, LMU Munich, Munich, Germany


Corresponding author

Correspondence to Sven Hilbert .

Editor information

Editors and affiliations.

Oakland University, Rochester, MI, USA

Virgil Zeigler-Hill

Todd K. Shackelford

Section Editor information

Humboldt University, Germany, Berlin, Germany

Matthias Ziegler


© 2020 Springer Nature Switzerland AG

About this entry

Cite this entry.

Hilbert, S. (2020). Control Group. In: Zeigler-Hill, V., Shackelford, T.K. (eds) Encyclopedia of Personality and Individual Differences. Springer, Cham. https://doi.org/10.1007/978-3-319-24612-3_1290


DOI: https://doi.org/10.1007/978-3-319-24612-3_1290

Published: 22 April 2020

Publisher Name: Springer, Cham

Print ISBN: 978-3-319-24610-9

Online ISBN: 978-3-319-24612-3

eBook Packages: Behavioral Science and Psychology; Reference Module Humanities and Social Sciences; Reference Module Business, Economics and Social Sciences

control group

control group, the standard to which comparisons are made in an experiment. Many experiments are designed to include a control group and one or more experimental groups; in fact, some scholars reserve the term experiment for study designs that include a control group. Ideally, the control group and the experimental groups are identical in every way except that the experimental groups are subjected to treatments or interventions believed to have an effect on the outcome of interest while the control group is not. Inclusion of a control group greatly strengthens researchers’ ability to draw conclusions from a study. Indeed, only in the presence of a control group can a researcher determine whether a treatment under investigation truly has a significant effect on an experimental group, and the possibility of making an erroneous conclusion is reduced. See also scientific method.

A typical use of a control group is in an experiment in which the effect of a treatment is unknown and comparisons between the control group and the experimental group are used to measure the effect of the treatment. For instance, in a pharmaceutical study to determine the effectiveness of a new drug on the treatment of migraines, the experimental group will be administered the new drug and the control group will be administered a placebo (a drug that is inert, or assumed to have no effect). Each group is then given the same questionnaire and asked to rate the effectiveness of the drug in relieving symptoms. If the new drug is effective, the experimental group is expected to have a significantly better response to it than the control group. Another possible design is to include several experimental groups, each of which is given a different dosage of the new drug, plus one control group. In this design, the analyst will compare results from each of the experimental groups to the control group. This type of experiment allows the researcher to determine not only if the drug is effective but also the effectiveness of different dosages. In the absence of a control group, the researcher’s ability to draw conclusions about the new drug is greatly weakened, due to the placebo effect and other threats to validity. Comparisons between the experimental groups with different dosages can be made without including a control group, but there is no way to know if any of the dosages of the new drug are more or less effective than the placebo.
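As a rough sketch of the multi-dosage design, the snippet below compares the mean rating of each experimental group with the mean rating of the placebo group. The group names and ratings are hypothetical, and the raw differences shown here are only a first look; they are not a test of statistical significance.

```python
from statistics import mean


def differences_from_control(groups, control_name):
    """Mean of each experimental group minus the mean of the control group."""
    baseline = mean(groups[control_name])
    return {
        name: mean(scores) - baseline
        for name, scores in groups.items()
        if name != control_name
    }


# Hypothetical symptom-relief ratings, invented for illustration only.
ratings = {
    "placebo": [2, 3, 3, 4],
    "low_dose": [4, 4, 5, 3],
    "high_dose": [5, 6, 5, 4],
}

diffs = differences_from_control(ratings, "placebo")
print(diffs)
```

Without the `"placebo"` entry there would be no baseline to subtract, which mirrors the point above: the dosage groups could still be compared with each other, but not with the placebo.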

It is important that every aspect of the experimental environment be as alike as possible for all subjects in the experiment. If conditions are different for the experimental and control groups, it is impossible to know whether differences between groups are actually due to the difference in treatments or to the difference in environment. For example, in the new migraine drug study, it would be a poor study design to administer the questionnaire to the experimental group in a hospital setting while asking the control group to complete it at home. Such a study could lead to a misleading conclusion, because differences in responses between the experimental and control groups could have been due to the effect of the drug or could have been due to the conditions under which the data were collected. For instance, perhaps the experimental group received better instructions or was more motivated by being in the hospital setting to give accurate responses than the control group.

In non-laboratory and nonclinical experiments, such as field experiments in ecology or economics, even well-designed experiments are subject to numerous and complex variables that cannot always be managed across the control group and experimental groups. Randomization, in which individuals or groups of individuals are randomly assigned to the treatment and control groups, is an important tool to eliminate selection bias and can aid in disentangling the effects of the experimental treatment from other confounding factors. Appropriate sample sizes are also important.

A control group study can be managed in two different ways. In a single-blind study, the researcher will know whether a particular subject is in the control group, but the subject will not know. In a double-blind study, neither the subject nor the researcher will know which treatment the subject is receiving. In many cases, a double-blind study is preferable to a single-blind study, since the researcher cannot inadvertently affect the results or their interpretation by treating a control subject differently from an experimental subject.

Control Group Definition and Examples

The control group is the set of subjects that does not receive the treatment in a study. In other words, it is the group where the independent variable is held constant. This is important because the control group is a baseline for measuring the effects of a treatment in an experiment or study. A controlled experiment is one which includes one or more control groups.

- The experimental group experiences a treatment or change in the independent variable. In contrast, the independent variable is constant in the control group.

- A control group is important because it allows meaningful comparison. The researcher compares the experimental group to it to assess whether or not there is a relationship between the independent and dependent variable and the magnitude of the effect.

- There are different types of control groups. A controlled experiment has one or more control groups.

Control Group vs Experimental Group

The only difference between the control group and experimental group is that subjects in the experimental group receive the treatment being studied, while participants in the control group do not. All other variables are the same for the two groups.

Control Group vs Control Variable

A control group is not the same thing as a control variable. A control variable or controlled variable is any factor that is held constant during an experiment. Examples of common control variables include temperature, duration, and sample size. The control variables are the same for both the control and experimental groups.

Types of Control Groups

There are different types of control groups:

- Placebo group: A placebo group receives a placebo, which is a fake treatment that resembles the treatment in every respect except for the active ingredient. Both the placebo and treatment may contain inactive ingredients that produce side effects. Without a placebo group, these effects might be attributed to the treatment.

- Positive control group: A positive control group has conditions that guarantee a positive test result. The positive control group demonstrates an experiment is capable of producing a positive result. Positive controls help researchers identify problems with an experiment.

- Negative control group: A negative control group consists of subjects that are not exposed to a treatment. For example, in an experiment looking at the effect of fertilizer on plant growth, the negative control group receives no fertilizer.

- Natural control group: A natural control group usually is a set of subjects who naturally differ from the experimental group. For example, if you compare the effects of a treatment on women who have had children, the natural control group includes women who have not had children. Non-smokers are a natural control group in comparison to smokers.

- Randomized control group: The subjects in a randomized control group are randomly selected from a larger pool of subjects. Often, subjects are randomly assigned to either the control or experimental group. Randomization reduces bias in an experiment. There are different methods of randomly assigning test subjects.

Control Group Examples

Here are some examples of different control groups in action:

Negative Control and Placebo Group

For example, consider a study of a new cancer drug. The experimental group receives the drug. The placebo group receives a placebo, which contains the same ingredients as the drug formulation, minus the active ingredient. The negative control group receives no treatment. The negative control group is included because the placebo group experiences some level of placebo effect, which is a response to receiving a treatment that has no active ingredient.

Positive and Negative Controls

For example, consider an experiment looking at whether a new drug kills bacteria. In the experimental group, bacterial cultures are exposed to the drug. If the bacteria survive, the drug is ineffective. If they die, the drug is effective.

The positive control group has a culture of bacteria that carry a drug resistance gene. If the bacteria survive drug exposure (as intended), then it shows the growth medium and conditions allow bacterial growth. If the positive control group dies, it indicates a problem with the experimental conditions. A negative control group of bacteria lacking drug resistance should die. If the negative control group survives, something is wrong with the experimental conditions.

Experimental Group in Psychology Experiments

In a randomized and controlled psychology experiment, the researchers are examining the impact of an experimental condition on a group of participants (does the independent variable 'X' cause a change in the dependent variable 'Y'?). To determine cause and effect, there must be at least two groups to compare, the experimental group and the control group.

The participants who are in the experimental condition are those who receive the treatment or intervention of interest. The data from their outcomes are collected and compared to the data from a group that did not receive the experimental treatment. The control group may have received no treatment at all, or they may have received a placebo treatment or the standard treatment in current practice.

Comparing the experimental group to the control group allows researchers to see how much of an impact the intervention had on the participants.

A Closer Look at Experimental Groups

Imagine that you want to do an experiment to determine if listening to music while working out can lead to greater weight loss. After getting together a group of participants, you randomly assign them to one of three groups. One group listens to upbeat music while working out, one group listens to relaxing music, and the third group listens to no music at all. All of the participants work out for the same amount of time and the same number of days each week.

In this experiment, the group of participants listening to no music while working out is the control group. They serve as a baseline with which to compare the performance of the other two groups. The other two groups in the experiment are the experimental groups. They each receive some level of the independent variable, which in this case is listening to music while working out.

In this experiment, you find that the participants who listened to upbeat music experienced the greatest weight loss result, largely because those who listened to this type of music exercised with greater intensity than those in the other two groups. By comparing the results from your experimental groups with the results of the control group, you can more clearly see the impact of the independent variable.

Some Things to Know

When it comes to using experimental groups in a psychology experiment, there are a few important things to know:

- In order to determine the impact of an independent variable, it is important to have at least two different treatment conditions. This usually involves using a control group that receives no treatment against an experimental group that receives the treatment. However, there can also be a number of different experimental groups in the same experiment.

- Care must be taken when assigning participants to groups. So how do researchers determine who is in the control group and who is in the experimental group? In an ideal situation, the researchers would use random assignment to place participants in groups. In random assignment, each individual has an equal chance of being assigned to either group. Participants might be randomly assigned using methods such as a coin flip or a number draw. By using random assignment, researchers can help ensure that the groups are not stacked with people who share characteristics that might skew the results.

- Variables must be well-defined. Before you begin manipulating things in an experiment, you need to have very clear operational definitions in place. These definitions clearly explain what your variables are, including exactly how you are manipulating the independent variable and exactly how you are measuring the outcomes.
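Random assignment, as described in the list above, can be sketched as follows. This is one possible approach, assuming groups of equal size are wanted: the participant list is shuffled and then dealt out in turn. The function name, participant labels, and group labels are hypothetical.

```python
import random


def random_assignment(participants, groups=("control", "experimental"), seed=None):
    """Shuffle participants, then deal them out to the groups in turn."""
    rng = random.Random(seed)  # a seed makes the assignment reproducible
    shuffled = list(participants)
    rng.shuffle(shuffled)
    assignment = {group: [] for group in groups}
    for index, person in enumerate(shuffled):
        assignment[groups[index % len(groups)]].append(person)
    return assignment


# Hypothetical participants for the three-group music example.
people = [f"P{i}" for i in range(1, 13)]
result = random_assignment(people, ("no music", "upbeat", "relaxing"), seed=1)
for group, members in result.items():
    print(group, members)
```

Unlike an independent coin flip for each person, which can leave the groups unequal by chance, shuffling and dealing keeps group sizes balanced while still giving every participant the same chance of landing in any group.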

A Word From Verywell

Experiments play an important role in the research process and allow psychologists to investigate cause-and-effect relationships between different variables. Having one or more experimental groups allows researchers to vary different levels or types of the experimental variable and then compare the effects of these changes against a control group. The goal of this experimental manipulation is to gain a better understanding of the different factors that may have an impact on how people think, feel, and act.

By Kendra Cherry, MSEd

Kendra Cherry, MS, is a psychosocial rehabilitation specialist, psychology educator, and author of the "Everything Psychology Book."

Frequently asked questions

What’s the difference between a control group and an experimental group?

An experimental group, also known as a treatment group, receives the treatment whose effect researchers wish to study, whereas a control group does not. They should be identical in all other ways.

Frequently asked questions: Methodology

Quantitative observations involve measuring or counting something and expressing the result in numerical form, while qualitative observations involve describing something in non-numerical terms, such as its appearance, texture, or color.

To make quantitative observations, you need to use instruments that are capable of measuring the quantity you want to observe. For example, you might use a ruler to measure the length of an object or a thermometer to measure its temperature.

Scope of research is determined at the beginning of your research process, prior to the data collection stage. Sometimes called “scope of study,” your scope delineates what will and will not be covered in your project. It helps you focus your work and your time, ensuring that you’ll be able to achieve your goals and outcomes.

Defining a scope can be very useful in any research project, from a research proposal to a thesis or dissertation. A scope is needed for all types of research: quantitative, qualitative, and mixed methods.

To define your scope of research, consider the following:

- Budget constraints or any specifics of grant funding

- Your proposed timeline and duration

- Specifics about your population of study, your proposed sample size, and the research methodology you’ll pursue

- Any inclusion and exclusion criteria

- Any anticipated control, extraneous, or confounding variables that could bias your research if not accounted for properly.

Inclusion and exclusion criteria are predominantly used in non-probability sampling. In purposive sampling and snowball sampling, restrictions apply as to who can be included in the sample.

Inclusion and exclusion criteria are typically presented and discussed in the methodology section of your thesis or dissertation.

The purpose of theory-testing mode is to find evidence in order to disprove, refine, or support a theory. As such, generalisability is not the aim of theory-testing mode.

Due to this, the priority of researchers in theory-testing mode is to eliminate alternative causes for relationships between variables. In other words, they prioritise internal validity over external validity, including ecological validity.

Convergent validity shows how much a measure of one construct aligns with other measures of the same or related constructs.

On the other hand, concurrent validity is about how a measure matches up to some known criterion or gold standard, which can be another measure.

Although both types of validity are established by calculating the association or correlation between a test score and another variable, they represent distinct validation methods.

Validity tells you how accurately a method measures what it was designed to measure. There are 4 main types of validity:

- Construct validity: Does the test measure the construct it was designed to measure?

- Face validity: Does the test appear to be suitable for its objectives?

- Content validity: Does the test cover all relevant parts of the construct it aims to measure?

- Criterion validity: Do the results accurately measure the concrete outcome they are designed to measure?

Criterion validity evaluates how well a test measures the outcome it was designed to measure. An outcome can be, for example, the onset of a disease.

Criterion validity consists of two subtypes depending on the time at which the two measures (the criterion and your test) are obtained:

- Concurrent validity is a validation strategy where the scores of a test and the criterion are obtained at the same time

- Predictive validity is a validation strategy where the criterion variables are measured after the scores of the test

Attrition refers to participants leaving a study. It always happens to some extent – for example, in randomised control trials for medical research.

Differential attrition occurs when attrition or dropout rates differ systematically between the intervention and the control group. As a result, the characteristics of the participants who drop out differ from the characteristics of those who stay in the study. Because of this, study results may be biased.
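A first check for differential attrition is simply to compare dropout rates between the groups. The sketch below does that with hypothetical enrolment and completion counts. A gap in rates is a warning sign, not proof of bias; whether the dropouts also differ in their characteristics has to be examined separately.

```python
def attrition_rate(enrolled, completed):
    """Share of enrolled participants who left before the study ended."""
    if enrolled <= 0:
        raise ValueError("enrolled must be a positive count")
    return (enrolled - completed) / enrolled


def attrition_gap(intervention, control):
    """Intervention dropout rate minus control dropout rate.

    Each argument is an (enrolled, completed) pair.
    """
    return attrition_rate(*intervention) - attrition_rate(*control)


# Hypothetical counts, invented for illustration only.
intervention = (100, 70)  # 30 of 100 dropped out
control = (100, 90)       # 10 of 100 dropped out

gap = attrition_gap(intervention, control)
print(f"Dropout gap: {gap:.0%}")
```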

Criterion validity and construct validity are both types of measurement validity. In other words, they both show you how accurately a method measures something.

While construct validity is the degree to which a test or other measurement method measures what it claims to measure, criterion validity is the degree to which a test can predictively (in the future) or concurrently (in the present) measure something.

Construct validity is often considered the overarching type of measurement validity. You need to have face validity, content validity, and criterion validity in order to achieve construct validity.

Convergent validity and discriminant validity are both subtypes of construct validity. Together, they help you evaluate whether a test measures the concept it was designed to measure.

- Convergent validity indicates whether a test that is designed to measure a particular construct correlates with other tests that assess the same or similar construct.

- Discriminant validity indicates whether two tests that should not be highly related to each other are indeed not related. This type of validity is also called divergent validity.

You need to assess both in order to demonstrate construct validity. Neither one alone is sufficient for establishing construct validity.
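Both checks come down to correlations between test scores. The sketch below computes a Pearson correlation by hand and applies it to hypothetical scores: a new test, an established test of a similar construct, and a test of an unrelated construct. All names and numbers are invented for illustration, and no cutoff for a "high" or "low" correlation is assumed here.

```python
from math import sqrt


def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length score lists."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    covariance = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    spread_x = sqrt(sum((a - mean_x) ** 2 for a in x))
    spread_y = sqrt(sum((b - mean_y) ** 2 for b in y))
    return covariance / (spread_x * spread_y)


# Hypothetical scores for five participants.
new_test = [1, 2, 3, 4, 5]
similar_test = [2, 4, 5, 4, 5]    # measures the same or a similar construct
unrelated_test = [3, 5, 1, 5, 3]  # measures a construct that should not relate

convergent_r = pearson_r(new_test, similar_test)
discriminant_r = pearson_r(new_test, unrelated_test)
print(round(convergent_r, 2), round(discriminant_r, 2))
```

Evidence for convergent validity would be a clearly positive `convergent_r`; evidence for discriminant validity would be a `discriminant_r` close to zero.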

Face validity and content validity are similar in that they both evaluate how suitable the content of a test is. The difference is that face validity is subjective, and assesses content at surface level.

When a test has strong face validity, anyone would agree that the test’s questions appear to measure what they are intended to measure.

For example, looking at a 4th grade math test consisting of problems in which students have to add and multiply, most people would agree that it has strong face validity (i.e., it looks like a math test).

On the other hand, content validity evaluates how well a test represents all the aspects of a topic. Assessing content validity is more systematic and relies on expert evaluation of each question, analysing whether each one covers the aspects that the test was designed to cover.

A 4th grade math test would have high content validity if it covered all the skills taught in that grade. Experts (in this case, math teachers) would have to evaluate the content validity by comparing the test to the learning objectives.

Content validity shows you how accurately a test or other measurement method taps into the various aspects of the specific construct you are researching.

In other words, it helps you answer the question: “does the test measure all aspects of the construct I want to measure?” If it does, then the test has high content validity.

The higher the content validity, the more accurate the measurement of the construct.

If the test fails to include parts of the construct, or irrelevant parts are included, the validity of the instrument is threatened, which brings your results into question.

Construct validity refers to how well a test measures the concept (or construct) it was designed to measure. Assessing construct validity is especially important when you’re researching concepts that can’t be quantified and/or are intangible, like introversion. To ensure construct validity, your test should be based on known indicators of introversion (operationalisation).

On the other hand, content validity assesses how well the test represents all aspects of the construct. If some aspects are missing or irrelevant parts are included, the test has low content validity.

Construct validity has convergent and discriminant subtypes. Together, they help determine whether a test measures the intended construct.

The reproducibility and replicability of a study can be ensured by writing a transparent, detailed method section and using clear, unambiguous language.

Reproducibility and replicability are related terms.

- A successful reproduction shows that the data analyses were conducted in a fair and honest manner.

- A successful replication shows that the reliability of the results is high.

- Reproducing research entails reanalysing the existing data in the same manner.

- Replicating (or repeating) the research entails reconducting the entire analysis, including the collection of new data.

Snowball sampling is a non-probability sampling method. Unlike probability sampling (which involves some form of random selection), the initial individuals selected to be studied are the ones who recruit new participants.

Because not every member of the target population has an equal chance of being recruited into the sample, selection in snowball sampling is non-random.

Snowball sampling is a non-probability sampling method, where there is not an equal chance for every member of the population to be included in the sample.

This means that you cannot use inferential statistics and make generalisations – often the goal of quantitative research. As such, a snowball sample is not representative of the target population, and is usually a better fit for qualitative research.

Snowball sampling relies on the use of referrals. Here, the researcher recruits one or more initial participants, who then recruit the next ones.

Participants share similar characteristics and/or know each other. Because of this, not every member of the population has an equal chance of being included in the sample, giving rise to sampling bias.

Snowball sampling is best used in the following cases:

- If there is no sampling frame available (e.g., people with a rare disease)

- If the population of interest is hard to access or locate (e.g., people experiencing homelessness)

- If the research focuses on a sensitive topic (e.g., extra-marital affairs)

Stratified sampling and quota sampling both involve dividing the population into subgroups and selecting units from each subgroup. The purpose in both cases is to select a representative sample and/or to allow comparisons between subgroups.

The main difference is that in stratified sampling, you draw a random sample from each subgroup (probability sampling). In quota sampling you select a predetermined number or proportion of units, in a non-random manner (non-probability sampling).
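The stratified half of that comparison can be sketched as below: the population is divided into subgroups, and a random sample is drawn within each one. The function name, the unit records, and the age-band labels are hypothetical.

```python
import random
from collections import defaultdict


def stratified_sample(units, stratum_of, per_stratum, seed=None):
    """Draw a simple random sample of fixed size from every subgroup."""
    rng = random.Random(seed)  # a seed makes the draw reproducible
    strata = defaultdict(list)
    for unit in units:
        strata[stratum_of(unit)].append(unit)
    # random.sample raises ValueError if a stratum has too few members.
    return {name: rng.sample(members, per_stratum) for name, members in strata.items()}


# Hypothetical population: (identifier, age band) pairs.
population = [(f"u{i}", "under 40" if i < 6 else "40 and over") for i in range(10)]

sample = stratified_sample(population, lambda unit: unit[1], per_stratum=2, seed=0)
for stratum, chosen in sample.items():
    print(stratum, chosen)
```

A quota sample would fill the same per-subgroup counts, but by taking whoever is available rather than by the random draw inside `rng.sample`, which is what makes it a non-probability method.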

Random sampling or probability sampling is based on random selection. This means that each unit has an equal chance (i.e., equal probability) of being included in the sample.

On the other hand, convenience sampling involves stopping people at random, which means that not everyone has an equal chance of being selected depending on the place, time, or day you are collecting your data.

Convenience sampling and quota sampling are both non-probability sampling methods. They both use non-random criteria like availability, geographical proximity, or expert knowledge to recruit study participants.

However, in convenience sampling, you continue to sample units or cases until you reach the required sample size.

In quota sampling, you first need to divide your population of interest into subgroups (strata) and estimate their proportions (quota) in the population. Then you can start your data collection, using convenience sampling to recruit participants, until the proportions in each subgroup coincide with the estimated proportions in the population.

A sampling frame is a list of every member in the entire population. It is important that the sampling frame is as complete as possible, so that your sample accurately reflects your population.

Stratified and cluster sampling may look similar, but bear in mind that groups created in cluster sampling are heterogeneous, so the individual characteristics in the cluster vary. In contrast, groups created in stratified sampling are homogeneous, as units share characteristics.

Relatedly, in cluster sampling you randomly select entire groups and include all units of each group in your sample. However, in stratified sampling, you select some units of all groups and include them in your sample. In this way, both methods can ensure that your sample is representative of the target population.

When your population is large in size, geographically dispersed, or difficult to contact, it’s necessary to use a sampling method.

This allows you to gather information from a smaller part of the population, i.e. the sample, and make accurate statements by using statistical analysis. A few sampling methods include simple random sampling, convenience sampling, and snowball sampling.

The two main types of social desirability bias are:

- Self-deceptive enhancement (self-deception): The tendency to see oneself in a favorable light without realizing it.

- Impression management (other-deception): The tendency to inflate one’s abilities or achievement in order to make a good impression on other people.

Response bias refers to conditions or factors that take place during the process of responding to surveys, affecting the responses. One type of response bias is social desirability bias.

Demand characteristics are aspects of experiments that may give away the research objective to participants. Social desirability bias occurs when participants automatically try to respond in ways that make them seem likeable in a study, even if it means misrepresenting how they truly feel.

Participants may use demand characteristics to infer social norms or experimenter expectancies and act in socially desirable ways, so you should try to control for demand characteristics wherever possible.

A systematic review is secondary research because it uses existing research. You don’t collect new data yourself.

Ethical considerations in research are a set of principles that guide your research designs and practices. These principles include voluntary participation, informed consent, anonymity, confidentiality, potential for harm, and results communication.

Scientists and researchers must always adhere to a certain code of conduct when collecting data from others.

These considerations protect the rights of research participants, enhance research validity, and maintain scientific integrity.

Research ethics matter for scientific integrity, human rights and dignity, and collaboration between science and society. These principles make sure that participation in studies is voluntary, informed, and safe.

Research misconduct means making up or falsifying data, manipulating data analyses, or misrepresenting results in research reports. It’s a form of academic fraud.

These actions are committed intentionally and can have serious consequences; research misconduct is not a simple mistake or a point of disagreement but a serious ethical failure.

Anonymity means you don’t know who the participants are, while confidentiality means you know who they are but remove identifying information from your research report. Both are important ethical considerations.

You can only guarantee anonymity by not collecting any personally identifying information – for example, names, phone numbers, email addresses, IP addresses, physical characteristics, photos, or videos.

You can keep data confidential by using aggregate information in your research report, so that you only refer to groups of participants rather than individuals.
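
As a rough sketch of what aggregate reporting can look like, the Python below summarises scores per group and leaves names out of the output. The record fields, the example values, and the `min_group_size` threshold are all hypothetical assumptions made for illustration. Hiding very small groups is one possible safeguard, not a rule stated above.

```python
from collections import defaultdict
from statistics import mean


def aggregate_by_group(records, group_key, value_key, min_group_size=3):
    """Summarise values per group so that no individual appears in the report."""
    grouped = defaultdict(list)
    for record in records:
        grouped[record[group_key]].append(record[value_key])

    report = {}
    for group, values in grouped.items():
        # Assumption: groups below the threshold are left out, because a tiny
        # group can make individual participants easy to identify.
        if len(values) >= min_group_size:
            report[group] = {"n": len(values), "mean": mean(values)}
    return report


# Hypothetical records; the names never reach the report.
records = [
    {"name": "P1", "group": "control", "score": 4},
    {"name": "P2", "group": "control", "score": 5},
    {"name": "P3", "group": "control", "score": 6},
    {"name": "P4", "group": "treatment", "score": 7},
    {"name": "P5", "group": "treatment", "score": 9},
]

print(aggregate_by_group(records, "group", "score"))
```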

Peer review is a process of evaluating submissions to an academic journal. Utilising rigorous criteria, a panel of reviewers in the same subject area decide whether to accept each submission for publication.

For this reason, academic journals are often considered among the most credible sources you can use in a research project – provided that the journal itself is trustworthy and well regarded.

In general, the peer review process involves the following steps:

- First, the author submits the manuscript to the editor.

- Next, the editor either rejects the manuscript and sends it back to the author, or sends it onward to the selected peer reviewer(s).

- Next, the peer review process occurs. The reviewer provides feedback, addressing any major or minor issues with the manuscript, and gives their advice regarding what edits should be made.

- Lastly, the edited manuscript is sent back to the author. They input the edits, and resubmit it to the editor for publication.

Peer review can stop obviously problematic, falsified, or otherwise untrustworthy research from being published. It also represents an excellent opportunity to get feedback from renowned experts in your field.

It acts as a first defence, helping you ensure your argument is clear and that there are no gaps, vague terms, or unanswered questions for readers who weren’t involved in the research process.

Peer-reviewed articles are considered a highly credible source due to this stringent process they go through before publication.

Many academic fields use peer review, largely to determine whether a manuscript is suitable for publication. Peer review enhances the credibility of the published manuscript.

However, peer review is also common in non-academic settings. The United Nations, the European Union, and many individual nations use peer review to evaluate grant applications. It is also widely used in medical and health-related fields as a teaching or quality-of-care measure.

Peer assessment is often used in the classroom as a pedagogical tool. Both receiving feedback and providing it are thought to enhance the learning process, helping students think critically and collaboratively.

- In a single-blind study, only the participants are blinded.

- In a double-blind study, both participants and experimenters are blinded.

- In a triple-blind study, the assignment is hidden not only from participants and experimenters, but also from the researchers analysing the data.

Blinding is important to reduce bias (e.g., observer bias, demand characteristics) and ensure a study’s internal validity.

If participants know whether they are in a control or treatment group, they may adjust their behaviour in ways that affect the outcome that researchers are trying to measure. If the people administering the treatment are aware of group assignment, they may treat participants differently and thus directly or indirectly influence the final results.

Blinding means hiding who is assigned to the treatment group and who is assigned to the control group in an experiment.
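
One way to picture blinding in practice is to give the groups neutral labels and store the meaning of those labels separately. The Python sketch below assumes exactly that. The function name, the labels `A` and `B`, and the idea of a separately held unblinding key are illustrative choices, not a prescribed procedure.

```python
import random


def blinded_assignment(participant_ids, seed=None):
    """Assign participants to neutral labels and return the key separately."""
    rng = random.Random(seed)
    ids = list(participant_ids)
    rng.shuffle(ids)

    # Participants and experimenters only ever see the neutral labels.
    labels = ["A", "B"]
    blinded = {pid: labels[i % 2] for i, pid in enumerate(ids)}

    # Which label means "treatment" is decided at random and would be held
    # by someone who is not involved in running the study.
    conditions = ["treatment", "control"]
    rng.shuffle(conditions)
    unblinding_key = dict(zip(labels, conditions))
    return blinded, unblinding_key


blinded, unblinding_key = blinded_assignment(range(1, 11), seed=3)
print(blinded)  # neutral labels only; the key is not shared with the study team
```

For a triple-blind study, the analysts would also work with the `A` and `B` labels and only consult the key once the analysis is complete.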

Explanatory research is a research method used to investigate how or why something occurs when only a small amount of information is available pertaining to that topic. It can help you increase your understanding of a given topic.

Explanatory research is used to investigate how or why a phenomenon occurs. Therefore, this type of research is often one of the first stages in the research process, serving as a jumping-off point for future research.

Exploratory research is a methodological approach that explores research questions that have not previously been studied in depth. It is often used when the issue you’re studying is new, or the data collection process is challenging in some way.

Exploratory research is often used when the issue you’re studying is new or when the data collection process is challenging for some reason.

You can use exploratory research if you have a general idea or a specific question that you want to study but there is no preexisting knowledge or paradigm with which to study it.

To implement random assignment, assign a unique number to every member of your study’s sample.

Then, you can use a random number generator or a lottery method to randomly assign each number to a control or experimental group. You can also do so manually, by flipping a coin or rolling a die to randomly assign participants to groups.
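
The numbering-and-random-generator approach can be sketched in Python as follows. This is a minimal example under stated assumptions: the function name, the group names, and the sample of 20 participants are hypothetical.

```python
import random


def randomly_assign(participant_ids, groups=("control", "experimental"), seed=None):
    """Shuffle the participant numbers, then deal them out to the groups in turn."""
    rng = random.Random(seed)
    shuffled = list(participant_ids)
    rng.shuffle(shuffled)

    assignment = {}
    for position, pid in enumerate(shuffled):
        assignment[pid] = groups[position % len(groups)]
    return assignment


# Hypothetical sample: participants numbered 1 to 20.
assignment = randomly_assign(range(1, 21), seed=7)
for pid in sorted(assignment):
    print(pid, assignment[pid])
```

Shuffling and then dealing keeps the group sizes equal. Flipping a coin for each participant is also random, but it can leave the groups unequal in size.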

Random selection, or random sampling, is a way of selecting members of a population for your study’s sample.

In contrast, random assignment is a way of sorting the sample into control and experimental groups.

Random sampling enhances the external validity or generalisability of your results, while random assignment improves the internal validity of your study.

Random assignment is used in experiments with a between-groups or independent measures design. In this research design, there’s usually a control group and one or more experimental groups. Random assignment helps ensure that the groups are comparable.

In general, you should always use random assignment in this type of experimental design when it is ethically possible and makes sense for your study topic.

Clean data are valid, accurate, complete, consistent, unique, and uniform. Dirty data include inconsistencies and errors.

Dirty data can come from any part of the research process, including poor research design, inappropriate measurement materials, or flawed data entry.

Data cleaning takes place between data collection and data analyses. But you can use some methods even before collecting data.

For clean data, you should start by designing measures that collect valid data. Data validation at the time of data entry or collection helps you minimise the amount of data cleaning you’ll need to do.

After data collection, you can use data standardisation and data transformation to clean your data. You’ll also deal with any missing values, outliers, and duplicate values.
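
A small Python sketch of these cleaning steps is shown below, applied to recorded weights. The raw rows, the field names, and the plausible range of 30 to 300 kg are hypothetical assumptions chosen only to illustrate standardisation, duplicates, missing values, and outliers.

```python
def clean_weights(raw_rows, plausible_range=(30.0, 300.0)):
    """Standardise weight entries, drop duplicates, and flag missing or implausible values."""
    low, high = plausible_range
    seen_ids = set()
    cleaned = []
    for pid, raw in raw_rows:
        if pid in seen_ids:
            continue  # drop duplicate entries for the same participant
        seen_ids.add(pid)

        # Standardise: trim whitespace and strip a trailing unit such as "kg".
        text = str(raw).strip().lower().replace("kg", "").strip()
        try:
            weight = float(text)
        except ValueError:
            weight = None  # missing or unreadable value

        # Assumption: values outside the plausible range are flagged, not deleted.
        is_outlier = weight is not None and not (low <= weight <= high)
        cleaned.append({"id": pid, "weight_kg": weight, "outlier": is_outlier})
    return cleaned


# Hypothetical raw entries as (participant number, recorded weight).
raw_rows = [(1, "70.5"), (2, " 82 kg"), (2, "82"), (3, ""), (4, "N/A"), (5, "7000")]
for row in clean_weights(raw_rows):
    print(row)
```

Flagging rather than deleting leaves the decision about each missing value or outlier to you.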

Data cleaning involves spotting and resolving potential data inconsistencies or errors to improve your data quality. An error is any value (e.g., recorded weight) that doesn’t reflect the true value (e.g., actual weight) of something that’s being measured.

In this process, you review, analyse, detect, modify, or remove ‘dirty’ data to make your dataset ‘clean’. Data cleaning is also called data cleansing or data scrubbing.

Data cleaning is necessary for valid and appropriate analyses. Dirty data contain inconsistencies or errors, but cleaning your data helps you minimise or resolve these.

Without data cleaning, you could end up with a Type I or II error in your conclusion. These types of erroneous conclusions can be practically significant with important consequences, because they lead to misplaced investments or missed opportunities.

Observer bias occurs when a researcher’s expectations, opinions, or prejudices influence what they perceive or record in a study. It usually affects studies when observers are aware of the research aims or hypotheses. This type of research bias is also called detection bias or ascertainment bias.

The observer-expectancy effect occurs when researchers influence the results of their own study through interactions with participants.

Researchers’ own beliefs and expectations about the study results may unintentionally influence participants through demand characteristics.

You can use several tactics to minimise observer bias.

- Use masking (blinding) to hide the purpose of your study from all observers.

- Triangulate your data with different data collection methods or sources.

- Use multiple observers and ensure inter-rater reliability.

- Train your observers to make sure data is consistently recorded between them.

- Standardise your observation procedures to make sure they are structured and clear.
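
To check inter-rater reliability between two observers, one commonly used statistic is Cohen’s kappa, which compares observed agreement with the agreement expected by chance. The text above does not name a specific statistic, so this choice is an assumption, and the ratings in the example are hypothetical.

```python
from collections import Counter


def cohens_kappa(ratings_a, ratings_b):
    """Cohen's kappa for two observers rating the same items with categorical codes."""
    if len(ratings_a) != len(ratings_b) or not ratings_a:
        raise ValueError("Both observers must rate the same non-empty set of items.")

    n = len(ratings_a)
    # Proportion of items on which the two observers agree.
    observed = sum(a == b for a, b in zip(ratings_a, ratings_b)) / n

    # Agreement expected by chance, from each observer's category frequencies.
    counts_a = Counter(ratings_a)
    counts_b = Counter(ratings_b)
    expected = sum(counts_a[c] * counts_b[c] for c in counts_a) / (n * n)

    if expected == 1:
        return 1.0  # both observers used one identical category throughout
    return (observed - expected) / (1 - expected)


# Hypothetical codes from two observers for the same four observations.
observer_1 = ["on-task", "on-task", "off-task", "off-task"]
observer_2 = ["on-task", "off-task", "off-task", "off-task"]
print(cohens_kappa(observer_1, observer_2))
```

A value of 1 indicates perfect agreement, while a value near 0 indicates agreement no better than chance.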

Naturalistic observation is a valuable tool because of its flexibility, external validity, and suitability for topics that can’t be studied in a lab setting.

The downsides of naturalistic observation include its lack of scientific control, ethical considerations, and potential for bias from observers and subjects.

Naturalistic observation is a qualitative research method where you record the behaviours of your research subjects in real-world settings. You avoid interfering or influencing anything in a naturalistic observation.

You can think of naturalistic observation as ‘people watching’ with a purpose.

Closed-ended, or restricted-choice, questions offer respondents a fixed set of choices to select from. These questions are easier to answer quickly.

Open-ended or long-form questions allow respondents to answer in their own words. Because there are no restrictions on their choices, respondents can answer in ways that researchers may not have otherwise considered.

You can organise the questions logically, with a clear progression from simple to complex, or randomly between respondents. A logical flow helps respondents process the questionnaire more easily and quickly, but it may lead to bias. Randomisation can minimise the bias from order effects.
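
Randomising question order between respondents can be sketched in Python as below. The question texts, the function name, and the use of the respondent number as a seed are hypothetical choices made for illustration.

```python
import random


def question_order(questions, respondent_id, randomise=True):
    """Return the questions in logical order, or shuffled separately for each respondent."""
    if not randomise:
        return list(questions)

    # Seeding with the respondent number keeps each respondent's order
    # reproducible, which helps if you later need to check for order effects.
    rng = random.Random(respondent_id)
    order = list(questions)
    rng.shuffle(order)
    return order


# Hypothetical questionnaire, listed from simple to complex.
questions = [
    "How old are you?",
    "How often do you exercise?",
    "What motivates you to exercise?",
    "How has your routine changed over the past year?",
]

print(question_order(questions, respondent_id=1))
print(question_order(questions, respondent_id=2))
```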

Questionnaires can be self-administered or researcher-administered.

Self-administered questionnaires can be delivered online or in paper-and-pen formats, in person or by post. All questions are standardised so that all respondents receive the same questions with identical wording.

Researcher-administered questionnaires are interviews that take place by phone, in person, or online between researchers and respondents. You can gain deeper insights by clarifying questions for respondents or asking follow-up questions.

In a controlled experiment, all extraneous variables are held constant so that they can’t influence the results. Controlled experiments require:

- A control group that receives a standard treatment, a fake treatment, or no treatment

- Random assignment of participants to ensure the groups are equivalent

Depending on your study topic, there are various other methods of controlling variables.

A true experiment (aka a controlled experiment) always includes at least one control group that doesn’t receive the experimental treatment.

However, some experiments use a within-subjects design to test treatments without a control group. In these designs, you usually compare one group’s outcomes before and after a treatment (instead of comparing outcomes between different groups).

For strong internal validity, it’s usually best to include a control group if possible. Without a control group, it’s harder to be certain that the outcome was caused by the experimental treatment and not by other variables.

A questionnaire is a data collection tool or instrument, while a survey is an overarching research method that involves collecting and analysing data from people using questionnaires.